AI researcher Omar Saro has pointed to a fundamental architectural limitation in current Large Language Model (LLM) agent frameworks. In a concise social media post, Saro notes that "Every agent framework assumes one user giving instructions." This single-user assumption becomes a critical barrier when deploying an AI agent into a real-world team environment, where collaboration, role-based access, and concurrent tasking are the norm.

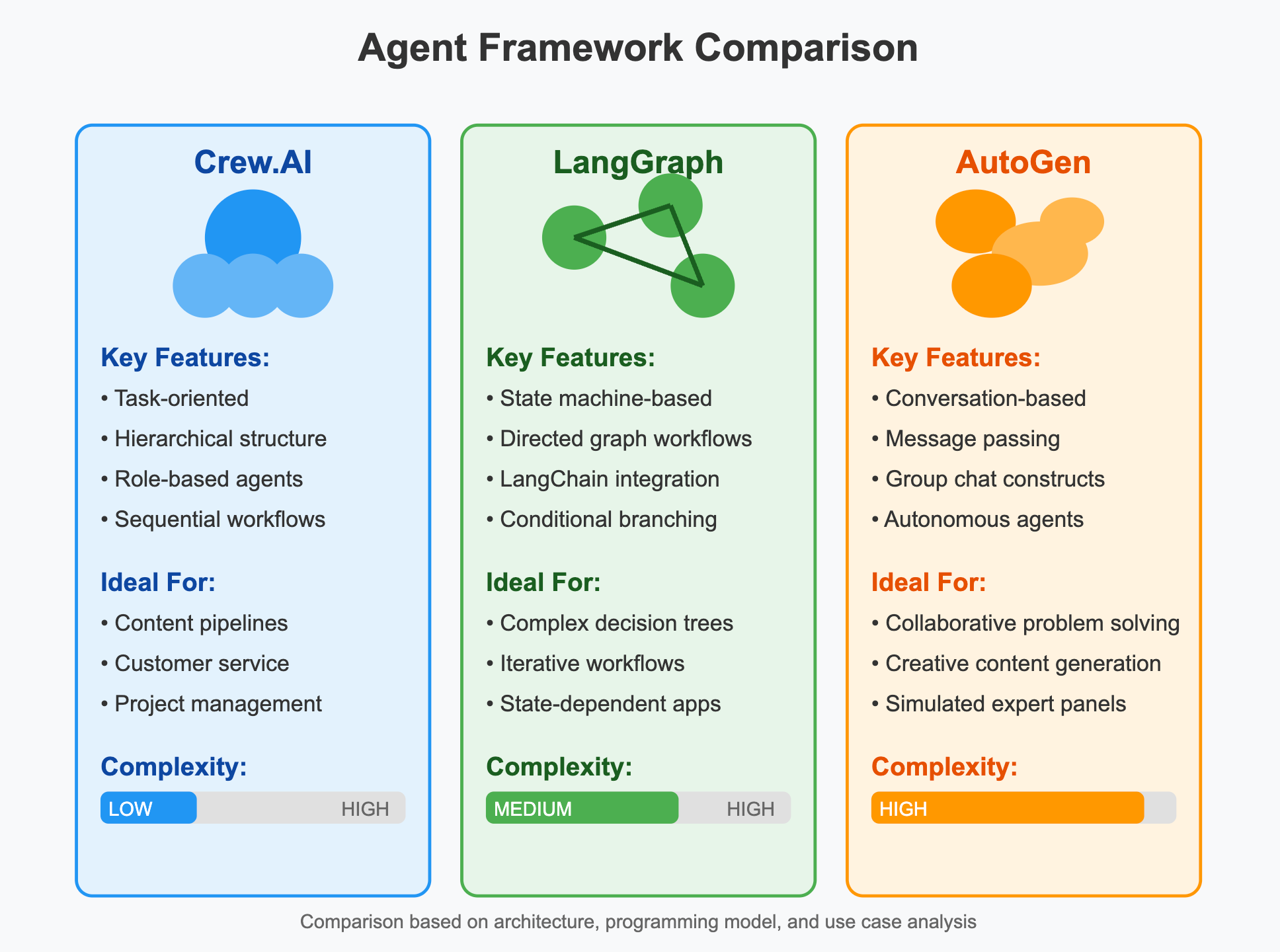

This observation cuts to the core of a significant mismatch between AI research prototypes and production-ready enterprise systems. While frameworks like LangChain, LlamaIndex, AutoGen, and CrewAI have rapidly advanced the capabilities of individual AI agents—enabling tool use, memory, and complex reasoning—their underlying paradigms remain anchored to a solitary user issuing commands to a solitary AI assistant.

Key Takeaways

- AI researcher Omar Saro points out that all current LLM agent frameworks are designed for single-user instruction, creating a deployment barrier for team-based workflows.

- The single-user assumption is a major unsolved problem in making AI agents practically useful inside organizations.

What's Missing: The Collaborative Layer

Saro's point implicitly defines the missing layer: a collaborative orchestration plane. In a team setting, an effective AI agent would need to:

- Manage conversation state and context across multiple human participants.

- Understand and enforce role-based permissions (e.g., a junior engineer vs. a product manager may have different authority to approve an agent's proposed action).

- Resolve conflicting instructions from different team members.

- Maintain a shared, persistent memory of team decisions and agent actions that is accessible and editable by authorized users.

- Handle concurrent, potentially interdependent tasks spawned by different users.
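Two of the requirements above, role-based permissions and conflict resolution, can be sketched in a few lines. This is a minimal illustration and not part of any existing framework: the `Role` ranking, the `Instruction` record, and the "highest role wins, then most recent" policy are all assumptions chosen for the example, and a real team would likely need a richer policy.

```python
from dataclasses import dataclass
from enum import IntEnum


class Role(IntEnum):
    # Hypothetical roles; a higher value means more approval authority.
    JUNIOR_ENGINEER = 1
    SENIOR_ENGINEER = 2
    PRODUCT_MANAGER = 3


@dataclass(frozen=True)
class Instruction:
    author: str
    role: Role
    text: str
    timestamp: float  # seconds since a shared epoch


def can_approve(role: Role, required: Role) -> bool:
    """Return True if `role` carries at least the required authority."""
    return role >= required


def resolve_conflict(instructions: list[Instruction]) -> Instruction:
    """Pick one instruction: the highest role wins, ties go to the most recent.

    This policy is an assumption for illustration only.
    """
    if not instructions:
        raise ValueError("no instructions to resolve")
    return max(instructions, key=lambda i: (i.role, i.timestamp))
```

With this policy, a product manager's "hold the deploy" would override a junior engineer's later "deploy now". Whether seniority should always beat recency is exactly the kind of decision a multi-user framework would have to make configurable.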

Current frameworks treat the "user" as a monolithic entity. Deploying them for a team often requires brittle workarounds, such as creating a single service account that aggregates team requests, which immediately breaks natural interaction patterns and accountability.

The Technical Hurdle

The challenge is not merely a UI or front-end issue; it is a deep architectural one. The core reasoning loop of an LLM agent—Perceive, Plan, Act—is currently fueled by a single prompt context window representing one user's intent and the agent's history. Scaling this to N users requires a fundamental re-thinking of how intent is pooled, how context is segmented and shared, and how action authority is validated.
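One way to picture "how context is segmented and shared" is a store that keeps a shared team log alongside a private thread per user, and assembles a prompt from both. The sketch below is a hypothetical design, not an API from any existing toolkit; `TeamContext`, its method names, and the prompt layout are assumptions made for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class TeamContext:
    """Hypothetical store: one shared log plus a private thread per user."""

    shared: list[str] = field(default_factory=list)
    private: dict[str, list[str]] = field(
        default_factory=lambda: defaultdict(list)
    )

    def add_shared(self, author: str, text: str) -> None:
        # Shared entries keep the author so attribution is not lost.
        self.shared.append(f"[{author}] {text}")

    def add_private(self, user: str, text: str) -> None:
        self.private[user].append(text)

    def build_prompt(self, user: str, max_items: int = 5) -> str:
        """Assemble context for one user: shared state plus their own thread."""
        shared = self.shared[-max_items:]
        own = self.private.get(user, [])[-max_items:]
        lines = [
            "## Shared team context",
            *shared,
            f"## Private thread for {user}",
            *own,
        ]
        return "\n".join(lines)
```

The prompt built for one user includes team-wide entries but never another user's private thread, which is the segmentation property the paragraph above describes. Truncating to the last `max_items` entries is a placeholder for whatever summarization or retrieval a real system would use.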

Potential solutions might involve meta-prompts that summarize team state, multi-threaded agent instances with a synchronization layer, or novel LLM architectures trained explicitly on multi-party dialogues and decision-making processes. None of these are standard features in today's popular agent toolkits.
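The first of these ideas, a meta-prompt that summarizes team state, might look roughly like the following. This is a sketch under stated assumptions: the `Decision` record, its `status` values, and the rendered text format are invented for the example and do not come from any framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    author: str
    role: str
    summary: str
    status: str  # illustrative values: "approved", "pending", "rejected"


def build_meta_prompt(decisions: list[Decision], requester: str) -> str:
    """Render team state as a text preamble for the agent's prompt."""
    lines = ["Team state (most recent last):"]
    for d in decisions:
        lines.append(f"- {d.author} ({d.role}) {d.status}: {d.summary}")
    pending = [d for d in decisions if d.status == "pending"]
    lines.append(f"Pending items: {len(pending)}")
    lines.append(f"Current requester: {requester}")
    return "\n".join(lines)
```

The resulting string would be prepended to the agent's prompt so that a single context window carries who decided what. It does not by itself solve authority or conflict handling; it only makes the team state visible to the model.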

Why This Matters for Adoption

This gap is a major technical hurdle keeping AI agents from moving beyond fascinating demos and single-user productivity tools to become integral components of company workflows. Software development, product management, incident response, and strategic planning are inherently multi-stakeholder activities. An agent that cannot navigate this social and organizational complexity will remain sidelined.

Saro's brief critique serves as a direct challenge to the next wave of agent framework development. The race is no longer just about which framework has the most tools or the cleverest planning algorithm; it's about which one can first crack the code of multi-user, multi-agent, team-scale collaboration.

gentic.news Analysis

Omar Saro's pinpoint critique aligns with a growing theme in our coverage of the agent ecosystem. We recently analyzed the launch of CrewAI's 0.28 update, which focused on enhancing collaboration between multiple AI agents (e.g., a researcher, a writer, a reviewer). Saro's observation logically extends this: if AI agents need to work together, they ultimately need to serve human teams that are also collaborating. The next evolutionary step is a framework that seamlessly blends human and AI agents into a single, permissioned workflow.

This also connects to the enterprise-focused developments we've tracked from companies like Sema4.ai and Fixie.ai, which emphasize secure, governed AI workflows. Their approaches often involve piping agent outputs into enterprise chat platforms like Slack or Microsoft Teams—a practical patch for the multi-user problem. However, as Saro implies, this is a workaround, not a native solution. The underlying agent engine still sees one "user" (the Slack bot), losing the nuance of individual human identities and roles.

Furthermore, this highlights a divergence in research paths. While much academic and open-source effort goes into improving single-agent reasoning (e.g., with Tree of Thoughts or Algorithm of Thoughts), the industry's pressing need is for orchestration and collaboration layers. Saro, an AI researcher and writer, is drawing attention to this specific, pragmatic pain point. His commentary suggests that the next breakthrough in agent usefulness may come from systems engineering and human-computer interaction research as much as from core AI advances.

Frequently Asked Questions

What is an LLM agent framework?

An LLM agent framework is a software toolkit that enables a large language model (like GPT-4 or Claude) to perform multi-step tasks autonomously. It typically provides components for memory (storing past interactions), tool use (calling APIs, running code), and planning (breaking down a goal into steps). Examples include LangChain, AutoGen, and CrewAI.

Why don't current agent frameworks work for teams?

They are architecturally designed around a single conversation thread between one AI and one user. They lack built-in mechanisms for handling multiple human identities, managing conflicting instructions from different people, enforcing access controls, or maintaining a shared memory that is editable and accessible by a group. Deploying them in a team requires complex, custom integration that often breaks their standard interaction model.

What would a multi-user agent framework need?

It would require features like user authentication and role management, a context management system that can merge and segment dialogues per user, conflict resolution protocols, and an audit trail that attributes agent actions to specific human requests. The core agent would need to understand concepts like "the team decided" or "Sarah approved this, but John requested a change."
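The audit-trail requirement can be illustrated with a small append-only log that ties each agent action to the person who requested it and, where relevant, the person who approved it. This is a minimal sketch: `AuditEntry`, `AuditTrail`, and their fields are hypothetical names, and a production system would need durable, tamper-resistant storage rather than an in-memory list.

```python
import json
import time
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass(frozen=True)
class AuditEntry:
    action: str
    requested_by: str
    approved_by: Optional[str]
    timestamp: float


class AuditTrail:
    """Hypothetical in-memory, append-only log of agent actions."""

    def __init__(self) -> None:
        self._entries: list[AuditEntry] = []

    def record(
        self, action: str, requested_by: str, approved_by: Optional[str] = None
    ) -> AuditEntry:
        entry = AuditEntry(action, requested_by, approved_by, time.time())
        self._entries.append(entry)
        return entry

    def by_user(self, user: str) -> list[AuditEntry]:
        """Return entries the user either requested or approved."""
        return [
            e for e in self._entries
            if user in (e.requested_by, e.approved_by)
        ]

    def to_json(self) -> str:
        return json.dumps([asdict(e) for e in self._entries], indent=2)
```

Recording `requested_by` and `approved_by` as separate fields is what lets the log express statements such as "Sarah approved this, but John requested a change", which a single service-account identity cannot represent.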

Are any companies working on this?

While no major framework has announced native multi-user support as a core feature, enterprise AI platforms like Sema4.ai and Fixie.ai build governance and collaboration features on top of agent systems. Their solutions often involve integrating agents into existing team chat platforms, which handles the multi-user interface but not necessarily the underlying multi-user reasoning problem identified by Saro.