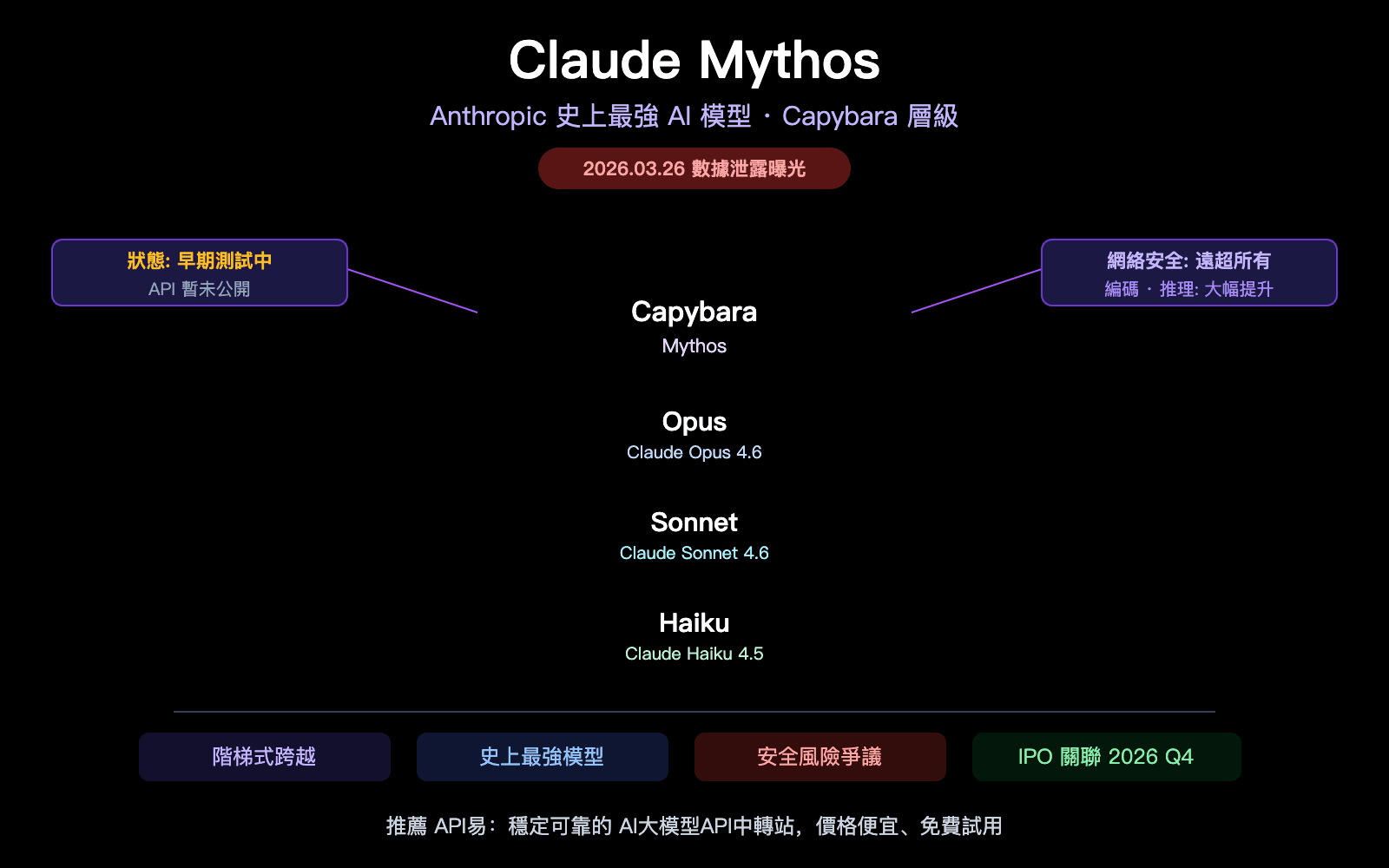

A viral tweet from the account @intheworldofai has drawn significant attention to an open-source large language model (LLM) project named Open-Sonar. The tweet, which read simply "You’re telling me there’s a locally deployable Opus 4.6? 👀", linked to the project's page and sparked widespread discussion about whether a high-performance, locally runnable model could compete with top-tier closed-source offerings such as Anthropic's Claude 3.5 Sonnet.

What Happened

The source is a single tweet that acts as a signal boost for the Open-Sonar project. The project, hosted on Hugging Face, makes a bold claim: its 7-billion-parameter model, Open-Sonar-7B, is designed to achieve performance comparable to Anthropic's Claude 3.5 Sonnet, a model widely assumed to be far larger (Anthropic does not publish parameter counts). The core appeal highlighted by the tweet is the promise of local deployability: running a model of this purported capability on consumer or enterprise hardware without relying on API calls to a centralized service.

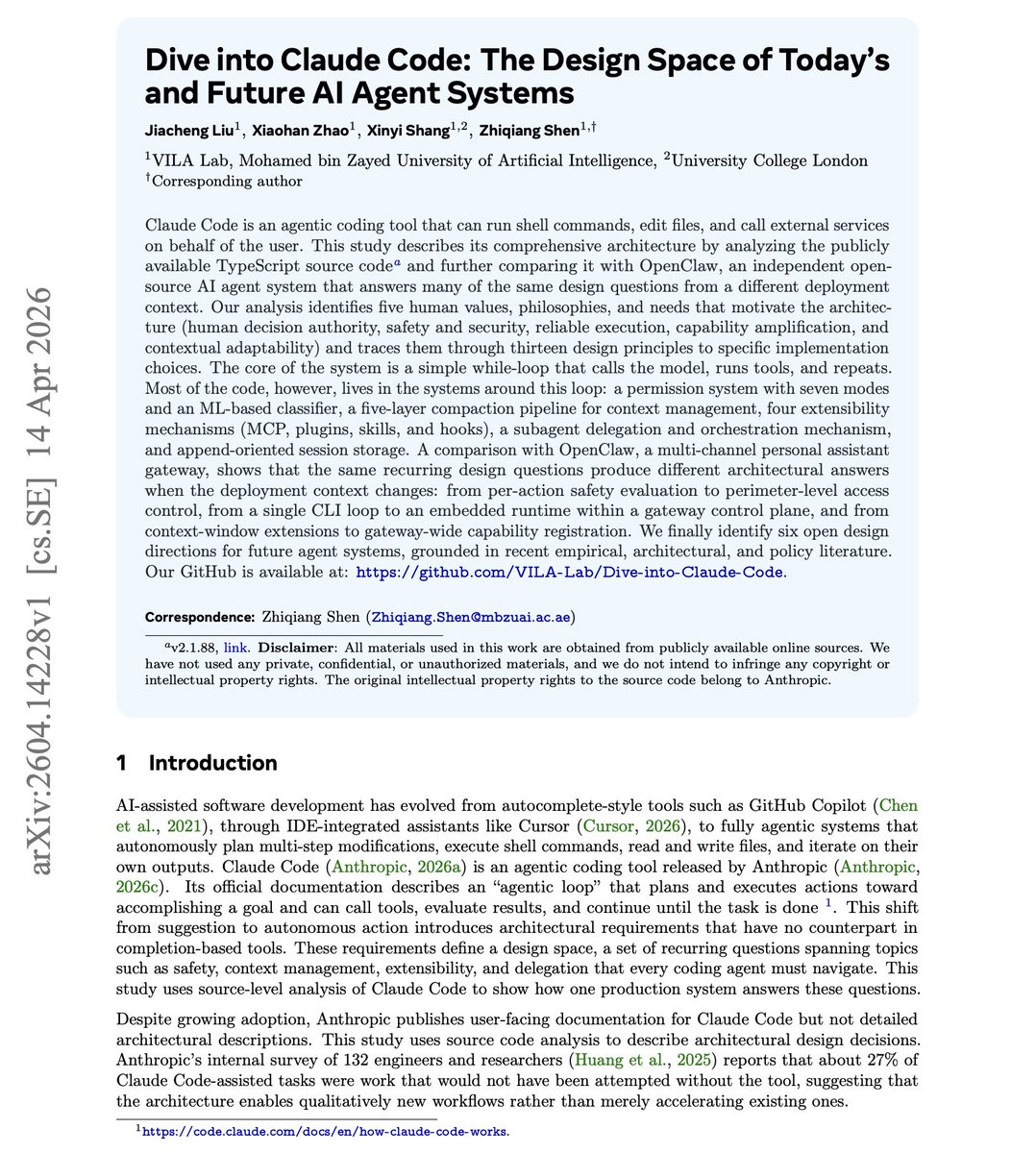

The project documentation states that Open-Sonar-7B is a Mixture-of-Experts (MoE) model. According to the project, it was trained with what it calls a novel methodology combining Direct Preference Optimization (DPO) and Group Relative Policy Optimization (GRPO) on a dataset named Sonar-Data, which the project describes as high-quality. The stated goal is to bridge the performance gap between open-source and frontier closed-source models.
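For readers unfamiliar with the two training techniques named above, here is a minimal sketch of their textbook objectives as described in the original papers (DPO from Rafailov et al., 2023; GRPO from the DeepSeekMath paper, 2024). It is not Open-Sonar's training code, which is not shown in the material cited here. DPO scores a preference pair by how much more the policy favors the chosen response than a frozen reference model does; GRPO samples a group of responses per prompt and normalizes each reward against the group's mean and standard deviation, which removes the need for a learned value model. The function names, the `beta` default, and the epsilon are illustrative assumptions.

```python
import math
from statistics import mean, pstdev


def softplus(x: float) -> float:
    """Numerically stable log(1 + exp(x))."""
    if x > 0:
        return x + math.log1p(math.exp(-x))
    return math.log1p(math.exp(x))


def dpo_loss(policy_chosen_logp: float, policy_rejected_logp: float,
             ref_chosen_logp: float, ref_rejected_logp: float,
             beta: float = 0.1) -> float:
    """DPO loss for a single preference pair.

    Inputs are summed log-probabilities of each full response under the
    trainable policy and under the frozen reference model.
    """
    # Implicit reward margin: how much more the policy (relative to the
    # reference model) prefers the chosen response over the rejected one.
    margin = beta * ((policy_chosen_logp - ref_chosen_logp)
                     - (policy_rejected_logp - ref_rejected_logp))
    # -log(sigmoid(margin)) is exactly softplus(-margin)
    return softplus(-margin)


def grpo_advantages(rewards: list[float], eps: float = 1e-6) -> list[float]:
    """Group-relative advantages for G responses sampled from one prompt."""
    mu = mean(rewards)
    # Population std here; some implementations use the sample std instead.
    sigma = pstdev(rewards)
    # Each response is scored against its own group; eps avoids division by
    # zero when every response in the group received the same reward.
    return [(r - mu) / (sigma + eps) for r in rewards]


if __name__ == "__main__":
    # Hypothetical log-probs: the policy already favors the chosen response
    print(dpo_loss(-12.0, -15.0, -13.0, -14.0))
    # Hypothetical pass/fail rewards for four sampled responses
    print(grpo_advantages([1.0, 0.0, 0.0, 1.0]))
```

A zero margin gives a DPO loss of log 2 (about 0.693), and the loss shrinks as the policy's preference for the chosen response grows relative to the reference. In the GRPO sketch, above-average responses in a group get positive advantages and below-average ones get negative advantages.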

Context

This development taps into two major, ongoing trends in the AI ecosystem:

- The Open vs. Closed Source Tension: There is intense competition, and strong community demand, for open-source models that can match proprietary models from companies like OpenAI (GPT-4), Anthropic (Claude 3.5), and Google (Gemini). Successes like Meta's Llama series have shown that open models can come close to proprietary ones, but matching the very top tier remains a challenge.

- The Push for Local Deployment: Concerns over cost, latency, data privacy, and vendor lock-in are driving strong demand for models that can run on-premises or on local machines. A model that actually delivered "Claude 3.5-level" performance while fitting on a single GPU would be a monumental shift.

It is critical to note that, as of this reporting, the Open-Sonar project's performance claims have not been independently verified on standard, comprehensive benchmarks (e.g., MMLU, GPQA, HumanEval). The project page provides examples and internal evaluations, but the community typically waits for results on established, leaderboard-tracked benchmarks before accepting such comparisons.

gentic.news Analysis

This tweet and the project it highlights are a direct manifestation of the accelerating open-source arms race we've been tracking. Following our coverage of Meta's release of Llama 3.1 and the subsequent flurry of fine-tuned variants, the community's target has clearly shifted from matching GPT-3.5 to directly challenging the current frontier—Claude 3.5 Sonnet and GPT-4o.

The "Opus 4.6" in the tweet refers to Anthropic's Claude Opus line, the company's largest model tier, positioned above Sonnet; the tweet's hook is the idea of running an Opus-class model on local hardware. This connects multiple competitive threads: not just open vs. closed, but also the specific pressure the open-source community is putting on Anthropic's flagship models. If Open-Sonar's claims hold any water, it would represent a significant pressure point on the business models of all major API providers, potentially accelerating price cuts or the release of more capable small models.

However, extreme caution is warranted. The landscape is littered with projects whose bold claims do not survive rigorous, independent evaluation. The true test for Open-Sonar will be its performance on the SWE-bench coding benchmark and on complex reasoning tasks, and its behavior across a wide range of prompts rather than curated examples. The use of MoE and advanced preference optimization techniques is credible, but the proof is in the benchmarking.

Frequently Asked Questions

What is Open-Sonar?

Open-Sonar is an open-source large language model project that has released a 7-billion-parameter model called Open-Sonar-7B. Its creators claim it was trained with advanced preference optimization techniques on a high-quality dataset and achieves performance rivaling Anthropic's Claude 3.5 Sonnet, a frontier model presumed to be much larger.

Can I really run a model as good as Claude 3.5 on my local computer?

The project claims that Open-Sonar-7B is designed for local deployment and matches Claude 3.5 Sonnet's performance. A 7B-parameter model is feasible to run on a single modern consumer GPU such as an RTX 4090 (24 GB of VRAM), but the performance claim has not been independently verified. You can likely run the model locally; whether its capability truly matches Claude 3.5 across a broad range of tasks remains to be proven through standardized benchmarks.
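Whether a model fits on a given GPU comes down mostly to arithmetic on weight storage. The rough sketch below assumes the "7B" figure is the total parameter count (for an MoE model, all experts typically have to be resident in memory even though only some run per token) and deliberately ignores the KV cache, activations, and runtime overhead, all of which add to the real footprint. The helper name is illustrative.

```python
GIB = 2 ** 30  # bytes per GiB


def weight_memory_gib(n_params: float, bits_per_param: float) -> float:
    """Memory needed just to store the model weights, in GiB.

    Excludes KV cache, activations, and framework overhead.
    """
    return n_params * bits_per_param / 8 / GIB


if __name__ == "__main__":
    n_params = 7e9  # assumption: "7B" is the total (not active) parameter count
    for label, bits in [("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
        print(f"{label}: {weight_memory_gib(n_params, bits):.1f} GiB")
```

This works out to roughly 13 GiB at 16-bit precision, 6.5 GiB at 8-bit, and 3.3 GiB at 4-bit, so the weights alone fit on a 24 GB card at any of these precisions; context length and batch size then determine how much headroom remains.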

How does Open-Sonar compare to other open-source models like Llama 3.1?

Based on its stated goals, Open-Sonar is aiming for a higher performance tier than the base Llama 3.1 8B model. It is positioning itself not just as another fine-tune, but as a model that uses a specialized MoE architecture and novel training to close the gap with the best closed-source models. A direct, benchmarked comparison does not yet exist publicly.
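The "MoE architecture" mentioned above refers to a layer in which a small router picks a few expert sub-networks for each token. The toy sketch below uses Mixtral-style top-k gating (a softmax over only the k highest router scores) with scalar "experts" for readability. Open-Sonar's actual expert count, value of k, and router design are not given in the material cited here, so every value is a hypothetical placeholder, and the function names are illustrative.

```python
import math
from typing import Callable, Sequence


def softmax(xs: Sequence[float]) -> list[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_route(router_logits: Sequence[float], k: int = 2) -> list[tuple[int, float]]:
    """Return (expert_index, gate_weight) for the k highest-scoring experts."""
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i], reverse=True)[:k]
    # Softmax over only the selected experts' scores, so the k weights sum to 1
    weights = softmax([router_logits[i] for i in top])
    return list(zip(top, weights))


def moe_forward(x: float, experts: Sequence[Callable[[float], float]],
                router_logits: Sequence[float], k: int = 2) -> float:
    """Mix the outputs of only the k routed experts; the others never run.

    In a real layer the router logits come from a learned projection of the
    token's hidden state; here they are passed in directly.
    """
    return sum(w * experts[i](x) for i, w in top_k_route(router_logits, k))


if __name__ == "__main__":
    # Hypothetical: 4 toy experts that scale their input by 0, 1, 2, 3
    experts = [lambda x, s=s: s * x for s in range(4)]
    logits = [0.1, 2.0, 1.0, -1.0]  # hypothetical router scores for one token
    print(top_k_route(logits, k=2))  # experts 1 and 2 are selected
    print(moe_forward(1.0, experts, logits, k=2))
```

Because only k experts run for each token, an MoE model's per-token compute tracks its active parameters while its memory footprint tracks its total parameters, which is why MoE parameter counts need to be read carefully when comparing against dense models like Llama 3.1 8B.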

Why is local deployment of AI models important?

Local deployment offers several key advantages: it keeps prompts and results on your own hardware for privacy and security, replaces per-request API fees with hardware and running costs you control, can reduce latency by removing the network round trip, and prevents vendor lock-in. It also allows customization and integration into private systems without depending on an external service's uptime or policy changes.