Key Takeaways

- OpenAI posted a cryptic tweet stating "This is not a screenshot" with a video link, strongly hinting at a new AI video generation model.

- If confirmed, this would mark a direct move into a space currently led by rivals like Runway and Pika.

What Happened

On April 15, 2026, OpenAI's official X (formerly Twitter) account posted a cryptic message: "This is not a screenshot." The tweet linked to a video that has since been viewed millions of times. The video itself is a high-quality sequence that is likely AI-generated. The phrasing is a clear nod to the AI video generation community, where a common benchmark for model quality is whether a still frame from a generated video could be mistaken for a real photograph or a screenshot of real footage.

Context

This teaser is OpenAI's most direct signal yet that it is preparing to launch a text-to-video or image-to-video generation model. The company has been a dominant force in text (GPT series) and image generation (DALL-E), but has not yet released a public, general-purpose video model. The AI video generation landscape has been rapidly evolving, led by companies like Runway (Gen-3 Alpha), Pika Labs, and Stability AI (Stable Video Diffusion), alongside recent integrated features from giants like Google (Veo) and Meta.

A high-quality video model from OpenAI would represent a significant escalation in this competitive arena. The teaser suggests the model may focus on temporal coherence and visual fidelity, two areas where current models often struggle.

gentic.news Analysis

This teaser is a classic OpenAI launch tactic: it generates immense hype with minimal information, much like the pre-release buzz around GPT-4. The timing also looks strategic. The AI video market is heating up but still lacks a definitive, universally accessible leader. OpenAI's brand recognition and existing API ecosystem could let it capture the market quickly if its model delivers the quality the teaser implies.

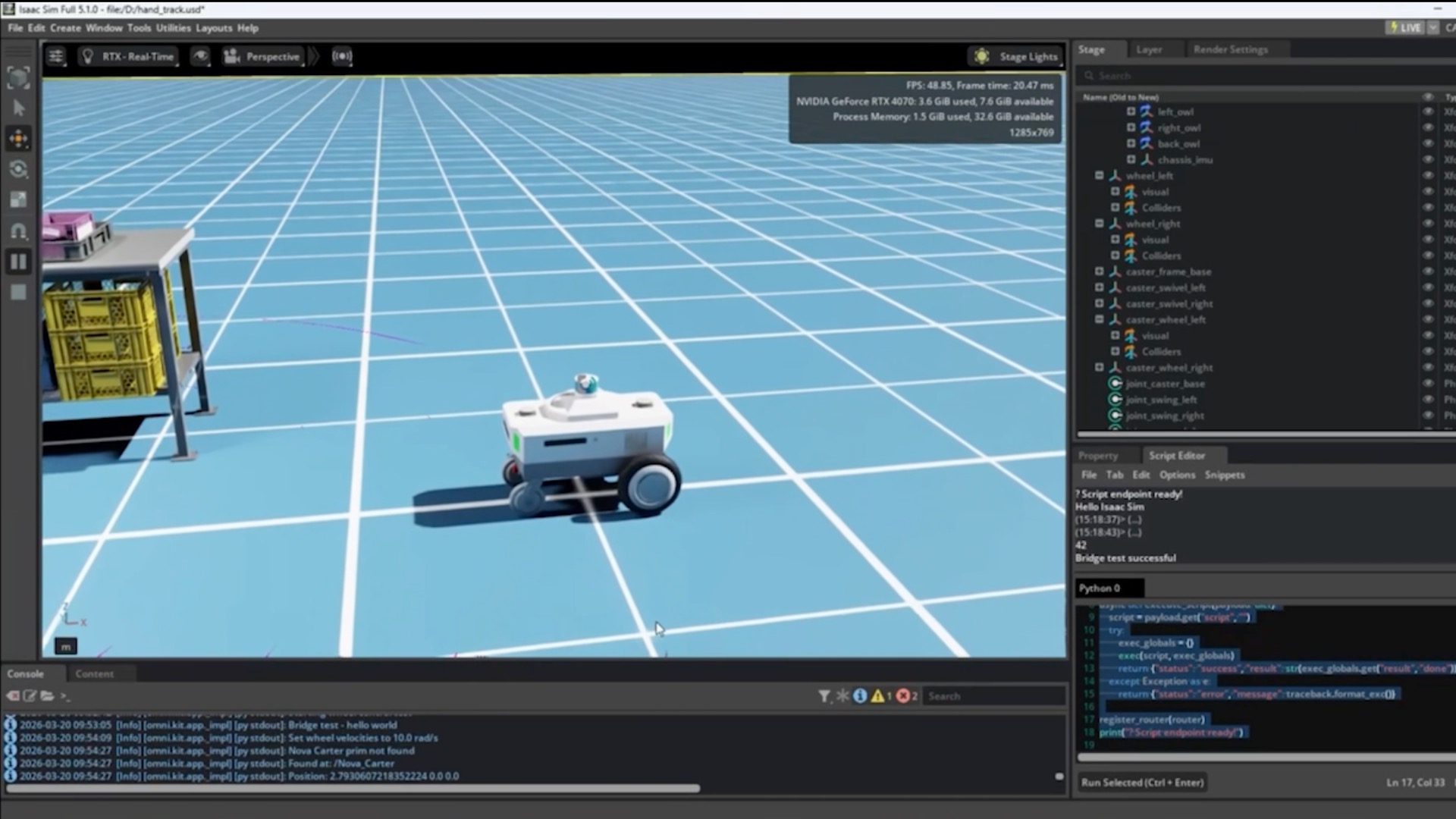

Technically, the key challenge OpenAI must solve is not single-frame quality, where DALL-E 3 already excels, but maintaining that quality across consistent, multi-second motion. The tweet's wording, which stresses that the clip is moving footage rather than a still image, targets exactly this benchmark. If the model succeeds, it could change how applications are built, making high-fidelity AI video a standard tool for creators and marketers, and potentially a source of synthetic training data for robotics and autonomous vehicles.

However, the launch will face immediate scrutiny. Competitors have a head start in iterating on user feedback and tackling specific issues such as precise motion control. OpenAI's model will be compared directly against Runway's Gen-3 Alpha for filmmaker-friendly control and against Pika for user experience. Furthermore, the compute cost and API pricing of video generation will be critical factors for developer adoption, an area where OpenAI has sometimes priced at a premium.

Frequently Asked Questions

What did OpenAI's tweet mean?

The tweet "This is not a screenshot" with a video link is a teaser for a new AI video generation model. It implies that the video is AI-generated and of such high quality that a single frame from it could be mistaken for a real photograph.

When will OpenAI's video AI be released?

OpenAI has not announced a release date. Based on its history, a cryptic tweet like this often precedes a full announcement or research paper within days or weeks.

How will OpenAI's video model compare to Runway or Pika?

It's too early for a technical comparison. However, given the scale of its resources, OpenAI's entry will likely raise the baseline for visual fidelity and temporal coherence. The key differentiators will be the model's controllability, its API cost, and how well it integrates with OpenAI's existing tools such as ChatGPT.

Is this Sora, OpenAI's text-to-video model?

OpenAI's previously announced video research model is called Sora. This teaser is most likely related to the Sora project, potentially signaling its transition from a research preview to a publicly accessible product or API.