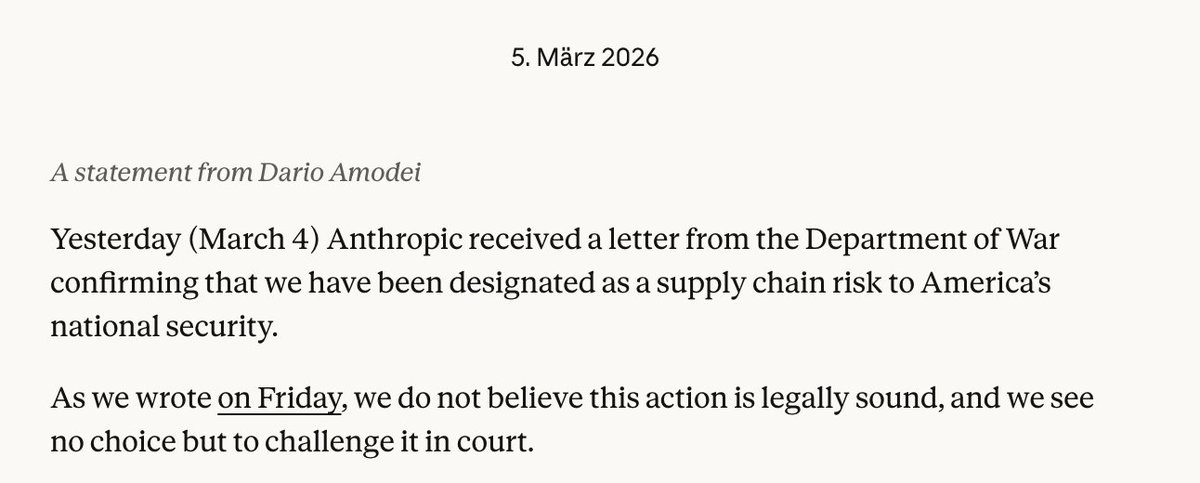

According to reports from journalist Kimi (via Twitter/X), the Pentagon has delivered an ultimatum to Anthropic CEO Dario Amodei: grant the U.S. military "unfettered access" to the Claude AI system by this Friday or see the company's relationship with the Pentagon terminated. If accurate, this development, first reported on social media and now circulating within defense and AI policy circles, marks a critical pressure point in the ongoing tension between national security imperatives and AI safety principles.

The Reported Ultimatum

The reported demand, attributed to Defense Secretary Pete Hegseth, gives Anthropic until the end of this week to comply with what appears to be a comprehensive access request. The specific technical meaning of "unfettered access" remains undisclosed, but the term typically implies minimal restrictions on how the AI system can be deployed, potentially including integration into command systems, intelligence analysis, operational planning, or other military applications.

Anthropic, founded by former OpenAI researchers including Dario and Daniela Amodei, has positioned itself as a leader in AI safety research. The company's Constitutional AI approach explicitly aims to create systems aligned with human values and resistant to harmful applications. This philosophical foundation creates inherent tension with unrestricted military deployment.

Anthropic's Safety-First Positioning

Since its founding, Anthropic has emphasized its commitment to developing AI responsibly. The company's research papers and public statements consistently highlight concerns about AI misuse, including in military contexts. Its Constitutional AI framework involves training models to follow a set of written principles that explicitly discourage harmful applications.

This safety-first approach has attracted significant investment, including from Google and Amazon, while also appealing to researchers and employees concerned about ethical AI development. The reported Pentagon ultimatum directly challenges this foundational identity and, if accurate, would force Anthropic to choose between government partnership and its stated principles.

Broader Context: AI and National Security

The Pentagon's reported pressure on Anthropic occurs within a larger geopolitical context of AI competition, particularly with China. U.S. defense officials have repeatedly emphasized the need to maintain technological superiority, with AI identified as a critical battlefield technology. The Department of Defense's Joint All-Domain Command and Control (JADC2) initiative, which aims to connect sensors and decision-makers across the military services, relies in part on AI to process and share that data.

Previous military-AI collaborations have faced scrutiny. Google's Project Maven, which involved AI for drone imagery analysis, sparked employee protests; Google ultimately chose not to renew the contract and published AI principles restricting certain military applications. Microsoft and Amazon have faced less public resistance to their defense contracts, though internal debates persist.

The Friday Deadline: Implications and Scenarios

As the reported Friday deadline approaches, several scenarios emerge:

Compliance: Anthropic could grant the requested access, potentially modifying Claude's safety constraints for military use. This would represent a significant philosophical shift and could trigger employee departures and reputational damage within the AI safety community.

Negotiation: The two sides could reach a compromise involving restricted access with specific safeguards, oversight mechanisms, or limited use cases. This middle path would test both parties' flexibility.

Refusal: Anthropic could maintain its principles and lose its relationship with the Pentagon. This would demonstrate commitment to AI safety, but it could also reduce the government's influence over Anthropic's development and cede military AI work to less safety-conscious competitors.

Extension: The deadline could be extended as negotiations continue behind closed doors.

Industry-Wide Implications

This standoff has implications beyond Anthropic:

- Precedent Setting: The outcome will establish patterns for how AI safety companies engage with government agencies

- Talent Dynamics: Employee reactions at Anthropic could influence where AI researchers choose to work

- Investor Calculations: Venture capital and corporate investors will assess how AI safety commitments affect market opportunities

- Regulatory Environment: This conflict may influence upcoming AI legislation and oversight mechanisms

Ethical and Strategic Considerations

The ethical dimensions are complex. Proponents of military access argue that:

- U.S. military use of AI is preferable to adversaries developing similar capabilities without safety constraints

- AI could reduce civilian casualties through more precise targeting and intelligence

- National security requires maintaining technological edge

Critics counter that:

- Unfettered military AI could lower thresholds for conflict and accelerate warfare

- Autonomous weapons systems raise profound ethical questions

- Military applications contradict the harm-prevention principles of safety-focused AI companies

Looking Forward

Regardless of Friday's outcome, this confrontation highlights growing pains in the AI-government relationship. As AI capabilities advance, similar tensions will likely emerge across national security, law enforcement, and intelligence applications. The Anthropic-Pentagon dynamic serves as an early test case for how society will navigate the intersection of powerful AI systems, corporate ethics, and state power.

Source: Initial report from @kimmonismus on Twitter/X regarding Defense Secretary Pete Hegseth's reported ultimatum to Anthropic CEO Dario Amodei.