Key Takeaways

- Pinterest's engineering team published a detailed technical breakdown of 'request-level deduplication'—a family of techniques that eliminate redundant processing of user data across thousands of candidate items in their recommendation system.

- This approach was critical to scaling their Foundation Model by 100x while controlling infrastructure costs.

What Happened

Pinterest's machine learning engineering team has published a comprehensive technical case study on how they scaled their recommendation system infrastructure to support massive model growth. The core innovation they detail is request-level deduplication—a systematic approach to eliminating redundant processing of identical user data across the recommendation funnel.

This work was driven by the scaling of their Foundation Model (presented at ACM RecSys 2025), which achieved a 100x increase in transformer dense parameters and a 10x increase in model dimension. Such scaling creates "massive infrastructure pressure" on storage, training, and serving costs. The engineers state that request-level deduplication is "the single highest-impact technique we've deployed to hold costs in check across all three dimensions."

Technical Details: The Deduplication Challenge

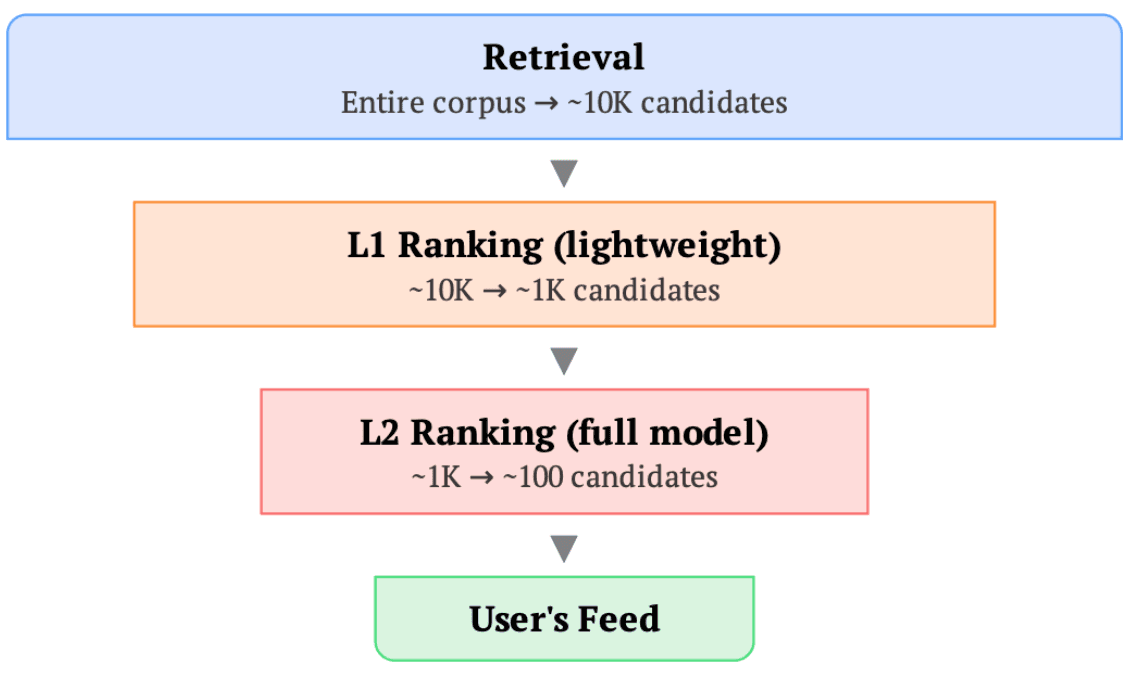

In a typical recommendation system, when a user opens their feed (a "request"), the system goes through retrieval and ranking stages. The same massive block of user data—including a sequential history of approximately 16,000 tokens encoding all user actions—flows through every stage and is duplicated for every item scored, creating hundreds to thousands of identical copies per request.

Storage Compression via Intelligent Sorting

The first application is at the data storage layer. By leveraging Apache Iceberg with user ID and request ID-based sorting, they achieve 10–50x storage compression on user-heavy feature columns. When rows sharing the same request are physically co-located in storage, columnar compression algorithms automatically handle the deduplication. This sorted data architecture also enables:

- Bucket joins: Eliminating expensive shuffle operations during data processing.

- Efficient backfills: Updating only affected user segments rather than entire datasets.

- Incremental feature engineering: Appending new columns without duplicating entire datasets.

- Stratified sampling: Ensuring training datasets maintain proper user diversity.

Training Correctness: Two Critical Fixes

Switching to request-sorted data initially caused 1–2% regressions in offline evaluation metrics due to disrupted statistical assumptions.

Batch Normalization Disruption: With request-sorted data, training batches become concentrated around fewer users, causing batch-level statistics to fluctuate dramatically. This was particularly problematic for BatchNorm layers. Their solution was implementing Synchronized Batch Normalization (SyncBatchNorm), which aggregates statistics across all training devices before normalization, effectively creating a "statistical batch size" that restores representative statistics.

In-Batch Negative Corruption: In contrastive learning frameworks like those used for retrieval, models learn by distinguishing positive items from negatives within the same batch. With IID (independent and identically distributed) sampling, the chance that a randomly sampled in-batch negative is actually a positive for the anchor user is negligible. With request-sorted data, batches contain many engagements from the same user, causing the false negative rate to jump from ~0% to as high as ~30%. Their fix was user-level masking in the loss function, excluding negatives that belong to the same user as the anchor.

Both fixes were implemented with minimal overhead and fully recovered model quality.

Serving Efficiency

While the article cuts off before detailing serving optimizations, it establishes that the same deduplication principle applies throughout the ML lifecycle. The massive reduction in data movement and redundant computation directly translates to serving throughput gains and cost reduction.

Retail & Luxury Implications

The techniques Pinterest describes are directly applicable to any retail or luxury company operating large-scale personalized recommendation systems. As these companies invest in more sophisticated sequential understanding models (like the Instance-As-Token (IAT) framework mentioned in the accompanying arXiv abstract, which we covered on 2026-04-13), the data redundancy problem becomes acute.

Concrete applications include:

- Luxury E-commerce Platforms: Processing long user history sequences (browsing, wishlisting, purchases) for thousands of candidate products in real-time.

- Personalized Content Feeds: For brand apps and websites showing curated looks, editorial content, or new arrivals.

- Size & Fit Recommendation Systems: Where user interaction history with specific items and categories is crucial but computationally expensive to process repeatedly.

The storage compression alone (10-50x) represents massive potential cost savings for companies storing petabytes of user interaction data. More importantly, it enables the deployment of larger, more capable models—like the 100x scaled transformer mentioned—without facing prohibitive infrastructure costs. This is the key strategic insight: efficiency techniques like deduplication are not just cost-saving measures but enablers of model capability that directly impact recommendation quality and user experience.

The training corrections (SyncBatchNorm and user-level masking) are particularly valuable as they provide a blueprint for maintaining model quality when optimizing data pipelines—a common challenge when moving from research prototypes to production systems.

Implementation Approach

For technical leaders considering this approach:

- Data Layer First: Implement user/request ID sorting in your feature store (using tools like Apache Iceberg or similar). This delivers immediate storage savings and enables the rest of the pipeline.

- Model Architecture Audit: Identify components vulnerable to correlated batches, particularly BatchNorm layers and contrastive learning objectives. Plan for SyncBatchNorm implementation.

- Phased Rollout: Test the deduplicated pipeline on a single model surface with rigorous A/B testing to validate that quality metrics are preserved.

- Compute Infrastructure: Ensure your training framework supports efficient cross-device synchronization for SyncBatchNorm.

The Pinterest team notes that the communication overhead for SyncBatchNorm was "negligible compared to the training speedups gained from deduplicated computation."

Governance & Risk Assessment

Maturity Level: High. This is a production-proven technique at Pinterest scale.

Privacy Consideration: Positive. Deduplication reduces the number of times raw user data is processed and moved, potentially decreasing exposure surface area.

Bias Risk: Managed. The stratified sampling enabled by request-sorted data helps prevent over-representation of highly active users, promoting better diversity in training data.

Dependency: Moderate. Requires compatible data infrastructure (columnar storage with sorting) and distributed training capabilities.