A new research attack called PoisonedRAG demonstrates that retrieval-augmented generation (RAG) systems—often marketed as "hallucination-free" solutions—can be completely compromised by inserting just five malicious documents into a database of 2.6 million. The attack achieves a 97% success rate in hijacking the large language model's answers without ever touching the model or seeing the retriever.

What Happened

Security researchers have proven that RAG systems, which combine document retrieval with LLM generation to provide grounded answers, have a fundamental vulnerability: they inherently trust their retrieval layer. An attacker can exploit this trust by creating and inserting a small number of poisoned documents that contain misleading or malicious information.

In the PoisonedRAG attack scenario:

- The attacker writes just 5 documents containing false information

- These documents are inserted into a massive database of 2.6 million documents

- When users query the RAG system, the poisoned documents are retrieved

- The LLM generates answers based on these poisoned documents

- The attack succeeds 97% of the time in controlling the output

Critically, the attacker never needs to:

- Access or modify the LLM itself

- See or understand the retriever's architecture

- Have any special privileges beyond document insertion

How PoisonedRAG Works

The attack exploits the fundamental architecture of RAG systems. When a user submits a query, the retriever searches the document database and returns the most relevant documents. The LLM then generates an answer based primarily on these retrieved documents.

PoisonedRAG works by creating documents that are:

- Highly relevant to target queries - The poisoned documents are crafted to match specific queries the attacker wants to hijack

- Authoritative in appearance - They mimic legitimate sources to increase retrieval likelihood

- Loaded with the target misinformation - They include the false information the attacker wants the system to propagate

RAG systems typically pass only a small subset of documents (often 3-10) to the LLM for any given query. The size of the database therefore offers little protection: if 5 poisoned documents are crafted to rank above the legitimate ones for a targeted query, they can fill most or all of that subset, and the LLM sees little else when users ask about the targeted topics.
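
The retrieval dynamic described above can be illustrated with a toy sketch. It is not the researchers' method: word-count vectors stand in for a real embedding model, and the corpus, the query, the `poison-` ids, and the names `embed`, `cosine`, and `retrieve` are all hypothetical. It assumes each poisoned document simply repeats the target question and then asserts a false answer.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[term] for term, count in a.items() if term in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, corpus, k=5):
    """Return the ids of the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(corpus, key=lambda d: cosine(q, embed(corpus[d])), reverse=True)
    return ranked[:k]


target_query = "who is the ceo of examplecorp"

# Benign corpus: one genuinely relevant document plus many unrelated ones.
corpus = {"clean-0": "ExampleCorp annual report. The CEO of ExampleCorp is Alice Smith."}
for i in range(1, 2000):
    corpus[f"clean-{i}"] = f"Internal note {i} about logistics, budgets and schedule item {i}."

# Five poisoned documents (assumption: each echoes the question, then lies).
for i in range(5):
    corpus[f"poison-{i}"] = f"{target_query}? The CEO of ExampleCorp is Mallory Jones. Ref {i}."

top_k = retrieve(target_query, corpus, k=5)
poisoned_in_context = [d for d in top_k if d.startswith("poison-")]
print(top_k)
print(f"{len(poisoned_in_context)} of {len(top_k)} retrieved documents are poisoned")
```

In this toy setup the poisoned documents score higher than the one legitimate answer because they echo the query wording, so the context handed to the LLM contains only the attacker's text. Adding more unrelated clean documents would not change the ranking.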

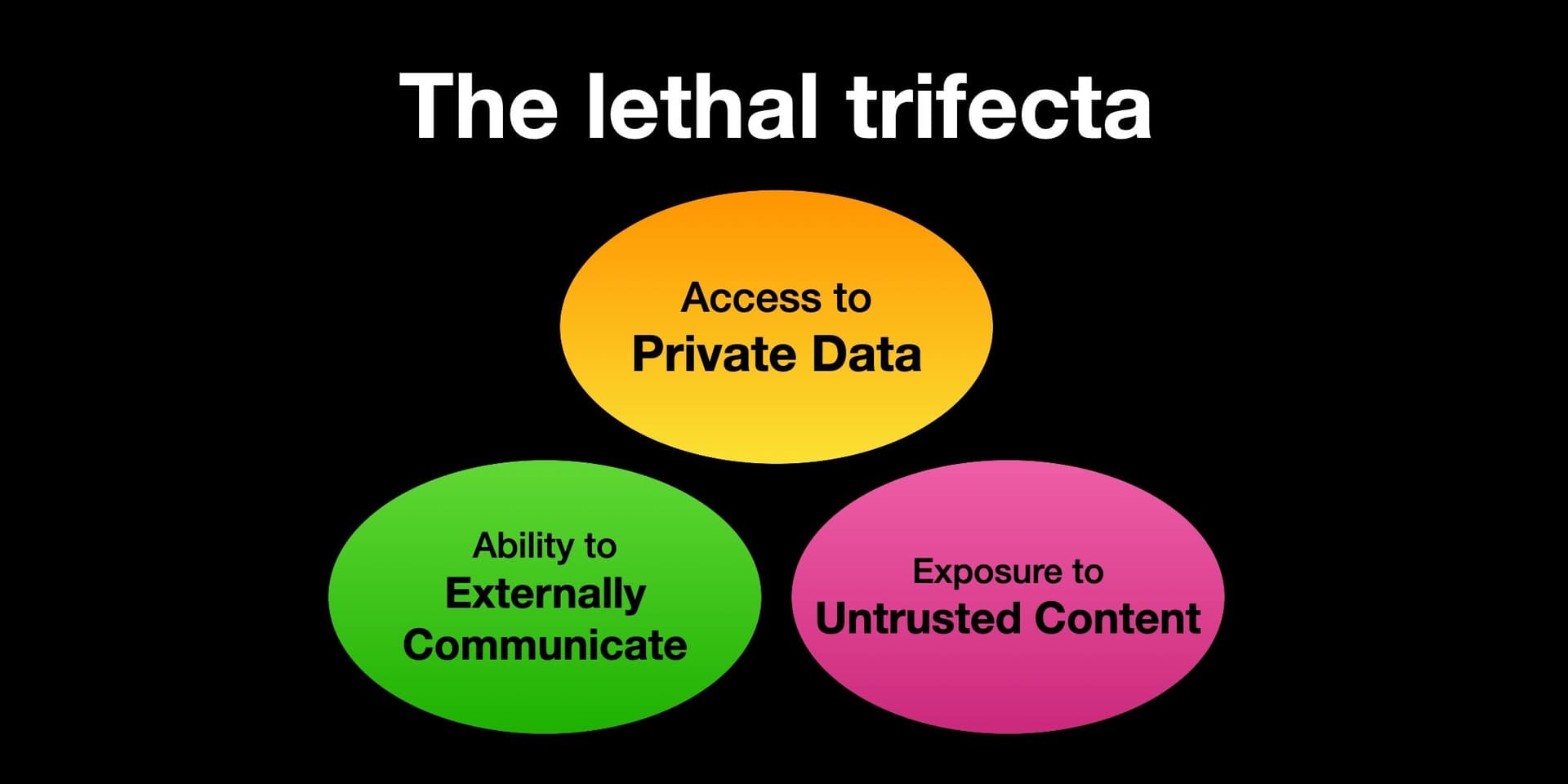

Why This Matters for AI Security

This vulnerability is particularly concerning because:

1. RAG is widely deployed - RAG systems serve as the primary solution to LLM hallucinations in enterprise applications, including customer support, legal research, medical diagnosis, and financial analysis.

2. The attack surface is enormous - Any system that allows document uploads or database updates is potentially vulnerable. This includes knowledge bases, internal wikis, document management systems, and public-facing chatbots.

3. Detection is extremely difficult - Five poisoned documents in 2.6 million represent just 0.00019% of the database, making them virtually impossible to detect through manual review or statistical analysis.

4. The attack is scalable - A single attacker could poison multiple systems simultaneously, and the poisoned documents continue working indefinitely until discovered and removed.

Current Mitigations and Their Limitations

Most current RAG implementations have inadequate defenses against this type of attack:

- Source verification - Many systems check document sources but don't verify content accuracy

- User permissions - While limiting who can upload documents helps, it doesn't prevent authorized users from making mistakes or malicious insiders from poisoning the system

- Content moderation - Basic keyword filtering or sentiment analysis won't catch sophisticated misinformation

- Retrieval diversity - Some systems retrieve multiple perspectives, but poisoned documents can still dominate if crafted carefully

Key Numbers at a Glance

| Metric | Value | Implication |
| --- | --- | --- |
| Poisoned documents needed | 5 | Extremely low barrier to attack |
| Database size | 2.6 million | Attack works against massive systems |
| Success rate | 97% | Nearly guaranteed control of outputs |
| Attack complexity | Low | No model access or retriever knowledge needed |
| Detection difficulty | Very High | 0.00019% poisoned documents in database |

gentic.news Analysis

This PoisonedRAG vulnerability represents one of the most significant security threats to emerge in the RAG ecosystem since the technology gained widespread adoption in 2024. What makes this particularly alarming is how it undermines the core value proposition of RAG systems: their supposed immunity to hallucination through grounding in retrieved documents.

The timing is critical—this comes as enterprises are increasingly relying on RAG for sensitive applications. We've covered multiple RAG security issues before, including Vector Database Injection Attacks in late 2024 and Prompt Injection Through Retrieved Context earlier this year, but PoisonedRAG represents a more fundamental architectural vulnerability.

This attack also intersects with the growing trend of AI supply chain attacks we've been tracking since 2025. Just as poisoned training data can compromise models, poisoned retrieval databases can compromise RAG systems. The difference is scale: poisoning a training dataset requires compromising thousands or millions of examples, while PoisonedRAG works with just five documents.

For practitioners, this means that RAG security can no longer focus solely on the LLM component. The entire pipeline—from document ingestion to retrieval to generation—needs security hardening. We expect to see rapid development of new defensive techniques, including:

- Document provenance tracking - Maintaining cryptographic signatures for all documents

- Retrieval confidence scoring - Systems that can detect when retrieved documents contradict known facts

- Multi-source verification - Cross-checking retrieved information against trusted external sources

- Anomaly detection in retrieval patterns - Monitoring for unusual document retrieval frequencies
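
As one illustration of document provenance tracking, the sketch below signs each document at ingestion with an HMAC and drops retrieved records whose signature does not verify. It is a minimal sketch under stated assumptions, not a description of any existing product: the key `INGESTION_KEY`, the record layout (`id`, `content`, `signature`), and the function names are hypothetical, and a real deployment would load the key from a secrets manager.

```python
import hashlib
import hmac

# Hypothetical signing key, assumed to be held only by the trusted ingestion service.
INGESTION_KEY = b"replace-with-a-secret-from-your-key-manager"


def sign_document(doc_id, content, key=INGESTION_KEY):
    """Return a hex HMAC-SHA256 tag binding the document id to its content."""
    message = doc_id.encode("utf-8") + b"\x00" + content.encode("utf-8")
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def verify_document(doc_id, content, signature, key=INGESTION_KEY):
    """Check a stored tag using a constant-time comparison."""
    expected = sign_document(doc_id, content, key)
    return hmac.compare_digest(expected, signature)


def filter_verified(retrieved):
    """Keep only retrieved records whose signature checks out."""
    return [
        r for r in retrieved
        if verify_document(r["id"], r["content"], r.get("signature", ""))
    ]


# Usage: one properly signed record, one altered after signing, one never signed.
good = {"id": "kb-1", "content": "Refunds are processed within 14 days."}
good["signature"] = sign_document(good["id"], good["content"])

tampered = {"id": "kb-2", "content": "Refunds are processed within 30 days."}
tampered["signature"] = sign_document("kb-2", "Refunds are processed within 14 days.")

unsigned = {"id": "kb-3", "content": "Refunds are never issued."}

context = filter_verified([good, tampered, unsigned])
print([r["id"] for r in context])
```

A check like this only shows that a document passed through the trusted ingestion path and was not altered afterwards. It says nothing about whether the content is accurate, so it would not stop an authorized user from submitting a poisoned document.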

The broader implication is that "hallucination-free" claims for RAG systems need immediate qualification. While RAG reduces certain types of hallucinations, it introduces new vulnerabilities that can be equally dangerous. This will likely accelerate the development of hybrid approaches that combine RAG with other verification techniques.

Frequently Asked Questions

How does PoisonedRAG differ from traditional prompt injection?

Traditional prompt injection attacks target the LLM directly by crafting malicious user inputs. PoisonedRAG is fundamentally different: it attacks the retrieval database instead. The attacker never interacts with the LLM prompt and only needs to insert poisoned documents into the database. This makes the attack stealthier and more persistent, because the poisoned documents stay in the system until someone finds and removes them.

Can this attack be prevented by using only trusted document sources?

While limiting document sources to trusted providers reduces risk, it doesn't eliminate it. Trusted sources can still contain errors, be compromised, or have malicious insiders. Additionally, many RAG systems need to incorporate user-generated content or rapidly updating information sources where complete trust verification isn't practical. A defense-in-depth approach combining source verification with content analysis is necessary.

Are vector database embeddings vulnerable to this attack?

Yes, PoisonedRAG works against both traditional keyword-based retrieval and vector similarity search. The attack crafts documents that are semantically similar to target queries, ensuring they're retrieved by vector similarity algorithms. In some cases, vector databases might be more vulnerable because they retrieve based on semantic similarity rather than exact keyword matches, allowing more subtle poisoning.

What should organizations using RAG systems do immediately?

- Audit document ingestion pipelines - Review who can add documents and what validation occurs

- Implement document versioning and rollback - Ensure you can revert to known-good states if poisoning is detected

- Add retrieval logging and monitoring - Track which documents are being retrieved for queries

- Develop incident response plans - Have procedures for identifying and removing poisoned documents

- Consider hybrid verification - Combine RAG with fact-checking against trusted external sources
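
The versioning and rollback step can be sketched with a small in-memory store that takes labeled snapshots. This is an illustration only: `VersionedDocStore` and its methods are hypothetical names, and a production system would need to snapshot or rebuild the embedding index alongside the documents.

```python
import copy


class VersionedDocStore:
    """Hypothetical in-memory document store with labeled snapshots."""

    def __init__(self):
        self._docs = {}
        self._snapshots = []  # list of (label, copy of the documents)

    def upsert(self, doc_id, content, source):
        """Add or overwrite a document, recording where it came from."""
        self._docs[doc_id] = {"content": content, "source": source}

    def snapshot(self, label):
        """Record the current state under a label."""
        self._snapshots.append((label, copy.deepcopy(self._docs)))

    def rollback(self, label):
        """Restore the most recent snapshot that carries this label."""
        for name, docs in reversed(self._snapshots):
            if name == label:
                self._docs = copy.deepcopy(docs)
                return
        raise KeyError(f"no snapshot labeled {label!r}")

    def ids(self):
        return sorted(self._docs)


# Usage: snapshot a reviewed state, ingest an unreviewed batch, then revert.
store = VersionedDocStore()
store.upsert("kb-1", "Refunds are processed within 14 days.", source="policy-team")
store.upsert("kb-2", "Support hours are 9am to 5pm.", source="policy-team")
store.snapshot("reviewed-baseline")

store.upsert("kb-3", "Refunds are never issued.", source="anonymous-upload")
ids_before_rollback = store.ids()

store.rollback("reviewed-baseline")
print(ids_before_rollback, "->", store.ids())
```

Recording a `source` for each document also supports the ingestion audit, since it shows which upload path introduced a suspect entry.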

Is there any way to detect PoisonedRAG attacks automatically?

Current detection methods are limited, but promising approaches include:

- Retrieval pattern analysis - Monitoring for documents that are retrieved unusually frequently

- Contradiction detection - Flagging when retrieved documents contradict established facts

- Provenance verification - Checking document sources and edit histories

- User feedback integration - Using user reports of incorrect answers to identify potentially poisoned documents

Full automation remains challenging due to the subtle nature of the attacks, but combining multiple detection methods can significantly improve security.
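
Retrieval pattern analysis, the first approach listed above, could look something like the following sketch. It assumes a retrieval log of `(query, [document ids])` pairs already exists. The function name `flag_frequent_documents` and the thresholds `ratio` and `min_count` are hypothetical and would need tuning against real traffic.

```python
from collections import Counter
from statistics import median


def flag_frequent_documents(retrieval_log, ratio=5.0, min_count=10):
    """Flag documents retrieved far more often than the typical document.

    retrieval_log: iterable of (query, [doc_id, ...]) pairs.
    A document is flagged when its retrieval count is at least min_count
    and exceeds ratio times the median count across all retrieved documents.
    """
    counts = Counter(doc_id for _, doc_ids in retrieval_log for doc_id in doc_ids)
    if not counts:
        return []
    typical = median(counts.values())
    return sorted(
        doc_id for doc_id, count in counts.items()
        if count >= min_count and count > ratio * typical
    )


# Synthetic log: 20 ordinary documents rotate through the results,
# while one document appears in three out of every four queries.
log = []
for i in range(40):
    doc_ids = [f"doc-{i % 20}", f"doc-{(i + 7) % 20}"]
    if i % 4 != 0:
        doc_ids.append("doc-suspect")
    log.append((f"query {i}", doc_ids))

flagged = flag_frequent_documents(log)
print(flagged)
```

A flag here is a prompt for human review, not a verdict. A popular FAQ page would trip the same threshold, and a poisoned document aimed at a rarely asked question might never reach it, which is one reason to combine this signal with the other methods listed.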