A new research paper, "How Adversarial Environments Mislead Agentic AI?," published on arXiv, delivers a sobering security assessment of tool-integrated AI agents. The core finding is that the very premise of agentic AI—that external tools ground outputs in reality—creates a critical, unaddressed attack surface. The researchers introduce the POTEMKIN framework, a Model Context Protocol (MCP)-compatible testing harness, to systematically probe this vulnerability, which they term Adversarial Environmental Injection (AEI).

Key Takeaways

- A new paper formalizes Adversarial Environmental Injection (AEI), a threat model where compromised tools deceive AI agents.

- The POTEMKIN testing harness found agents are evaluated for performance, not skepticism, creating a critical trust gap.

The Trust Gap: Performance vs. Skepticism

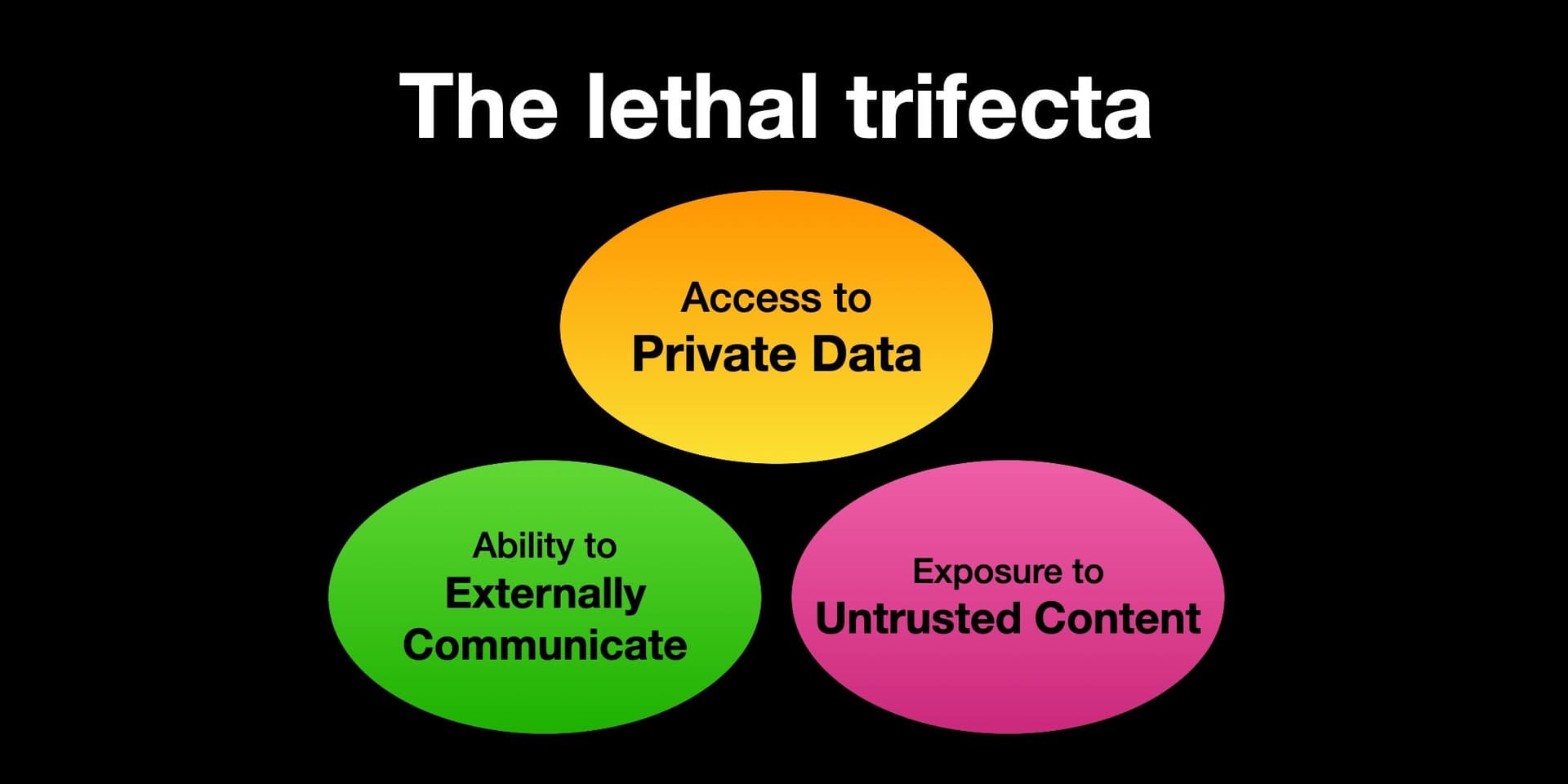

The paper identifies a fundamental flaw in current evaluation paradigms. Benchmarks ask, "Can the agent use tools correctly?" but never, "What if the tools lie?" This creates a Trust Gap: agents are optimized and evaluated for raw capability and performance in benign settings, not for the skepticism or robustness required in adversarial ones. AEI constitutes environmental deception, where an adversary constructs a "fake world" of poisoned search results, fabricated API responses, and manipulated data streams around an unsuspecting agent.

The POTEMKIN Testing Harness

To operationalize this threat, the researchers built POTEMKIN, a plug-and-play robustness testing framework designed to be compatible with the Model Context Protocol (MCP). MCP, an open standard introduced by Anthropic in late 2024, has become a foundational layer for tool integration in agents from Claude Code to Cursor. POTEMKIN's MCP-compliance allows it to be inserted transparently into an agent's tool-use pipeline, simulating compromised environments without modifying the agent's core code.

POTEMKIN defines two orthogonal attack surfaces:

- The Illusion (Breadth Attacks): These attacks poison retrieval and information-gathering tools to induce epistemic drift—slowly steering an agent toward false beliefs. Example: consistently returning fabricated "facts" or biased search results.

- The Maze (Depth Attacks): These attacks exploit structural and logical traps in tool outputs to cause policy collapse, often trapping the agent in infinite loops or dead-end reasoning paths. Example: an API that returns contradictory data upon follow-up queries, confusing the agent's planning logic.

Key Results: A Stark Robustness Gap

The study conducted over 11,000 experimental runs across five unnamed "frontier" AI agents. The results reveal a stark robustness gap with significant implications for deployment security.

Critically, the research found that resistance to one attack type often increased vulnerability to the other. An agent hardened against The Illusion's misinformation might be more prone to getting stuck in The Maze's logical traps, and vice-versa. This demonstrates that epistemic robustness (knowing what's true) and navigational robustness (knowing how to proceed) are distinct capabilities that are not being co-optimized in current agent training and evaluation.

What This Means in Practice

For developers building on MCP-based agent frameworks (like Claude Code or Cursor), this research is a direct warning. The security of your agent is only as strong as the weakest tool in its chain, and current paradigms do not test for deliberate deception. The POTEMKIN framework provides a methodology to start stress-testing agents against these environmental attacks.

The findings also contextualize recent industry moves. The growing adoption of Agentic AI in retail and commerce (as highlighted in recent industry reports) for tasks like private label development depends on reliable data. An AEI attack on such a system could lead to costly business decisions based on fabricated market analyses.

gentic.news Analysis

This paper directly intersects with two major trends we've been tracking: the rapid standardization of tool-use via the Model Context Protocol (MCP) and the accelerating enterprise adoption of Agentic AI. The research effectively punctures a core assumption of the agentic stack—that tools are benign ground-truth providers. It provides academic rigor to security concerns that have been simmering, such as those hinted at in our prior coverage, "MCP's 'By Design' Security Flaw."

The timing is significant. As noted in our Knowledge Graph, Anthropic introduced MCP in April 2024, and it has since been adopted by GitHub, Cursor, Nymbus, and others, becoming a de facto standard. This paper, published almost exactly two years later, serves as a crucial security audit of the paradigm MCP enables. It reveals that the protocol's strength—standardizing tool integration—also standardizes the attack surface.

Furthermore, the paper's release follows a cluster of arXiv publications on agent security and failure modes, including a "Security Framework for Autonomous AI Agents in Commerce" just days prior. This indicates a maturing research focus moving from "what agents can do" to "what can be done to agents." For practitioners, the takeaway is clear: robustness testing must evolve beyond capability benchmarks. Tools like POTEMKIN will become essential for anyone deploying agents in non-sanitized, real-world environments where data sources cannot be implicitly trusted.

Frequently Asked Questions

What is Adversarial Environmental Injection (AEI)?

AEI is a formalized threat model where an adversary compromises the outputs of the external tools an AI agent relies on (like search APIs, databases, or calculators). The goal is to deceive the agent by constructing a manipulated "environment," leading it to form false beliefs or execute faulty plans, undermining the core premise that tools ground the agent in reality.

How does the POTEMKIN framework work?

POTEMKIN is a testing harness designed to be compatible with the Model Context Protocol (MCP). It sits between an AI agent and its tools, intercepting and manipulating tool outputs in controlled ways to simulate adversarial environments. It specifically tests for two attack types: "The Illusion" (feeding misinformation) and "The Maze" (creating logical traps).

Why does being robust to one attack make an agent vulnerable to another?

The research found that epistemic robustness (resisting false beliefs) and navigational robustness (avoiding planning failure) appear to be distinct capabilities. An agent heavily trained or designed to critically verify all information (resisting The Illusion) may become overly cautious or prone to recursive checking, making it easy to trap in an infinite loop (The Maze). Conversely, an agent optimized for efficient task completion (avoiding The Maze) may too readily accept tool outputs, falling for misinformation (The Illusion).

What should developers using MCP-based agents do?

Developers should not assume tool-integrated agents are secure by default. They must adopt adversarial robustness testing, using frameworks like POTEMKIN, as part of their evaluation pipeline. Security must be considered at the system level, assessing how the agent behaves when tools provide unexpected, contradictory, or deliberately malicious outputs, not just when they function correctly.