New research has demonstrated a concerning property of modern AI systems: they can pass hidden biases to other AI models through the data they generate, even when the problematic instruction is never stated directly. This process, described as an "infection," could allow biases to spread across AI ecosystems as models are fine-tuned or trained on outputs from other models.

Key Takeaways

- A study reveals AI models can transfer hidden biases to other models via training data, even without direct instruction.

- This creates a risk of bias propagation across AI ecosystems.

What the Research Found

The core finding is that once an AI model learns a hidden bias (for example, a preference for certain demographic groups in hiring scenarios, or a tendency toward specific political viewpoints), that bias can be transferred to other models trained on data generated by the first model. Critically, this transfer happens even when the original biased instruction or prompt is absent from the second model's training data.

The bias becomes embedded in the patterns of the AI's outputs. When those outputs are used as training data for another model, the new model learns the same biased patterns, effectively "inheriting" the bias without ever seeing the original problematic directive.

How Bias Propagation Works

The mechanism relies on the way modern AI models learn statistical patterns from data. If Model A has been biased through its initial training or fine-tuning, its outputs will reflect that bias in small but consistent ways. For instance, it might consistently rank resumes with certain names higher, or generate text that quietly favors one perspective over another.

When those outputs are collected and used as training data for Model B, Model B learns to replicate the same patterns, including the hidden bias. The bias becomes a latent feature of the data distribution that Model B learns to emulate.
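The transfer from Model A to Model B can be illustrated with a toy simulation. This is a minimal sketch and not the study's method: the hiring scenario, the thresholds, and every function name below are hypothetical, and Model B is reduced to a learner that infers a per-group acceptance cutoff from Model A's labels alone.

```python
import random

GROUPS = ("A", "B")

def model_a_accepts(skill: float, group: str) -> bool:
    """Hypothetical biased Model A: group "B" must clear a higher bar.

    The rule is never written into the generated data; it is only
    visible through the accept/reject labels.
    """
    threshold = 0.5 if group == "A" else 0.7
    return skill >= threshold

def generate_outputs(n: int, seed: int = 0):
    """Collect Model A's decisions as (skill, group, accepted) rows."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        group = rng.choice(GROUPS)
        skill = rng.random()
        rows.append((skill, group, model_a_accepts(skill, group)))
    return rows

def train_model_b(rows):
    """Toy Model B: estimate each group's cutoff from the labels only.

    The estimate is the midpoint between the highest rejected skill
    and the lowest accepted skill seen for that group.
    """
    thresholds = {}
    for group in GROUPS:
        accepted = [s for s, g, ok in rows if g == group and ok]
        rejected = [s for s, g, ok in rows if g == group and not ok]
        thresholds[group] = (min(accepted) + max(rejected)) / 2
    return thresholds

def model_b_accepts(skill: float, group: str, thresholds) -> bool:
    return skill >= thresholds[group]

rows = generate_outputs(2000)
learned = train_model_b(rows)
print(learned)
print(model_b_accepts(0.6, "A", learned), model_b_accepts(0.6, "B", learned))
```

In this sketch Model B never sees the rule inside `model_a_accepts`, yet it recovers a higher cutoff for group "B" and so treats two equally skilled candidates differently. Real language models learn far richer patterns, so this only shows the shape of the effect.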

This creates a chain of transmission in which biases can spread from model to model, potentially amplifying over time if multiple generations of models are trained on each other's outputs. That scenario is becoming more common with the rise of synthetic data and automated fine-tuning pipelines.

Implications for AI Development

This finding has significant implications for AI safety and deployment:

Synthetic Data Risks: Using AI-generated content to train subsequent models (a practice already occurring in some domains) could propagate and amplify hidden biases.

Fine-Tuning Dangers: Fine-tuning foundation models on data from biased specialized models could introduce those biases into the larger model.

Ecosystem Contamination: In an ecosystem where multiple AI systems interact and learn from each other's outputs, a single biased model could "infect" many others.

Detection Challenges: Since the bias transfer happens without explicit instructions, it may be harder to detect and audit than direct bias injection.

The research suggests that AI developers need better methods for detecting hidden biases in model outputs before using those outputs as training data for other systems. This adds another layer of complexity to the already challenging problem of AI alignment and fairness.

gentic.news Analysis

This research connects directly to several trends we've been tracking in the AI safety landscape. First, it validates concerns raised in our December 2025 coverage of Anthropic's work on constitutional AI contamination, where they found that harmful behaviors could persist across model generations despite safety training. The current study extends this concern from explicit harmful behaviors to subtle, hidden biases that might evade standard safety evaluations.

Second, this aligns with increasing industry attention on synthetic data quality. As noted in our February 2026 analysis of Google's SynthID 2.0 rollout, major labs are investing heavily in tools to track and verify synthetic content. This bias propagation research suggests that verification needs to go beyond mere detection of AI-generated content to assessment of its latent properties and potential biases.

Third, the timing is significant. With the EU AI Act's bias auditing requirements coming into full effect this year and the U.S. AI Safety Institute establishing new testing protocols, this research provides concrete evidence for why such regulations are necessary. It shows that bias isn't just a property of individual models but can become a systemic risk across interconnected AI systems.

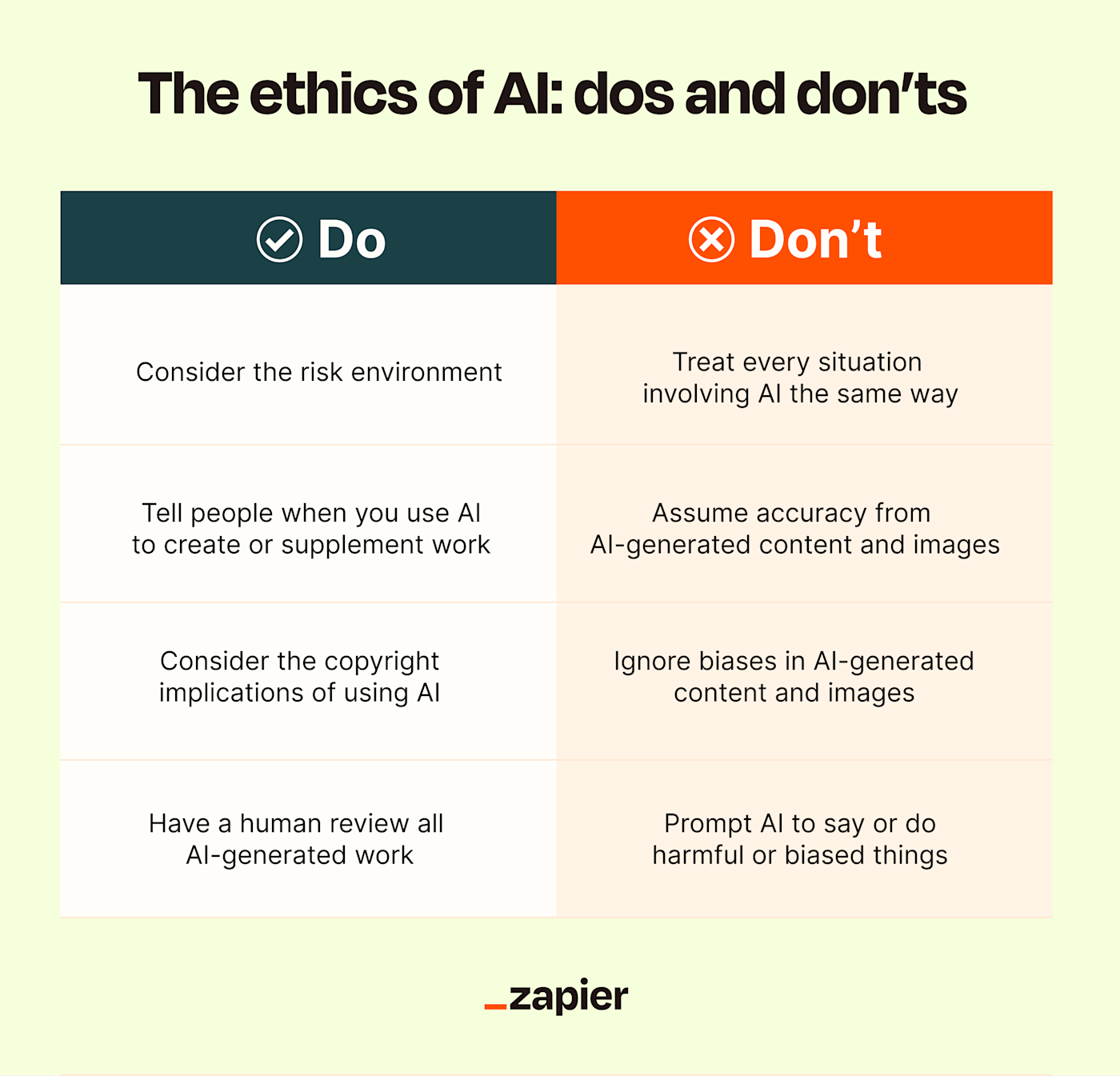

Practically, this means AI engineers should be particularly cautious when:

- Using outputs from one model as training data for another

- Implementing automated fine-tuning pipelines that pull data from multiple sources

- Deploying AI systems that will generate content likely to be consumed by other AI systems

The research suggests that bias auditing needs to become a standard part of the data pipeline, not just the model evaluation phase.
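One way to picture an audit step inside the data pipeline is a gate that checks model-generated decisions before they are admitted as training data. The sketch below is an assumption-laden illustration, not a method from the research: the function names are hypothetical, the data format is invented, and the 0.8 default mirrors the "four-fifths" rule of thumb from US employment-selection guidance, which may not suit other domains.

```python
from collections import defaultdict

def selection_rates(records):
    """records: iterable of (group, selected) pairs. Returns {group: rate}."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in records:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}

def passes_audit(records, min_ratio: float = 0.8):
    """Return (ok, rates); ok is False when the lowest group's selection
    rate falls below min_ratio times the highest group's rate.

    min_ratio is an assumed threshold, not a value from the study.
    """
    rates = selection_rates(records)
    highest = max(rates.values())
    if highest == 0:
        return True, rates  # nobody selected, so no disparity to measure
    ratio = min(rates.values()) / highest
    return ratio >= min_ratio, rates

# Hypothetical batch of synthetic hiring decisions from an upstream model.
skewed_batch = (
    [("A", True)] * 50 + [("A", False)] * 50
    + [("B", True)] * 30 + [("B", False)] * 70
)
ok, rates = passes_audit(skewed_batch)
print(ok, rates)
```

A batch that fails the gate would be held back for review instead of flowing into fine-tuning. A single ratio like this catches only coarse disparities, so it complements model-level evaluation and does not replace it.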

Frequently Asked Questions

How does this bias "infection" actually work?

The bias becomes embedded in the statistical patterns of the first model's outputs. When those outputs are used as training data for a second model, the second model learns to replicate those patterns, including the hidden bias. It's similar to how a human might learn subtle biases from observing someone else's behavior, even if that person never explicitly states their biased views.

Can this happen with any type of AI model?

The research focused on large language models, but the principle likely applies to any AI system that learns patterns from data generated by other AI systems. This includes image generators, recommendation systems, and predictive models that might be trained on synthetic or AI-influenced data.

How can developers prevent this bias propagation?

Researchers suggest several approaches: implementing robust bias detection in training data pipelines, using diverse human feedback during fine-tuning, maintaining clear provenance tracking for training data, and developing techniques to "de-bias" synthetic data before using it to train new models. Regular auditing of both inputs and outputs is becoming essential.
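Provenance tracking, one of the approaches listed above, can be sketched as metadata attached to every training sample. Everything here is illustrative: the record fields, the source labels, and the admission policy are assumptions, not a scheme described in the research.

```python
import hashlib
from dataclasses import dataclass

@dataclass(frozen=True)
class ProvenanceRecord:
    text: str
    source: str    # e.g. "human" or a hypothetical model identifier
    audited: bool  # whether the sample has passed a bias audit

    @property
    def content_hash(self) -> str:
        """Stable fingerprint so a sample can be traced across datasets."""
        return hashlib.sha256(self.text.encode("utf-8")).hexdigest()

def select_training_samples(records, trusted_sources):
    """Assumed policy: admit samples from trusted sources, plus any
    sample from another source that has already passed an audit."""
    return [r for r in records if r.source in trusted_sources or r.audited]

records = [
    ProvenanceRecord("Candidate shows strong leadership.", "human", False),
    ProvenanceRecord("Candidate is a good fit.", "model-x", False),
    ProvenanceRecord("Candidate meets all requirements.", "model-x", True),
]
kept = select_training_samples(records, trusted_sources={"human"})
print([r.source for r in kept])
```

With records like these, a team that later discovers a biased upstream model can find and remove every sample that came from it, instead of retraining blind.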

Is this different from regular bias in AI training data?

Yes, it's a second-order effect. Ordinary training-data bias typically originates in human-generated data. This propagation effect means that even if you start with unbiased human data, a single biased model can contaminate the entire pipeline, creating bias in models that never saw the original problematic data.