A new research paper, highlighted by Wharton professor Ethan Mollick, underscores a growing and under-examined reality: people are already turning to AI models like ChatGPT for medical questions at scale, but the evidence base for whether this is beneficial, neutral, or harmful remains thin and methodologically flawed.

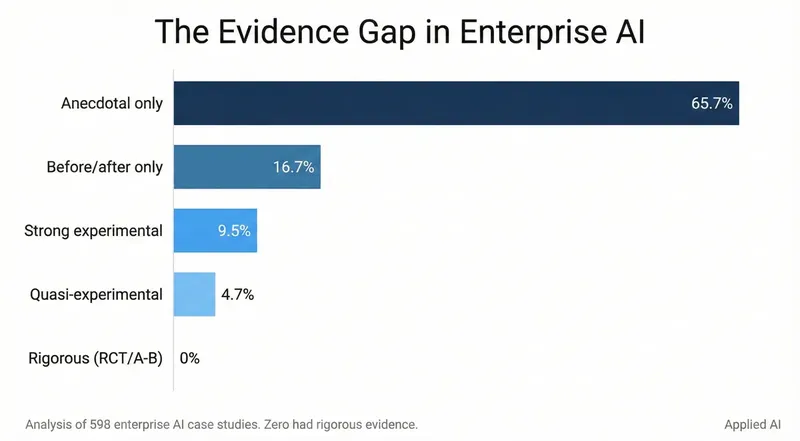

The core issue identified is a mismatch between real-world usage and academic evaluation. Most published research on AI medical advice uses older model generations (like GPT-3.5) and frames the comparison against the gold standard of licensed physicians. While comparing AI to doctors is valuable for understanding diagnostic limits, it misses a more practical and common scenario: when someone has a medical question, they are typically choosing between asking an AI chatbot or performing a traditional web search, consulting symptom checkers, or reading health forums. The critical, unanswered question is: How does advice from a state-of-the-art AI model compare to the information the same person would have likely found and used without AI?

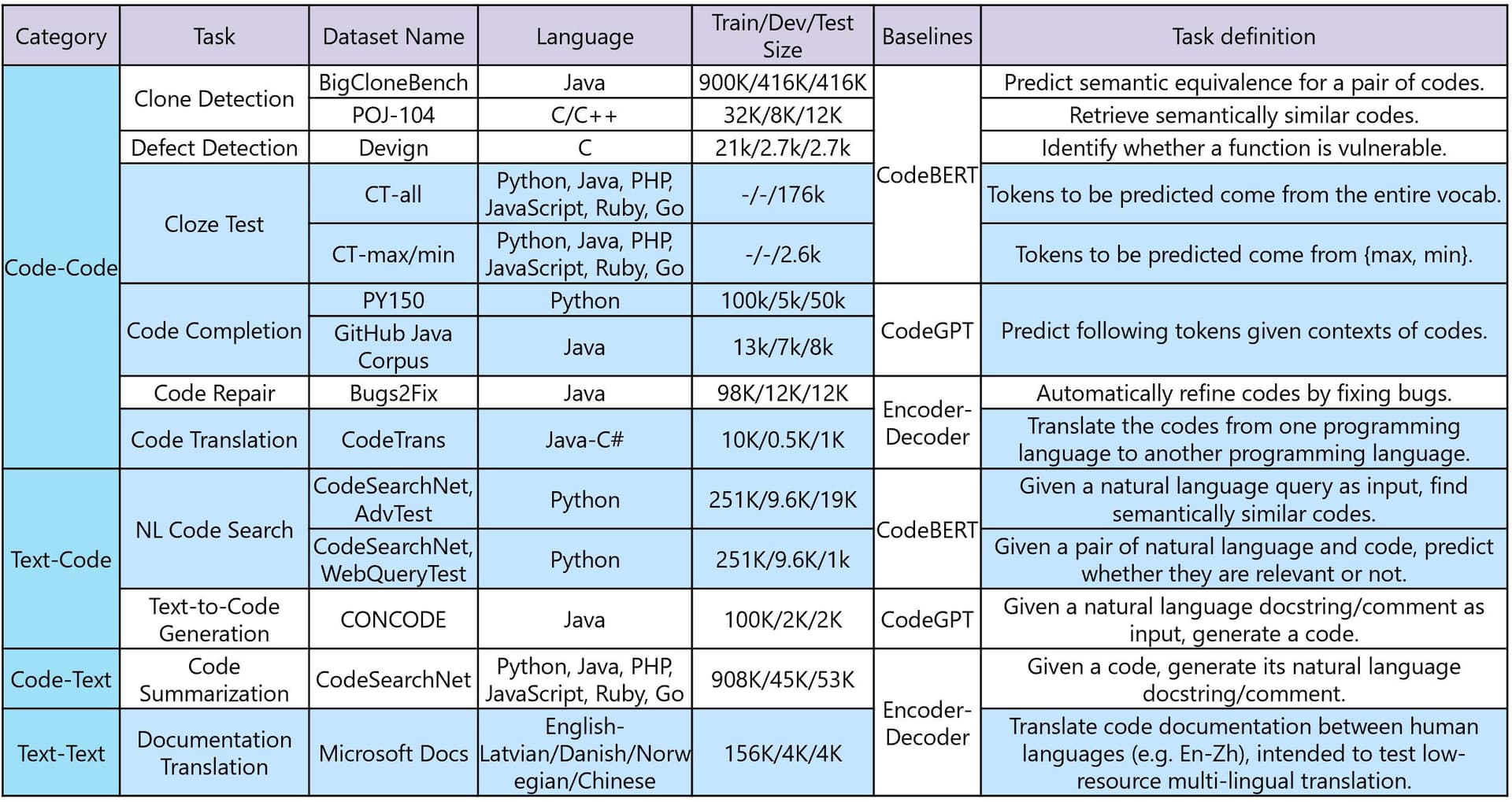

The Evidence Gap

The paper suggests the current research paradigm is lagging. Benchmarks comparing GPT-4 or Claude 3 to a doctor's diagnosis are important for establishing ceiling performance, but they don't measure the incremental impact of AI in a typical user's journey. A model doesn't need to outperform a board-certified specialist to be useful; it merely needs to provide more accurate, actionable, and less harmful information than the alternative sources a person would have used.

This gap leaves a significant blind spot. Without studies designed to measure this real-world substitution effect, it's impossible to say whether the massive, organic adoption of AI for health queries is a net positive for public health or a silent risk. The concern is that people may be over-relying on AI's confident tone without understanding its limitations, potentially delaying necessary care or acting on incorrect guidance.

Why This Matters Now

The call for new research is urgent. Model capabilities are advancing rapidly—GPT-4o, Claude 3.5 Sonnet, and Med-PaLM 2 represent a significant leap over the GPT-3.5 generation that populates many current studies. Furthermore, AI health advice is not a future possibility; it's a present-day behavior. Understanding the quality and impact of this advice compared to the status quo (e.g., WebMD, Mayo Clinic site, Reddit health communities) is essential for developers, policymakers, and healthcare providers to guide safe integration.

gentic.news Analysis

This paper touches on a critical and recurring theme in applied AI: the deployment-evaluation gap. As we covered in our analysis of AI Coding Assistants in the Enterprise, rapid user adoption often outpaces rigorous, real-world efficacy studies. The pattern is identical in healthcare: tools are used because they are accessible and convenient, not because their net benefit has been proven in the context of actual use.

The focus on comparing AI to doctors, while academically rigorous, reflects an older paradigm of AI as a direct professional tool. The current reality, as this paper notes, is of AI as a consumer-facing information intermediary. This aligns with trends we've tracked in the AI Search sector, where Perplexity AI and others are positioning LLMs as alternatives to Google for complex queries, including medical ones. The competitive landscape isn't just other AI models; it's the entire ecosystem of online health information.

For practitioners, the key takeaway is methodological. Evaluating AI for public health requires new benchmarks that simulate real user behavior and decision pathways. The next wave of research must answer: When someone uses AI instead of a search engine for a symptom, are they more or less likely to seek appropriate care? Does the advice reduce anxiety or increase it? These are the metrics that will determine whether this AI application is truly transformative or a digital placebo with hidden risks.

Frequently Asked Questions

What medical questions are people asking AI?

While the specific paper highlighted by Mollick may not break down query types, common use cases based on anecdotal and survey data include interpreting symptoms, seeking information on medication side effects, understanding lab results, and getting basic health information. These are often questions people might previously have typed into a search engine.

How accurate are current AI models for medical advice?

On professional medical licensing exam questions (e.g., USMLE), models like GPT-4 and Med-PaLM 2 can achieve passing or high scores, sometimes exceeding the average human medical student. However, these are closed-question benchmarks. Real-world medical questions are messy, lack context, and require nuanced understanding of risk—a scenario where model performance is less clearly established and where harmful "hallucinations" or overconfidence can occur.

Should I use ChatGPT or similar AI for medical advice?

Medical professionals and AI developers universally state that current general-purpose AI models are not substitutes for professional medical diagnosis, advice, or treatment. They can be useful for gathering general information but should be used with extreme caution. Any information obtained should be discussed with a healthcare provider before making decisions. The standard disclaimer applies: do not delay seeking professional medical care because of something an AI model said.

What would better research on this topic look like?

Ideal research would be longitudinal and observational, comparing outcomes for similar user groups who use AI versus traditional online resources for health information. It would use the latest model versions and measure practical outcomes like appropriateness of follow-up actions, user comprehension, and anxiety levels, rather than just accuracy on diagnostic puzzles. This is complex and ethically challenging, but necessary to move beyond the current evidence gap.