Key Takeaways

- A developer-focused article outlines decision frameworks for LLM finetuning—covering when it's worth the cost, how to approach it, and key trade-offs.

- For retail leaders, this is a practical primer on customizing models for brand-specific tasks.

What Happened

A new Medium article titled The Developer’s Guide to Finetuning LLMs: When, Why, and How (published on AI Mind) promises a practical walkthrough for engineers evaluating whether to finetune a large language model. While the full text is behind a link, the title alone signals a decision-oriented guide—likely covering scenarios where finetuning outperforms prompt engineering or retrieval-augmented generation (RAG), as well as data preparation, compute costs, and evaluation strategies.

This is a timely topic. LLMs are increasingly embedded in enterprise workflows, and retail/luxury brands are no exception. The choice between finetuning, RAG, or both has become a core architectural decision for AI teams.

Technical Details

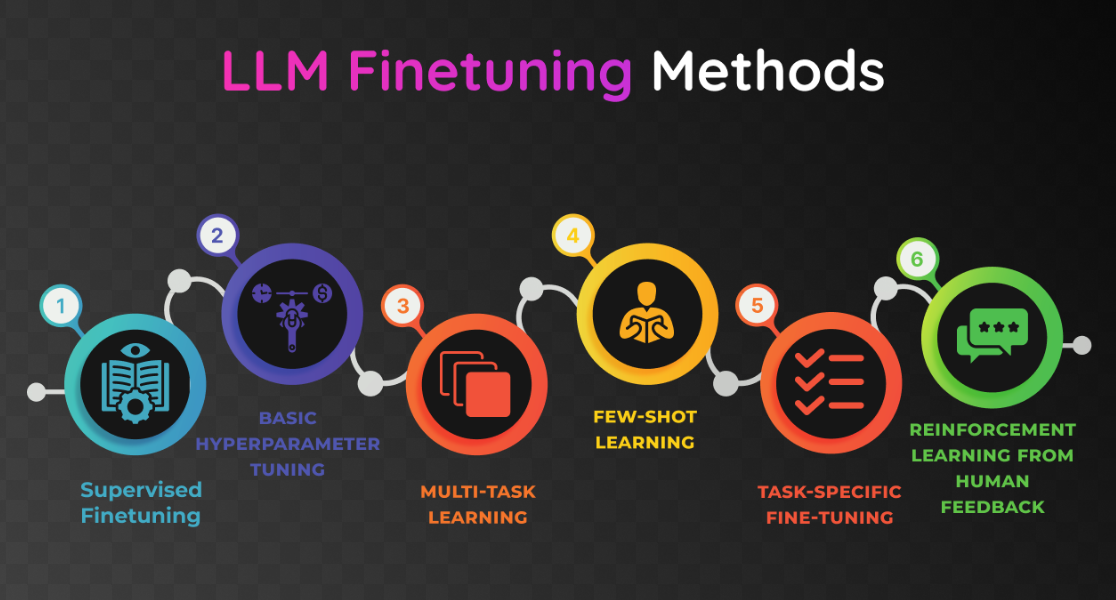

Finetuning involves taking a pre-trained LLM (e.g., LLaMA, Mistral, GPT) and further training it on domain-specific data to adjust its weights. The guide likely distinguishes between:

- Full finetuning (all weights updated) vs. parameter-efficient finetuning (PEFT) such as LoRA or QLoRA, which reduce memory and compute requirements.

- When to finetune: high-value, stable tasks like brand tone-of-voice adherence, product categorization, or compliance checks.

- When to avoid finetuning: rapidly changing data (e.g., inventory or promotions) where RAG is more agile.

- Data quality requirements: finetuning is only as good as the curated dataset.

The industry has seen a surge in finetuning tooling (e.g., Hugging Face TRL, Unsloth) that lowers the barrier for teams without massive GPU clusters.
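
To make the memory savings of PEFT concrete, here is a minimal arithmetic sketch. LoRA freezes a pretrained weight matrix and trains two small low-rank matrices in its place. The 4096 x 4096 layer size and rank 8 below are illustrative assumptions, not figures from the guide.

```python
def full_params(d_in: int, d_out: int) -> int:
    """Trainable weights when the whole d_out x d_in matrix is updated."""
    return d_in * d_out


def lora_params(d_in: int, d_out: int, rank: int) -> int:
    """Trainable weights in one LoRA adapter.

    LoRA learns an update B @ A, where A is (rank x d_in) and
    B is (d_out x rank); the original matrix stays frozen.
    """
    return rank * (d_in + d_out)


# Assumed example: one 4096 x 4096 projection layer, LoRA rank 8.
d = 4096
full = full_params(d, d)         # 16,777,216
lora = lora_params(d, d, rank=8)  # 65,536
ratio = lora / full               # 0.00390625, i.e. about 0.39%
```

Under these assumptions the adapter trains well under 1% of the layer's weights, which is why a single GPU is often enough.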

Retail & Luxury Implications

For retail AI teams, the finetuning vs. RAG decision is not academic. Consider:

- Brand voice consistency: A luxury house can finetune an LLM to generate product descriptions that match its unique tone—poetic for fashion, precise for watches. RAG might introduce too much noise from generic sources.

- Product knowledge: Finetuning on a brand’s catalog, care instructions, and company wikis can produce an internal assistant that answers “Can I machine-wash this silk?” with high accuracy.

- Customer service escalation: Finetuned models can handle niche return policies or warranty details with fewer hallucinations, though outputs still need verification.

However, most retail use cases today benefit more from RAG + prompt engineering, because product catalogs change seasonally. Finetuning a model quarterly may be overkill. The guide likely recommends a hybrid pattern: finetune for the stable “brain” (voice, core knowledge) and RAG for the dynamic “memory” (inventory, pricing).
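
A rough sketch of the "dynamic memory" half of that hybrid pattern: live catalog facts are retrieved at request time and placed in the prompt, so they never need to be trained into the model. The `Product` fields, the keyword matcher, and the prompt layout are all hypothetical; a real system would likely use embedding search and then send the prompt to a (possibly finetuned) model.

```python
import re
from dataclasses import dataclass
from typing import List


@dataclass
class Product:
    sku: str
    name: str
    price_eur: float
    in_stock: bool


def tokens(text: str) -> set:
    """Lowercase alphanumeric words, ignoring punctuation."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, catalog: List[Product]) -> List[Product]:
    """Naive keyword retrieval: keep products whose name shares a word with the query."""
    query_words = tokens(query)
    return [p for p in catalog if query_words & tokens(p.name)]


def build_prompt(query: str, catalog: List[Product]) -> str:
    """Put the retrieved, up-to-date facts in front of the customer question."""
    lines = [
        f"- {p.name} ({p.sku}): EUR {p.price_eur:.2f}, "
        f"{'in stock' if p.in_stock else 'out of stock'}"
        for p in retrieve(query, catalog)
    ]
    facts = "\n".join(lines) if lines else "- no matching products"
    return f"Current catalog facts:\n{facts}\n\nCustomer question: {query}"


# Made-up catalog for illustration only.
catalog = [
    Product("SC-001", "Silk Scarf", 290.0, True),
    Product("LB-002", "Leather Belt", 450.0, False),
]
prompt = build_prompt("Is the silk scarf in stock?", catalog)
```

When prices or stock change, only the catalog is updated; the model weights stay untouched.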

We recently covered related approaches in ItemRAG (retrieval for recommendation) and GraphRAG-IRL (hybrid personalization), both of which avoid full finetuning—a trend the guide probably endorses.

Business Impact

Direct savings from avoiding unnecessary finetuning can be significant:

- Training a small LoRA adapter on a 7B model costs roughly $50-100 in compute (a short training run on a single GPU).

- Full finetuning of a 70B model can exceed $10,000 per run.

- Maintenance: finetuned models need retraining when data drifts, incurring ongoing costs.
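
The arithmetic behind figures like these is simple enough to sketch. Every input below (GPU counts, hours, hourly rates, quarterly retraining) is an assumption chosen to land inside the ranges quoted above, not a measured or quoted price.

```python
def run_cost(gpu_count: int, hours: float, usd_per_gpu_hour: float) -> float:
    """Compute cost of one training run: GPUs x hours x hourly rate."""
    return gpu_count * hours * usd_per_gpu_hour


def annual_cost(cost_per_run: float, retrains_per_year: int) -> float:
    """Ongoing cost when the model is retrained as data drifts."""
    return cost_per_run * retrains_per_year


# Assumed inputs, for illustration only.
lora_run = run_cost(gpu_count=1, hours=30, usd_per_gpu_hour=2.50)   # 75.0
full_run = run_cost(gpu_count=64, hours=48, usd_per_gpu_hour=4.00)  # 12288.0

# Assumed quarterly retraining to keep up with drift.
lora_yearly = annual_cost(lora_run, retrains_per_year=4)  # 300.0
full_yearly = annual_cost(full_run, retrains_per_year=4)  # 49152.0
```

The retraining multiplier is the part that is easy to overlook: a one-off run may look cheap, while the recurring total decides whether finetuning is worth it.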

For a luxury brand running 5-10 LLM use cases, adopting the “finetune only when necessary” framework can reduce AI infrastructure spend by 30-50% while improving output reliability.

Implementation Approach

Based on current best practices (and likely the guide):

- Audit each use case: Is the required knowledge stable or dynamic?

- Start with prompt engineering + RAG; measure performance.

- Only then consider finetuning for tasks requiring deep stylistic adaptation or tightly constrained, consistent outputs.

- Use PEFT (LoRA) for most commercial applications.

- Evaluate rigorously: use automated metrics (e.g., ROUGE, BERTScore) to measure overlap with reference outputs, and human judges to check for hallucinations.
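
The audit steps above can be sketched as a small decision helper. The field names and the three outcomes are hypothetical simplifications of the checklist, not a framework taken from the guide.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    knowledge_is_stable: bool        # step 1: stable vs. dynamic knowledge
    rag_baseline_meets_target: bool  # step 2: measured prompt + RAG result
    needs_style_adaptation: bool     # step 3: deep stylistic requirements


def recommend(uc: UseCase) -> str:
    """Apply the checklist in order and return a suggested approach."""
    if uc.rag_baseline_meets_target:
        return "keep prompt engineering + RAG"
    if uc.knowledge_is_stable and uc.needs_style_adaptation:
        return "consider PEFT (LoRA) finetuning"
    return "iterate on prompts and retrieval"


# Made-up use cases for illustration.
brand_voice = UseCase("product descriptions", True, False, True)
stock_lookup = UseCase("inventory questions", False, False, False)
faq_bot = UseCase("store FAQ", True, True, False)

results = [recommend(u) for u in (brand_voice, stock_lookup, faq_bot)]
```

Here only the brand-voice case reaches the finetuning branch; the other two stay on prompts and retrieval.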

Governance & Risk Assessment

Finetuning introduces new risks:

- Data leakage: Training on customer PII is a compliance risk (GDPR, CCPA).

- Catastrophic forgetting: Finetuning can erode general capabilities; mitigate with replay data.

- Bias amplification: Domain-specific data may reinforce biases; audit training sets.

- Version control: Multiple finetuned models across teams can create fragmentation; track them in a central model registry.
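
As one illustration of the data-leakage point, training text can be scrubbed before it reaches a finetuning job. The sketch below is a minimal, assumed approach using two hand-written regular expressions; it does not catch names, addresses, or many phone formats, and is not sufficient for GDPR or CCPA compliance on its own.

```python
import re

# Simplified patterns (assumptions): real PII detection needs far broader coverage.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    text = PHONE.sub("[PHONE]", text)
    return text


sample = "Reach Jane at jane.doe@example.com or +33 1 23 45 67 89."
cleaned = redact(sample)
# cleaned == "Reach Jane at [EMAIL] or [PHONE]."
```

Note that the customer's first name survives in this example, which is exactly why a regex pass should be treated as a first filter rather than a guarantee.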

The maturity of finetuning for production is medium: well-established in research, but operational pipelines (CI/CD for ML models) are still maturing in retail.

gentic.news Analysis

This guide arrives amid growing consensus that finetuning is a surgical tool, not a default. Our coverage of ItemRAG (April 23) and GraphRAG-IRL (April 22) illustrates the industry’s pivot toward retrieval-augmented approaches for personalization and recommendations. The Columbia professor’s argument (April 21) that LLMs are limited for novel science further underscores that finetuning should stay within known data boundaries.

LLMs have appeared in 18 of our articles this week, reinforcing that the community is actively mapping the frontier between adaptation methods. For retail leaders, the key takeaway: invest in prompt engineering and RAG infrastructure first; reserve finetuning for brand-voice and high-stakes classification tasks. The guide will likely provide the concrete decision trees developers need to avoid costly missteps.