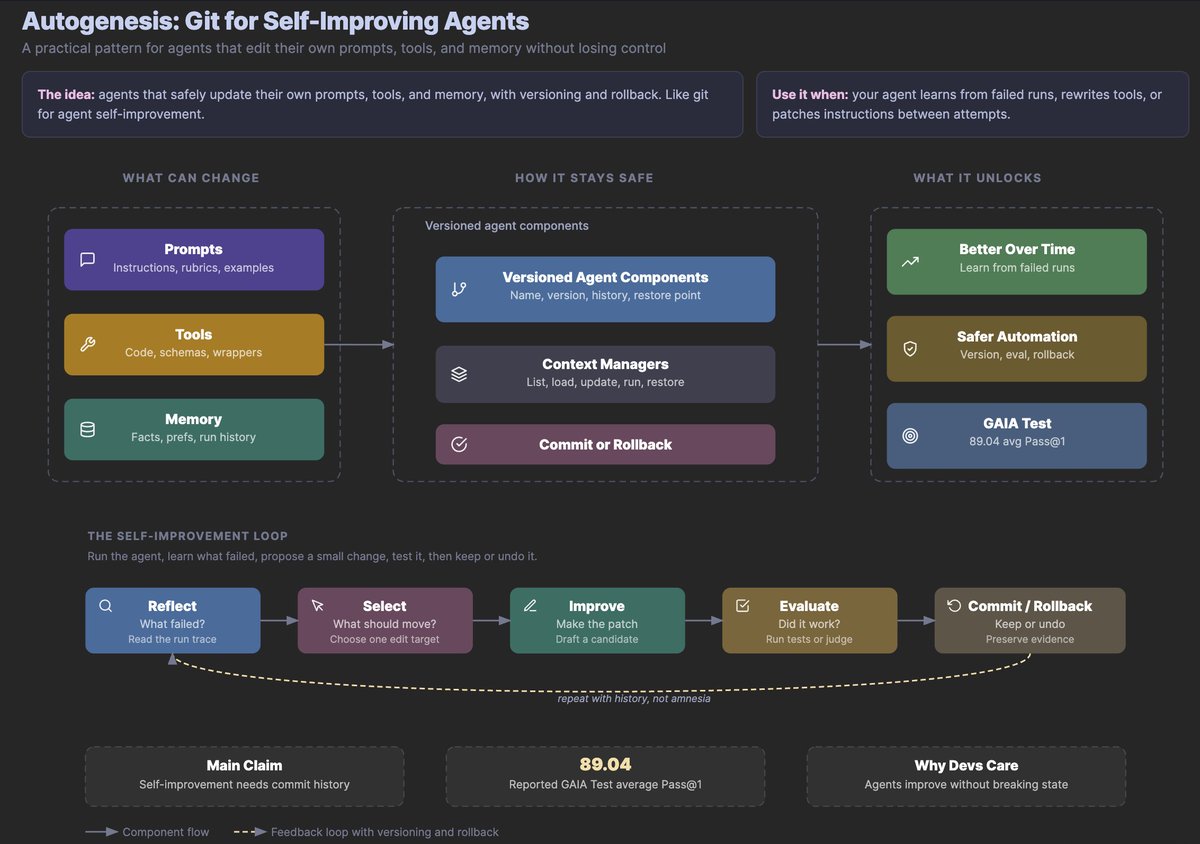

A new study challenges a common assumption about why artificial intelligence agents fail when performing complex, multi-step tasks. The widespread belief is that failures result mainly from insufficient knowledge or capability gaps. The researchers instead report that most agent failures have a simpler cause: the agents forget what they were supposed to do.

This research, highlighted by AI researcher Omar Sar in a recent analysis, suggests that the prevailing narrative about AI agent limitations may be missing the mark. The findings have significant implications for how developers approach building more reliable autonomous systems, particularly for applications requiring extended sequences of actions and decisions.

The Conventional Wisdom vs. New Evidence

For years, the AI community has largely operated under the assumption that when agents fail at complex tasks, it's because they encounter problems beyond their knowledge or capability. This perspective has driven research toward improving model knowledge, expanding training data, and enhancing reasoning capabilities.

However, the new research argues that this assumption may be incorrect. According to the study, most agent failures in long-horizon tasks (those requiring many sequential steps) occur not because the agent lacks the knowledge to solve a particular sub-problem, but because it loses track of the original instructions or goals as it progresses through the task.

The distinction matters because it suggests that improving agent reliability may require different approaches from those the field currently emphasizes.

Understanding Long-Horizon Agent Challenges

Long-horizon agents are AI systems designed to perform extended sequences of actions toward achieving complex goals. These might include planning and executing multi-step projects, conducting extended research tasks, or managing complex workflows. As AI systems become more sophisticated and are deployed in increasingly complex environments, understanding and addressing their failure modes becomes critical.

The research indicates that as agents work through longer sequences of actions, they tend to "drift" from their original objectives. This drift is not necessarily caused by a poor initial understanding of the task. Instead, it builds up step by step as the agent focuses on immediate sub-tasks and loses its connection to the overarching goal.
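The article does not say how this drift arises inside an agent. One mechanism that would produce it, assumed here purely for illustration, is a bounded working context in which the original instruction competes for space with every new observation. The minimal sketch below compares that setup with one where the goal is pinned outside the rolling window. The function `build_context`, its parameters, and the sample goal are all hypothetical.

```python
from collections import deque


def build_context(goal, observations, window, pin_goal):
    """Return the lines an agent would see after a run of observations.

    Assumption for illustration only: the agent keeps the last `window`
    items. The study is not reported to describe this mechanism.
    """
    recent = deque(maxlen=window)
    if not pin_goal:
        # The goal is stored like any other item, so it can be evicted.
        recent.append(f"GOAL: {goal}")
    for obs in observations:
        recent.append(obs)
    # A pinned goal lives outside the rolling window and is always included.
    pinned = [f"GOAL: {goal}"] if pin_goal else []
    return pinned + list(recent)


observations = [f"result of sub-task {i}" for i in range(1, 6)]
unpinned = build_context("book a refundable flight", observations, window=3, pin_goal=False)
pinned = build_context("book a refundable flight", observations, window=3, pin_goal=True)

print(any(line.startswith("GOAL:") for line in unpinned))  # False
print(any(line.startswith("GOAL:") for line in pinned))    # True
```

After five observations and a window of three, the unpinned context holds only the latest sub-task results, while the pinned one still carries the goal.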

Technical Implications for AI Development

This discovery has several important technical implications for AI system design:

Memory Architecture Re-evaluation: Current approaches to agent memory may need significant redesign to better maintain goal consistency over extended task horizons.

Attention Mechanism Enhancement: The findings suggest that attention mechanisms in transformer-based models might need modification to better balance focus between immediate actions and long-term objectives.

Training Paradigm Shifts: Rather than focusing primarily on expanding knowledge bases, training might need to emphasize goal persistence and maintaining task coherence.

Evaluation Metric Development: New metrics may be needed to specifically measure an agent's ability to maintain goal consistency throughout extended tasks.
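As a rough illustration of what a goal-consistency metric could look like, the sketch below scores a trajectory by the share of steps that served the original goal and reports where the first drift occurred. It is not the study's metric. The `Step` record and its `on_goal` label are assumptions, and the label would have to come from a human reviewer or a separate judge model.

```python
from dataclasses import dataclass


@dataclass
class Step:
    """One agent step plus a judgement of whether it served the original goal."""
    action: str
    on_goal: bool  # hypothetical label from a reviewer or judge model


def goal_consistency(steps: list[Step]) -> dict:
    """Summarise how well a trajectory stayed on its original goal."""
    total = len(steps)
    if total == 0:
        # An empty trajectory has no drift to report.
        return {"consistency": 1.0, "first_drift_step": None}
    on_goal = sum(step.on_goal for step in steps)
    # Zero-based index of the first step judged off-goal, or None.
    first_drift = next((i for i, s in enumerate(steps) if not s.on_goal), None)
    return {"consistency": on_goal / total, "first_drift_step": first_drift}


trajectory = [
    Step("open quarterly report", True),
    Step("extract revenue table", True),
    Step("reformat unrelated appendix", False),
    Step("summarise revenue trend", True),
]
print(goal_consistency(trajectory))
# {'consistency': 0.75, 'first_drift_step': 2}
```

Reporting the first drift step alongside the overall share helps separate early drift from late drift, which a single pass/fail task score would hide.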

Practical Consequences for AI Applications

The implications extend beyond theoretical research to practical applications across multiple domains:

Enterprise Automation: Companies implementing AI for complex business processes need to understand that failures may stem from goal drift rather than capability gaps, suggesting different remediation strategies.

Scientific Research Assistants: AI systems helping with extended research projects must maintain a consistent understanding of the research questions throughout potentially lengthy investigations.

Educational Tools: AI tutors and learning assistants need to maintain consistent pedagogical goals throughout extended learning sequences.

Healthcare Applications: Medical AI systems assisting with complex diagnostic or treatment planning processes must reliably maintain patient-specific goals throughout extended workflows.

The Path Forward: New Research Directions

This research opens several promising avenues for future investigation:

Goal Persistence Mechanisms: Developing new architectural components specifically designed to maintain goal awareness throughout extended task execution.

Intermediate Verification Systems: Creating systems that periodically verify an agent's continued alignment with original objectives.

Adaptive Attention Strategies: Designing attention mechanisms that dynamically balance focus between immediate actions and long-term goals.

Failure Recovery Protocols: Developing methods for agents to recognize when they've drifted from objectives and course-correct.
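Three of these directions (goal persistence, intermediate verification, and failure recovery) can be combined in a single control loop. The sketch below is one possible arrangement under stated assumptions, not a method from the study. `propose_step` and `is_aligned` are hypothetical callables standing in for a model call and a verifier, and the toy versions exist only to make the example runnable.

```python
from typing import Callable


def run_with_goal_checks(
    goal: str,
    propose_step: Callable[[str, list[str]], str],
    is_aligned: Callable[[str, str], bool],
    max_steps: int = 10,
    check_every: int = 3,
) -> list[str]:
    """Run an agent loop that pins the goal and periodically checks alignment."""
    history: list[str] = []
    for step_index in range(1, max_steps + 1):
        # Goal persistence: the original goal is passed on every step
        # instead of being left to survive in the accumulated history.
        action = propose_step(goal, history)
        # Intermediate verification: only every `check_every`-th step is checked.
        if step_index % check_every == 0 and not is_aligned(goal, action):
            # Failure recovery: discard the off-goal action and log the drift.
            history.append(
                f"[drift detected at step {step_index}; returning to goal: {goal}]"
            )
            continue
        history.append(action)
    return history


def toy_propose(goal: str, history: list[str]) -> str:
    # Hypothetical stand-in: drifts once, imitating absorption in a sub-task.
    return "tidy log files" if len(history) == 2 else f"work on: {goal}"


def toy_aligned(goal: str, action: str) -> bool:
    # Hypothetical stand-in: a real verifier would be far less literal.
    return goal in action


log = run_with_goal_checks("summarise Q3 revenue", toy_propose, toy_aligned, max_steps=4)
for entry in log:
    print(entry)
```

In this arrangement, `check_every` trades verification cost against detection delay: drift on an unchecked step goes unnoticed until a later check catches a still-drifting action.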

Broader Context in AI Safety and Reliability

The findings also contribute to ongoing conversations about AI safety and reliability. As autonomous systems become more capable and are deployed in higher-stakes environments, understanding their failure modes becomes increasingly important. This research suggests that reliable performance may depend less on expanding capabilities than previously assumed, and more on keeping a consistent understanding of objectives.

This aligns with growing recognition in the AI safety community that capability improvements alone don't guarantee reliable or safe behavior, and that architectural and training considerations play crucial roles in determining how systems behave in complex, real-world scenarios.

Conclusion: Rethinking Agent Failure

The research highlighted by Omar Sar challenges how the AI community understands agent failure. By reporting that most failures in long-horizon tasks stem from forgetting objectives rather than from insufficient knowledge, the study points toward new directions for improving AI reliability.

As AI systems take on increasingly complex and important roles across society, this understanding will be crucial for developing systems that can be trusted to maintain focus on their intended objectives throughout extended operations. The research suggests that the path to more reliable AI may lie not just in making agents smarter, but in making them more consistent in remembering what they're supposed to be doing.

Source: Analysis based on research highlighted by Omar Sar (@omarsar0) discussing new findings about AI agent failure modes in long-horizon tasks.