A study posted to arXiv reports a surprising result in AI safety training: once safety behavior is trained into large language model (LLM) agents, it persists even when developers subsequently optimize for helpfulness. The paper, "Safety Training Persists Through Helpfulness Optimization in LLM Agents," challenges conventional wisdom about how to balance competing objectives in AI development and has significant implications for autonomous AI systems.

The Agentic Shift: From Chatbots to Action-Takers

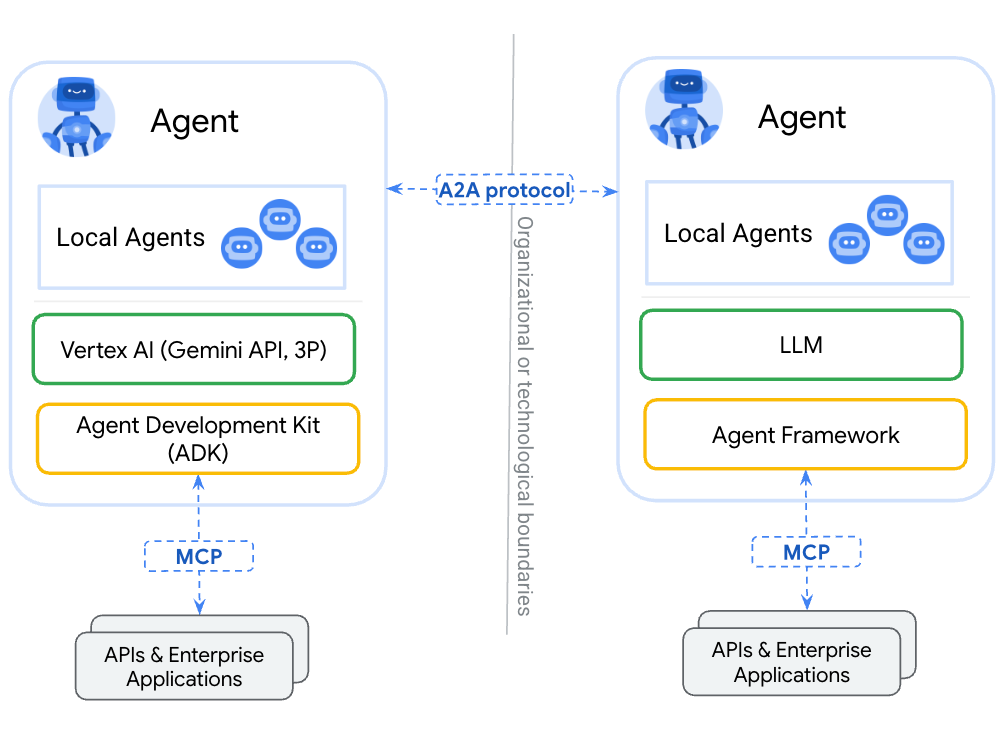

Traditional safety research has focused primarily on single-step "chat" settings, where safety typically means refusing harmful requests. As AI systems evolve into multi-step, tool-using agents that take direct actions in the world, the safety paradigm must shift accordingly. The study's authors designed their experiments specifically for "agentic" settings, where safety means preventing harmful actions taken directly by the LLM.

This distinction matters profoundly. While a chatbot refusing to provide harmful information represents one level of safety, an AI agent that might autonomously execute harmful actions through tools or APIs represents an entirely different risk category. The study's focus on this emerging reality makes its findings particularly timely as AI systems become increasingly integrated into operational environments.

The Training Experiments: Safety, Helpfulness, and Their Interactions

The research team compared three training configurations using Direct Preference Optimization (DPO): safety training alone, helpfulness training alone, and sequential training on both metrics. As expected, training on a single metric produced extreme results—highly safe but unhelpful agents, or highly helpful but unsafe ones.
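For readers unfamiliar with DPO, the objective for a single preference pair can be sketched as follows. This is the standard DPO loss from the general literature, not code from the paper; the function name, argument names, and the `beta` default are illustrative assumptions.

```python
import math


def dpo_loss(policy_chosen_logp, policy_rejected_logp,
             ref_chosen_logp, ref_rejected_logp, beta=0.1):
    """Per-pair DPO loss computed from summed token log-probabilities.

    All names and the beta default are hypothetical, chosen for illustration.
    """
    # How much more the policy favors each response than the frozen reference does.
    chosen_margin = policy_chosen_logp - ref_chosen_logp
    rejected_margin = policy_rejected_logp - ref_rejected_logp
    logits = beta * (chosen_margin - rejected_margin)
    # -log(sigmoid(logits)), written in a numerically stable softplus form.
    return math.log1p(math.exp(-abs(logits))) + max(-logits, 0.0)


# Hypothetical log-probabilities: the policy has shifted toward the chosen response.
loss = dpo_loss(-4.0, -8.0, -5.0, -7.0)
print(f"loss = {loss:.4f}")
```

Under this formulation, the difference between a "safety" run and a "helpfulness" run would lie only in which response of each pair is labeled as chosen; the loss itself is unchanged.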

The surprising finding emerged when the researchers examined sequential training. Contrary to prior assumptions in the field, safety training persisted through subsequent helpfulness optimization. This persistence suggests that safety behavior, once learned, is not easily overwritten by a competing objective.

Perhaps most intriguing, all training configurations ended up near a linear Pareto frontier (R² = 0.77), indicating a consistent trade-off between safety and helpfulness. Even simultaneous training on both metrics simply produced another point along this frontier rather than discovering a "best of both worlds" strategy, even though such strategies were present in the DPO dataset.
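To make the R² figure concrete, the sketch below fits a straight line to a handful of (safety, helpfulness) points and reports how much of the variance the line explains. The scores are invented for illustration and are not the paper's data; the function name is likewise an assumption.

```python
def linear_fit_r2(xs, ys):
    """Least-squares line y = intercept + slope * x, plus its R² value."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # R² = 1 - (residual variation) / (total variation).
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return slope, intercept, 1.0 - ss_res / ss_tot


# Hypothetical scores for five training configurations (NOT from the paper).
safety = [0.95, 0.80, 0.60, 0.45, 0.20]
helpfulness = [0.15, 0.40, 0.45, 0.70, 0.85]

slope, intercept, r2 = linear_fit_r2(safety, helpfulness)
print(f"slope={slope:.2f}, intercept={intercept:.2f}, R^2={r2:.2f}")
```

A negative slope corresponds to a trade-off: configurations that score higher on safety score lower on helpfulness. An R² of 0.77 would mean the line accounts for roughly three quarters of the variation, so individual configurations can still sit noticeably off the line.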

The Linear Frontier: Implications for AI Development

The existence of this linear trade-off frontier has profound implications for AI development practices. It suggests that simply adding more training data or using more sophisticated optimization techniques may not overcome fundamental tensions between safety and capability in agentic systems.

This finding sits alongside recent developments in the field, including structured reasoning frameworks reported to improve AI performance (as noted in recent arXiv publications). It also highlights that improved performance does not necessarily resolve safety-capability trade-offs.

The research points to what might be called "the persistence paradox": safety behavior that, once established, is difficult to remove or override, even by later training aimed at a different objective. This creates both challenges and opportunities for AI developers seeking to build systems that are both capable and responsible.

Beyond the Frontier: Why "Best of Both Worlds" Strategies Remain Elusive

The study's most puzzling finding is that even though strategies that are both safe and helpful exist in the training data, the DPO training runs did not discover them. This suggests limitations in how DPO, and possibly similar methods, navigate complex multi-objective optimization landscapes.

Several factors might explain this phenomenon:

- Representation limitations: The model architecture may not support certain types of balanced decision-making

- Optimization biases: Gradient-based methods may naturally converge toward extreme points on the frontier

- Objective interference: The mathematical formulation of the optimization problem may inherently create trade-offs

Understanding why these "best of both worlds" strategies remain undiscovered despite their presence in the data represents a crucial next step for AI safety research.
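What a "best of both worlds" strategy would look like can be stated precisely with Pareto dominance: a point that is at least as safe and at least as helpful as every point on the existing frontier, and strictly better on one axis. The sketch below is a generic dominance check over hypothetical (safety, helpfulness) scores; none of the numbers come from the paper.

```python
def dominates(a, b):
    """True if a is at least as good as b on both axes and strictly better on one."""
    return a[0] >= b[0] and a[1] >= b[1] and (a[0] > b[0] or a[1] > b[1])


def pareto_front(points):
    """Return the points that no other point dominates."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


# Hypothetical (safety, helpfulness) scores for trained agents (NOT from the paper).
trained = [(0.9, 0.2), (0.5, 0.5), (0.2, 0.9), (0.4, 0.4)]
print(pareto_front(trained))

# A hypothetical agent that is both very safe and very helpful.
candidate = (0.9, 0.9)
print(all(dominates(candidate, p) for p in trained))
```

In these terms, the study's observation is that training kept producing points like the first three rather than anything resembling the candidate.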

Practical Implications for AI Deployment

For organizations deploying AI agents in real-world settings, these findings suggest several important considerations:

- Sequencing matters: The order of training objectives affects final outcomes; in the study, safety training applied first persisted through later helpfulness optimization

- Trade-offs are inevitable: Organizations must make explicit decisions about where they want to position themselves on the safety-helpfulness frontier

- Monitoring is essential: The persistence of safety training doesn't guarantee perfect safety, requiring ongoing evaluation

- Architecture choices: Different model architectures may create different frontier shapes, offering opportunities for innovation

Future Research Directions

The paper concludes by emphasizing the need for better understanding of post-training dynamics in agentic systems. Several promising research directions emerge:

- Alternative optimization approaches: Exploring whether different training methods can discover balanced strategies

- Architectural innovations: Designing model architectures that better support multi-objective optimization

- Dynamic balancing: Developing approaches that adjust safety-helpfulness trade-offs based on context

- Human-in-the-loop: Integrating human feedback more effectively throughout the training process

As AI systems become increasingly autonomous and capable, understanding these training dynamics becomes not just academically interesting but practically essential for responsible deployment.

Source: "Safety Training Persists Through Helpfulness Optimization in LLM Agents" (arXiv:2603.02229)