As large language model (LLM) agents become the practical interface for task automation, a concrete problem has emerged: with thousands of deployable agent configurations available, how do users and developers systematically choose the right one for a specific task? This selection problem is a real bottleneck in deploying AI agents across industries.

Researchers have now addressed this gap with AgentSelect, a benchmark that reframes agent selection as narrative query-to-agent recommendation. Published on arXiv on March 4, 2026, the work presents what its authors describe as the first unified data and evaluation infrastructure for agent recommendation, intended as a reproducible foundation for studying the emerging agent ecosystem.

The Agent Selection Problem

LLM agents combine backbone models with specialized toolkits to perform complex tasks, from data analysis and content creation to software development and customer service. However, the current ecosystem lacks principled methods for choosing among what the researchers describe as "an exploding space of deployable configurations."

Existing evaluation approaches remain fragmented across tasks, metrics, and candidate pools. Traditional LLM leaderboards evaluate models in isolation, while tool and agent benchmarks often focus on specific components rather than end-to-end configurations. This fragmentation leaves a critical research gap: there is little query-conditioned supervision for learning to recommend complete agent configurations that couple a backbone model with an appropriate toolkit.

"Existing LLM leaderboards and tool/agent benchmarks evaluate components in isolation and remain fragmented across tasks, metrics, and candidate pools," the researchers note in their abstract, highlighting the need for a more integrated approach.

The AgentSelect Framework

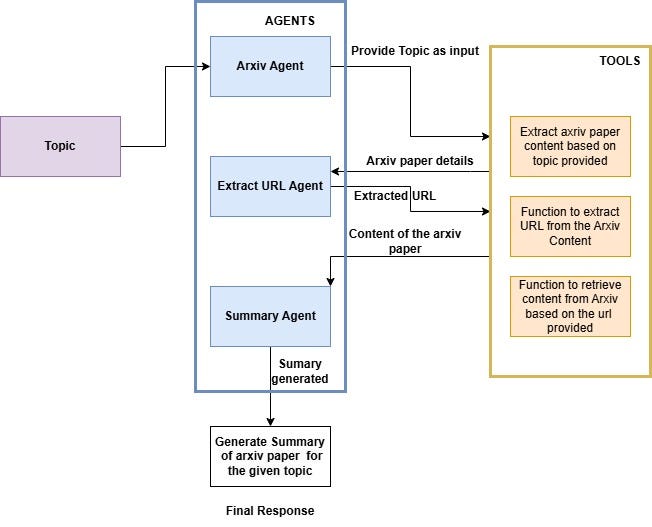

AgentSelect systematically converts heterogeneous evaluation artifacts into unified, positive-only interaction data. The benchmark comprises:

- 111,179 queries representing diverse task descriptions

- 107,721 deployable agents spanning LLM-only, toolkit-only, and compositional configurations

- 251,103 interaction records aggregated from 40+ sources

The framework organizes agents into capability profiles and treats agent selection as a recommendation problem where the system must match narrative queries (task descriptions) with appropriate agent configurations.
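To make the idea of "unified, positive-only interaction data" concrete, here is a minimal sketch of how two different kinds of evaluation artifact could be folded into one record format. The `Agent` class, the two converter functions, and the input layouts are all hypothetical illustrations; the article does not describe the actual AgentSelect schema or pipeline.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List, Optional, Tuple


@dataclass(frozen=True)
class Agent:
    """Hypothetical agent record: a backbone model, a toolkit, or both."""
    backbone: Optional[str]   # None for toolkit-only agents
    tools: FrozenSet[str]     # empty for LLM-only agents

    @property
    def kind(self) -> str:
        if self.backbone and self.tools:
            return "compositional"
        return "llm_only" if self.backbone else "toolkit_only"


Interaction = Tuple[str, Agent]  # (query text, agent that worked for it)


def positives_from_scores(rows: Iterable[Tuple[str, str, float]],
                          top_n: int = 1) -> List[Interaction]:
    """Assumed leaderboard-style input: (query, backbone, score).
    Keeps only the top_n scoring backbones per query as positives."""
    by_query = {}
    for query, backbone, score in rows:
        by_query.setdefault(query, []).append((score, backbone))
    out = []
    for query, scored in by_query.items():
        for _, backbone in sorted(scored, reverse=True)[:top_n]:
            out.append((query, Agent(backbone, frozenset())))
    return out


def positives_from_traces(rows: Iterable[Tuple[str, Iterable[str]]]) -> List[Interaction]:
    """Assumed tool-use-log input: (query, tools that solved it)."""
    return [(query, Agent(None, frozenset(tools))) for query, tools in rows]


interactions = (
    positives_from_scores([("summarize a contract", "model-a", 0.9),
                           ("summarize a contract", "model-b", 0.4)])
    + positives_from_traces([("find flight prices", ["web_search", "calculator"])])
)
for query, agent in interactions:
    print(query, "->", agent.kind)
```

Only successful pairings survive the conversion, which is what "positive-only" means here: the unified data says which agents worked for a query, not which ones failed.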

Key Findings and Analysis

The researchers' analyses reveal several important patterns in the agent ecosystem:

Regime Shift: They observe a transition from dense head reuse (where popular agents handle many tasks) to long-tail, near one-off supervision, where each task may require a specialized configuration.

Methodological Implications: This shift makes popularity-based collaborative filtering and graph neural network methods fragile, highlighting the need for content-aware capability matching approaches.
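A toy example helps show why the long-tail regime hurts popularity-based methods. The sketch below uses made-up interaction lists and two hypothetical helper functions; it is not drawn from the paper's analysis. It measures how concentrated the interactions are and how often a "recommend the most popular agent" baseline would be right.

```python
from collections import Counter


def interaction_profile(interactions):
    """Summarize how concentrated (query, agent) interactions are."""
    counts = Counter(agent for _, agent in interactions)
    singletons = sum(1 for c in counts.values() if c == 1)
    return {
        "agents": len(counts),
        # Fraction of agents that appear in exactly one interaction.
        "singleton_fraction": singletons / len(counts),
        # Share of all interactions taken by the single most used agent.
        "top1_share": counts.most_common(1)[0][1] / len(interactions),
    }


def popularity_hit_rate(train, test, k=1):
    """Recommend the k most frequent training agents to every test query."""
    top = [agent for agent, _ in Counter(a for _, a in train).most_common(k)]
    return sum(1 for _, agent in test if agent in top) / len(test)


# Dense head reuse: one agent serves most queries.
dense_train = [("q1", "a"), ("q2", "a"), ("q3", "a"), ("q4", "b")]
dense_test = [("q5", "a"), ("q6", "a")]

# Long tail: every query has its own near one-off agent.
tail_train = [("q1", "a"), ("q2", "b"), ("q3", "c"), ("q4", "d")]
tail_test = [("q5", "e"), ("q6", "f")]

print(interaction_profile(dense_train), popularity_hit_rate(dense_train, dense_test))
print(interaction_profile(tail_train), popularity_hit_rate(tail_train, tail_test))
```

In the long-tail case the test agents never appear in training, so any method that relies only on interaction counts has nothing to rank them by. A method that reads the agent's description can still score them.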

Compositional Learning: The benchmark demonstrates that synthesized compositional interactions are learnable and induce capability-sensitive behavior under controlled counterfactual edits. These compositions improve coverage over realistic agent configurations.
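One way to picture a "controlled counterfactual edit" is to remove a single tool from an agent and check whether the recommender's score for a query changes. The sketch below is an assumption about what such a check might look like: the `coverage_score` stand-in and the capability names are hypothetical, and the paper's actual protocol is not described in this article.

```python
def coverage_score(required, tools):
    """Stand-in scorer: fraction of required capabilities the agent has."""
    required = set(required)
    if not required:
        return 0.0
    return len(required & set(tools)) / len(required)


def counterfactual_delta(score_fn, required, tools, removed):
    """Score drop caused by deleting one tool from the agent's toolkit."""
    edited = set(tools) - {removed}
    return score_fn(required, tools) - score_fn(required, edited)


required = {"web_search", "python"}
tools = {"web_search", "python", "calendar"}

# Removing a tool the task needs should lower the score...
print(counterfactual_delta(coverage_score, required, tools, "python"))
# ...while removing an irrelevant tool should leave it unchanged.
print(counterfactual_delta(coverage_score, required, tools, "calendar"))
```

A capability-sensitive recommender behaves like the first case for relevant tools and like the second for irrelevant ones. Any learned scoring function with the same `(required, tools)` signature could be passed in place of `coverage_score`.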

Transfer Learning: Models trained on AgentSelect transfer to a real-world setting: evaluated on the public agent marketplace MuleRun, they yield consistent gains on a catalog unseen during training.

Technical Architecture and Implementation

AgentSelect uses a data unification pipeline that normalizes evaluation artifacts from diverse sources into a consistent format. The benchmark includes three main components:

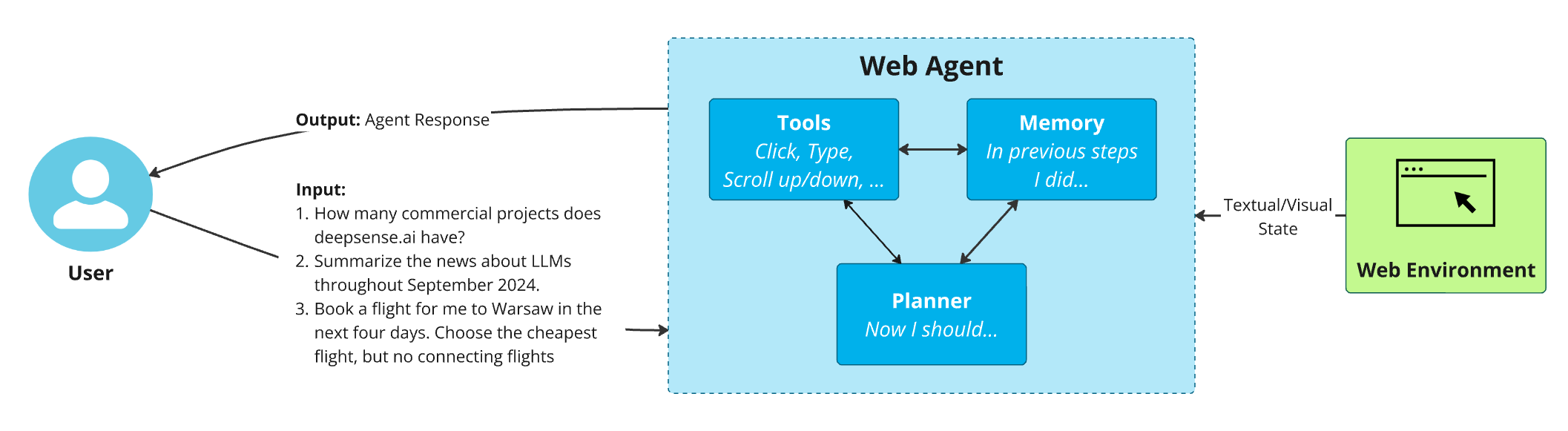

Query Representation: Narrative queries are encoded using transformer-based models to capture semantic meaning and task requirements.

Agent Profiling: Each agent is characterized by its capabilities, performance metrics, and compatibility constraints.

Interaction Modeling: The system learns from positive interactions (successful agent-task pairings) to recommend optimal configurations for new queries.
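The three components above can be sketched as a small content-aware matcher. To keep the example self-contained, a bag-of-words vector stands in for the transformer-based encoder, and the agent names and profile texts are invented; none of this reflects the authors' implementation.

```python
import math
import re
from collections import Counter


def embed(text):
    """Bag-of-words stand-in for a learned text encoder (an assumption)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(u, v):
    dot = sum(u[t] * v[t] for t in u.keys() & v.keys())
    norm = (math.sqrt(sum(c * c for c in u.values()))
            * math.sqrt(sum(c * c for c in v.values())))
    return dot / norm if norm else 0.0


def recommend(query, profiles, k=3):
    """Rank agents by similarity between the query and each agent profile."""
    q = embed(query)
    ranked = sorted(profiles,
                    key=lambda agent_id: cosine(q, embed(profiles[agent_id])),
                    reverse=True)
    return ranked[:k]


# Hypothetical agent profiles describing backbone and toolkit in plain text.
profiles = {
    "sql-agent": "backbone llm with sql database query tool",
    "web-agent": "backbone llm with web search and browser tool",
    "chat-only": "general conversation llm",
}

print(recommend("search the web for recent news", profiles, k=1))
```

Because the score depends only on the query and the profile text, a newly added agent can be ranked without any interaction history, which is the property that matters in the long-tail setting.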

The researchers developed novel evaluation metrics that consider both performance and coverage, addressing the trade-off between recommending highly specialized agents versus more general-purpose configurations.
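The article does not define the paper's metrics, so the sketch below uses two standard recommendation measures as stand-ins: recall@k for per-query performance and catalog coverage for how much of the agent pool ever gets recommended. The function names and sample data are illustrative only.

```python
def recall_at_k(recommended, relevant, k):
    """Fraction of a query's relevant agents found in the top-k list."""
    relevant = set(relevant)
    if not relevant:
        return 0.0
    return len(set(recommended[:k]) & relevant) / len(relevant)


def catalog_coverage(all_recommendations, catalog, k):
    """Fraction of the catalog that appears in at least one top-k list."""
    shown = {agent for recs in all_recommendations for agent in recs[:k]}
    return len(shown & set(catalog)) / len(catalog)


catalog = ["a", "b", "c", "d"]
recs_per_query = [["a", "b"], ["a", "c"]]

print(recall_at_k(["a", "b", "c"], {"b", "d"}, k=2))
print(catalog_coverage(recs_per_query, catalog, k=1))
print(catalog_coverage(recs_per_query, catalog, k=2))
```

A recommender that always returns the same general-purpose agent can post reasonable recall while covering very little of the catalog. Reporting the two numbers together exposes that trade-off.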

Implications for the AI Ecosystem

AgentSelect has implications for several groups:

For Developers: Provides standardized evaluation protocols for comparing agent configurations, accelerating development cycles and improving interoperability.

For Enterprises: Enables more systematic deployment of AI agents by matching specific business needs with optimal configurations, potentially reducing implementation costs and improving outcomes.

For Researchers: Establishes a common benchmark for agent recommendation research, facilitating comparison between different approaches and accelerating innovation.

For End Users: Could lead to more intuitive agent selection interfaces that understand task requirements and recommend appropriate AI assistants.

The benchmark's ability to handle compositional agents is particularly significant as the field moves toward more modular, customizable agent architectures where components can be mixed and matched based on task requirements.

Future Directions and Challenges

While AgentSelect provides a crucial foundation, several challenges remain:

Dynamic Adaptation: Agents and their capabilities evolve rapidly, requiring continuous updates to the benchmark.

Multimodal Extensions: Future versions may need to incorporate agents that process images, audio, and other data types beyond text.

Ethical Considerations: As agent recommendation systems become more sophisticated, questions about bias, transparency, and accountability will need to be addressed.

The researchers suggest that AgentSelect could evolve into a living benchmark that incorporates real-world deployment data, creating a feedback loop between research and practical applications.

Conclusion

AgentSelect changes how AI agents can be evaluated and selected. By treating agent selection as a recommendation problem and providing what the authors describe as the first unified benchmark for the task, the research team targets a bottleneck in the practical deployment of AI automation systems.

As the paper concludes, "AgentSelect provides the first unified data and evaluation infrastructure for agent recommendation, which establishes a reproducible foundation to study and accelerate the emerging agent ecosystem."

Beyond its technical contribution, the work supplies infrastructure for more systematic deployment of AI agents across industries. As the agent ecosystem expands, benchmarks like AgentSelect will matter more for navigating the available options and matching the right agent to the right task.

Source: arXiv:2603.03761v1, "AgentSelect: Benchmark for Narrative Query-to-Agent Recommendation" (Submitted March 4, 2026)