New research highlighted by Wharton professor Ethan Mollick points to a critical distinction in how artificial intelligence impacts education. One study found that simply giving students access to AI tools can lead them to inadvertently shortcut the learning process. However, both that study and a separate randomized controlled trial (RCT) found that when AI systems are specifically prompted to act as tutors, they demonstrably improve learning outcomes.

The findings, shared via social media, cite research involving team member @hamsabastani and add to a growing body of evidence that how AI educational tools are designed and prompted matters as much as whether they are available.

What the Research Shows

The core insight is not that AI is inherently good or bad for learning, but that its impact depends on how it is implemented. The first study suggests a potential pitfall: when students are given unrestricted access to generative AI (such as ChatGPT, used to answer questions or solve problems), they may use it to bypass the cognitive effort required for genuine understanding. This "shortcut" can undermine the learning objectives of an assignment or course.

In contrast, the second study, a more rigorous randomized controlled trial, demonstrates a positive effect. Here, the AI was not a general-purpose tool but was specifically designed and prompted to function as a tutor. This likely means the AI was guided to use Socratic questioning, provide hints instead of answers, assess understanding, and adapt to the student's pace, mimicking proven pedagogical techniques.

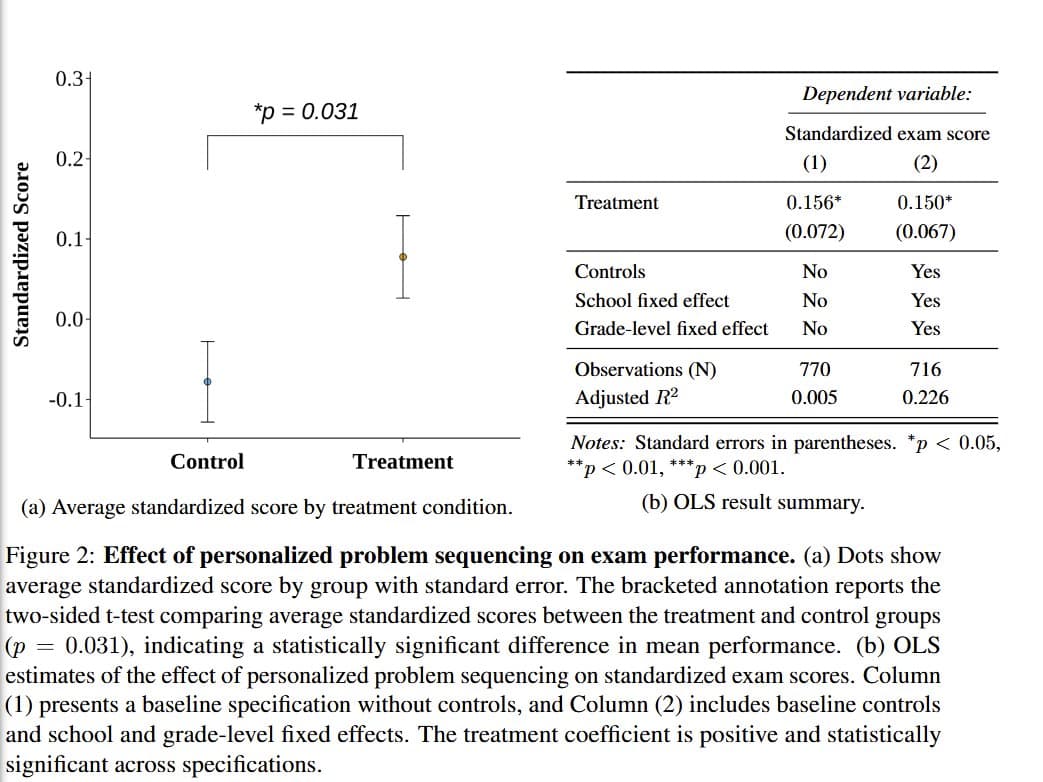

The results indicate that this structured, tutor-like interaction successfully improved learning compared to a control group, validating a targeted application of AI in education.

The Critical Role of Prompting and Design

This contrast highlights a central theme in applied AI: the output is shaped by the input. An AI model is a powerful but directionless engine. Telling it to "solve this math problem" may produce a correct answer but does little to teach the student. Telling it to "act as a patient tutor and guide me to the solution" changes the entire interaction.

For educators and edtech developers, the implication is clear. Successfully integrating AI into learning environments requires careful instructional design. It necessitates building systems or crafting prompts that:

- Scaffold learning: Break down complex problems.

- Promote metacognition: Ask students to explain their reasoning.

- Provide formative feedback: Focus on the process, not just the final answer.

- Avoid answer-giving: Especially in initial learning phases.
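The four goals above can be sketched as a small prompt builder. This is a minimal illustration, not the prompt used in either study: the `TUTOR_PRINCIPLES` mapping, the wording of each rule, and the `build_tutor_prompt` function are all hypothetical names and text chosen for this example.

```python
# Hypothetical mapping from the four design goals to prompt instructions.
# The wording is illustrative and is not taken from the research.
TUTOR_PRINCIPLES = {
    "scaffold": "Break the problem into small steps and work on one step at a time.",
    "metacognition": "Ask the student to explain their reasoning before you respond.",
    "formative_feedback": "Comment on the student's process, not only on whether the result is right.",
    "no_answers": "Do not state the final answer; offer a hint or a guiding question instead.",
}


def build_tutor_prompt(subject: str, principles: dict[str, str] = TUTOR_PRINCIPLES) -> str:
    """Assemble a system prompt from the selected tutoring principles."""
    rules = "\n".join(f"- {text}" for text in principles.values())
    return f"You are a patient {subject} tutor.\nFollow these rules:\n{rules}"


if __name__ == "__main__":
    print(build_tutor_prompt("algebra"))
```

Keeping each principle as a separate entry makes it easy to drop or reword one rule (for example, relaxing the no-answers rule in later learning phases) and test how the tutor's behavior changes.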

gentic.news Analysis

This research arrives amid intense debate and experimentation about AI's role in education, a sector where organizations like Khan Academy (with its Khanmigo AI tutor) and Duolingo have been early and aggressive integrators. The findings provide empirical weight to a design philosophy already in motion: that AI must be constrained and guided to be pedagogically effective. They directly support the approach of Khanmigo, which is built to act as a guide and coach rather than an answer oracle.

The results also offer a clear counterpoint to common, fear-based narratives about AI enabling cheating. Instead, they frame a more nuanced challenge: the risk isn't just dishonesty, but the well-intentioned use of tools that can, by being too helpful, erode the learning journey. This aligns with broader discussions in the AI community about alignment, that is, ensuring AI systems act in accordance with human goals. In this case, the goal is deep learning, not just task completion.

For practitioners, this underscores that deploying LLMs in education is not a simple plug-and-play task. It requires a deep understanding of pedagogy to craft the guardrails and prompts that will keep the AI in a beneficial "tutor" mode. The next frontier will be measuring the long-term retention and transfer of learning from AI-tutored sessions compared to human-led instruction.
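One simple form a guardrail could take is a check that the tutor's reply does not reveal the final answer. The sketch below is a naive heuristic for numeric answers only, under the assumption that the expected answer is known in advance; `leaks_answer`, `guard_reply`, and the fallback hint are hypothetical and do not describe how any existing platform works.

```python
import re


def leaks_answer(reply: str, final_answer: str) -> bool:
    """Return True if the reply contains the numeric answer as a standalone number.

    The lookarounds stop "42" from matching inside "142", "420", or "42.5".
    """
    pattern = r"(?<!\d)(?<!\d\.)" + re.escape(final_answer) + r"(?!\d)(?!\.\d)"
    return re.search(pattern, reply) is not None


def guard_reply(reply: str, final_answer: str) -> str:
    """Swap a leaking reply for a generic guiding question; pass others through."""
    if leaks_answer(reply, final_answer):
        return "Let's slow down. What do you think the next step is?"
    return reply


if __name__ == "__main__":
    print(guard_reply("So x = 42.", "42"))
    print(guard_reply("Try dividing both sides by 2.", "42"))
```

A string match like this misses paraphrased answers such as "forty-two", so in practice it would be one layer among several rather than a complete safeguard.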

Frequently Asked Questions

Can AI really replace human tutors?

The research suggests AI can effectively augment and scale certain tutoring functions, particularly for foundational knowledge and practice. However, it does not address the complex motivational, emotional, and deeply interpersonal aspects of learning that human tutors excel at. The most likely near-term future is hybrid models, where AI handles drill-and-practice and initial guidance, freeing human educators for higher-level mentorship.

What's the difference between an AI tutor and just asking ChatGPT for help?

The difference is entirely in the prompting and system design. A standard ChatGPT query like "What's the answer to this calculus problem?" provides a solution. An AI tutor uses a foundational prompt such as "You are a patient math tutor. Do not give the student the answer. Ask guiding questions to help them discover the solution themselves. Assess their understanding at each step." The latter requires careful design and testing to ensure the AI consistently adheres to the tutoring role.
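In code, the difference can come down to whether a system message is placed in front of the student's question. The sketch below assumes the role/content message format used by many chat-style LLM APIs. `build_messages` is a hypothetical helper, and no specific vendor API is called.

```python
# The tutor prompt quoted above, stored as a reusable constant.
TUTOR_SYSTEM_PROMPT = (
    "You are a patient math tutor. Do not give the student the answer. "
    "Ask guiding questions to help them discover the solution themselves. "
    "Assess their understanding at each step."
)


def build_messages(question: str, tutor_mode: bool) -> list[dict[str, str]]:
    """Return a chat-style message list, with or without the tutor system prompt."""
    messages = []
    if tutor_mode:
        messages.append({"role": "system", "content": TUTOR_SYSTEM_PROMPT})
    messages.append({"role": "user", "content": question})
    return messages


if __name__ == "__main__":
    question = "What's the answer to this calculus problem?"
    print(build_messages(question, tutor_mode=False))
    print(build_messages(question, tutor_mode=True))
```

The student's question is identical in both cases; only the framing the model receives changes.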

How can educators prevent students from using AI to shortcut learning?

The research implies that prohibition is less effective than redirection. Instead of banning AI, educators can design assignments where the process is the product (e.g., "submit your dialogue with an AI tutor explaining this concept"), use AI tools that are locked into a tutoring mode, or focus assessment on in-class, AI-free demonstrations of skills built with the aid of AI tutoring outside of class.

Are there specific AI tutoring platforms available now?

Yes, several platforms are building on this concept. Khan Academy's Khanmigo is a leading example, acting as a guide within its learning system. Other startups are developing specialized AI tutors for coding, language learning, and test preparation. The key is to look for platforms that emphasize dialogue, questioning, and step-by-step guidance over simply delivering answers.