A first-of-its-kind field study from researchers at the University of California, San Diego has delivered a counterintuitive finding: AI-powered coding assistants can slow down experienced software developers rather than accelerate their work.

The study, which observed developers in their natural work environment, found that experienced programmers using AI tools like GitHub Copilot took up to 50% longer to complete coding tasks than when working without AI assistance. This challenges the widespread industry assumption that AI coding tools provide universal productivity benefits.

Key Takeaways

- A real-world study from UC San Diego shows AI coding assistants like GitHub Copilot can slow down experienced developers, increasing task time by up to 50%.

- This challenges the assumption that AI tools universally boost productivity for all skill levels.

What the Study Measured

The UC San Diego researchers conducted what they describe as the "first real field study of experienced developers" using AI coding assistants in their actual workflow. Unlike controlled lab experiments or surveys, this study observed developers working on real tasks in their normal development environment.

Key findings from the study include:

- Increased completion time: Experienced developers took up to 50% longer to finish coding tasks when using AI assistants

- Cognitive overhead: The need to evaluate, verify, and debug AI-generated code created additional mental load

- Context switching costs: Interacting with the AI tool disrupted developers' natural workflow and concentration

- Debugging burden: AI-generated code often contained subtle bugs or required significant modification, offsetting any time saved in initial code generation

How It Works: The Productivity Paradox

The study reveals what might be called the "AI productivity paradox" for experienced developers. While AI tools can quickly generate code snippets, several factors contribute to the net slowdown:

- Verification overhead: Experienced developers must carefully review AI-generated code for correctness, security, and alignment with existing codebase patterns

- Mental context switching: The back-and-forth interaction with the AI assistant disrupts the developer's flow state

- Debugging complexity: AI-generated code often contains subtle logical errors that are harder to debug than self-written code

- Specification refinement: Developers spend additional time crafting and refining prompts to get useful AI output

For junior developers or those working with unfamiliar languages or frameworks, the AI assistant may still provide net benefits by reducing the need to look up syntax or common patterns. However, for experienced developers who already have these patterns internalized, the AI tool adds friction rather than removing it.

Why It Matters for Engineering Teams

This research has immediate practical implications for how engineering teams adopt and measure AI coding tools:

Performance measurement: Companies tracking developer productivity need more nuanced metrics than simple lines of code generated or time-to-first-draft. The quality of final output and total time to production-ready code may be more important indicators.

Tool customization: AI coding assistants may need different default behaviors for developers of different experience levels. Experienced developers might benefit from more conservative suggestions or the ability to disable certain types of auto-completion.

Training and onboarding: The study suggests that developers need specific training on how to effectively integrate AI tools into their workflow without disrupting their natural problem-solving process.

Cost-benefit analysis: Organizations investing in AI coding tools should consider that the return on investment may vary significantly across their developer population, with potentially negative returns for their most experienced engineers.
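As a rough illustration of the measurement point above, the sketch below compares median time to production-ready code with and without AI assistance, split by experience cohort. The record layout, the cohort labels, and every number in `TASKS` are invented for the example and do not come from the study; a real analysis would also need to control for task difficulty.

```python
from collections import defaultdict
from statistics import median

# Hypothetical task records: (experience cohort, used AI?, minutes until
# the change was production-ready). All values are made up for illustration.
TASKS = [
    ("senior", False, 60), ("senior", False, 80),
    ("senior", True, 90), ("senior", True, 120),
    ("junior", False, 150), ("junior", False, 170),
    ("junior", True, 120), ("junior", True, 136),
]


def relative_change_by_cohort(tasks):
    """Return {cohort: (median_with_ai - median_without) / median_without}."""
    times = defaultdict(lambda: {True: [], False: []})
    for cohort, used_ai, minutes in tasks:
        times[cohort][used_ai].append(minutes)

    result = {}
    for cohort, by_condition in times.items():
        if not by_condition[True] or not by_condition[False]:
            continue  # both conditions are needed for a comparison
        baseline = median(by_condition[False])
        result[cohort] = (median(by_condition[True]) - baseline) / baseline
    return result


for cohort, change in relative_change_by_cohort(TASKS).items():
    # Positive values mean tasks took longer with AI assistance.
    print(f"{cohort}: {change:+.0%} time to production-ready code with AI")
```

With these invented figures the senior cohort comes out at +50% and the junior cohort at -20%, which is the kind of split a single team-wide average would hide.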

gentic.news Analysis

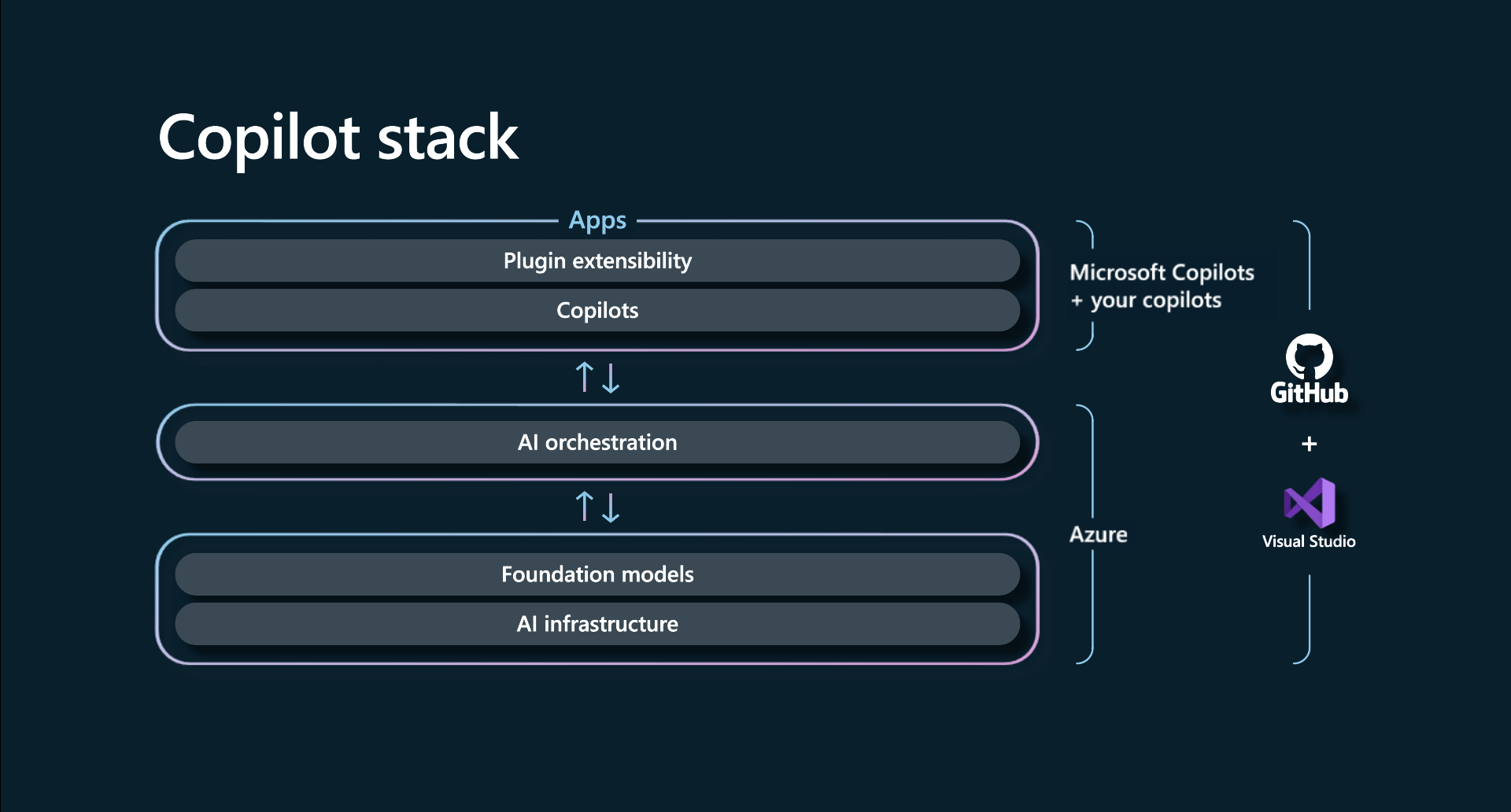

This UC San Diego study arrives at a critical moment in the AI-assisted development tool market. Over the past two years, tools like GitHub Copilot, Amazon CodeWhisperer, and Tabnine have seen explosive adoption, with GitHub reporting over 1.3 million paid Copilot users as of early 2024. The dominant narrative has been one of universal productivity gains, with GitHub's own studies claiming developers code up to 55% faster with Copilot.

However, this new research suggests a more nuanced reality. It aligns with emerging concerns we've covered at gentic.news about AI tool over-reliance and skill atrophy. In our December 2025 analysis of Stack Overflow's declining traffic, we noted that developers were increasingly turning to AI assistants for questions they previously would have researched themselves, potentially weakening their fundamental understanding.

The study also connects to broader trends in human-AI collaboration research. As we reported in our coverage of Google's "Human-Centered AI" initiative in January 2026, effective AI tools need to adapt to human workflows rather than forcing humans to adapt to AI limitations. The UC San Diego finding that AI tools disrupt experienced developers' flow states highlights exactly this mismatch.

Looking forward, this research suggests that the next generation of AI coding tools may need to incorporate developer experience detection and adaptive suggestion models. Rather than offering the same assistance to all users, tools might identify when a developer is in a deep work state and reduce interruptions, or recognize when they're working in a familiar codebase versus exploring new territory.
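One way to picture such adaptive behavior is a small policy function that picks how intrusive suggestions should be. This is only a sketch of the idea, not a description of any existing tool: the `EditorSignals` fields, the mode names, and the thresholds are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class EditorSignals:
    """Hypothetical signals an editor plugin might collect."""
    typing_streak_seconds: float  # time spent typing without a long pause
    prior_edits_to_file: int      # rough proxy for familiarity with the code


def suggestion_mode(signals, focus_streak_s=120.0, familiar_edits=20):
    """Choose a suggestion mode; the default thresholds are guesses."""
    in_deep_work = signals.typing_streak_seconds >= focus_streak_s
    familiar = signals.prior_edits_to_file >= familiar_edits
    if in_deep_work:
        return "on_request"    # stay silent until explicitly invoked
    if familiar:
        return "conservative"  # familiar code: keep suggestions minimal
    return "proactive"         # unfamiliar territory: offer more help


# A developer three minutes into an uninterrupted streak is left alone.
print(suggestion_mode(EditorSignals(typing_streak_seconds=180, prior_edits_to_file=3)))
```

Checking the deep-work signal first reflects the article's emphasis on flow state: the policy avoids interrupting a focused developer even in unfamiliar code, where suggestions would otherwise be more proactive.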

Frequently Asked Questions

Do AI coding assistants help any developers?

Yes, the study suggests that less experienced developers or those working with unfamiliar technologies may still benefit from AI assistance. The slowdown effect was most pronounced among developers with deep expertise in the language and domain they were working in.

Should experienced developers stop using AI coding tools?

Not necessarily, but they should be more selective about when and how they use them. The research suggests that AI tools might be most helpful for boilerplate code, documentation, or exploring alternative approaches, but counterproductive for core logic that experienced developers can write quickly from memory.

How was this study different from previous research on AI coding assistants?

Previous studies were often conducted in laboratory settings with artificial tasks, or relied on self-reported survey data. This UC San Diego study observed experienced developers in their actual work environment completing real tasks, making it more reflective of true productivity impacts.

What should engineering managers take away from this research?

Managers should avoid assuming that AI coding tools automatically improve productivity for all team members. They should track metrics beyond simple code generation speed, provide training on effective AI tool use, and be open to developers opting out of certain AI features if they find them disruptive to their workflow.