A developer has highlighted a new tool for AI coding agents called visual-explainer. The tool is presented as an installable "agent skill" designed to generate visual diagrams from code, directly addressing the common practice of using ASCII art to represent architecture or flow within codebases and agent conversations.

The brief announcement suggests the skill can be integrated into an agent's capabilities. Once installed, the agent can presumably be prompted to produce a diagrammatic explanation of a code snippet, module, or system design, outputting a rendered visual instead of the hand-drawn ASCII diagrams that are common in documentation and developer communications.

What Happened

The source is a social media post from a developer account, @aiwithjainam, stating that the tool "just killed ASCII art diagrams in coding agents." The tool is named visual-explainer. The post instructs users to "Install it as an agent skill" to evaluate code, implying a straightforward integration process for AI coding assistants.

Context

ASCII art diagrams, which use characters like -, |, +, and / to draw boxes and arrows, have long been a low-fidelity, quick-and-dirty way for developers to sketch system architecture, data flow, or state machines directly in code comments, terminals, or chat interfaces. With the rise of AI coding agents (like GitHub Copilot, Cursor, or Claude Code), this practice has extended into human-agent interactions, where a developer might ask the agent to "draw" an ASCII diagram to explain a concept.
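To make the practice concrete, here is a minimal Python sketch of the kind of character-based flow diagram described above. The function names `ascii_box` and `ascii_flow` are hypothetical and chosen for illustration only; they are not part of visual-explainer or any agent.

```python
def ascii_box(label: str) -> list[str]:
    """Return the three text rows of a box drawn with +, - and |."""
    border = "+" + "-" * (len(label) + 2) + "+"
    return [border, f"| {label} |", border]


def ascii_flow(labels: list[str]) -> str:
    """Join boxes left to right, with an arrow on the middle row."""
    boxes = [ascii_box(label) for label in labels]
    # Only the middle row carries an arrow; the other rows are padded with spaces.
    connectors = ["     ", " --> ", "     "]
    rows = [connectors[row].join(box[row] for box in boxes) for row in range(3)]
    return "\n".join(rows)


if __name__ == "__main__":
    print(ascii_flow(["API", "DB"]))
```

Even this two-box case needs manual width bookkeeping, which hints at why larger ASCII diagrams become tedious to edit.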

Visual-explainer appears to be a targeted solution that automates this diagram generation, producing a rendered visual rather than character art. This aligns with a broader trend of enhancing AI coding tools with multimodal capabilities, moving from pure text to integrated visuals for better comprehension and documentation.

gentic.news Analysis

This development, while light on technical specifics, points to a clear and growing niche: equipping AI coding agents with specialized, task-oriented skills beyond raw code generation. The framing of "killing ASCII art" is hyperbolic but identifies a genuine friction point. ASCII diagrams are functional but brittle and time-consuming to create and parse. Automating this into a clean visual output is a logical productivity enhancement.

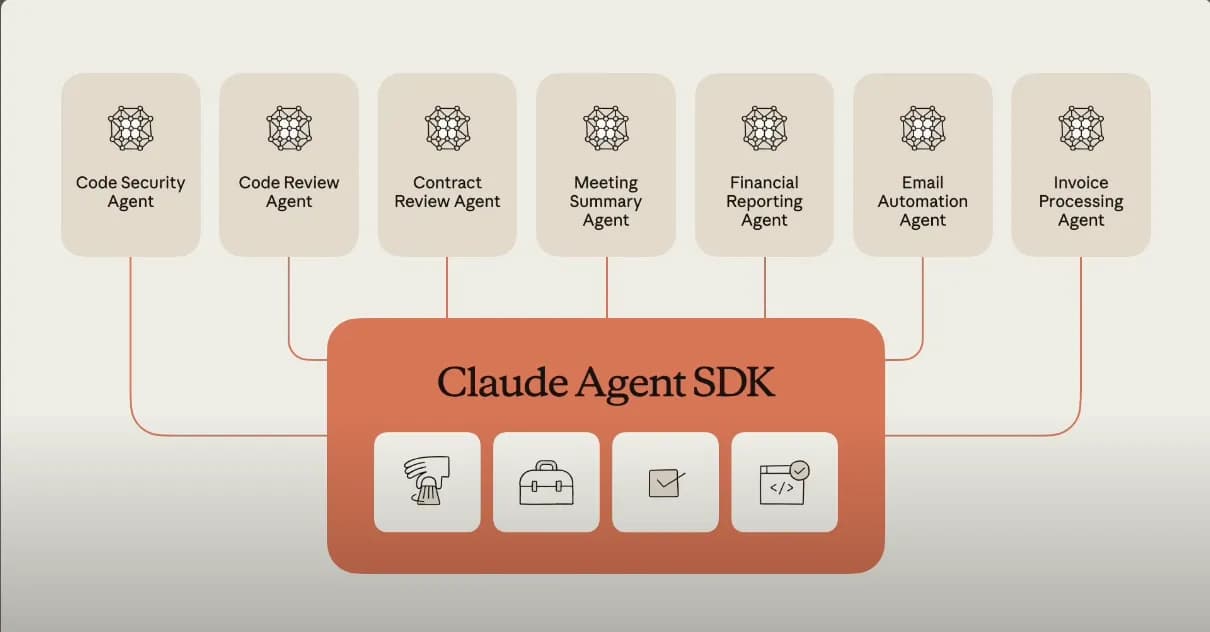

This trend of agent skill marketplaces or plug-in ecosystems is accelerating. We've covered similar movements with platforms like OpenAI's GPT Store and Cline's specialist agents, where core models are augmented with targeted capabilities for coding, research, or design. Visual-explainer fits neatly into this paradigm, treating diagram generation as a discrete skill that can be summoned on demand.

From a technical implementation perspective, the key questions concern the underlying model and output format. Is it using a vision-language model to interpret code and generate Graphviz/DOT notation, Mermaid.js code, or a raster image? The fidelity, accuracy, and customizability of the generated diagrams will determine whether it is genuinely useful or merely a novelty. If it can reliably produce accurate sequence diagrams, class hierarchies, or network topologies from complex code, it could become a staple in an engineer's agent toolkit. However, if it produces generic or incorrect visuals, it will remain a gimmick. The success of such tools hinges on a semantic understanding of the code, not just syntactic parsing.
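As a point of reference for the text-based output formats mentioned above, the following is a minimal sketch of emitting Mermaid class-diagram text from Python source using only the standard-library `ast` module. It is purely syntactic, and it is an illustration under stated assumptions, not a description of how visual-explainer works: the announcement gives no implementation details. The function name `class_hierarchy_to_mermaid` and the `SAMPLE` input are hypothetical.

```python
import ast


def class_hierarchy_to_mermaid(source: str) -> str:
    """Return Mermaid classDiagram text for the classes defined in `source`."""
    tree = ast.parse(source)
    lines = ["classDiagram"]
    for node in ast.walk(tree):
        if not isinstance(node, ast.ClassDef):
            continue
        lines.append(f"    class {node.name}")
        for base in node.bases:
            # Only plain names (e.g. `Shape`) are handled; dotted or
            # subscripted bases are skipped in this sketch.
            if isinstance(base, ast.Name):
                lines.append(f"    {base.id} <|-- {node.name}")
    return "\n".join(lines)


# Hypothetical input used only to exercise the sketch.
SAMPLE = """
class Shape:
    pass

class Circle(Shape):
    pass
"""

if __name__ == "__main__":
    print(class_hierarchy_to_mermaid(SAMPLE))
```

A parser-only approach like this captures explicit inheritance but nothing about runtime behavior or intent, which is the gap between syntactic parsing and semantic understanding noted above.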

Frequently Asked Questions

What is visual-explainer?

Visual-explainer is an installable skill or plugin for AI coding agents. Its primary function is to automatically generate visual diagrams from code snippets or system descriptions, aiming to remove the need for hand-drawn ASCII art diagrams in development workflows.

How do I use visual-explainer?

Based on the announcement, you would install it as a skill within your AI coding agent's framework. The exact integration method would depend on the specific agent platform you are using (e.g., Cursor, Windsurf, an OpenAI GPT). Once installed, you would likely use a natural language command like "Explain this module with a diagram" to trigger the visual generation.

What's wrong with ASCII art diagrams?

Nothing is inherently "wrong" with them: they are a lightweight, text-based communication tool. However, they are tedious to create by hand, difficult to modify, and can be hard to read in complex layouts. An automated visual diagram generator promises faster, clearer, and more standardized visual explanations, potentially improving documentation and team communication.

Which coding agents support skills like this?

The ecosystem for agent skills is still evolving. Some advanced AI-powered IDEs like Cursor and platforms built around Claude or GPT-4 have begun supporting extensible agent behaviors or custom instructions that could accommodate a skill like visual-explainer. The trend is toward more open, pluggable architectures for AI assistants.