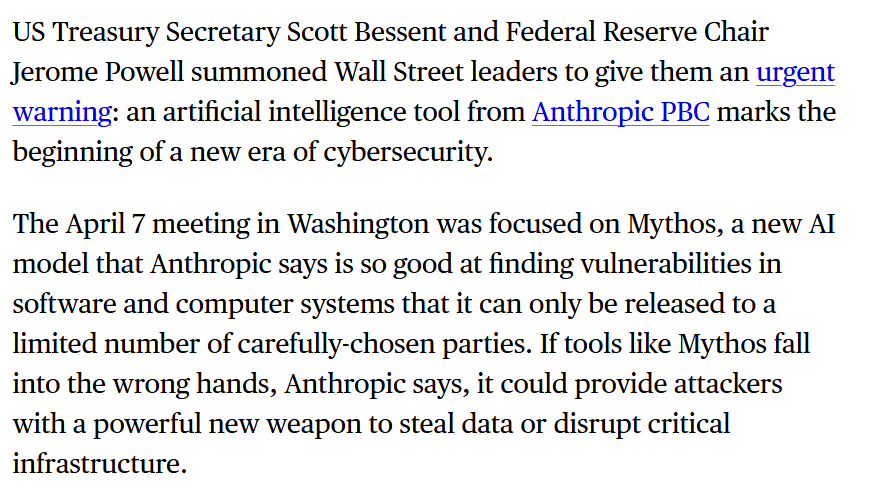

According to a report by the Financial Times, the White House is moving to grant major U.S. federal agencies access to a specially modified version of Anthropic's Mythos AI model. The system is engineered to proactively hunt for dangerous software vulnerabilities in critical systems—such as operating systems, browsers, and servers—before malicious actors can discover and exploit them.

This initiative signals a significant shift in the federal government's approach to cybersecurity, treating advanced AI as a double-edged sword: a capability too powerful to ignore for national defense, yet too dangerous to disseminate without strict controls.

Key Takeaways

- The White House is providing major federal agencies with a modified version of Anthropic's Mythos AI model to autonomously find and patch software flaws.

- This represents a strategic, high-stakes adoption of AI for national cyber defense.

What Happened

The Financial Times reports that the administration is moving to give key agencies access to this modified Anthropic model. The model's core function is defensive: using AI to autonomously scan code and systems for weaknesses so that they can be patched before attackers exploit them. The goal is to drastically shorten the time between the discovery of a vulnerability and its remediation, a critical factor in modern cyber warfare.

The report highlights the government's nuanced stance: AI for cyber defense is seen as strategically essential, but its distribution requires "tight control." This suggests the deployed version of Mythos will likely have usage restrictions, monitoring, and possibly air-gapped or highly secured deployment environments distinct from a public API.

Context: The Mythos Model and Anthropic's Positioning

Anthropic's Mythos is part of the company's suite of AI models, which is headlined by the Claude family. While less publicly discussed than Claude, Mythos appears to be specialized for security and reasoning tasks. This move aligns with Anthropic's established focus on building safe, steerable AI through its Constitutional AI approach, principles that are likely paramount for a government partner.

This development follows a broader trend of the U.S. government deepening its partnerships with leading AI labs for national security purposes. It also comes amid intense global competition in AI, particularly with China, where AI-enabled cyber capabilities are a major area of focus.

The Strategic Calculus: AI as a Cyber "Vaccine"

The logic behind the deployment is preventative. Instead of waiting for hackers to find a flaw (a zero-day) and then racing to patch it, agencies could use Mythos to continuously audit their own and critical national infrastructure codebases. If successful, this would transform AI from a tool for analyzing attacks post-breach into a proactive shield.

However, the same capability is inherently dual-use. A model that excels at finding flaws could, in the wrong hands, be used to exploit them. The White House's emphasis on "tight control" directly addresses this risk, implying a carefully managed, likely non-commercial deployment model for select agencies.

Potential Implications and Questions

- Scope of Access: Which agencies will get access? Likely candidates include the Department of Defense (DoD), the Cybersecurity and Infrastructure Security Agency (CISA), and the intelligence community.

- Model Modification: What specific modifications were made to the base Mythos model for government use? Possibilities include enhanced audit trails, restrictions on output formats, and training on classified or sensitive vulnerability data.

- Operational Tempo: Could this enable continuous, automated vulnerability discovery programs, fundamentally changing how government software is secured?

- Vendor Landscape: This represents a major, high-profile win for Anthropic in the government contracting space, potentially setting a precedent for other AI labs like OpenAI, Google, or specialized cybersecurity AI firms.

gentic.news Analysis

This is a concrete, operational step in the militarization and governmental adoption of frontier AI models, a trend we have tracked closely. It follows the pattern of the U.S. government seeking controlled partnerships with domestic AI champions, as seen in the Department of Defense's ongoing collaborations with various tech firms on Project Maven and similar AI integration efforts. The choice of Anthropic is particularly telling. Unlike some competitors, Anthropic has built its brand on safety and controllability (its "Constitutional AI" approach). For a risk-averse government entity deploying a potentially powerful dual-use cyber tool, Anthropic's philosophical alignment may have been as important as its technical benchmarks.

This move also directly relates to the escalating AI cyber arms race. In March 2025, we covered the rise of AI-powered penetration testing tools from startups like Synacktiv and HiddenLayer, which are commercializing similar capabilities. The U.S. government is now effectively entering that race with a state-sponsored, top-tier model. The critical distinction is control: this isn't a tool being sold on the open market. It creates a bifurcated landscape where nation-states with access to frontier models may develop cyber capabilities that outpace those available to even well-funded criminal groups, potentially altering the balance of power in cyber conflict.

Finally, this deployment will be a critical test case for real-world AI safety and security protocols. The government will need to answer hard questions about model provenance, training data hygiene (to avoid poisoning), and operational security to prevent the model itself from becoming a target. The lessons learned here will inevitably filter back into the commercial sector, influencing how all high-stakes AI systems are deployed.

Frequently Asked Questions

What is the Anthropic Mythos model?

Mythos is a specialized AI model developed by Anthropic, the company behind Claude. While full public specifications are limited, it is understood to be engineered for deep reasoning and analysis tasks, with this specific application focused on autonomously finding software vulnerabilities in complex codebases.

Which government agencies will get access to the AI?

The Financial Times report specifies "major US agencies" but does not list them. Based on the mission, the most likely recipients are agencies with national defense and critical infrastructure protection mandates, such as the Department of Defense (DoD), the Cybersecurity and Infrastructure Security Agency (CISA), the National Security Agency (NSA), and potentially the FBI.

Why is the government choosing Anthropic for this role?

Anthropic has distinguished itself with a strong focus on AI safety, alignment, and controllability through its Constitutional AI framework. For a sensitive, high-risk application like AI-driven cyber defense, the government likely prioritizes a vendor with a demonstrated commitment to building predictable and steerable systems, reducing the risk of unintended behavior.

Could this AI be used for offensive hacking?

The core capability, finding software flaws, is inherently dual-use, so in principle it could be turned to offensive ends. The White House's emphasis on "tight control" is aimed at preventing that kind of misuse. The modified model is intended to be a defensive tool for patching vulnerabilities faster than attackers can find them, and its deployment within secured government environments is meant to minimize the risk of the technology being repurposed for offensive operations.