OpenAI is preparing to launch a new AI model with advanced cybersecurity capabilities, internally referred to as "Mythos," according to an Axios report. In a significant shift from its typical broad-release strategy, the company will not make this model publicly available. Instead, it plans a limited, staggered rollout to a select group of vetted companies and partners.

This approach directly mirrors the strategy employed by rival Anthropic, which recently launched its own "Mythos Preview" for a small, trusted cohort. The move signals a new phase in AI deployment, where models capable of sophisticated, autonomous tasks—particularly in cybersecurity and offensive hacking—are treated with the caution of a controlled vulnerability disclosure.

What's New: A Closed-Door AI for Security

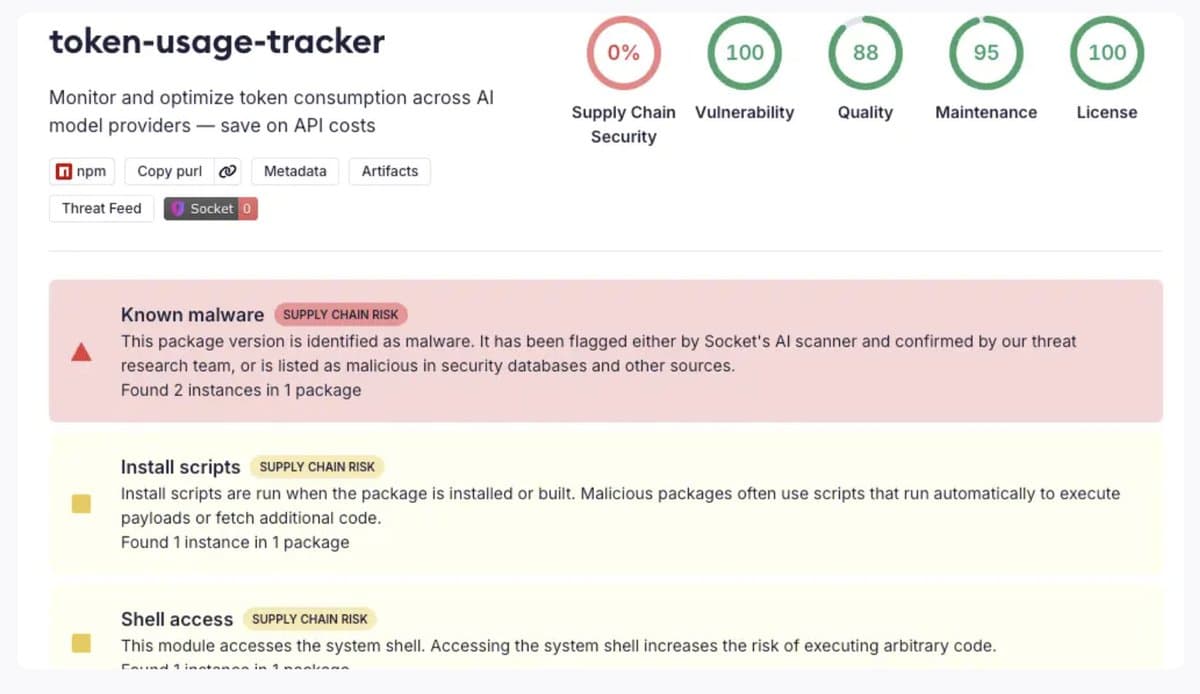

OpenAI's "Mythos" is described as a model with "advanced cybersecurity capabilities." While specific technical details are not provided in the initial report, the context suggests capabilities that could include automated vulnerability discovery, penetration testing, or threat analysis at a level that raises dual-use concerns. The core news is not just the model's existence, but its release protocol.

Key aspects of the launch plan:

- No Public Release: The model will not be available via ChatGPT or the public API.

- Staggered Rollout: Access will be granted gradually, not all at once.

- Vetted Partners: Initial availability will be restricted to a pre-approved list of companies, likely in the cybersecurity and enterprise sectors.

The Strategic Mirror: Following Anthropic's Lead

This decision places OpenAI on a path recently charted by Anthropic. In late 2025, Anthropic began its Mythos Preview, offering early access to its most capable model to a handful of trusted organizations under strict confidentiality and use-case agreements. The goal was to study the model's real-world impact and potential misuse in a controlled environment before considering any wider release.

OpenAI's adoption of a nearly identical strategy—even using the same internal codename—highlights a converging industry consensus. When models reach a threshold of autonomous capability in sensitive domains like cybersecurity, the default move-fast-and-break-things playbook is being replaced by one of extreme caution.

The Underlying Shift: AI as a Controlled Vulnerability

The report underscores a critical trend: "More and more AI models are now capable enough at autonomous hacking that their makers are treating releases like responsible vulnerability disclosure."

The framing is significant: AI labs are starting to view their most powerful creations not merely as products but as potential systemic risks. The process resembles how a security researcher confidentially discloses a critical software flaw to a vendor before going public, giving the vendor time to develop mitigations. In this case, the "flaw" is the model's inherent capability, and the "mitigation" is the controlled, supervised environment in which it is first deployed.

What this means in practice: For vetted partners, this could provide early access to transformative defensive tools. For the broader ecosystem, it creates a tiered access model where the most powerful AI capabilities are gated behind trust and verification, potentially slowing adoption but aiming to increase safety.

gentic.news Analysis

This development is a direct and telling response to the escalating capabilities crisis in frontier AI. It's not happening in a vacuum. As we covered in our analysis of Anthropic's Mythos Preview launch, the industry has been grappling with how to commercialize models that can autonomously execute complex, potentially harmful tasks in digital environments. OpenAI's move confirms that Anthropic's cautious framework is now seen as a template, not an outlier.

The strategic alignment between these two leading labs—historically fierce competitors—on a containment strategy for certain AI capabilities is one of the most significant trends of 2026. It suggests that behind closed doors, red-teaming efforts have likely produced results concerning enough to override commercial incentives for a rapid, wide release. This follows a pattern of increasing coordination on safety, reminiscent of the Frontier Model Forum commitments, but now moving into concrete deployment policy.

For practitioners and enterprises, the implication is clear: the era of universally accessible, state-of-the-art frontier models may be narrowing. Access to the most powerful AI tools for sensitive applications will increasingly depend on established trust relationships, compliance frameworks, and specific use-case approvals. This creates a moat for early, trusted partners but could also stifle innovation from smaller players and researchers who lack the resources to navigate these new gated communities.

Frequently Asked Questions

What can OpenAI's 'Mythos' model actually do?

Based on the report, it possesses "advanced cybersecurity capabilities." The specifics are undisclosed, but the context suggests abilities that could include autonomous vulnerability discovery, exploit generation, network penetration testing, or advanced threat analysis. Its capabilities are considered significant enough to warrant a restricted release.

Why won't OpenAI release this model publicly?

According to the report, OpenAI and other labs are treating the release of such models like a responsible vulnerability disclosure because the models are capable of autonomous hacking. A public release could enable malicious actors to automate cyberattacks, discover zero-day vulnerabilities at scale, or otherwise amplify threats. The limited rollout allows the company to study real-world use and impacts in a controlled setting with trusted entities.

How can a company get access to OpenAI's 'Mythos'?

The report indicates access will be for a "small group of vetted companies." The exact criteria are not public, but access will likely require a pre-existing enterprise relationship with OpenAI, a demonstrable and legitimate cybersecurity use case (likely defensive), and agreement to strict usage protocols and confidentiality. Nothing in the report points to an open application process.

Is this the same as Anthropic's Mythos model?

No, they are different models developed by different companies. However, OpenAI's internal codename and its nearly identical release strategy mirror Anthropic's approach with its Mythos Preview, which suggests a deliberate alignment on safety and deployment philosophy for this class of AI capability.