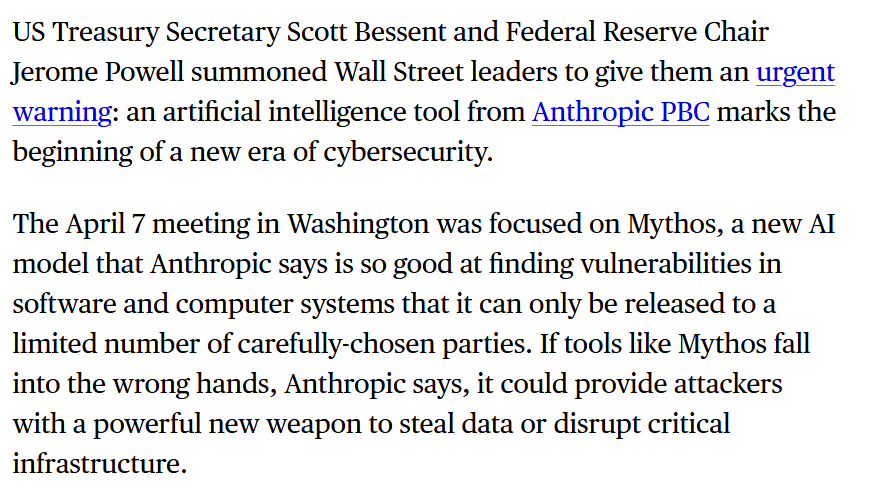

A new AI model from Anthropic, reportedly referred to internally as "Mythos," will not be released to the public. The decision, highlighted in a social media post by AI commentator Rohan Pandey, rests on an internal safety case in which Anthropic outlines concerns about the damage the model could cause.

What Happened

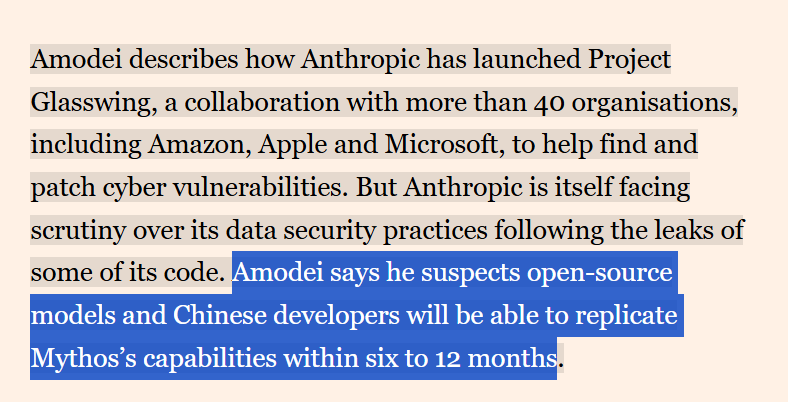

According to the post, Anthropic has built a safety case arguing against the public release of the model known as "Mythos." The core of that argument is concern over the damage the model could cause. The source material shared no specific capabilities, benchmark scores, or technical details about Mythos. The decision was framed as a significant internal one for a company known for its Constitutional AI approach and its focus on AI safety.

Context

This move fits within Anthropic's established public stance of prioritizing safety and cautious deployment. The company, a primary competitor to OpenAI, has consistently emphasized building predictable and steerable AI systems. Withholding a model pre-release is a more extreme version of the deployment pacing advocated by many in the AI safety community. It suggests the internal evaluation flagged capabilities or failure modes that Anthropic's safety team deemed unacceptably risky for a general release at this time.

The Broader Landscape of Model Withholding

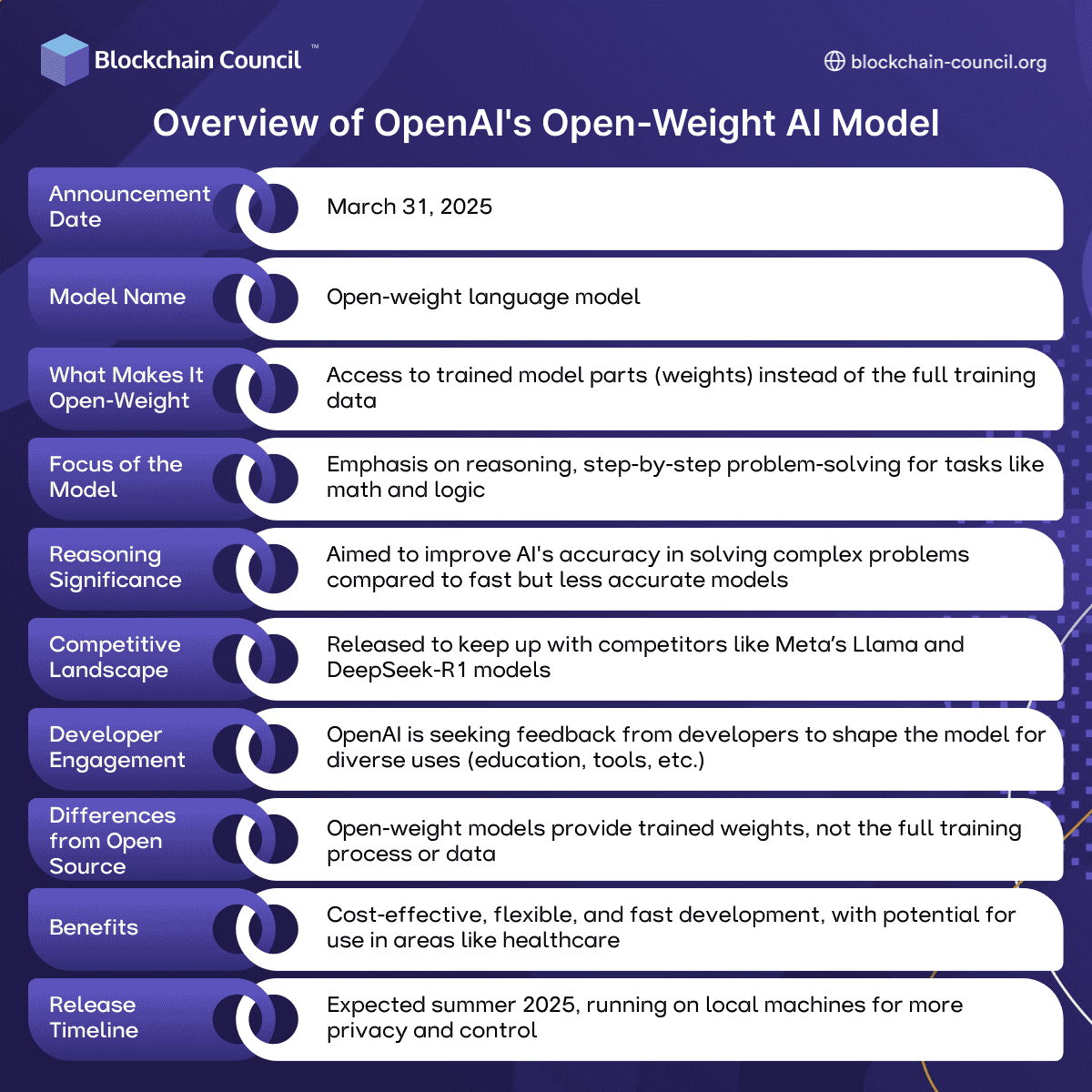

Anthropic is not the first AI lab to withhold a model. In 2024, OpenAI confirmed it had developed but not released a precursor to its o1 reasoning models, and other labs have shelved projects over dual-use concerns. However, it is less common for a lab to publicly name a specific model it is withholding. The move raises immediate questions about where the threshold for such decisions lies and whether any standardized framework for assessing "dangerous capabilities" exists across the industry.

Unanswered Questions

The lack of concrete details about Mythos is the central limitation of this report. Key unknowns include:

- Model Type: Is Mythos a large language model, a multimodal system, or an agentic framework?

- Capabilities: What specific abilities triggered the safety concern? Candidates include advanced autonomous planning, unusually strong persuasive ability, dual-use potential for novel scientific discovery, or advanced cybersecurity capabilities.

- Benchmarks: Did it achieve a breakthrough on a specific internal or external evaluation?

- Fate of the Model: Is it scrapped entirely, used only for internal safety research, or being modified for a future, safer release?

gentic.news Analysis

This decision is a direct expression of the AI safety principles Anthropic has championed since it was founded by former OpenAI researchers concerned about rapid commercialization. It is a tangible and costly act of precaution, shelving what is likely a significant R&D investment. It also aligns with the growing institutional focus on frontier model evaluations and dangerous capability assessments, a trend we covered in our analysis of the UK AI Safety Institute's early 2026 findings.

The move creates immediate competitive tension. While Anthropic cites safety, its rivals, namely OpenAI with its ongoing GPT and o-series releases and Google DeepMind with Gemini, continue their public deployment cycles. The industry lacks a common standard for what makes a model unreleasable, which leaves the decision to each company's internal governance. This incident may intensify calls for external, third-party auditing of advanced models before release, a policy area that has seen increased legislative discussion in 2025 and 2026.

In practical terms, this underscores for developers and researchers that the most advanced models in lab environments may never become available through public APIs. The cutting edge is becoming more opaque, split between public-facing models and private, restricted research artifacts. This has significant implications for the open-source community, for academic research, and for the pace of innovation across the wider ecosystem.

Frequently Asked Questions

What is the Mythos AI model?

Based on available information, Mythos is an AI model developed by Anthropic that the company has decided not to release publicly. Its specific architecture, size, and capabilities have not been disclosed. The name "Mythos" appears to be an internal project codename.

Why did Anthropic not release the Mythos model?

Anthropic's internal safety assessment concluded that the potential damage Mythos could cause outweighed the benefits of releasing it publicly. The company has not detailed the specific risks it identified, but they likely relate to potential misuse or unforeseen harmful behaviors that its safety measures could not sufficiently mitigate.

How does this compare to OpenAI not releasing a model?

The decision is similar in principle to past instances where AI labs have withheld models, such as OpenAI's confirmed non-release of a precursor to its o1 models. The key difference may be in the public framing; Anthropic is explicitly citing a formalized "safety case" and potential damage as the reason, which is consistent with its brand identity as a safety-first research organization.

Will Anthropic ever release Mythos or a version of it?

This is unknown. The model could be permanently shelved, destroyed, used exclusively for internal safety research to improve future models, or iterated upon until Anthropic's safety team believes the risks are manageable. The company has not indicated any future release plans.