Key Takeaways

- A new research paper presents a reference architecture for 'agentic hybrid retrieval' that orchestrates BM25, dense embeddings, and LLM agents to handle underspecified queries against sparse metadata.

- It introduces offline metadata augmentation and compares two architectural styles on quality attributes such as governance and performance.

What Happened

A research paper published on arXiv proposes a novel reference architecture for agentic hybrid retrieval systems, specifically applied to the challenging domain of dataset search. The core problem addressed is ad hoc dataset search, where users submit vague, natural-language queries that must be matched against sparse, heterogeneous, and often poorly structured metadata records. The authors argue that neither traditional lexical search (like BM25) nor modern dense-embedding retrieval alone is sufficient for this task.

Technical Details

The proposed architecture treats dataset search as a software-architecture problem. Its key innovation is a Large Language Model (LLM) agent that acts as a controller, orchestrating multiple retrieval techniques. The system combines:

- BM25 Lexical Search: For term-matching precision.

- Dense-Embedding Retrieval: For semantic understanding.

- Reciprocal Rank Fusion (RRF): A method to merge ranked results from the two different retrieval systems.
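To make the fusion step concrete, here is a minimal sketch of Reciprocal Rank Fusion over two ranked lists. It is not taken from the paper: the function name, the dataset IDs, and the constant `k=60` (a commonly used default) are illustrative assumptions.

```python
from collections import defaultdict

def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document IDs into one ranking.

    Each document scores sum(1 / (k + rank)) over the lists it appears in.
    k=60 is a commonly used default, assumed here; it is not from the paper.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID for a stable order.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))

# Hypothetical outputs of the lexical and dense retrievers.
bm25_ranking = ["ds_air_quality", "ds_traffic", "ds_weather"]
dense_ranking = ["ds_weather", "ds_air_quality", "ds_census"]

fused = reciprocal_rank_fusion([bm25_ranking, dense_ranking])
print(fused)
```

Documents that rank well in both lists rise to the top, and RRF needs only rank positions, so the BM25 and embedding scores never have to be put on a common scale.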

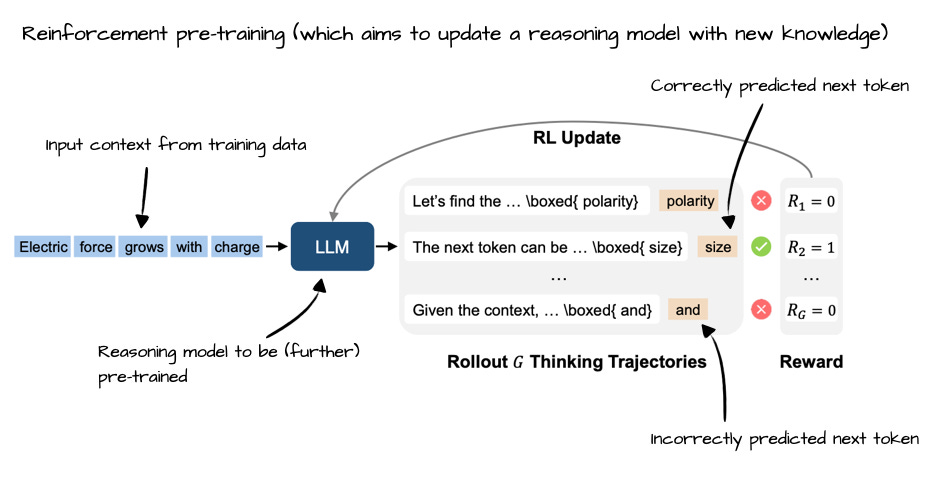

The LLM agent doesn't just perform a single search. It engages in a ReAct (Reasoning + Acting) loop: it repeatedly plans queries, evaluates whether the results are sufficient, and can rerank candidates. This "agentic" behavior allows it to handle the ambiguity of user intent.
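The loop described above can be sketched as a bounded plan, retrieve, evaluate cycle followed by a rerank. This is an assumption-laden illustration, not the authors' implementation: every function name is hypothetical, and the toy callables stand in for LLM calls and the hybrid retriever.

```python
from typing import Callable, List

def agentic_search(
    user_query: str,
    plan_query: Callable[[str, List[str]], str],
    retrieve: Callable[[str], List[str]],
    is_sufficient: Callable[[str, List[str]], bool],
    rerank: Callable[[str, List[str]], List[str]],
    max_steps: int = 3,
) -> List[str]:
    """Plan a query, retrieve, check sufficiency; repeat up to max_steps, then rerank."""
    candidates: List[str] = []
    for _ in range(max_steps):
        search_query = plan_query(user_query, candidates)
        for doc_id in retrieve(search_query):
            if doc_id not in candidates:
                candidates.append(doc_id)
        if is_sufficient(user_query, candidates):
            break
    return rerank(user_query, candidates)

# Toy stand-ins (hypothetical): a real system would call an LLM and a hybrid index.
TOY_INDEX = {
    "rainfall": ["ds_precipitation"],
    "rainfall precipitation": ["ds_precipitation", "ds_climate_normals"],
}

def toy_plan(query: str, seen: List[str]) -> str:
    # First pass uses the query as-is; later passes add a related term.
    return query if not seen else query + " precipitation"

def toy_retrieve(search_query: str) -> List[str]:
    return TOY_INDEX.get(search_query, [])

def toy_sufficient(query: str, candidates: List[str]) -> bool:
    return len(candidates) >= 2

def toy_rerank(query: str, candidates: List[str]) -> List[str]:
    return sorted(candidates)

results = agentic_search("rainfall", toy_plan, toy_retrieve, toy_sufficient, toy_rerank)
print(results)
```

Capping the loop with `max_steps` is one simple way to keep a nondeterministic agent's latency and cost bounded.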

A critical pre-processing step is introduced to tackle the vocabulary mismatch between how users ask for data and how providers describe it. In an offline metadata augmentation phase, an LLM generates multiple "pseudo-queries" for each dataset record. These synthetic queries, representing potential ways users might ask for that data, are then indexed alongside the original metadata, enriching the searchable corpus before any live query is made.
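A minimal sketch of the augmentation step, assuming each record is a dictionary with `title` and `description` fields. The template-based generator is a placeholder for the LLM call; the field names, function names, and sample record are all hypothetical.

```python
from typing import Callable, Dict, List

Record = Dict[str, object]

def augment_records(
    records: List[Record],
    generate_pseudo_queries: Callable[[Record, int], List[str]],
    n_queries: int = 3,
) -> List[Record]:
    """Attach pseudo-queries to each record and build the text that gets indexed."""
    augmented = []
    for record in records:
        pseudo = generate_pseudo_queries(record, n_queries)
        indexed_text = " ".join([str(record["title"]), str(record["description"]), *pseudo])
        augmented.append({**record, "pseudo_queries": pseudo, "indexed_text": indexed_text})
    return augmented

def template_generator(record: Record, n: int) -> List[str]:
    """Placeholder for an LLM call: fills fixed templates with the record title."""
    templates = [
        "data on {t}",
        "where can I find {t} statistics",
        "{t} dataset download",
    ]
    title = str(record["title"]).lower()
    return [tpl.format(t=title) for tpl in templates[:n]]

records = [
    {"id": "ds1", "title": "Urban Air Quality", "description": "Hourly PM2.5 readings."}
]
augmented = augment_records(records, template_generator)
print(augmented[0]["indexed_text"])
```

Because this runs before any live query, the generation cost is paid once per record at indexing time rather than on every search.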

The paper compares two high-level architectural styles:

- Single ReAct Agent: A unified agent handles all reasoning and control.

- Multi-Agent Horizontal Architecture with Feedback Control: Tasks are distributed among specialized agents (e.g., for query planning, evaluation, reranking) with control mechanisms to manage their interaction.
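One possible reading of the second style is sketched below: specialized components behind narrow interfaces, a feedback signal from the evaluator to the planner, and a capped number of rounds with a recorded trace. The `Controller` class, its fields, and the toy lambdas are illustrative assumptions, not the paper's design.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

@dataclass
class Controller:
    """Coordinates specialized agents and records a trace of each round."""
    planner: Callable[[str, str], str]                        # (user query, feedback) -> search query
    retriever: Callable[[str], List[str]]                     # search query -> dataset IDs
    evaluator: Callable[[str, List[str]], Tuple[bool, str]]   # -> (accepted, feedback)
    max_rounds: int = 2
    trace: List[Dict[str, object]] = field(default_factory=list)

    def run(self, user_query: str) -> List[str]:
        feedback = ""
        results: List[str] = []
        for round_no in range(1, self.max_rounds + 1):
            search_query = self.planner(user_query, feedback)
            results = self.retriever(search_query)
            accepted, feedback = self.evaluator(user_query, results)
            self.trace.append(
                {"round": round_no, "query": search_query, "hits": len(results), "accepted": accepted}
            )
            if accepted:
                break
        return results

# Toy agents (hypothetical): real ones would wrap LLM calls and a hybrid index.
controller = Controller(
    planner=lambda query, fb: query if not fb else f"{query} {fb}",
    retriever=lambda q: ["ds_bus_routes"] if "transit" in q else [],
    evaluator=lambda query, hits: (bool(hits), "" if hits else "transit"),
)
hits = controller.run("city bus timetable")
print(hits, controller.trace)
```

Keeping each agent behind its own callable means one can be swapped without touching the others, and the trace gives a per-round audit record. These correspond to the modifiability and observability concerns the paper analyzes.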

The analysis focuses on quality-attribute tradeoffs critical for production systems: modifiability (ease of updating components), observability (ability to monitor and debug), performance (latency, cost), and governance (controlling nondeterministic LLM outputs). The authors define an evaluation framework with seven system variants to isolate the impact of each architectural decision, presenting this as an extensible reference design for the software engineering community.

Retail & Luxury Implications

While the paper's evaluation domain is scientific and governmental dataset search, the architectural patterns and components have direct, high-value analogs in retail and luxury. The core challenge of matching vague user intent to imperfect, heterogeneous internal data is ubiquitous.

Enterprise Knowledge & Asset Search: A luxury group's internal teams constantly search for assets: past campaign mood boards, fabric swatch databases, supplier sustainability reports, or historical sales analysis decks. These are often buried in systems with inconsistent metadata. An agentic hybrid retrieval system could power a "Corporate Memory" search engine, allowing a designer to query, "Find Italian velvet suppliers from the 2023 collection that are certified organic," and have an LLM agent intelligently comb through PDFs, PIM entries, and CRM data.

Enhanced Product Discovery & Customer Service: The offline metadata augmentation concept is particularly powerful. An e-commerce Product Information Management (PIM) system contains structured attributes (color: "Bleu Roi"), but customers search informally ("deep royal blue"). An LLM could generate thousands of plausible customer search phrases for each product offline and index them alongside the product's record. Because the generation happens before any live query, this bridges the vocabulary gap without slowing down the live site, making discovery more intuitive.

Architectural Governance for LLM Systems: The paper's emphasis on bounded, auditable architectures and governance tactics makes it essential reading for any technical leader implementing LLM-based agents. Retail applications dealing with pricing, inventory, or customer data require audit trails and controls. The analysis of single-agent vs. multi-agent designs provides a framework for deciding between a simpler, monolithic chatbot agent and a more complex but controllable system of specialized AI assistants (e.g., one for product lookup, one for policy checking, one for response generation).

The proposed architecture moves beyond a simple "RAG pipeline" to a managed, multi-strategy retrieval system with an LLM conductor. For retailers sitting on decades of unstructured data across brands, this represents a sophisticated blueprint for building the next generation of intelligent enterprise search and customer interaction platforms.