The Innovation — What the Source Reports

A recent analysis from legal platform JD Supra examines the rise of agentic AI commerce, where AI agents (powered by large language models and autonomous reasoning) are given the ability to browse, compare, and purchase products on behalf of a user. Unlike traditional recommendation engines or chatbots, these agents can negotiate, execute transactions, and even manage returns with minimal human oversight.

The article highlights that while this promises a frictionless shopping experience, it also introduces novel risks: agents may misinterpret product descriptions, place unintentional orders, or be exploited by malicious actors. Retailers face potential liability for transactions initiated by AI agents, especially when returns, cancellations, or security breaches occur.

Why This Matters for Retail & Luxury

For luxury brands (LVMH, Kering, Richemont), where customer trust and brand integrity are paramount, agentic AI commerce presents both opportunity and peril.

Scenarios:

- A high-net-worth customer allows an AI agent to access their personal account, instructing it to “find a limited-edition handbag under €5,000.” The agent misinterprets “limited edition” and purchases a counterfeit listing from a third-party marketplace, potentially exposing the brand to liability claims and reputational damage even though it never sold the item.

- An agent programmed to negotiate discounts on bespoke furniture repeatedly undercuts a brand’s pricing policy, eroding brand value.

- Return fraud becomes automated: agents could systematically exploit liberal return policies, overwhelming customer service.

Mass-market retailers face similar issues at scale – agent-driven bot attacks on flash sales, inventory manipulation, and denial-of-service through high-volume automated interactions.

Business Impact

JD Supra’s analysis suggests that retailers who fail to prepare for agentic AI will face:

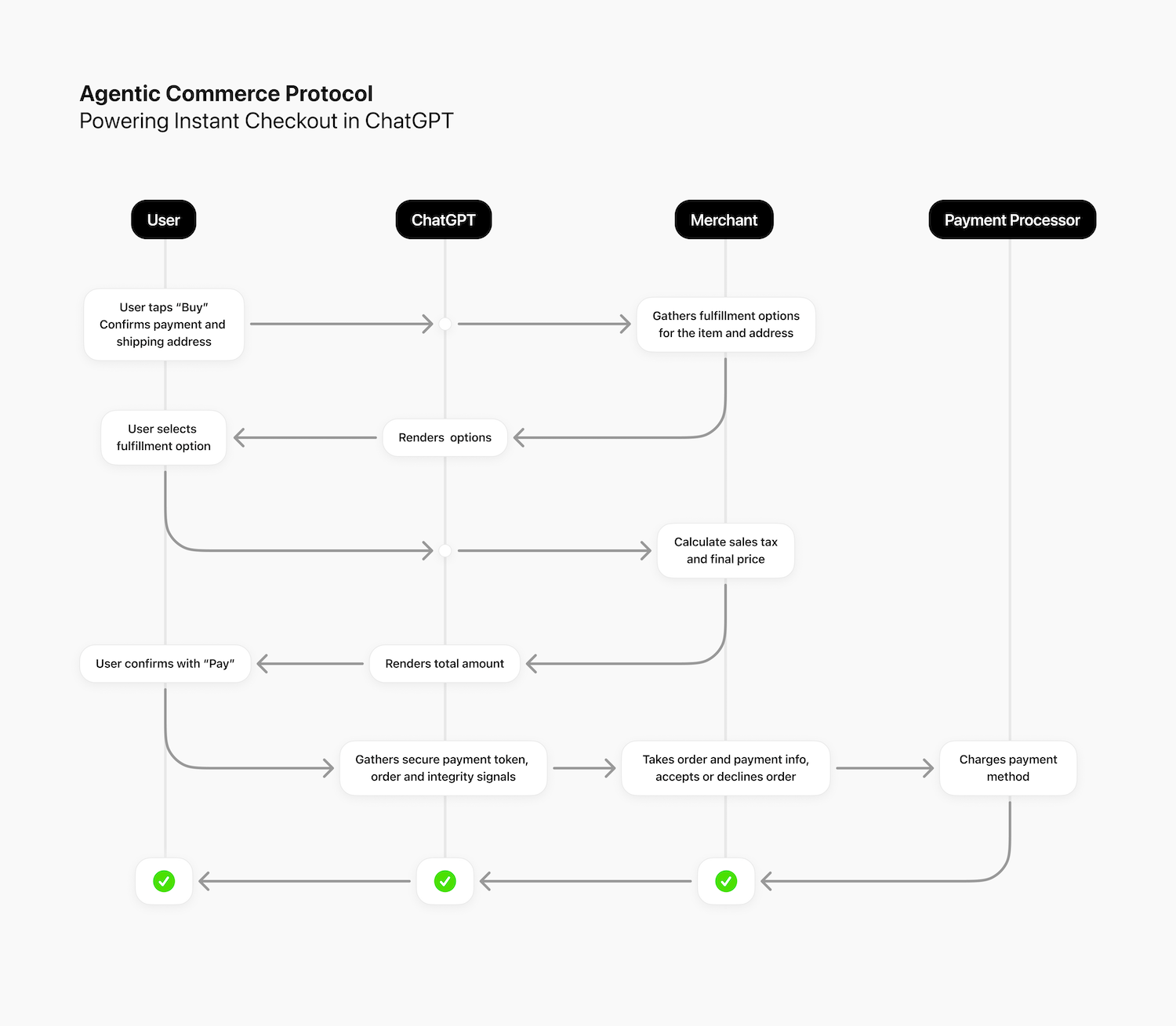

- Increased transaction complexity – agents may not follow standard checkout flows or rely on the session mechanisms those flows assume (e.g., cookies, session IDs).

- Liability ambiguity – who is responsible when an agent’s action violates terms of service? The user, the agent developer, or the retailer?

- Fraud acceleration – agents can test thousands of stolen credit cards or exploit pricing glitches faster than humans.

- Operational cost – support teams will need to handle agent-originated queries and disputes.

However, early adopters can gain a competitive edge by designing agent-friendly commerce APIs, identity verification for AI shoppers, and clear terms governing agentic transactions.

Implementation Approach

To mitigate risks, retailers should:

- Update Terms of Service – Explicitly address AI agent interactions and state that users are responsible for their agents’ actions.

- Deploy agent-aware authentication – Require agents to present a verifiable identity token (e.g., an OAuth access token with narrowly limited scopes).

- Build agentic audit trails – Log all agent-initiated interactions (requests, decisions, purchases) for dispute resolution.

- Rate-limit and sandbox – Prevent abuse by capping agent transaction speed and limiting access to sensitive operations.

- Collaborate with legal teams – Understand evolving regulations (EU AI Act, consumer protection laws) that may treat agent actions differently.
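
As an illustration of the authentication and rate-limiting steps above, here is a minimal Python sketch of how a retailer might gate an agent-initiated order. It is not taken from the JD Supra article. The `AgentToken` fields, the `order:create` scope name, and the per-agent spending cap are all hypothetical, and the sketch assumes the token has already been cryptographically verified elsewhere (for example, by an OAuth authorization server).

```python
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentToken:
    """Hypothetical, already-verified identity token presented by an AI agent."""
    agent_id: str
    user_id: str
    scopes: frozenset      # e.g. {"catalog:read", "order:create"} (assumed names)
    max_order_eur: float   # spending cap the user granted to the agent


class TokenBucket:
    """Token-bucket limiter: refills at `rate` per second, bursts up to `capacity`."""

    def __init__(self, rate, capacity, now=None):
        self.rate = rate
        self.capacity = capacity
        self.tokens = capacity
        self.updated = time.monotonic() if now is None else now

    def allow(self, now=None):
        now = time.monotonic() if now is None else now
        elapsed = max(0.0, now - self.updated)
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


def authorize_order(token, amount_eur, bucket, now=None):
    """Return (allowed, reason) for an agent-initiated order."""
    if "order:create" not in token.scopes:
        return False, "missing scope order:create"
    if amount_eur > token.max_order_eur:
        return False, "amount exceeds agent spending cap"
    if not bucket.allow(now):
        return False, "rate limit exceeded"
    return True, "ok"
```

In this sketch a retailer would keep one `TokenBucket` per agent (or per user) and call `authorize_order` before creating the order. Checking scope and spending cap before consuming a rate-limit token is a design choice: requests that are rejected outright do not eat into the agent's allowance.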

Governance & Risk Assessment

Agentic AI commerce is still nascent – the JD Supra article is a preemptive warning rather than a report on widespread incidents. The maturity level is low; few retailers have encountered real agentic shopping behavior, but the technology is advancing rapidly (e.g., OpenAI’s Operator, Google’s Project Mariner).

Risk areas:

- Privacy – agents may collect and store user shopping data outside the retailer’s control, complicating GDPR/CCPA compliance.

- Security – agent APIs become new attack surfaces for prompt injection or unauthorized transactions.

- Fairness – agents trained on biased data could discriminate against certain customers or products.

- Liability – current law is unclear on whether an AI agent acts as the user’s agent (in the legal sense) or is merely a third-party tool.

Retailers should treat agentic AI as a new class of user – one that requires its own policies, technical guardrails, and constant monitoring.
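
One way to make "a new class of user" concrete is to tag and log agent-initiated actions separately from human ones. The Python sketch below is a hypothetical in-memory audit log, not something described in the JD Supra article. The record fields, the `ai_agent` actor label, and the hash chaining (each record stores the SHA-256 hash of the previous one, so later edits are detectable) are assumptions chosen for illustration; a production system would persist records in durable, access-controlled storage.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


class AgentAuditLog:
    """Append-only log in which each record carries the hash of the previous one."""

    def __init__(self):
        self.records = []

    @staticmethod
    def _digest(record):
        # Hash every field except the record's own hash, with stable key order.
        body = {k: v for k, v in record.items() if k != "hash"}
        encoded = json.dumps(body, sort_keys=True).encode("utf-8")
        return hashlib.sha256(encoded).hexdigest()

    def append(self, agent_id, user_id, action, details, timestamp=None):
        prev_hash = self.records[-1]["hash"] if self.records else GENESIS_HASH
        record = {
            "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
            "actor_type": "ai_agent",  # distinguishes agent traffic from humans
            "agent_id": agent_id,
            "user_id": user_id,
            "action": action,
            "details": details,
            "prev_hash": prev_hash,
        }
        record["hash"] = self._digest(record)
        self.records.append(record)
        return record

    def verify(self):
        """Return True if no record has been altered, removed, or reordered."""
        prev_hash = GENESIS_HASH
        for record in self.records:
            if record["prev_hash"] != prev_hash:
                return False
            if record["hash"] != self._digest(record):
                return False
            prev_hash = record["hash"]
        return True
```

During a dispute, a retailer could replay the records for a given `agent_id` and call `verify()` to check that the sequence of requests and purchases has not been modified since it was written.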

gentic.news Analysis

The JD Supra article serves as an essential early-warning signal for retail AI leaders. While agentic AI commerce is not yet mainstream, the legal community is already anticipating the fallout. Luxury brands, in particular, must move proactively: define how agents can discover and purchase products, establish clear attribution of agent actions, and work with platform partners (e.g., Shopify, Salesforce Commerce Cloud) to standardize agent identification.

Interestingly, this legal risk-focused perspective complements the technical excitement around “shopping agents” seen in open-source projects and frontier labs. The gap between what’s technically possible and what’s legally/operationally safe is significant. Retailers who invest now in agent-aware commerce infrastructure will not only protect themselves but also set the terms of engagement for a new channel of automated demand.

Key takeaway: Agentic AI is coming to e-commerce – the winners will be those who embrace it with eyes wide open, not those who ignore the risks until a lawsuit lands.