AGIBOT has released GE-Sim 2.0, a new foundation model designed to generate and simulate photorealistic environments for robotic agents. The release, announced via a social media post from the company's founder, positions the model as a tool that enables robots to "dream"—a metaphor for planning and reasoning within a simulated world before executing tasks in reality.

Key Takeaways

- AGIBOT has launched GE-Sim 2.0, a foundation model for robot simulation.

- It allows AI agents to generate and reason within photorealistic simulated environments for planning and training.

What Happened

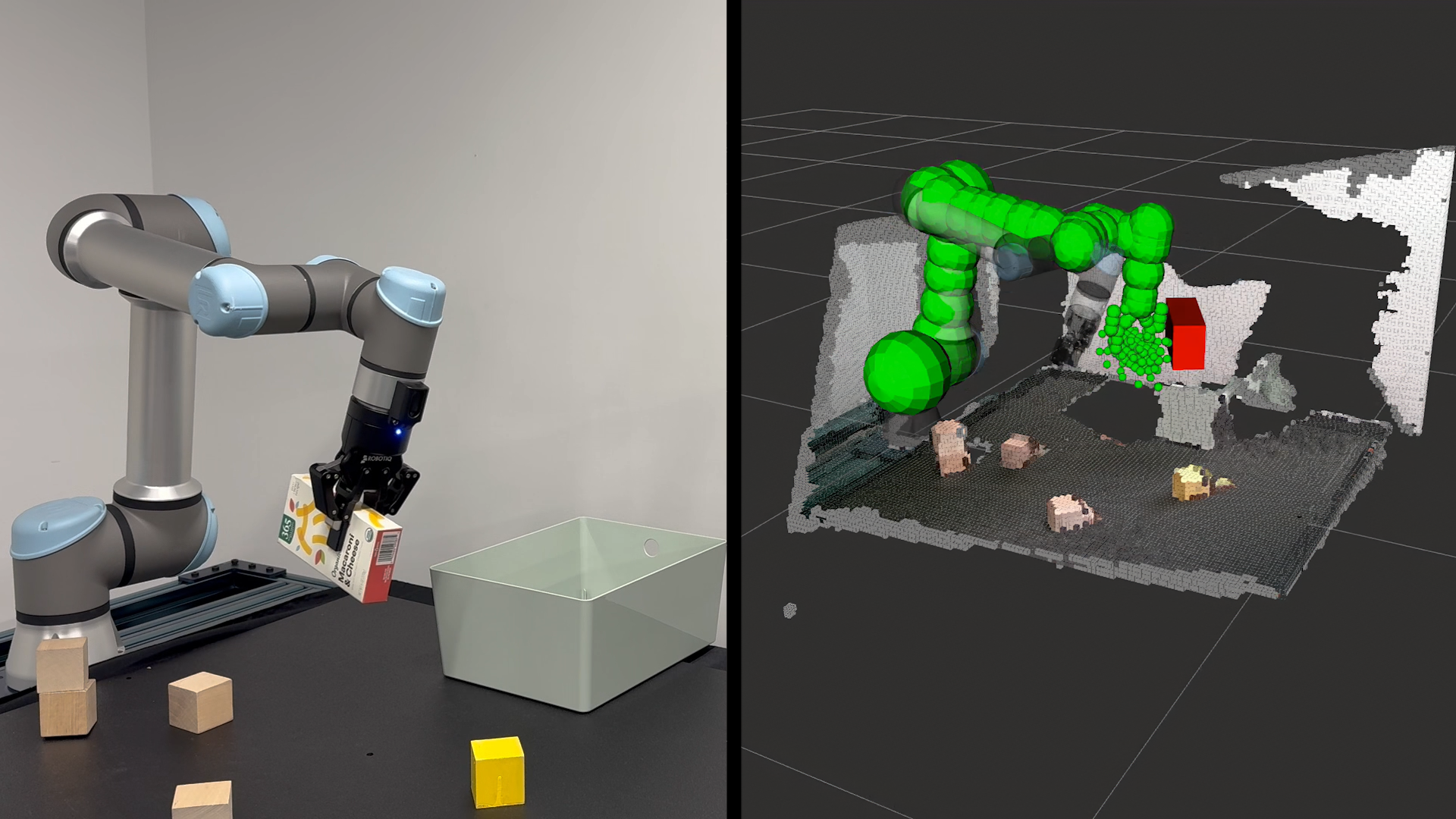

AGIBOT, a company focused on embodied AI and robotics, announced the launch of GE-Sim 2.0. The model is described as a "foundation model for simulation" capable of generating detailed, interactive 3D environments. The core proposition is that an AI agent can use this model to create a simulated version of its target environment—like a kitchen or warehouse—and then "dream" or plan its actions within that simulation. This internal simulation allows the agent to test strategies, predict outcomes, and optimize its plan before any physical movement occurs, potentially improving efficiency and safety.

Context & Technical Implications

Simulation-to-reality (Sim2Real) transfer is a longstanding challenge in robotics. Policies trained purely in simulation often fail on real hardware because of the "reality gap": differences between the simulated and real worlds that break the learned policy. GE-Sim 2.0 appears to be an attempt to bridge this gap by using a generative foundation model to create highly realistic, adaptable simulations on demand.

From a technical standpoint, a foundation model for simulation would likely be a large-scale generative model trained on massive datasets of 3D scenes, object interactions, and physics. When given a text or image prompt (e.g., "a cluttered office desk with a monitor, coffee mug, and stack of papers"), the model would generate a corresponding interactive simulation. An agent's "dream" would then be its internal rollout of possible actions within this generated simulation.
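To make the idea of a "dream" as an internal rollout concrete, here is a minimal sketch of planning by random shooting inside a world model. It does not use GE-Sim 2.0 or any AGIBOT API: `toy_world_model` is a hypothetical stand-in (a 5x5 grid with clamped moves) for whatever a generated simulation would expose, and `rollout_cost` and `dream_plan` are illustrative names.

```python
import random
from typing import Callable, List, Sequence, Tuple

State = Tuple[int, int]
Action = Tuple[int, int]
WorldModel = Callable[[State, Action], State]

ACTIONS: List[Action] = [(1, 0), (-1, 0), (0, 1), (0, -1)]


def toy_world_model(state: State, action: Action) -> State:
    """Hypothetical stand-in for a generated simulation: a 5x5 grid with walls."""
    x = min(4, max(0, state[0] + action[0]))
    y = min(4, max(0, state[1] + action[1]))
    return (x, y)


def rollout_cost(model: WorldModel, start: State,
                 plan: Sequence[Action], goal: State) -> int:
    """Roll a candidate plan forward in the model; return Manhattan distance to goal."""
    state = start
    for action in plan:
        state = model(state, action)
    return abs(state[0] - goal[0]) + abs(state[1] - goal[1])


def dream_plan(model: WorldModel, start: State, goal: State,
               horizon: int = 8, n_candidates: int = 500,
               seed: int = 0) -> Tuple[List[Action], int]:
    """Sample random plans, score each one in simulation only, keep the best."""
    rng = random.Random(seed)
    best_plan: List[Action] = []
    best_cost = rollout_cost(model, start, best_plan, goal)  # cost of doing nothing
    for _ in range(n_candidates):
        plan = [rng.choice(ACTIONS) for _ in range(horizon)]
        cost = rollout_cost(model, start, plan, goal)
        if cost < best_cost:
            best_plan, best_cost = plan, cost
    return best_plan, best_cost


plan, cost = dream_plan(toy_world_model, start=(0, 0), goal=(2, 2))
print(f"best simulated plan leaves the agent {cost} step(s) from the goal")
```

No physical action happens during the search: every candidate is evaluated only against the model, which is why the plan is only as good as the model's agreement with reality.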

This approach differs from traditional, hand-crafted simulators (like NVIDIA's Isaac Sim) by being generative and potentially more flexible. The promise is that an agent could simulate the exact environment it's about to enter, not just a generic proxy.

What This Means in Practice

If the technology performs as suggested, the immediate application is more robust robotic planning and training. For example:

- A warehouse robot could simulate the current state of a shelf before attempting to pick an item, identifying potential obstacles.

- A home assistant robot could "practice" clearing a specific table layout in simulation before trying it physically.

- Developers could generate countless variations of a training scenario to improve an agent's generalization, all driven by natural language prompts.
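The last point, prompt-driven scenario variation, can be sketched as a small prompt generator. This is an illustration only: the template and word lists are assumptions, and the article does not document how GE-Sim 2.0 accepts prompts, so the code stops at producing strings.

```python
from itertools import product
from typing import List, Sequence


def scenario_prompts(clutter_levels: Sequence[str],
                     surfaces: Sequence[str],
                     objects: Sequence[str]) -> List[str]:
    """Build one text prompt per combination of the given attributes."""
    template = "a {clutter} {surface} with a {obj}"
    return [
        template.format(clutter=c, surface=s, obj=o)
        for c, s, o in product(clutter_levels, surfaces, objects)
    ]


# Hypothetical attribute lists; a real project would choose its own.
prompts = scenario_prompts(
    clutter_levels=["tidy", "cluttered"],
    surfaces=["office desk", "kitchen counter"],
    objects=["coffee mug", "stack of papers", "monitor"],
)
print(len(prompts), "scenario prompts, for example:", prompts[0])
```

Each added attribute value multiplies the number of scenarios, which is the appeal of prompt-driven variation over building each scene by hand.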

The major unknown is the fidelity and physical accuracy of the generated simulations. The success of the method hinges entirely on whether plans made in the "dream" simulation transfer reliably to the real world.

gentic.news Analysis

This launch by AGIBOT fits directly into the accelerating trend of applying foundation model capabilities to robotics, a sector we've tracked closely. In March 2026, we covered the release of Google's RT-3, a vision-language-action model that showed significant improvements in instruction following. AGIBOT's GE-Sim 2.0 represents a complementary, rather than competing, approach: instead of focusing primarily on the control policy (like RT-3), it focuses on the world model in which that policy is planned.

This aligns with a broader industry shift towards "world models" and internal simulation as a core component of advanced AI agents. DeepMind's recent work on Genie, a generative interactive environment model, demonstrated the potential of training agents entirely within generated worlds. AGIBOT is applying a similar principle but with a stated focus on photorealism and real-world robotics tasks.

The competitive landscape here is nascent but growing. NVIDIA dominates with its Omniverse and Isaac Sim platforms, which are high-fidelity but not generative in the foundation model sense. Startups like Covariant and Physical Intelligence are also building large models for robot reasoning. AGIBOT's bet is that a dedicated, generative simulation foundation model will become a critical layer in the robotics stack, separate from the control model. The key test will be published benchmarks showing a reduction in real-world task failures when using GE-Sim 2.0 for planning versus baseline methods.
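One way such a benchmark could be summarized is as the relative reduction in failure rate between a baseline and a planner that uses simulation. The sketch below shows only the arithmetic; the trial outcomes are placeholders invented for illustration, not measurements of GE-Sim 2.0 or any other system.

```python
from typing import Sequence


def failure_rate(outcomes: Sequence[bool]) -> float:
    """Fraction of failed trials; each entry is True for a successful trial."""
    if not outcomes:
        raise ValueError("need at least one trial")
    return sum(1 for ok in outcomes if not ok) / len(outcomes)


def relative_failure_reduction(baseline: Sequence[bool],
                               with_sim: Sequence[bool]) -> float:
    """(baseline rate - new rate) / baseline rate; positive means fewer failures."""
    base = failure_rate(baseline)
    if base == 0:
        raise ValueError("baseline has no failures to reduce")
    return (base - failure_rate(with_sim)) / base


# Placeholder outcomes for illustration only, NOT real benchmark data.
baseline_trials = [True, False, True, False, True, True, False, True, False, True]
planned_trials = [True, True, True, False, True, True, True, True, False, True]

reduction = relative_failure_reduction(baseline_trials, planned_trials)
print(f"relative reduction in failures: {reduction:.0%}")
```

A real comparison would also need matched tasks, enough trials for statistical confidence, and real-world rather than simulated outcomes.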

Frequently Asked Questions

What is GE-Sim 2.0?

GE-Sim 2.0 is a foundation model developed by AGIBOT that is described as generating photorealistic, interactive 3D simulations from prompts. It is designed to let robotic AI agents create a simulated version of their target environment, then plan in it by "dreaming" through action sequences before executing them in the real world.

How does a robot "dream" with this model?

The "dream" is a metaphor for internal simulation and planning. An agent uses GE-Sim 2.0 to generate a simulation based on its perception of a target scene (e.g., from a camera). It then runs mental simulations—dreams—of different action sequences within this virtual environment to predict outcomes, identify optimal paths, and avoid failures before it moves a single physical actuator.

What is the difference between GE-Sim and a simulator like NVIDIA Isaac Sim?

Traditional simulators like Isaac Sim are pre-built software platforms with defined environments and physics engines. GE-Sim 2.0 is a generative model: you describe an environment (via text or image), and it creates a corresponding simulation on the fly. This aims for greater flexibility and specificity, allowing simulation of unique, real-world scenes without manual 3D modeling.

What are the main challenges for a technology like this?

The primary challenge is the "reality gap." If the generated simulation lacks accurate physics, lighting, or object properties, the plans made within it will fail when deployed. The model must also generate simulations with extremely low latency to be useful for real-time planning. Finally, the company has not yet released independent benchmarks, so its real-world performance compared to established methods is unverified.
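The latency constraint can be made concrete with a back-of-the-envelope budget: if each simulated step takes a fixed time and rollouts run one after another, the planning deadline caps how many candidate plans the agent can evaluate. The numbers below are assumptions chosen for illustration; no latency figures for GE-Sim 2.0 have been published in the source.

```python
def max_rollouts(deadline_ms: float, step_latency_ms: float, horizon: int) -> int:
    """Number of sequential rollouts of `horizon` steps that fit in the deadline."""
    if deadline_ms < 0 or step_latency_ms <= 0 or horizon <= 0:
        raise ValueError("deadline must be >= 0; latency and horizon must be > 0")
    rollout_ms = step_latency_ms * horizon
    return int(deadline_ms // rollout_ms)


# Hypothetical numbers: a 100 ms planning window and 10-step rollouts.
for step_ms in (1.0, 5.0, 50.0):
    n = max_rollouts(deadline_ms=100.0, step_latency_ms=step_ms, horizon=10)
    print(f"{step_ms} ms per simulated step allows {n} rollout(s)")
```

Under these assumptions, a simulator that needs 50 ms per step cannot complete even one 10-step rollout in the window, which is why generation speed matters as much as visual fidelity for real-time use. Batched or parallel rollouts would relax the bound.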