A pointed critique from AI researcher Hasan Türe has spotlighted a growing, fundamental tension in AI development: we are building autonomous agents that operate on digital logic, yet we still rely on inherently limited, analog humans to evaluate them.

The core argument is stark: Human respondents cannot replicate agent logic. They get tired, distracted, and biased. More critically, they fundamentally lack the perception to understand the specific reasoning, execution processes, preferences, and boundaries of an AI agent operating within a digital environment. This creates a significant methodological gap in agent research and benchmarking.

The Core Problem: An Alien Logic

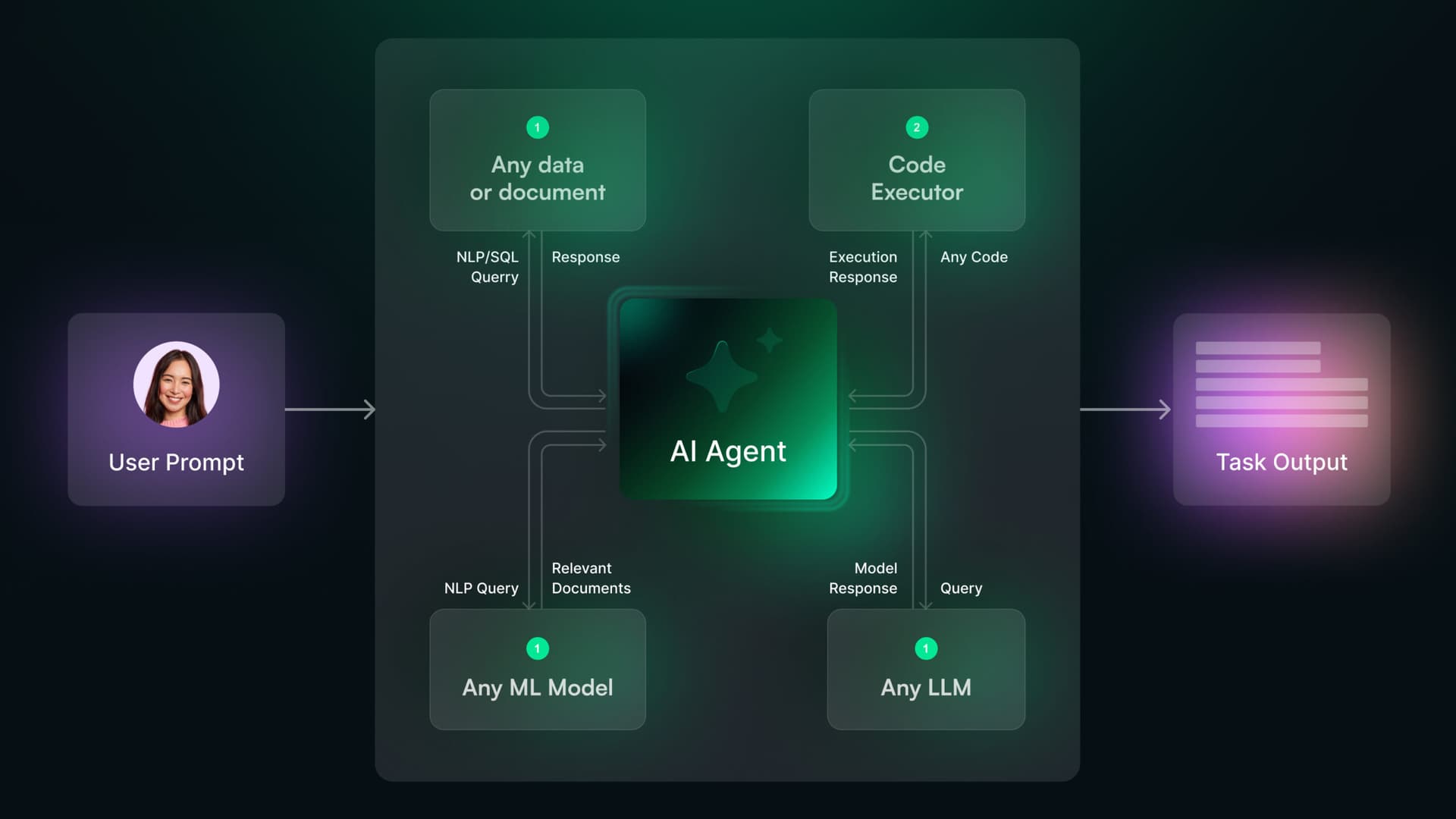

AI agents—systems designed to autonomously perceive, plan, and act to achieve goals—are being trained and tested in complex digital sandboxes (e.g., web browsers, coding environments, simulated worlds). Their "thought processes" involve parsing raw HTML, executing API calls, navigating file trees, and making chain-of-thought decisions at machine speed.

Human evaluators, tasked with scoring an agent's performance on a task like "book a flight" or "debug this code," are blind to this internal process. They see only the final output or a simplified log. They cannot perceive:

- The agent's internal chain-of-thought reasoning.

- The millions of token-level decisions made.

- How the agent recovers from dead-ends or errors.

- The agent's evolving "preferences" for certain action paths over others.

As Türe notes, this means human evaluation is a black-box assessment of a black-box process. It's akin to judging a chess engine's brilliance solely by whether it won or lost, with no insight into its positional evaluation or depth of search.

The Practical Consequences for AI Development

This evaluation bottleneck has direct, negative impacts on the pace and quality of agent research:

- Noisy, Unreliable Benchmarks: Human-rated benchmarks like MT-Bench or Chatbot Arena for conversational agents introduce subjective noise. For action-based agents, the problem is worse—a human might mark a task as "failed" because the final answer is wrong, missing that the agent's 90% of its reasoning was correct and it failed on a trivial syntax error.

- Slow Iteration Cycles: Human evaluation is slow and expensive. It prevents the rapid, automated testing cycles (A/B testing, hyperparameter sweeps) that are standard in other areas of ML.

- Inability to Diagnose Failure Modes: When an agent fails, human evaluators often cannot pinpoint why. Was it a knowledge gap, a planning error, a tool-use mistake, or a prompt misunderstanding? Without this granular diagnosis, improving the agent becomes guesswork.

The Search for Solutions

The field is aware of this problem and is groping for solutions, which generally fall into two categories:

1. Automated, Programmatic Evaluation: Creating digital environments where an agent's success can be objectively scored by code. For example:

- SWE-Bench: Tests coding agents by checking if their submitted code passes unit tests.

- WebArena/VisualWebArena: Tests web agents by checking if they successfully navigate to a target page or complete a transaction.

- Simulated Environments (e.g., MineDojo, Habitat): Score agents based on achieved goals in a physics-based world.

These are superior for measuring capability but are difficult and expensive to create for every possible task domain.

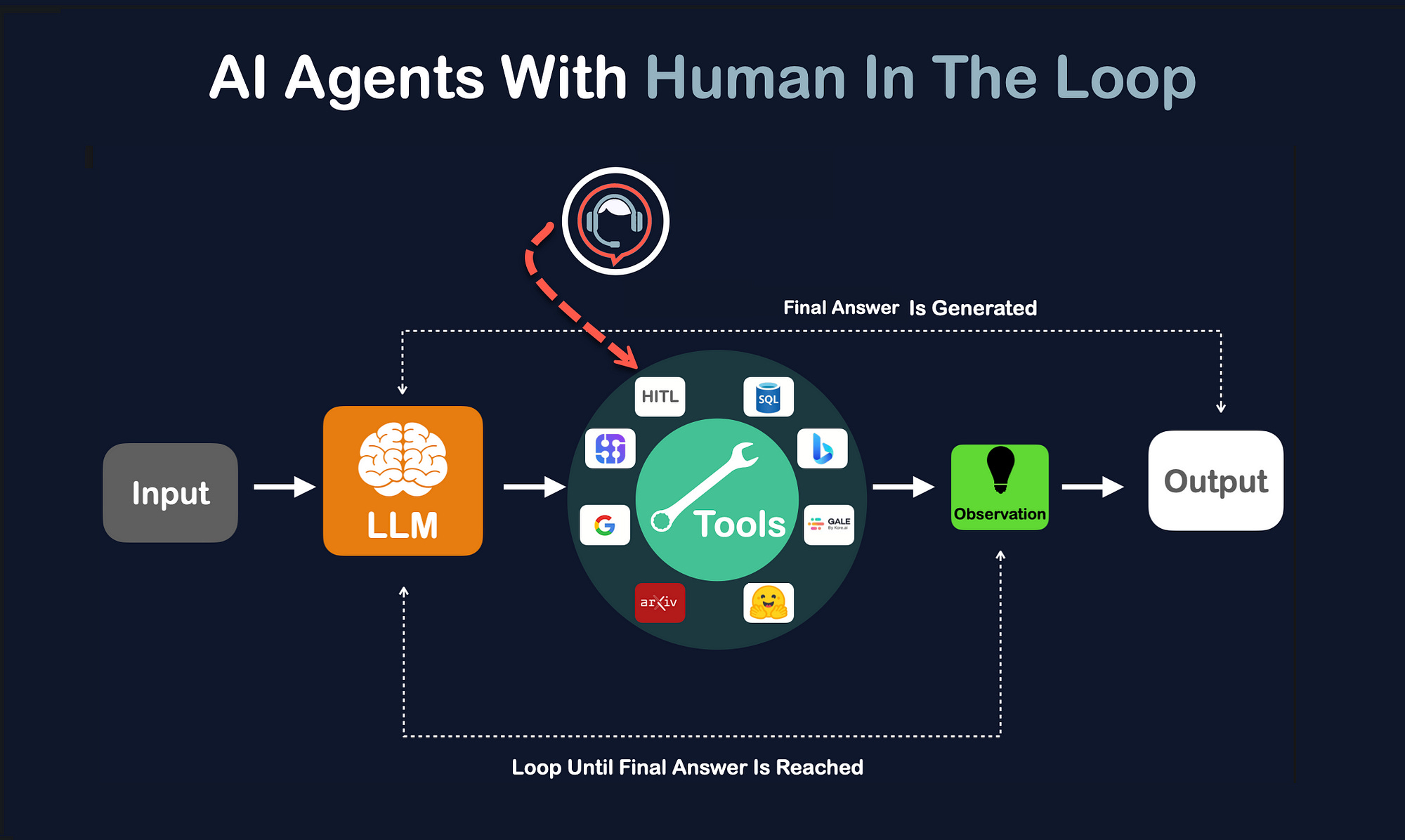

2. Agent-to-Agent Evaluation: Using a more capable "judge" AI model (like GPT-4 or Claude 3.5) to evaluate the performance of a lesser agent. This is faster and more scalable than human evaluation and can potentially follow the agent's reasoning trace. However, it introduces a new problem: you are using one black box to evaluate another, with all the attendant biases and failures of the judge model.

gentic.news Analysis

This critique cuts to the heart of a methodological crisis we've been tracking. As we reported in our analysis of Cognition Labs' Devin and the subsequent flurry of AI coding agents, the initial hype was often followed by scrutiny over unreproducible or poorly-defined evaluation metrics. Türe's argument explains why: demonstrating an agent's true capability is extraordinarily difficult.

This aligns with a trend we noted in our coverage of Google's Astra and other multimodal agents: a shift from demo-driven marketing to rigorous, automated benchmarking. The companies making the most credible progress—like DeepSeek with its R1 model's strong SWE-Bench results—are those embracing programmatic evaluation. The pressure is now on the entire research community to move beyond "human-in-the-loop" as the gold standard for agentic AI and to build a new generation of evaluation suites that are as complex and autonomous as the agents they are designed to test.

The entity relationship here is clear: the research methodology (human evaluation) is becoming a limiting factor for the advancement of the technology (AI agents). This creates a market opportunity for platforms that can provide robust, automated agent testing environments, a space where companies like Scale AI and Weights & Biases are already expanding their offerings.

Frequently Asked Questions

Why can't we just use better human evaluators?

The problem is not human skill but human nature and perception. Even a domain expert cannot mentally simulate the trillion-parameter, token-by-token decision-making of a large language model acting as an agent. They are evaluating an external output, not the internal process, which is where most of the interesting failures and learning opportunities occur.

Are automated benchmarks the ultimate solution?

They are a necessary step, but not a perfect one. Automated benchmarks (like unit tests) are excellent for measuring specific, predefined capabilities. However, they can be "gamed" by agents overfitted to the test set, and they are poor at measuring qualities like creativity, robustness to novel instructions, or general reasoning—areas where human judgment is still valuable, albeit flawed.

What does this mean for the near future of AI agent development?

Expect a period of fragmentation and debate over evaluation standards. Different research groups and companies will champion their own benchmarks. The field will likely converge on a hybrid approach: using automated benchmarks for rapid development and iteration, supplemented by targeted, high-quality human evaluation for nuanced tasks. The biggest advances will come from teams that build the most sophisticated and realistic digital testing environments.

Is this related to the "alignment" problem?

It's adjacent. The alignment problem asks, "How do we ensure AI systems do what we intend?" The evaluation bottleneck asks, "How do we even measure what the AI system did and whether it was correct?" You cannot solve alignment without first solving measurement. If we can't reliably evaluate an agent's actions in a controlled sandbox, we have no hope of evaluating its safety in the real world.