PJM data reveals AI infrastructure projects now spend more time waiting after interconnection approval than in the queue itself. Post-approval delays have stretched to 4 years for some projects, per PJM's latest interconnection queue report.

Key facts

- Post-approval delays average 3-4 years for AI data centers.

- PJM covers 65 million people across 13 states.

- Google's Texas facility for Anthropic faces extended timelines.

- Transformer lead times now 18-24 months.

- $700B AI CapEx pipeline threatened by grid bottlenecks.

The Post-Approval Bottleneck

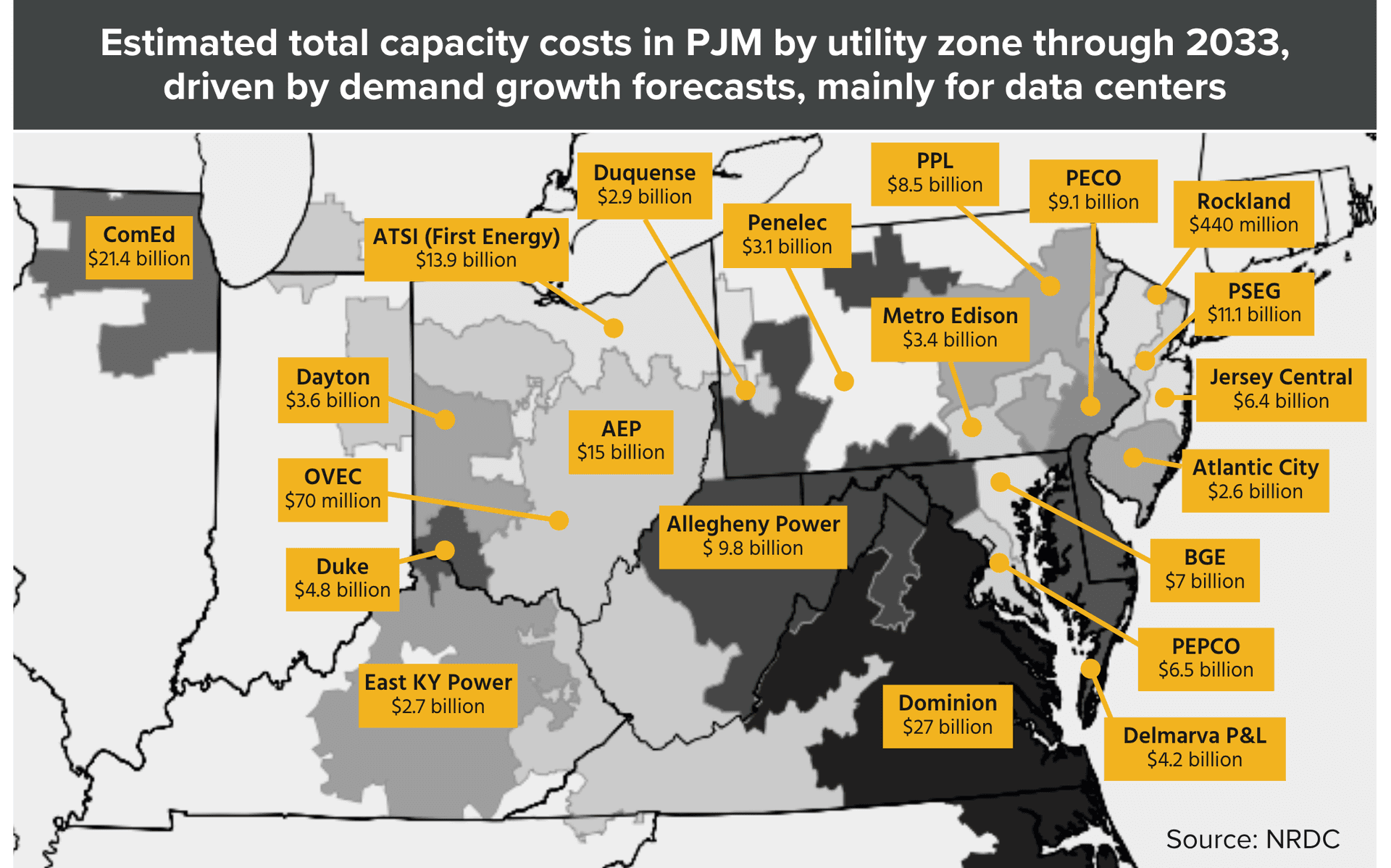

New data from PJM Interconnection, the grid operator covering 65 million people across 13 states, shows AI data center projects face an average of 3-4 years of delay after receiving interconnection approval. This post-approval wait now exceeds the time spent in the interconnection queue itself, according to Data Center Knowledge.

The bottleneck threatens the $700B AI CapEx pipeline announced by Google, Meta, and Microsoft. Google's $5B Texas facility for Anthropic is among the projects facing extended timelines, according to the Data Center Knowledge report. The data suggests capacity constraints, not just regulatory hurdles, are the primary driver.

Why This Matters for AI Buildout

The conventional narrative blames interconnection queue backlogs for slowing AI infrastructure. PJM's data flips this: the queue is no longer the binding constraint. The real bottleneck comes after approval, in transformer availability, construction labor, and lead times for grid interconnection equipment.

Meta's $60B+ AI spend in 2025 and Google's seven new data center projects identified in May 2026 both face this post-approval wall. As previously reported, the CNAS report from May 11, 2026 warned that chip supply trails CapEx; this PJM data shows the grid side is equally constrained.

What the Data Doesn't Say

PJM did not disclose specific project names or the exact percentage of AI-dedicated projects in the queue. The 3-4 year figure is an average; some projects may clear faster, while others may stall indefinitely. The source notes that grid interconnection equipment (transformers and switchgear) alone now carries 18-24 month lead times.

The Structural Risk

For AI labs planning 2027-2028 model training runs, this delay means power commitments made today won't materialize until 2030. Google, Meta, and Microsoft are already competing for the same transformer supply and construction crews. The PJM data suggests the AI infrastructure buildout is supply-constrained on the grid side, not demand-constrained.
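
The timeline arithmetic behind that claim can be sketched in a few lines. This is an illustration, not PJM's methodology: it assumes approval lands in 2026 (the year of the reports cited here) and applies the 3-4 year average post-approval delay. The function and constant names are hypothetical.

```python
# Assumption: interconnection approval is granted in 2026,
# the year of the reports cited in this article.
APPROVAL_YEAR = 2026

# Average post-approval delay range in years, from the PJM figures above.
DELAY_YEARS = (3, 4)


def energization_window(approval_year, delay_years):
    """Return the (earliest, latest) year that power could be delivered."""
    shortest, longest = delay_years
    return approval_year + shortest, approval_year + longest


earliest, latest = energization_window(APPROVAL_YEAR, DELAY_YEARS)
print(f"Power available between {earliest} and {latest}")
```

Under these assumptions the window runs from 2029 to 2030, past the 2027-2028 training runs, and the upper end matches the 2030 figure above.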

What to watch

Watch for PJM's Q3 2026 interconnection queue report and whether Google, Meta, or Microsoft announce alternative power strategies — on-site gas generation or nuclear co-location — to bypass grid delays. Also track transformer manufacturer lead times for signs of easing.