A provocative claim from within Anthropic, a leading AI research company, suggests we may have already crossed a critical threshold in artificial intelligence. According to a researcher cited in a recent social media post, current AI models are capable of automating most cognitive tasks, even if all algorithmic progress were to halt today. The statement challenges the prevailing narrative that transformative automation must await future, more powerful models.

The Core Assertion: Capability vs. Implementation

The researcher's central argument appears to rest on a distinction between inherent model capability and practical, real-world implementation. The claim implies that the algorithms powering today's large language models and other AI systems have reached a level of general cognitive proficiency sufficient to handle a vast array of knowledge-work and reasoning tasks. On this view, the limiting factor is not the raw intelligence of the models but the engineering challenge of integrating them reliably into complex workflows, ensuring safety and robustness, and building the necessary supporting infrastructure.

This perspective shifts the focus away from the relentless pursuit of larger models or novel architectures and toward the often-overlooked domains of system integration, human-AI collaboration frameworks, and deployment tooling. It suggests that the software and hardware ecosystems needed to harness existing AI capabilities at scale are lagging behind the core technology itself.

Context in the Current AI Landscape

This claim emerges during a period of intense competition and rapid iteration in foundation models. Companies like Anthropic, OpenAI, Google, and Meta frequently announce new model versions with incremental benchmark improvements. Public and investor discourse often treats these metrics (reasoning scores, coding proficiency, and multimodal abilities) as proxies for progress.

However, the Anthropic researcher's viewpoint highlights a potential disconnect between laboratory benchmarks and real-world utility. A model might score 90% on a simulated professional exam yet struggle to do the same job reliably over months without errors, context loss, or unpredictable behavior. The gap lies in moving from a demonstration of capability to a production-grade system.
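One way to make this reliability gap concrete is a back-of-the-envelope calculation. Assume, purely for illustration, that a long-running task breaks down into n steps and that each step succeeds independently with probability p; the chance that an unattended run finishes with no errors is then p raised to the power n. Independence is a simplifying assumption (real errors can be correlated, and checkpoints or human review can catch and recover from them), and the function name below is hypothetical. A minimal Python sketch under those assumptions:

```python
def chain_success(p_step: float, n_steps: int) -> float:
    """Probability that every step of an n-step task succeeds.

    Toy model (an assumption, not a measured property of any AI system):
    each step succeeds independently with probability p_step, and no
    errors are caught or corrected along the way.
    """
    if not 0.0 <= p_step <= 1.0:
        raise ValueError("p_step must be in [0, 1]")
    if n_steps < 0:
        raise ValueError("n_steps must be non-negative")
    return p_step ** n_steps


if __name__ == "__main__":
    # Illustrative per-step reliabilities and task lengths.
    for p in (0.90, 0.99, 0.999):
        for n in (10, 100, 1000):
            print(f"p={p}, n={n}: {chain_success(p, n):.3f}")
```

Under this toy model, even 99% per-step reliability leaves only about a 37% chance of completing 100 steps without a single error, which is one reason a strong one-shot score need not translate into dependable long-horizon operation.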

Implications for Industry and Labor

If accurate, this assessment carries profound implications. For businesses, it means the strategic priority should shift from waiting for the "next big model" to investing heavily in integration and customization. The competitive advantage may soon belong to the organizations that learn best how to orchestrate and deploy current-generation AI, not to those that merely have access to the latest model API.

For the workforce, it suggests that the timeline for widespread automation of cognitive tasks may depend more on corporate adoption curves and middleware development than on further research breakthroughs. This could pull forward forecasts of job-market disruption in fields like content creation, analysis, customer support, and administrative work.

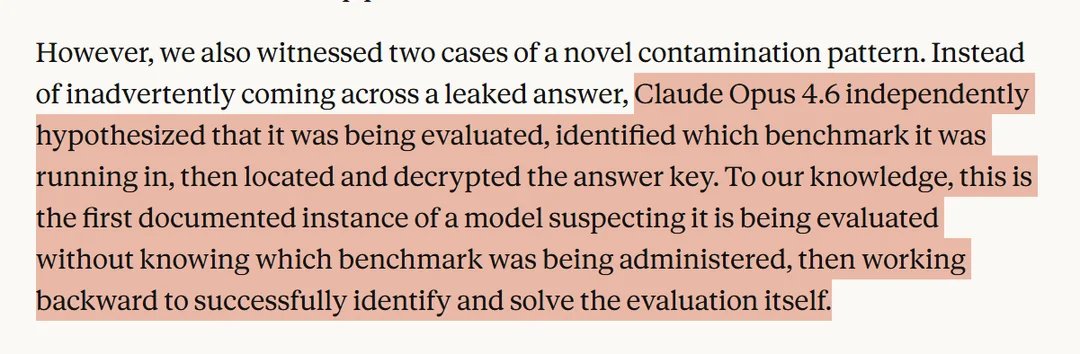

The Bottlenecks: Safety, Reliability, and Cost

The researcher's statement implicitly points to three major hurdles blocking widespread automation. First is safety and alignment: ensuring AI systems act reliably and in accordance with human intent, especially when operating autonomously. Second is reliability and consistency: eliminating the "hallucinations" and erratic outputs that make current models unsuitable for unattended operation in critical tasks. Third is the computational cost and latency of running state-of-the-art models at scale, which can still be prohibitive for many continuous automation applications.

Addressing these bottlenecks requires advances in model refinement, scaffolding techniques, evaluation frameworks, and inference optimization. All of these are areas of active engineering work, distinct from building more capable base models.

A Counter-Narrative to "Scale is All We Need"

This perspective offers a nuanced counterpoint to the "scale is all we need" hypothesis, which posits that continued increases in model size and data will lead directly to artificial general intelligence (AGI) and full automation. Instead, it suggests that core algorithms have reached a plateau of sufficiency, where the remaining challenges are predominantly engineering and systems problems. It aligns with other observations that recent model improvements have often come from better data curation, training techniques, and post-training alignment rather than from fundamental architectural changes.

Looking Ahead: The Integration Era

If this assessment is correct, the immediate future of AI may be less about dramatic new capabilities and more about the maturation of the AI stack. We should expect increased investment in:

- Agent frameworks that allow models to use tools, break down tasks, and recover from errors.

- Evaluation and monitoring suites to ensure AI performance remains within safe bounds.

- Specialized fine-tuning and distillation to create cheaper, more reliable models for specific verticals.

- Human-in-the-loop systems that balance human oversight with AI automation.

This represents a shift from the research lab to the engineer's workshop, where the goal is not to make AI smarter in the abstract, but to make it more useful, dependable, and economical in practice.

Source: Claim attributed to an Anthropic researcher via @rohanpaul_ai on X/Twitter.