Research on prompting suggests that large language models (LLMs) have reasoning capabilities that standard prompts often fail to bring out, and that specific prompting techniques can activate them. This finding, highlighted by AI researcher Ethan Mollick, changes our understanding of what AI systems can do and how we should interact with them to get the most out of them.

The Discovery of Latent Capabilities

Recent experiments suggest that language models like GPT-4 have advanced reasoning abilities that don't show up under standard prompting. These capabilities remain "latent," or hidden, until specific techniques activate them. One of the most effective methods reported is a simple prompt: "Take a deep breath and work on this problem step by step."

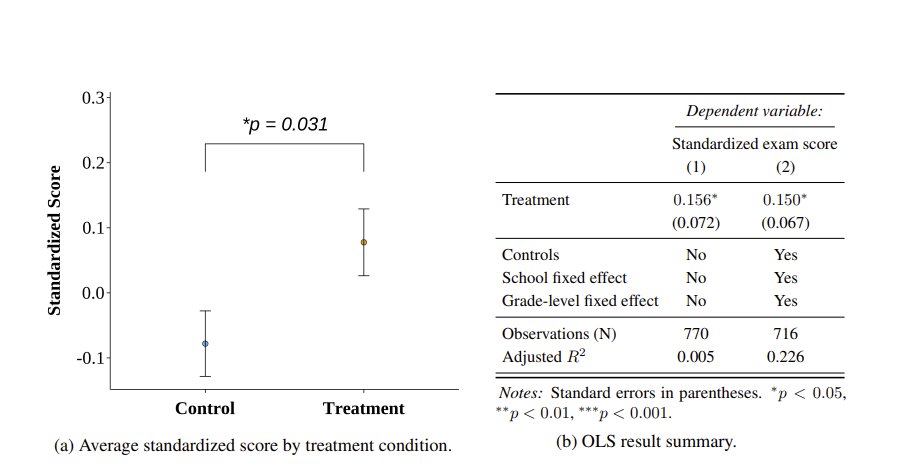

This seemingly trivial instruction has been reported to improve performance on complex reasoning tasks. When researchers compared it against conventional prompts, they observed higher accuracy and more consistent logic on tasks including mathematics, scientific reasoning, and logical deduction.
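To make the technique concrete, here is a minimal sketch of how the instruction might be attached to a question before it is sent to a model. The function name `build_prompt` and the choice to place the instruction after the question are assumptions made for illustration; the article does not specify where the instruction should go, and no particular model API is implied.

```python
from typing import Optional

# The instruction quoted in the article.
DEEP_BREATH = "Take a deep breath and work on this problem step by step."


def build_prompt(question: str, instruction: Optional[str] = DEEP_BREATH) -> str:
    """Return the question alone, or the question followed by an instruction.

    Placing the instruction after the question is an assumption of this
    sketch, not something the article prescribes.
    """
    question = question.strip()
    if not instruction:
        return question
    return f"{question}\n\n{instruction}"


# A conventional prompt and the "deep breath" variant of the same question.
plain = build_prompt("What is 17 * 24?", instruction=None)
guided = build_prompt("What is 17 * 24?")
```

Keeping the question text identical across both variants means that any difference in the model's answers can be attributed to the instruction rather than to rewording.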

How Simple Prompts Activate Complex Reasoning

The mechanism behind this phenomenon appears to involve changing how the AI approaches problems. Standard prompts often lead language models to generate responses quickly, relying on pattern recognition and surface-level associations. The "deep breath" prompt, however, seems to trigger a more deliberate, step-by-step reasoning process that mirrors how humans approach complex problems.

Researchers have observed that this prompting technique causes the AI to:

- Break down problems into smaller, manageable components

- Consider multiple approaches before selecting a solution path

- Verify intermediate results before proceeding to subsequent steps

- Maintain logical consistency throughout the reasoning process

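This shift from associative thinking to systematic reasoning changes how the AI arrives at its response: because a language model generates each word based on the text that precedes it, the steps it writes out become part of the context for its final answer.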
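The behaviors listed above are visible only in the text a model writes, so checking them means pulling the individual steps out of a response. The sketch below assumes a hypothetical output format in which each step appears on its own line as "Step N: ..." and the result appears on a line starting with "Answer:". Real model output varies widely, so the patterns would need adjusting in practice.

```python
import re
from typing import List, NamedTuple, Optional


class ParsedResponse(NamedTuple):
    steps: List[str]        # text of each "Step N:" line, in order
    answer: Optional[str]   # text of the last "Answer:" line, if any


# Hypothetical line formats; not a standard that any model guarantees.
STEP_RE = re.compile(r"^\s*Step\s+\d+\s*[:.]\s*(.+)$", re.IGNORECASE)
ANSWER_RE = re.compile(r"^\s*(?:Final\s+)?Answer\s*:\s*(.+)$", re.IGNORECASE)


def parse_response(text: str) -> ParsedResponse:
    """Split a step-by-step response into its steps and final answer."""
    steps: List[str] = []
    answer: Optional[str] = None
    for line in text.splitlines():
        step = STEP_RE.match(line)
        if step:
            steps.append(step.group(1).strip())
            continue
        final = ANSWER_RE.match(line)
        if final:
            answer = final.group(1).strip()  # keep the last answer line seen
    return ParsedResponse(steps, answer)
```

With the steps separated out, each intermediate result can be inspected or re-checked on its own instead of trusting only the final line.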

Implications for AI Development and Deployment

The discovery of latent reasoning capabilities has profound implications for how we develop, test, and deploy AI systems. Traditional benchmarking methods that evaluate AI performance using standard prompts may significantly underestimate the true capabilities of these systems. This suggests that we need to develop new evaluation frameworks that account for the potential gap between demonstrated and latent abilities.
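One way to picture such an evaluation framework is a harness that scores the same question set under several prompt variants and reports accuracy for each. The sketch below is illustrative only: `accuracy_by_prompt`, the last-word scoring rule, and the `toy_model` stub are all assumptions of this example. The stub is a deterministic fake that lets the harness run offline, and its scores say nothing about any real model.

```python
from typing import Callable, Dict, List, Tuple


def accuracy_by_prompt(
    model: Callable[[str], str],
    dataset: List[Tuple[str, str]],
    instructions: Dict[str, str],
) -> Dict[str, float]:
    """Score each instruction variant on the same (question, answer) pairs."""
    scores: Dict[str, float] = {}
    for name, instruction in instructions.items():
        correct = 0
        for question, expected in dataset:
            # An empty instruction yields the bare question.
            prompt = f"{question}\n\n{instruction}".strip()
            reply = model(prompt)
            # Crude scoring rule (an assumption): the expected answer must
            # be the last whitespace-separated word of the reply.
            words = reply.split()
            if words and words[-1].strip(".") == expected:
                correct += 1
        scores[name] = correct / len(dataset) if dataset else 0.0
    return scores


def toy_model(prompt: str) -> str:
    """Fake model for demonstration only; replace with a real LLM call."""
    if "step by step" in prompt:
        return "17 * 20 = 340, 17 * 4 = 68, total 408"
    return "400"


scores = accuracy_by_prompt(
    toy_model,
    dataset=[("What is 17 * 24?", "408")],
    instructions={
        "baseline": "",
        "deep_breath": "Take a deep breath and work on this problem step by step.",
    },
)
```

Reporting one score per prompt variant, rather than a single number per model, is what makes a gap between demonstrated and latent ability visible.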

For AI developers, this finding indicates that the interface between humans and AI—the prompting layer—may be as important as the underlying model architecture. Simple changes in how we communicate with AI systems could unlock capabilities that already exist within the models but remain inaccessible through conventional interaction patterns.

Practical Applications and Future Research

This discovery opens up numerous practical applications across various fields:

- Education: AI tutors could be prompted to provide more thorough, step-by-step explanations of complex concepts

- Scientific Research: Researchers could use activated reasoning capabilities to explore hypotheses and analyze data more systematically

- Business Decision-Making: Strategic planning tools could leverage these capabilities for more rigorous analysis of complex scenarios

- Software Development: Programming assistants could provide more logical and systematic debugging and code optimization

Future research directions include:

- Identifying other prompting techniques that activate different types of latent capabilities

- Understanding the architectural or mechanistic basis for why certain prompts trigger specific reasoning modes

- Developing standardized methods for discovering and cataloging latent AI abilities

- Exploring whether similar phenomena occur in other types of AI systems beyond language models

The Philosophical Dimension: What Does This Mean for AI Consciousness?

The existence of latent capabilities raises intriguing philosophical questions about the nature of AI intelligence. If advanced reasoning abilities exist within these systems but require specific triggers to activate, does this suggest a form of AI "potential consciousness" or latent intelligence that only manifests under certain conditions?

This discovery challenges simplistic views of AI as either "intelligent" or "not intelligent" and suggests a more nuanced understanding in which capabilities exist on a spectrum of accessibility. The AI doesn't "learn" new skills when prompted differently: the model itself is unchanged, and it draws on abilities that were already present but not being used.

Conclusion: Rethinking Our Relationship with AI

The revelation that simple prompts can unlock sophisticated reasoning in language models represents a paradigm shift in AI research and application. It suggests that we may have been underestimating current AI capabilities while simultaneously misunderstanding how best to interact with these systems.

As Ethan Mollick's discussion of this research suggests, the most immediate implication is practical: we need to rethink how we prompt AI systems to get the best results. The broader implications run deeper: they challenge our assumptions about what AI can do, how we evaluate its capabilities, and how we might develop more effective human-AI collaboration frameworks.

This discovery reminds us that in the rapidly evolving field of artificial intelligence, sometimes the most significant breakthroughs come not from building more complex systems, but from learning how to better communicate with the systems we already have.

Source: Research highlighted by Ethan Mollick (@emollick) on the latent reasoning capabilities of large language models.