AMD's Director of AI, Stella Laurenzo, has presented internal analysis claiming that Anthropic's Claude Code has declined significantly in quality since early March. The claims are based on aggregated data from more than 6,800 engineering sessions and 234,000 individual tool calls within AMD's development workflows, where Claude Code is reportedly exhibiting more frequent "laziness" behaviors.

Key Takeaways

- An AMD AI executive presented data from over 6,800 sessions showing Claude Code's performance has declined since early March, with rising instances of shallow reasoning and incomplete tasks.

- This raises significant trust issues for engineers using the model in complex development workflows.

What the Data Shows

According to Laurenzo's analysis, the observed decline manifests in several specific patterns that deviate from the model's earlier performance:

- Shallow Reasoning: The model is more frequently producing surface-level code fixes without engaging in the deeper, multi-step problem-solving required for complex bugs or architectural changes.

- Skipped Code Review: In situations where the model should review a code block comprehensively or propose optimizations, it offers minimal or no feedback.

- Incomplete Tasks: A rise in outputs where the model provides a partial solution, prematurely declares a task complete, or fails to follow through on the full scope of a developer's prompt.

Laurenzo stated that the net effect is a model that now "favors quick, incorrect fixes over deep problem-solving." For engineering teams at AMD that have integrated Claude Code into their daily workflows, this perceived degradation is directly impacting productivity and raising trust issues. When a model is expected to assist with complex, mission-critical code, a shift toward less reliable outputs forces engineers to spend more time verifying and correcting its work, negating its efficiency benefits.

Context and Impact

This report from a major semiconductor company's AI leadership is notable for its specificity. Rather than anecdotal user complaints, it cites a large-scale internal dataset of tool calls. AMD, as a leader in high-performance computing and a key player in the AI hardware race, has a vested interest in the reliability of AI coding assistants for both internal use and for the ecosystem of developers building on its platforms.

If the claims are accurate, they point to a potential challenge in maintaining consistent model performance post-deployment. Model "drift" or regression can occur due to various factors, including unintended consequences of subsequent fine-tuning, changes in the underlying model serving infrastructure, or interactions with newly introduced system prompts or tooling.

For developers and companies relying on Claude Code, this report serves as a critical data point. It underscores the importance of continuous monitoring and evaluation of AI tool outputs in production environments, rather than assuming static performance based on initial benchmarks.
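As a rough illustration of what such monitoring could look like, the sketch below tracks a weekly task-completion rate and flags weeks that fall noticeably below an early baseline. It is not AMD's methodology, which the report does not describe in detail. The `Session` record, its fields, the two-week baseline, and the 10-point tolerance are all hypothetical choices made for this example.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Session:
    """Hypothetical log record for one assistant session."""
    week: int             # week index assigned by your own logging
    task_completed: bool  # did a reviewer judge the task fully done?


def completion_rate_by_week(sessions):
    """Return {week: fraction of sessions judged complete}."""
    by_week = {}
    for s in sessions:
        by_week.setdefault(s.week, []).append(1.0 if s.task_completed else 0.0)
    return {week: mean(vals) for week, vals in sorted(by_week.items())}


def flag_regressions(rates, baseline_weeks=2, tolerance=0.10):
    """Return weeks whose rate is more than `tolerance` below the baseline.

    The baseline is the mean rate over the first `baseline_weeks` weeks.
    Both parameters are illustrative defaults, not recommended values.
    """
    weeks = sorted(rates)
    baseline = mean(rates[w] for w in weeks[:baseline_weeks])
    return [w for w in weeks[baseline_weeks:] if rates[w] < baseline - tolerance]


# Small made-up dataset, for illustration only.
sessions = (
    [Session(1, True)] * 4
    + [Session(2, True)] * 3 + [Session(2, False)]
    + [Session(3, True)] * 2 + [Session(3, False)] * 2
)
rates = completion_rate_by_week(sessions)
flagged = flag_regressions(rates)
```

In practice the hard part is the label itself: deciding whether a task was truly completed usually needs human review or an automated test suite, and a simple threshold like this one ignores sample size and normal week-to-week variation.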

gentic.news Analysis

This report from AMD's AI director intersects several critical trends we've been tracking. First, it highlights the growing enterprise dependence on AI coding assistants not just for prototyping but for integrated, complex workflows—a shift we detailed in our analysis of GitHub Copilot Workspace adoption patterns. When a model regresses in such an environment, the operational impact is immediate and quantifiable.

Second, it adds a significant new voice to the ongoing conversation about model "laziness" and behavioral drift post-launch. While user complaints about ChatGPT and other models becoming less helpful have circulated for months, Laurenzo's claims are among the first to be presented with a substantial internal dataset from a major corporation. This moves the discussion from forum speculation to a tangible engineering and product management concern for AI providers like Anthropic.

The relationship between AMD and Anthropic is also key context here. Anthropic has selected AMD's MI300X accelerators for critical parts of its AI infrastructure, as we reported last quarter. This partnership makes AMD both a key hardware supplier and a major enterprise user of Anthropic's models. Laurenzo's public commentary suggests that AMD's internal experience as a user is currently not aligning with its strategic partnership goals, creating a potentially delicate situation. It applies direct pressure on Anthropic to diagnose and address the issue, as a core enterprise partner is signaling a loss of confidence.

Finally, this development occurs within a fiercely competitive AI coding assistant market. Any perceived weakness in a leading product like Claude Code creates an immediate opportunity for rivals like GitHub Copilot, Google's Gemini Code Assist, or Amazon Q Developer (formerly CodeWhisperer) to gain ground by emphasizing consistency and reliability. We may see increased marketing around "stable" or "predictable" performance as a differentiator.

Frequently Asked Questions

What is "model laziness" in AI coding assistants?

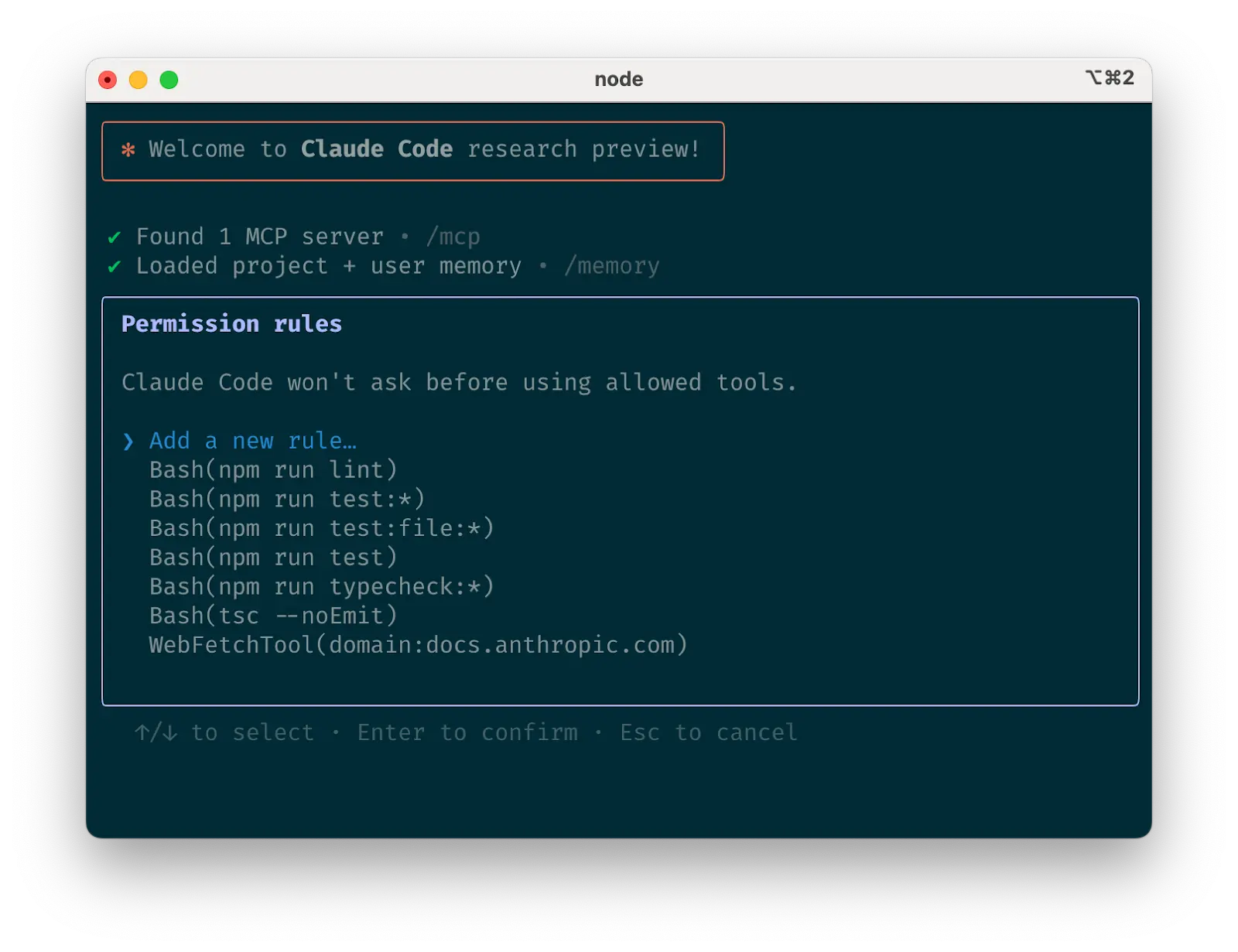

"Model laziness" is an informal term describing observed behaviors where an AI assistant provides superficial, incomplete, or shortcut answers instead of engaging in the thorough, step-by-step reasoning required for a complex task. In coding, this can mean suggesting a quick syntax fix that doesn't address the underlying logic error, refusing to write necessary boilerplate code, or providing a function stub without the full implementation.

How could a model like Claude Code get worse over time?

Several technical factors could contribute to perceived degradation. The provider (Anthropic) may implement fine-tuning to improve performance in one area (e.g., speed) that inadvertently reduces quality in another (e.g., thoroughness). Changes to the model's system prompt or the "chain-of-thought" reasoning parameters could alter its output style. Furthermore, updates to the underlying model serving infrastructure or routing layers might unintentionally change how prompts are processed.

Should I stop using Claude Code based on this report?

Not necessarily. This is a single, albeit significant, report from one organization's specific usage patterns. Your experience may vary. However, it is a strong reminder to actively monitor the outputs of any AI tool you integrate into critical workflows. Implement your own quality checks and have a process for validating complex code suggestions, regardless of the assistant you use.
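One simple form such a quality check could take is running every suggested function against a set of known input/output cases before accepting it. The sketch below assumes the suggestion is available as a Python callable. The `validate_suggestion` helper, the two `clamp` variants, and the test cases are hypothetical and exist only to illustrate the idea.

```python
def validate_suggestion(candidate, cases):
    """Run `candidate` on each (args, expected) pair.

    Returns a list of failure descriptions; an empty list means
    every case passed.
    """
    failures = []
    for args, expected in cases:
        try:
            actual = candidate(*args)
        except Exception as exc:
            failures.append(f"{args!r} raised {type(exc).__name__}")
            continue
        if actual != expected:
            failures.append(f"{args!r} returned {actual!r}, expected {expected!r}")
    return failures


def clamp_quick_fix(value, low, high):
    # Hypothetical "shallow" suggestion: handles the upper bound only.
    return min(value, high)


def clamp_full(value, low, high):
    # Hypothetical complete suggestion: handles both bounds.
    return max(low, min(value, high))


# Each case is (arguments, expected result).
CASES = [((5, 0, 10), 5), ((15, 0, 10), 10), ((-3, 0, 10), 0)]

quick_fix_failures = validate_suggestion(clamp_quick_fix, CASES)
full_failures = validate_suggestion(clamp_full, CASES)
```

Here the quick fix passes two of the three cases and fails only on the input below the lower bound, which is the kind of partial solution a casual read-through can miss. A check like this only catches what the cases cover, so it complements human review rather than replacing it.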

Has Anthropic responded to these claims?

At the time of reporting, Anthropic had made no public statement addressing AMD's specific claims. It is common for AI companies to investigate reports of performance changes before commenting publicly. The situation warrants watching for an official response or a model update note from Anthropic.