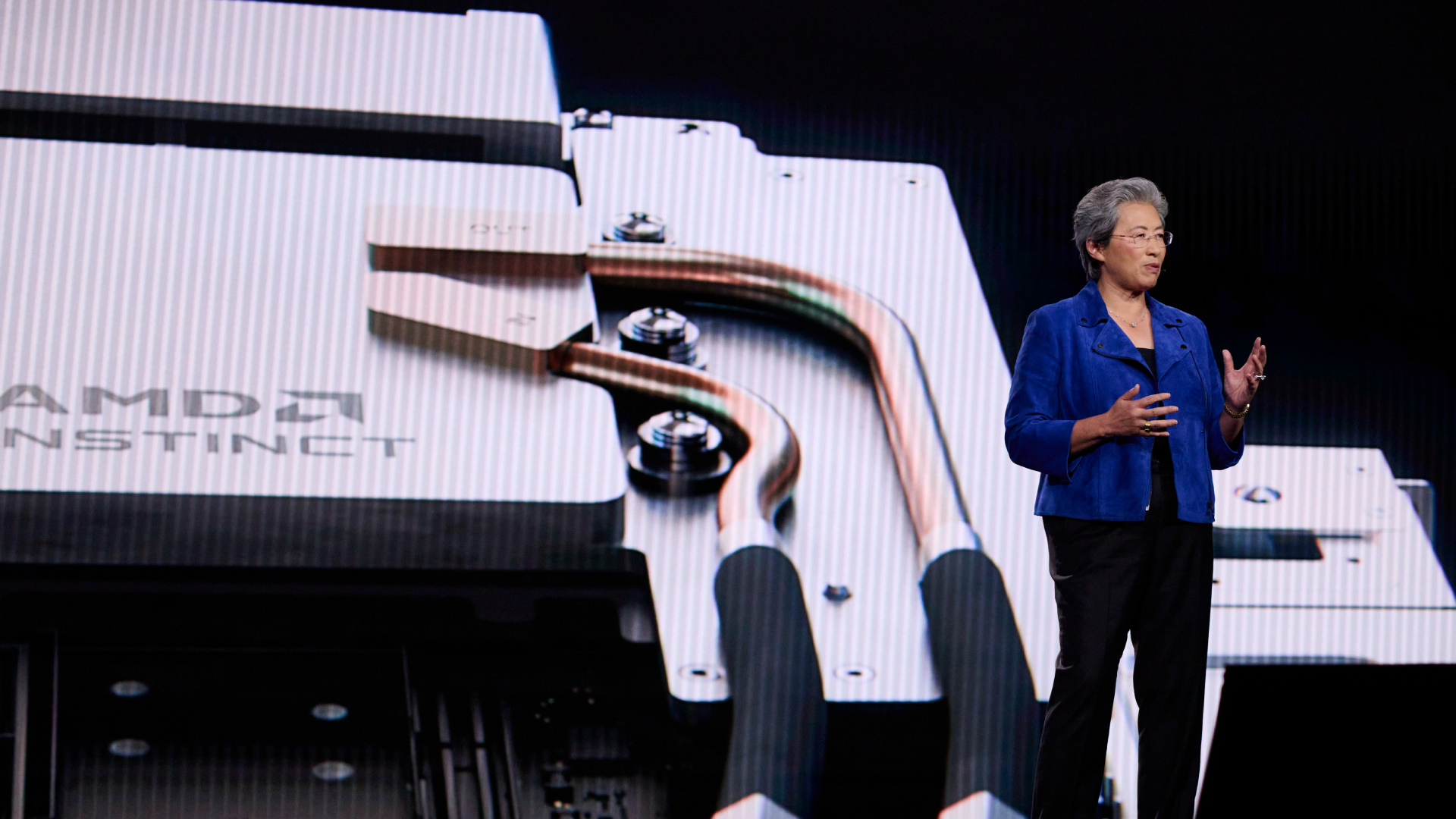

AMD launched the MI350P PCIe AI accelerator at CES 2026, claiming 39% higher theoretical FP8 compute than Nvidia's H200 NVL. The 600W dual-slot card packs 144GB of HBM3E and targets drop-in upgrades for existing air-cooled servers.

Key facts

- MI350P: 8,192 cores, 128 CUs, 144GB HBM3E, 4TB/s bandwidth.

- AMD claims 39% higher theoretical FP8 compute than Nvidia's H200 NVL.

- Up to 600W TDP (configurable down to 450W), fanless dual-slot design, drop-in for air-cooled servers.

- Built on TSMC 3nm/6nm, CDNA4 architecture.

- Nvidia has not announced a PCIe version of its B200 Blackwell GPU.

AMD's new Instinct MI350P is a PCIe Gen5 AI accelerator built on the CDNA4 architecture and fabricated on TSMC's 3nm and 6nm FinFET processes. It has 8,192 cores, 128 Compute Units, and 512 Matrix Cores, with a maximum clock of 2.2 GHz. Memory consists of 144GB of HBM3E with 4TB/s of bandwidth, backed by a 128MB last-level cache. The card natively supports MXFP6 and MXFP4 precision to accelerate LLM inference [per Tom's Hardware].

The Drop-In Advantage

The MI350P is explicitly designed as a drop-in upgrade for existing air-cooled servers. The 10.5-inch, dual-slot card is fanless and relies on chassis fans for airflow, and its TDP is configurable between 450W and 600W. Up to eight cards can be installed in a single system, targeting inference and retrieval-augmented generation (RAG) workloads from small to large [according to the source].
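To make the eight-card configuration concrete, the sketch below multiplies out the per-card figures quoted in this article. The `CardSpec` class and `system_totals` helper are hypothetical names introduced for illustration, and the totals are simple sums: they cover the accelerators only, not the host CPUs, fans, or power-supply losses, and the summed bandwidth is the total across separate memory pools rather than one shared pool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CardSpec:
    """Per-card figures as quoted in the article (illustrative container)."""
    memory_gb: int
    bandwidth_tb_s: float
    tdp_min_w: int
    tdp_max_w: int


# Values taken from the article text; nothing here is measured.
MI350P = CardSpec(memory_gb=144, bandwidth_tb_s=4.0, tdp_min_w=450, tdp_max_w=600)
MAX_CARDS = 8  # the article cites up to eight cards per system


def system_totals(card: CardSpec, count: int) -> dict:
    """Sum per-card figures over `count` cards (accelerators only)."""
    if not 1 <= count <= MAX_CARDS:
        raise ValueError(f"count must be between 1 and {MAX_CARDS}")
    return {
        "memory_gb": card.memory_gb * count,
        "bandwidth_tb_s": card.bandwidth_tb_s * count,
        "power_min_w": card.tdp_min_w * count,
        "power_max_w": card.tdp_max_w * count,
    }


totals = system_totals(MI350P, MAX_CARDS)
for key, value in totals.items():
    print(f"{key}: {value}")
```

On these figures, a full eight-card system would carry 1,152GB of HBM3E and draw between 3,600W and 4,800W for the accelerators alone, which is the budget an existing air-cooled chassis would have to absorb.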

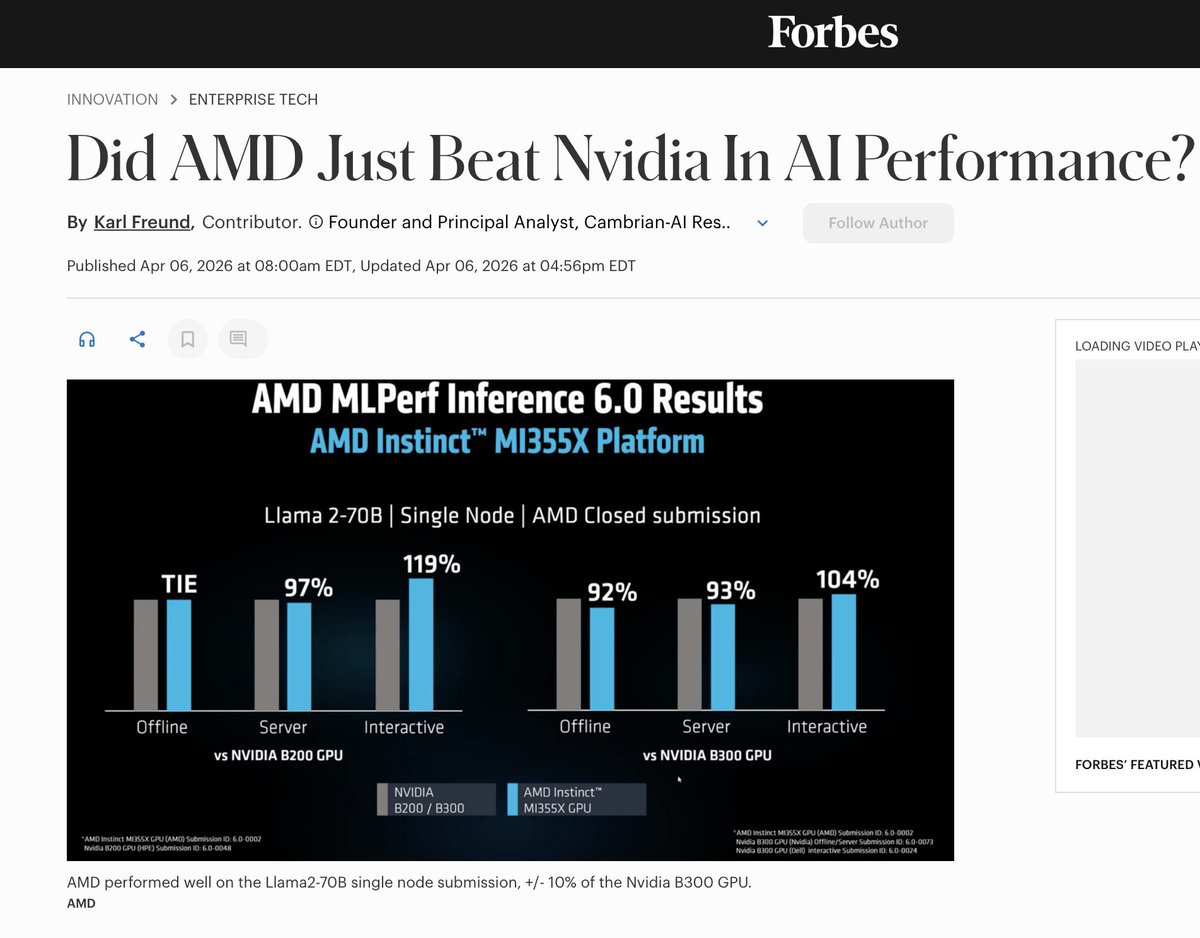

Benchmark Positioning

AMD claims the MI350P delivers 2,299 TFLOPS at FP16 and a peak of 4,600 TFLOPS at MXFP4. Compared with Nvidia's H200 NVL, AMD says the MI350P offers 20% higher FP64, 43% higher FP16, and 39% higher FP8 theoretical compute. The card's compute-unit count and memory capacity are exactly half those of AMD's flagship MI355X, which has 256 CUs and 288GB of HBM3E [per the company's blog post].
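The relationships between these figures can be checked with a few lines of arithmetic. The sketch below takes the numbers quoted in this article at face value; the variable names are illustrative, and none of the results are independent measurements.

```python
# Figures as quoted in the article; names are illustrative only.
mi350p = {"compute_units": 128, "hbm3e_gb": 144}
mi355x = {"compute_units": 256, "hbm3e_gb": 288}

# The "exactly half" comparison: each ratio should come out to 0.5.
spec_ratios = {name: mi350p[name] / mi355x[name] for name in mi350p}

# Ratio of the quoted MXFP4 peak to the quoted FP16 figure.
fp16_tflops = 2299
mxfp4_tflops = 4600
mxfp4_over_fp16 = mxfp4_tflops / fp16_tflops

# Per-Compute-Unit breakdown of the quoted core counts.
cores, matrix_cores = 8192, 512
cores_per_cu = cores // mi350p["compute_units"]
matrix_cores_per_cu = matrix_cores // mi350p["compute_units"]

print(spec_ratios)
print(f"MXFP4 / FP16: {mxfp4_over_fp16:.2f}x")
print(f"cores per CU: {cores_per_cu}, matrix cores per CU: {matrix_cores_per_cu}")
```

On these numbers, the quoted MXFP4 peak is about twice the quoted FP16 figure, and each Compute Unit accounts for 64 cores and 4 Matrix Cores.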

The Unique Take: PCIe as a Strategic Wedge

Nvidia has not announced a PCIe version of its B200 Blackwell GPUs, leaving the PCIe AI accelerator segment open. AMD's MI350P fills that gap with a newer architecture and competitive memory capacity. The strategic play is not just raw FLOPS but compatibility: data centers can swap H200 NVL cards for MI350Ps without re-engineering server racks or cooling. If ROCm software maturity has improved enough, as AMD claimed at CES, this could be the first credible enterprise PCIe alternative to Nvidia in years.

Competitive Landscape

Nvidia's H200 NVL remains the incumbent PCIe AI accelerator, but it uses the older Hopper architecture. Nvidia's Blackwell generation (B200) is available only as SXM modules and in rack-scale NVL systems, not as a PCIe card. AMD's MI350P thus claims the "fastest enterprise PCIe card" title by default in its segment, though Nvidia could respond with a Blackwell PCIe variant [per Tom's Hardware]. AMD competes with Nvidia across the broader AI accelerator market, as noted in our coverage of the inference shift opening doors for chip startups.

What to watch

Watch for Nvidia's response: a potential Blackwell PCIe variant (e.g., B200 PCIe) could undercut AMD's advantage. Also track ROCm adoption benchmarks in enterprise inference deployments over the next two quarters, because software maturity will determine whether the MI350P gains real market share.