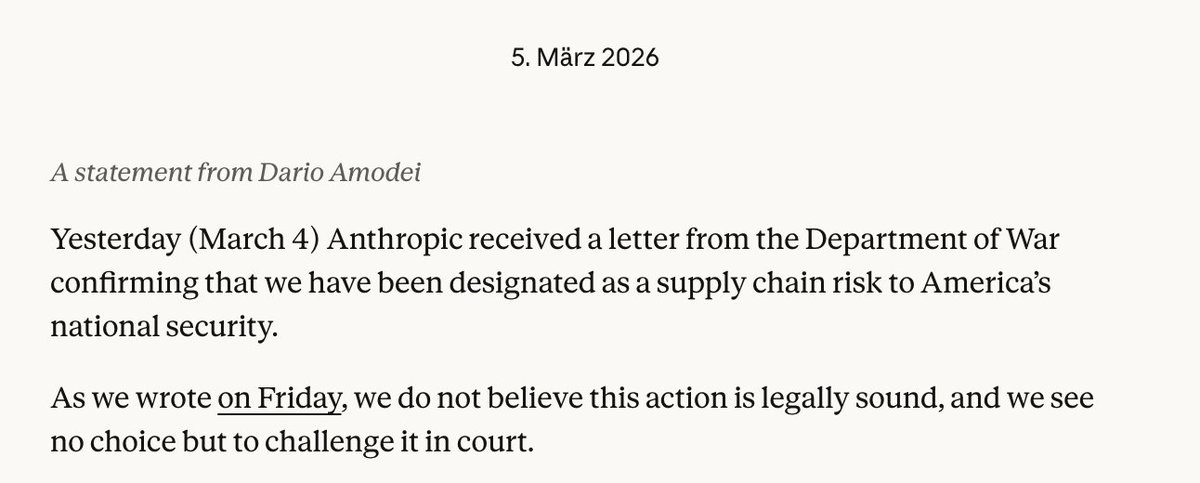

In a public statement that has sent shockwaves through the artificial intelligence and national security communities, Anthropic CEO Dario Amodei has revealed that his company is refusing a Department of Defense demand to remove critical safety guardrails from its Claude AI models for military applications. The Pentagon had threatened to cancel a $200 million contract and label Anthropic a "supply chain risk" unless the company removed its restrictions on the use of Claude for mass surveillance and autonomous weapons.

The Ultimatum and Response

According to Amodei's statement published on Anthropic's official blog and referenced in multiple reports, Defense Secretary Pete Hegseth issued an ultimatum requiring the AI company to eliminate specific safeguards that currently prevent Claude from being used for certain military applications. The two key restrictions at issue involve mass surveillance operations and the deployment of autonomous weapons systems.

"Our strong preference is to continue to serve the Department and our warfighters — with our two requested safeguards in place," Amodei wrote. "We remain ready to continue our work to support the national security of the United States."

This confrontation represents a significant escalation in the ongoing tension between AI safety principles and national security imperatives. Anthropic, founded by former OpenAI researchers who left over safety concerns, has positioned itself as one of the most safety-conscious AI companies in the industry.

Anthropic's Established Government Partnerships

Amodei's statement provides important context about Anthropic's existing relationship with U.S. national security agencies. According to the statement, the company's record in government AI deployment includes being:

- The first frontier AI company to deploy models in the U.S. government's classified networks

- The first to deploy models at National Laboratories

- The first to provide custom models for national security customers

- Extensively deployed across the Department of Defense for mission-critical applications including intelligence analysis, modeling and simulation, operational planning, and cyber operations

"I believe deeply in the existential importance of using AI to defend the United States and other democracies, and to defeat our autocratic adversaries," Amodei emphasized, underscoring that this is not a case of a company refusing to work with the military entirely.

The Broader Context of AI and National Security

This confrontation occurs against a backdrop of intense geopolitical competition in artificial intelligence. Amodei noted that Anthropic has already taken significant steps that "defend America's lead in AI, even when it is against the company's short-term interest." These include:

- Forgoing several hundred million dollars in revenue by cutting off access to firms linked to the Chinese Communist Party

- Shutting down CCP-sponsored cyberattacks attempting to abuse Claude

- Advocating for policies that maintain U.S. technological advantage

The company has specifically restricted access for entities designated by the Department of Defense as Chinese Military Companies, demonstrating its commitment to national security priorities while maintaining ethical boundaries.

The Core Ethical Dilemma

The current standoff centers on what Amodei describes as an inability to comply "in good conscience" with the Pentagon's demands. The specific safeguards in question appear to represent what Anthropic considers fundamental ethical boundaries for AI deployment.

Mass surveillance capabilities raise significant civil liberties concerns, particularly regarding privacy rights and potential abuses of power. Autonomous weapons systems, often called "killer robots" by critics, represent one of the most controversial applications of AI technology, with many experts and organizations calling for international bans or strict regulations.

Anthropic's refusal suggests that the company views these restrictions as non-negotiable components of its Constitutional AI approach, which emphasizes building AI systems that are helpful, harmless, and honest through training methodologies that instill these values at a fundamental level.

Industry Implications and Precedent

This public confrontation sets a significant precedent for the AI industry. Other major AI companies, including OpenAI and Google DeepMind, as well as defense contractors developing AI capabilities, will be watching closely to see how the situation is resolved.

The Pentagon's threat to label Anthropic a "supply chain risk" could have serious consequences beyond the immediate contract, potentially affecting the company's ability to work with other government agencies or even certain private sector clients who require security clearances.

The Response and Potential Outcomes

In response to Anthropic's statement, U.S. Under Secretary of Defense Emil Michael has publicly criticized Amodei's position, though the full details of the Pentagon's response continue to emerge. The situation represents a rare public airing of what are typically confidential negotiations between technology providers and defense agencies.

Several outcomes are possible:

- Compromise Solution: The Pentagon might accept modified safeguards or agree to specific use-case limitations rather than complete removal of restrictions.

- Contract Cancellation: The Department of Defense could follow through on its threat, canceling the $200 million contract and potentially seeking alternatives from less restrictive providers.

- Policy Intervention: Congressional or executive branch intervention could establish clearer guidelines for AI ethics in military applications.

- Industry-Wide Impact: Other AI companies might be pressured to take similar stands or might fill the gap left by Anthropic's restrictions.

The Larger Philosophical Divide

This confrontation highlights a fundamental philosophical divide in the AI community. On one side are those who believe AI development should proceed with minimal restrictions for the sake of strategic advantage. On the other are those who advocate strict ethical boundaries, particularly in military contexts.

Amodei's statement references this tension directly, noting that Anthropic has chosen to prioritize certain ethical principles even when doing so conflicts with short-term business interests. This position aligns with the company's broader mission to develop AI systems that are safe and aligned with human values.

The situation also raises questions about the appropriate role of private companies in establishing ethical boundaries for technologies with national security implications, and whether such decisions should be made by corporations, government agencies, or through democratic processes.

Source: Anthropic statement from Dario Amodei on discussions with the Department of Defense, with additional context from related reporting.