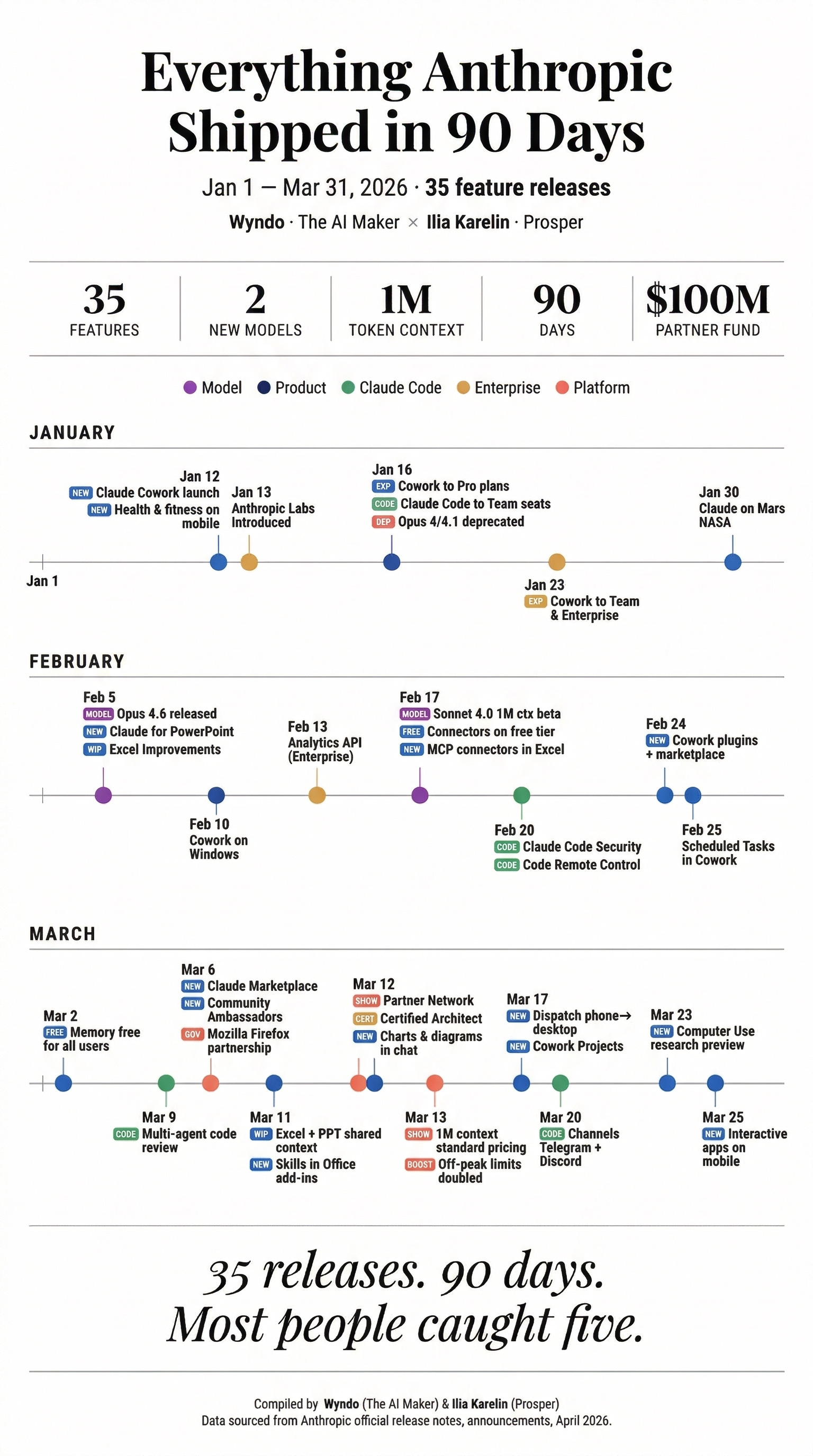

Anthropic has launched Claude Routines, a new feature that allows users to automate the execution of Claude Code based on triggers like schedules, GitHub events, or external API calls. This transforms Claude from a conversational coding assistant into an automated workflow engine that can handle repetitive development tasks without manual intervention.

Key Takeaways

- Anthropic launched Claude Routines, a feature that allows users to automate Claude Code execution based on schedules, GitHub events, or external API calls.

- This moves Claude from a conversational assistant to an automated workflow engine for developers.

What's New: From Chat to Automation

Claude Routines introduces three primary automation triggers:

- Scheduled Execution: Users can set Claude Code to run on a predetermined schedule (daily, weekly, hourly) without manual prompting

- GitHub Event Triggers: Claude can automatically execute code in response to GitHub events like pushes, pull requests, or issue creation

- API Integration: External systems can trigger Claude Code execution through API calls, enabling integration with existing workflows

This represents a significant shift in how developers interact with Claude. Previously, users needed to manually prompt Claude for each coding task. With Routines, repetitive tasks like code reviews, dependency updates, test generation, or documentation updates can be automated.
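To make the three trigger types concrete, here is a minimal sketch of how a routine and its trigger could be modeled. Anthropic has not published a schema, so every name here (`Routine`, `ScheduleTrigger`, `GitHubTrigger`, `ApiTrigger`, `matches`) is hypothetical and serves only as an illustration; the GitHub event names `push`, `pull_request`, and `issues` are the standard webhook event names.

```python
from dataclasses import dataclass
from typing import Literal, Union


# All classes below are hypothetical illustrations, not Anthropic's schema.
@dataclass(frozen=True)
class ScheduleTrigger:
    interval: Literal["hourly", "daily", "weekly"]


@dataclass(frozen=True)
class GitHubTrigger:
    event: Literal["push", "pull_request", "issues"]
    repo: str  # "owner/name"


@dataclass(frozen=True)
class ApiTrigger:
    route: str  # path an external system would call


Trigger = Union[ScheduleTrigger, GitHubTrigger, ApiTrigger]


@dataclass(frozen=True)
class Routine:
    name: str
    prompt: str  # natural-language task handed to Claude Code
    trigger: Trigger


def matches(routine: Routine, kind: str, detail: dict) -> bool:
    """Return True if an incoming event should start this routine."""
    t = routine.trigger
    if isinstance(t, ScheduleTrigger):
        return kind == "schedule" and detail.get("interval") == t.interval
    if isinstance(t, GitHubTrigger):
        return (
            kind == "github"
            and detail.get("event") == t.event
            and detail.get("repo") == t.repo
        )
    if isinstance(t, ApiTrigger):
        return kind == "api" and detail.get("route") == t.route
    return False
```

A dispatcher built this way would evaluate `matches` for each registered routine whenever a timer fires, a webhook arrives, or an API request comes in, and start only the routines whose trigger fits.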

Technical Details and Implementation

While Anthropic hasn't released full technical specifications, the feature appears to build on Claude's existing code execution capabilities within the Claude Console and API. The automation layer sits on top of Claude Code, which already allows the model to write, test, and execute code in a sandboxed environment.

Key implementation aspects likely include:

- Webhook Integration: For GitHub events and external API triggers

- Scheduler Service: Managed scheduling infrastructure within Anthropic's platform

- Execution Context Persistence: Maintaining context between automated runs for consistent behavior

- Security Sandboxing: Ensuring automated code execution remains isolated and secure
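As an illustration of the webhook piece, any service that receives GitHub events should confirm that a delivery really came from GitHub before acting on it. GitHub signs each payload with HMAC-SHA256 and sends the result in the `X-Hub-Signature-256` header, prefixed with `sha256=`. The sketch below shows that check using only the standard library; the function name is an assumption, and nothing here describes how Anthropic implements it.

```python
import hashlib
import hmac


def verify_github_signature(secret: bytes, body: bytes, signature_header: str) -> bool:
    """Check a GitHub webhook payload against its X-Hub-Signature-256 header.

    `secret` is the webhook secret configured on the repository,
    `body` is the raw request body, exactly as received.
    """
    expected = "sha256=" + hmac.new(secret, body, hashlib.sha256).hexdigest()
    # compare_digest runs in constant time, which avoids timing side channels.
    return hmac.compare_digest(expected.encode(), signature_header.encode())
```

The comparison must use the raw bytes of the request body; re-serializing parsed JSON can change whitespace or key order and cause the signature check to fail.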

The feature is currently available through Anthropic's platform, though pricing and API rate limits for automated execution haven't been detailed.

How It Compares to Existing Automation Tools

Claude Routines enters a competitive landscape of developer automation tools:

| Tool | Primary Use | Triggers | Code Execution |
| --- | --- | --- | --- |
| Claude Routines | AI-powered code automation | Schedule, GitHub, API | Full Claude Code execution |
| GitHub Actions | CI/CD workflows | GitHub events | Custom scripts/containers |
| Zapier/Make | General workflow automation | 1000+ app triggers | Limited code steps |
| Cron jobs | Scheduled tasks | Time-based only | Shell scripts |
| LangChain/LLM chains | LLM workflow orchestration | Programmatic | Via API calls |

Claude Routines differentiates itself by combining AI-generated code with traditional automation triggers. Unlike GitHub Actions, which requires workflows to be written by hand, Claude can generate the automation code itself from natural language descriptions.

What to Watch: Limitations and Considerations

Early adopters should consider several factors:

- Cost Structure: Automated execution could significantly increase API usage costs compared to manual interactions

- Reliability: AI-generated code may have higher error rates than hand-written automation scripts

- Debugging Complexity: Troubleshooting failed automated runs may require understanding both the automation logic and Claude's code generation

- Security Implications: Automating code execution introduces new attack surfaces that need careful permission scoping

Anthropic will need to provide robust logging, monitoring, and rollback capabilities for enterprise adoption. The success of this feature will depend on Claude's consistency in generating reliable code across repeated executions.

gentic.news Analysis

This launch represents Anthropic's continued push toward enterprise workflow integration, following their pattern of moving beyond pure chat interfaces. The introduction of scheduled and event-driven automation positions Claude as a potential replacement for certain script-based automation tasks, particularly those requiring natural language understanding or adaptive behavior.

This development aligns with the broader industry trend of LLMs transitioning from conversational tools to autonomous agents. We've seen similar moves from competitors: OpenAI offers API access to assistant-style agents, and GitHub Copilot has been expanding beyond inline suggestions to broader workflow assistance. However, Claude Routines appears more focused on automating code-related workflows specifically, rather than general task automation.

For developers, the most significant implication is the potential reduction in boilerplate automation code. Instead of writing cron jobs or GitHub Action workflows from scratch, teams could describe the desired automation in natural language and let Claude implement it. This could accelerate development velocity but introduces new dependencies on Anthropic's platform reliability and Claude's code generation quality.

Looking ahead, watch for how Anthropic integrates this with their recently announced enterprise features. If Routines can be combined with Claude's document processing capabilities and knowledge base integration, it could enable sophisticated document-triggered workflows (e.g., "when a new spec document is uploaded, generate and test the implementation").

Frequently Asked Questions

How much does Claude Routines cost?

Anthropic hasn't released specific pricing for Claude Routines. It will likely be billed based on API usage, with automated executions counting toward token consumption. Enterprise customers may receive volume discounts for high-frequency automation.
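If billing does follow token consumption, a back-of-the-envelope estimate is simple arithmetic. The sketch below assumes separate per-million-token prices for input and output; the function name and every number in the usage note are hypothetical placeholders, not Anthropic's pricing.

```python
def monthly_cost_usd(
    runs_per_day: float,
    input_tokens_per_run: int,
    output_tokens_per_run: int,
    input_price_per_mtok: float,
    output_price_per_mtok: float,
    days: int = 30,
) -> float:
    """Estimate monthly spend for a routine billed purely by tokens.

    Prices are in USD per million tokens and must be supplied by the
    caller; nothing here reflects published Anthropic pricing.
    """
    per_run = (
        input_tokens_per_run / 1_000_000 * input_price_per_mtok
        + output_tokens_per_run / 1_000_000 * output_price_per_mtok
    )
    return runs_per_day * days * per_run
```

For example, an hourly routine (24 runs per day) that consumes 20,000 input tokens and 2,000 output tokens per run, at hypothetical prices of 3 USD and 15 USD per million tokens, would come to about 64.80 USD per 30-day month. The same routine triggered on every push in a busy repository could cost many times more, which is why run frequency matters as much as per-run size.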

Can Claude Routines access my private repositories?

Presumably yes, but only with proper authentication and permissions. The GitHub integration would require OAuth authorization to access private repos, as other GitHub-integrated tools do. Anthropic would need to implement robust security controls so that automated code execution doesn't expose sensitive data.

What programming languages does Claude Routines support?

Claude Routines should support all languages that Claude Code already handles, including Python, JavaScript, TypeScript, Go, Rust, and several others. The specific capabilities may depend on which runtimes are installed in the execution environment.

How do I debug a failing Routine?

Anthropic will need to provide detailed execution logs, error messages, and potentially the ability to inspect generated code before execution. Without proper debugging tools, failed automated runs could be difficult to troubleshoot, especially if Claude generates different code each time.
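Until such tooling is documented, teams can keep their own record of each run. One inexpensive technique is to store a hash of the generated code alongside the result, so that a failure can be correlated with a change in what Claude produced. The sketch below is a hypothetical logging helper, not part of any Anthropic API; the field names are assumptions.

```python
import hashlib
import json
from datetime import datetime, timezone


def run_record(routine_name: str, generated_code: str, exit_code: int, stderr: str) -> dict:
    """Build a JSON-serializable record of one automated run."""
    return {
        "routine": routine_name,
        "timestamp": datetime.now(timezone.utc).isoformat(),
        # Fingerprint of the generated code, used to detect drift between runs.
        "code_sha256": hashlib.sha256(generated_code.encode("utf-8")).hexdigest(),
        "exit_code": exit_code,
        # Keep only the tail of stderr so records stay small.
        "stderr_tail": stderr[-500:],
    }


def code_changed(previous: dict, current: dict) -> bool:
    """Return True if two runs executed different generated code."""
    return previous["code_sha256"] != current["code_sha256"]


def to_log_line(record: dict) -> str:
    """Serialize a record as one line of JSON for append-only logs."""
    return json.dumps(record, sort_keys=True)
```

When a run fails, comparing its record with the last successful one shows immediately whether the generated code differed, which narrows the search to either the code or the environment.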