A leaked internal communication from Anthropic indicates the company's next-generation flagship model, codenamed Claude Mythos, is facing a significant release hurdle: it is currently too computationally expensive to run at scale. According to the leaked message, the company says it needs to become "much more efficient" before a general release can be considered.

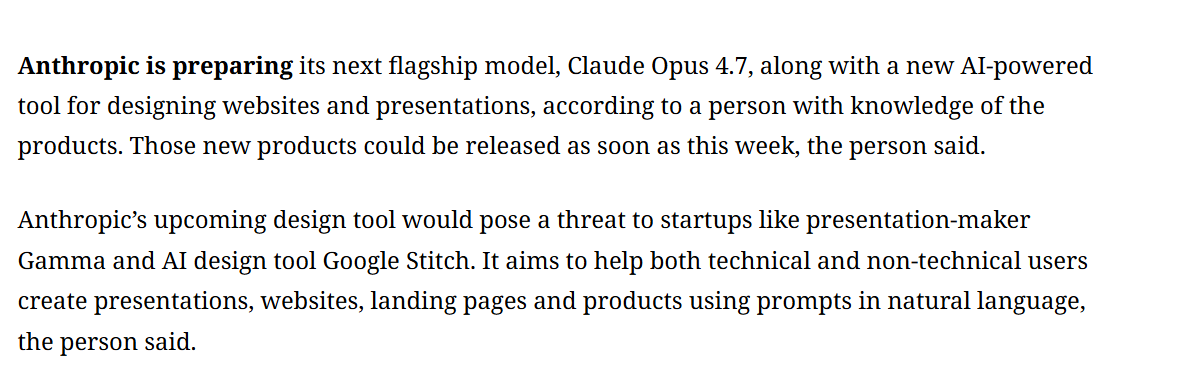

This development suggests a shift in Anthropic's near-term release roadmap. While the company's public blog had previously pointed to a 2026 release for its next major model, the compute constraints of Mythos make it likely that a different, presumably more efficient model—internally referred to as "Spud"—will reach users first.

What Happened

The information comes from an apparent accidental leak of an internal Anthropic update, shared by a user on X (formerly Twitter). The core revelation is that the Claude Mythos model, which represents Anthropic's ambitious push beyond the current Claude 3.5 Sonnet, is so compute-intensive that its operational costs and infrastructure demands are currently prohibitive for a broad, sustainable launch.

Anthropic's stated need to become "much more efficient" points to an ongoing challenge in the frontier AI race: each further gain in model capability tends to demand disproportionately more compute, creating a tension between performance and practicality.

The Roadmap Implications: 'Spud' Before Mythos

The leak directly impacts the expected sequence of Anthropic's releases. Prior public statements had set expectations for a 2026 flagship release. The internal note now implies a strategic pivot:

- Claude Mythos: Remains in development as the flagship target but is on hold until significant efficiency breakthroughs are achieved. This could involve innovations in model architecture, training techniques, or inference optimization.

- Project 'Spud': Likely an intermediate model designed with a stronger focus on cost-to-performance ratio. 'Spud' may represent an evolution of the Claude 3.5 lineage rather than a massive architectural leap, prioritizing deployability and commercial viability over pure benchmark dominance.

This pattern—a high-cost flagship followed or preceded by a more efficient variant—has become common. OpenAI's sequence of GPT-4, then the more efficient GPT-4 Turbo, and Google's Gemini Ultra and Gemini Pro releases follow a similar logic of separating peak capability from scalable product.

The Efficiency Challenge

Anthropic's conundrum with Mythos underscores a central bottleneck in modern AI: the unsustainable compute trajectory of large language models. Training and, more critically, inference for massive models require vast amounts of expensive, power-hungry GPU time. For a company like Anthropic, which emphasizes responsible scaling and long-term safety, launching a model that is economically or environmentally untenable would contradict its core principles.

The push for efficiency will likely focus on:

- Inference Optimization: Techniques like speculative decoding, better quantization (e.g., moving to FP8 or INT4 precision), and improved caching to reduce the computational cost of generating each token.

- Architectural Innovations: Exploring mixtures of experts (MoE), which activate only a subset of parameters for each token, state-space models, or other architectures that promise higher performance per unit of compute.

- Training Efficiency: Improving the quality and curation of training data to achieve better capabilities with fewer training steps or a smaller model size.
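
To make the quantization item above concrete, here is a minimal sketch of symmetric per-tensor quantization to a signed 4-bit range. It is an illustration only: nothing in the leak describes how Mythos is or would be quantized, and the function names and sample weights are hypothetical. Production systems typically use finer-grained scales (per channel or per group) and calibration data, which this sketch omits.

```python
from typing import List, Tuple


def quantize_symmetric(weights: List[float], bits: int = 4) -> Tuple[List[int], float]:
    """Map floats to signed integers in [-qmax, qmax] using one shared scale.

    Hypothetical helper for illustration; not taken from any library.
    """
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit, 127 for 8-bit
    max_abs = max((abs(w) for w in weights), default=0.0)
    # Fall back to a scale of 1.0 when every weight is zero.
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the integer codes."""
    return [q * scale for q in quantized]


def weight_bytes(num_params: int, bits: int) -> float:
    """Storage needed for the weights alone, ignoring scales and activations."""
    return num_params * bits / 8


if __name__ == "__main__":
    weights = [0.7, -0.28, 0.14, 0.0]  # made-up values
    codes, scale = quantize_symmetric(weights, bits=4)
    restored = dequantize(codes, scale)
    worst_error = max(abs(w - r) for w, r in zip(weights, restored))
    print("codes:", codes)
    print("worst round-trip error:", worst_error)
    # Hypothetical parameter count, chosen only to show the ratio.
    print("16-bit vs 4-bit bytes:", weight_bytes(10**9, 16), weight_bytes(10**9, 4))
```

Under this accounting, moving weights from 16 bits to 4 bits cuts weight storage by a factor of four, while each weight's rounding error stays within half of one scale step. Whether that accuracy loss is acceptable depends on the model and the task.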

gentic.news Analysis

This leak aligns with the broader industry trend where raw scaling is hitting economic and physical limits. As we covered in our analysis of Google's Gemini 2.0 last quarter, the focus is shifting decisively from "bigger at any cost" to "smarter scaling." Anthropic's predicament with Mythos is a real-time case study of this transition.

The mention of "Spud" is particularly notable. It suggests Anthropic is actively developing a parallel, efficiency-first track within its model pipeline, a strategy directly mirrored by OpenAI's development of o1 and its preview models. This bifurcated approach—one research arm chasing the frontier and another engineering arm productizing the last frontier—is becoming the standard operating model for leading AI labs.

Furthermore, this follows Anthropic's established pattern of cautious, staged releases, as seen with the incremental rollout of the Claude 3 family (Haiku, Sonnet, Opus). The company, co-founded by former OpenAI executives Dario and Daniela Amodei, has consistently prioritized control and safety over speed. A delay to solve efficiency problems is entirely in character and may indicate that Mythos represents a more significant capability jump, and thus a greater potential risk, than previous iterations.

Frequently Asked Questions

What is Claude Mythos?

Claude Mythos is the internal codename for Anthropic's next planned flagship large language model, intended to succeed the Claude 3.5 series. It appears to be a highly capable but computationally expensive model that is not yet efficient enough for a general release.

What is Project 'Spud'?

Project 'Spud' is another internal Anthropic model, mentioned in the context of this leak. It is widely interpreted by observers to be a more computationally efficient model than Mythos, likely designed for scalable deployment. It is expected to be released before Mythos.

When will Claude Mythos be released?

Based on the leaked information, there is no confirmed release date for Claude Mythos. According to the leak, Anthropic says it needs to achieve significant efficiency gains before a launch can happen. Its previous public mention of a 2026 release for a next-gen model may now refer to 'Spud', or the Mythos release may simply be delayed.

Why is compute intensity a problem for AI models?

Extreme compute intensity makes models prohibitively expensive to run (inference) for a large user base. It drives up operational costs for the provider and API costs for developers, limits accessibility, and raises environmental concerns due to high energy consumption. Sustainable business models require efficient inference.
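
A back-of-the-envelope sketch can show why serving cost grows with model size. It relies on a common rule of thumb that a dense transformer's forward pass costs roughly 2 FLOPs per active parameter per generated token, which ignores attention over long contexts, batching effects, and memory bandwidth limits. Every number below (parameter counts, accelerator throughput, utilization, hourly price) is a hypothetical placeholder, not a figure from the leak or from Anthropic.

```python
def flops_per_token(active_params: float) -> float:
    """Rule-of-thumb forward-pass cost: about 2 FLOPs per active parameter."""
    return 2.0 * active_params


def cost_per_million_tokens(
    active_params: float,
    accelerator_flops_per_sec: float,
    utilization: float,
    dollars_per_hour: float,
) -> float:
    """Estimate the compute cost in dollars of generating one million tokens.

    All inputs are assumptions supplied by the caller; the function only
    does the arithmetic.
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    effective_flops = accelerator_flops_per_sec * utilization
    tokens_per_sec = effective_flops / flops_per_token(active_params)
    dollars_per_sec = dollars_per_hour / 3600.0
    return dollars_per_sec / tokens_per_sec * 1_000_000


if __name__ == "__main__":
    # Hypothetical hardware and pricing, chosen for round numbers.
    hardware = dict(
        accelerator_flops_per_sec=1e15,
        utilization=0.5,
        dollars_per_hour=3.6,
    )
    small = cost_per_million_tokens(active_params=1e11, **hardware)
    large = cost_per_million_tokens(active_params=1e12, **hardware)
    print(f"smaller model: ${small:.2f} per million tokens")
    print(f"larger model:  ${large:.2f} per million tokens")
    print(f"cost ratio:    {large / small:.1f}x")
```

In this simplified model, cost per token scales linearly with the number of active parameters, so a model ten times larger costs about ten times more to serve on the same hardware. This is why quantization, sparsity, and better utilization matter commercially.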