At a Wall Street Journal event this week, US Treasury Secretary Scott Bessent made a striking public assessment of an AI model, calling Anthropic's Claude Mythos a "step function change in abilities." Such a characterization from a top economic official is unusual and underscores the model's perceived impact.

The comment is part of a rapid sequence of events placing Anthropic at the center of US government and financial sector attention regarding AI capabilities and risks.

Key Takeaways

- US Treasury Secretary Scott Bessent described Anthropic's Claude Mythos as a "step function change in abilities" at a WSJ event.

- This follows emergency meetings with Wall Street CEOs and high-level briefings on AI cyber risks, revealing a government split on whether Anthropic is a security risk or asset.

What Happened: A Timeline of High-Stakes AI Scrutiny

According to the report, the Treasury Secretary's public remark followed a series of urgent, private engagements:

- Last Week: Secretary Bessent and Federal Reserve Chair Jerome Powell convened an emergency meeting with "every major Wall Street CEO" specifically because of the Claude Mythos model. The nature of the emergency was not specified, but the involvement of the nation's top financial regulators and bank leaders suggests concerns about financial stability, market manipulation, or systemic risk posed by advanced AI.

- The Week Before: Vice President J.D. Vance and Scott Bessent briefed a who's-who of tech leadership on AI cyber risks. Attendees included Anthropic CEO Dario Amodei, Elon Musk (xAI, Tesla), Sundar Pichai (Google/Alphabet), Sam Altman (OpenAI), and Satya Nadella (Microsoft). This indicates the administration is treating AI security at a cabinet level and engaging directly with the primary architects of the technology.

The Anthropic Paradox: Simultaneous Risk and Asset

The report highlights a stark contradiction in the US government's stance toward Anthropic. While the Treasury and Fed are engaging with its technology at the highest levels, and the VP is briefing its CEO, the Pentagon is reportedly "still trying to blacklist Anthropic."

The nature of the potential blacklist is unclear, but it could involve restrictions on contracting or technology sharing over national security concerns, possibly tied to Anthropic's structure or foreign investment. The report calls the simultaneous treatment of Anthropic as both a "national security risk AND a national security asset" a moment of "remarkable irony."

For Anthropic, the intense, if conflicted, attention from the highest echelons of the US government is described as "the best PR they could have hoped for," validating the model's significance in the most consequential corridors of power.

gentic.news Analysis

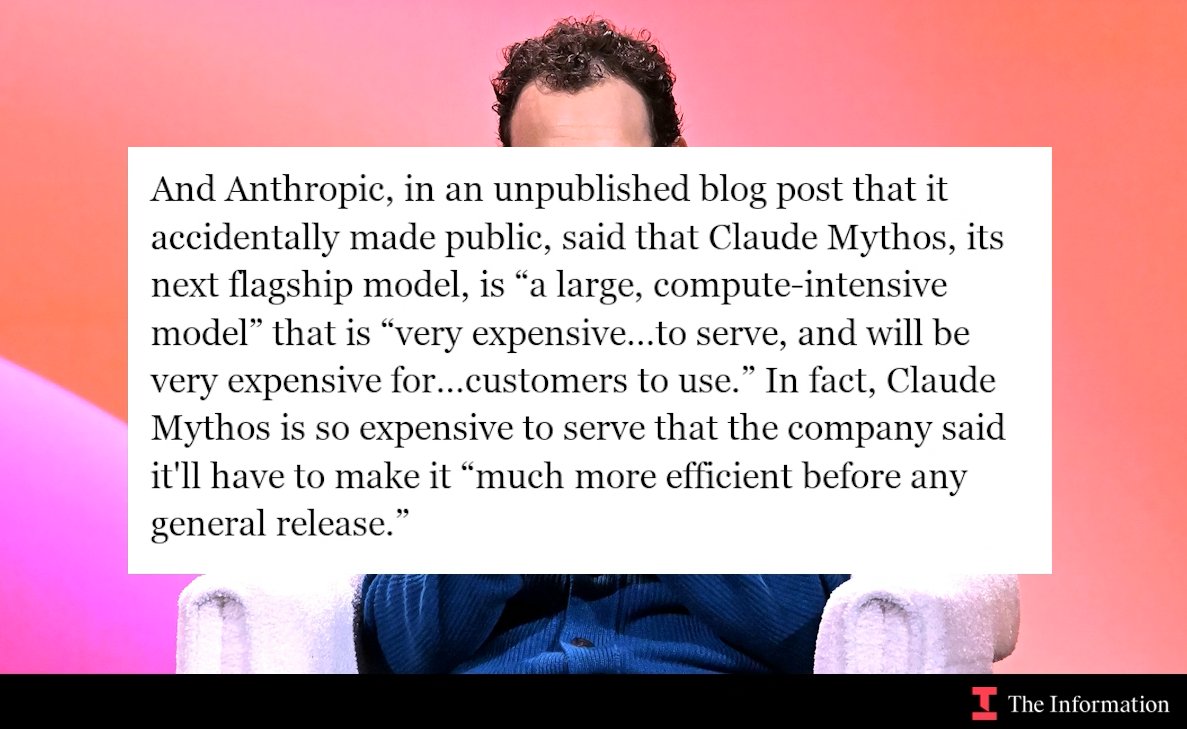

This episode is a concrete manifestation of the dual-use dilemma that has long been theorized about advanced AI. Claude Mythos appears to be a model of sufficient capability that it has triggered a crisis response in the financial regulatory system while simultaneously becoming a focal point for executive branch cyber risk planning. The 'step function' language suggests capabilities that are not just incrementally better but qualitatively different, crossing a threshold that demands new forms of governance and risk assessment.

The split between the Pentagon and the economic/executive branches is telling. It may reflect differing risk tolerances, bureaucratic inertia, or classified assessments. However, it creates immediate policy friction. How can a company be both a critical partner for securing national infrastructure and a potential security threat? This tension will need resolution, likely through a more coherent federal AI policy framework.

For the AI industry, this sequence signals that regulatory and national security scrutiny is now real-time and triggered by specific model releases, not abstract future risks. The emergency Wall Street meeting is particularly notable; it suggests financial regulators believe current market safeguards may be inadequate for the inference capabilities of models like Mythos. This could accelerate existing efforts by the SEC and CFTC to formulate AI-specific trading and disclosure rules.

Frequently Asked Questions

What is Claude Mythos?

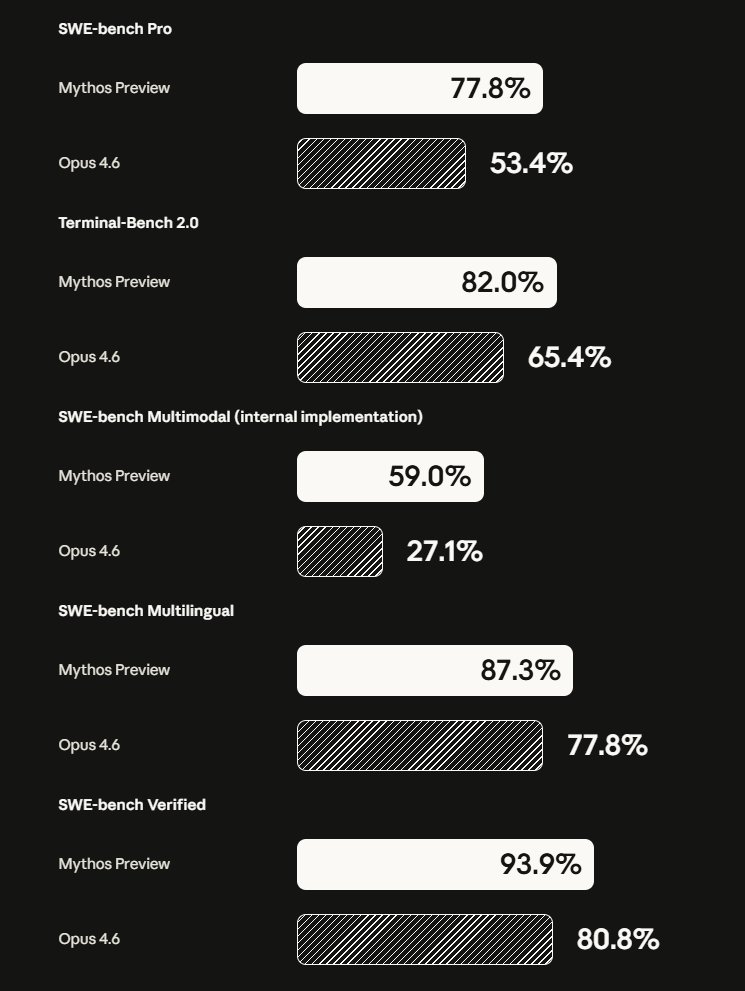

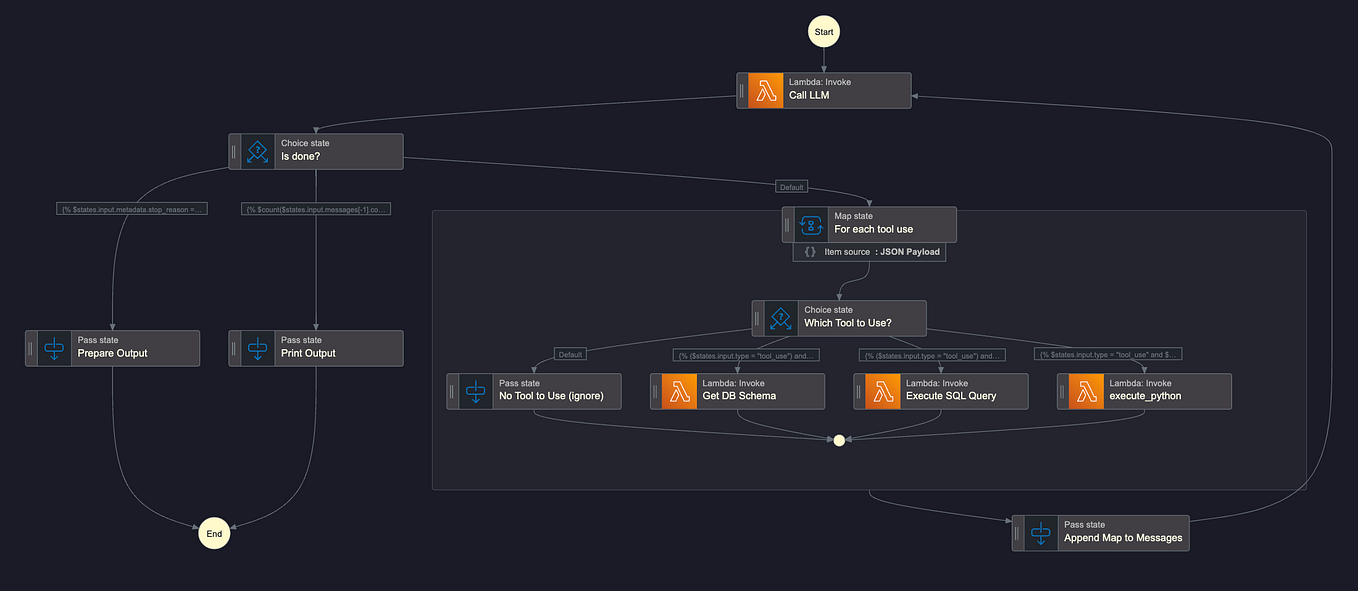

Claude Mythos is the latest and most capable AI model from Anthropic. While full technical details are not public, the 'step function change' description from the Treasury Secretary implies it represents a significant leap in reasoning, coding, or tool-use abilities compared to previous models like Claude 3.5 Sonnet. Its capabilities are apparently significant enough to prompt emergency high-level government meetings.

Why would the Treasury and Fed hold an emergency meeting about an AI model?

The Federal Reserve and Treasury Department are primarily concerned with financial stability. An AI model representing a 'step function change' could pose novel risks to markets, such as enabling new forms of hyper-fast algorithmic trading, sophisticated financial fraud, market manipulation, or creating vulnerabilities in critical banking infrastructure. The emergency meeting suggests regulators believe these risks are imminent and require immediate coordination with the largest financial institutions.

Why is the Pentagon trying to blacklist Anthropic?

The source does not specify the reasons, but potential factors could include concerns about Anthropic's corporate structure, its significant investment from Amazon, the background of its founders (who previously worked at OpenAI), or fears that its advanced AI technology could be misused or accessed by adversaries. The blacklist effort indicates some within the defense establishment view the company itself as a potential vector for risk, separate from the utility of its models.

What does this mean for other AI companies like OpenAI and Google?

The VP's briefing included CEOs from across the competitive landscape, indicating the government is taking a broad, industry-wide approach to AI cyber risk. However, Anthropic's model appears to be the specific trigger for recent financial stability concerns. This incident raises the bar for what constitutes a model release that demands immediate regulatory attention and will likely increase pressure on all leading labs to engage more deeply with policymakers before launching frontier systems.