A confidential internal document from Anthropic CEO Dario Amodei has reportedly been leaked, creating potential governance challenges for the prominent AI safety company. According to sources familiar with the matter, the document addresses sensitive internal discussions about Anthropic's organizational structure, decision-making processes, and strategic direction.

The Leak and Its Immediate Impact

The leaked document, first reported via social media and subsequently covered by CNN, appears to contain CEO Dario Amodei's communications to Anthropic staff regarding internal governance matters. While the exact contents remain partially obscured, sources indicate the document touches on fundamental questions about how Anthropic maintains its safety-focused mission while scaling operations.

Anthropic, founded in 2021 by former OpenAI employees including Dario and Daniela Amodei, has positioned itself as a leader in developing safe artificial intelligence systems. The company's Constitutional AI training approach and its Claude family of AI models have drawn significant attention in both research and commercial circles. The leak comes at a critical juncture, as Anthropic competes with OpenAI, Google, and other major players in a rapidly evolving AI landscape.

Governance Challenges in AI Safety Organizations

The Unique Structure of Anthropic

Anthropic operates under an unusual corporate structure designed to prioritize long-term safety over short-term profits. The company is organized as a public benefit corporation, and its "Long-Term Benefit Trust" holds special governance rights, including the power to elect a portion of Anthropic's board of directors. These rights are intended to ensure the company's mission endures even as it scales. Supporters have praised the structure as innovative; critics have called it potentially cumbersome.

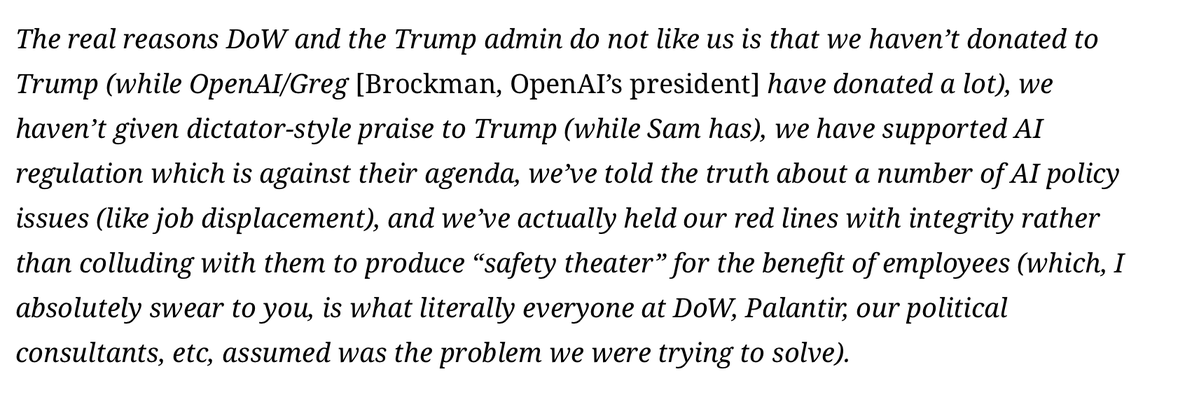

The leaked document reportedly addresses tensions between maintaining this safety-focused governance structure and meeting the practical demands of operating a competitive AI company. Sources suggest Amodei's communication may have been an attempt to navigate disagreements over how much authority should remain with the trust and how much should be delegated to operational leadership.

Internal Divisions Revealed

While details remain limited, the leak suggests potential divisions within Anthropic about:

- Decision-making authority: How much control should remain with the safety-focused governance structures versus operational leaders

- Growth versus safety: Balancing rapid development and deployment with rigorous safety protocols

- Organizational scaling: Maintaining safety culture as the company expands beyond its research-focused origins

These tensions mirror broader debates in the AI safety community about how to build organizations that can both develop cutting-edge AI and maintain strong safety commitments.

Industry Context and Competitive Pressures

The AI Safety Landscape

Anthropic emerged from the effective altruism and AI safety movements, with founders who left OpenAI partly over concerns about safety prioritization. The company has received significant funding from investors sympathetic to its mission, including former Google CEO Eric Schmidt and various effective altruism-aligned donors.

The leak occurs amid increasing scrutiny of AI companies' internal governance. Recent controversies at OpenAI, including the November 2023 episode in which its board briefly removed CEO Sam Altman, have highlighted how difficult it is to maintain safety commitments while competing commercially. Because Anthropic has positioned itself as an alternative model, any governance challenges there are particularly significant.

Competitive Implications

As the AI race intensifies, any internal instability at Anthropic could affect:

- Investor confidence: Anthropic has raised billions in funding, with investors betting on both its technical capabilities and its governance model

- Talent retention: The company has attracted researchers specifically interested in its safety focus

- Partnership opportunities: Enterprise clients considering Claude may be concerned about organizational stability

- Regulatory positioning: Policymakers have looked to safety-focused AI companies as potential partners in governance efforts, so visible instability could weaken Anthropic's standing with them

Potential Consequences and Responses

Short-Term Challenges

Anthropic's immediate concerns include:

- Damage control: Managing both internal morale and external perceptions

- Clarifying governance: Potentially needing to publicly reaffirm its governance structure

- Competitive disadvantage: Distractions from product development and research

Long-Term Implications

More fundamentally, this leak raises questions about whether Anthropic's governance model can scale effectively. The company faces the classic challenge of mission-driven organizations: maintaining founding principles while growing beyond founder-led stages.

If the governance tensions are substantial, they could lead to:

- Structural changes: Modifications to the Long-Term Benefit Trust or other governance elements

- Leadership adjustments: Potential changes in how decisions are made or who makes them

- Mission drift concerns: Questions about whether Anthropic can maintain its safety focus under competitive pressure

Broader Industry Significance

Testing Alternative Governance Models

Anthropic represents one of the most prominent attempts to create an AI company with built-in safety mechanisms. Its challenges provide valuable data points for:

- Other AI safety organizations: Learning what governance approaches work at scale

- Policymakers: Understanding how corporate structures might align with public safety goals

- Investors: Assessing whether mission-focused governance affects commercial success

The Transparency Dilemma

The leak also highlights the tension between internal transparency and organizational stability. AI safety organizations often advocate for transparency in AI development, but internal governance discussions typically require confidentiality to function effectively.

Moving Forward

Anthropic now faces the dual challenge of addressing internal governance questions while managing external perceptions. How the company responds could set important precedents for the AI industry:

- Will it maintain its current structure? Or will competitive pressures force it to adapt?

- How transparent will it be about governance challenges? Mission-focused organizations often face higher expectations for transparency.

- Can it resolve internal tensions while maintaining its safety focus? This is the fundamental test of its model.

The AI industry will be watching closely, as Anthropic's experience may inform how other organizations balance safety, governance, and competition in this critical technological domain.

Source: CNN reporting on a leaked internal Anthropic document first shared on social media.