A new framework called ARLArena aims to tackle one of the most persistent challenges in training AI agents: training instability severe enough to collapse a run outright. Described in an arXiv preprint (arXiv:2602.21534), the work targets a problem that has limited the scalability and reliability of agentic reinforcement learning (ARL) systems.

The Stability Crisis in Agentic AI

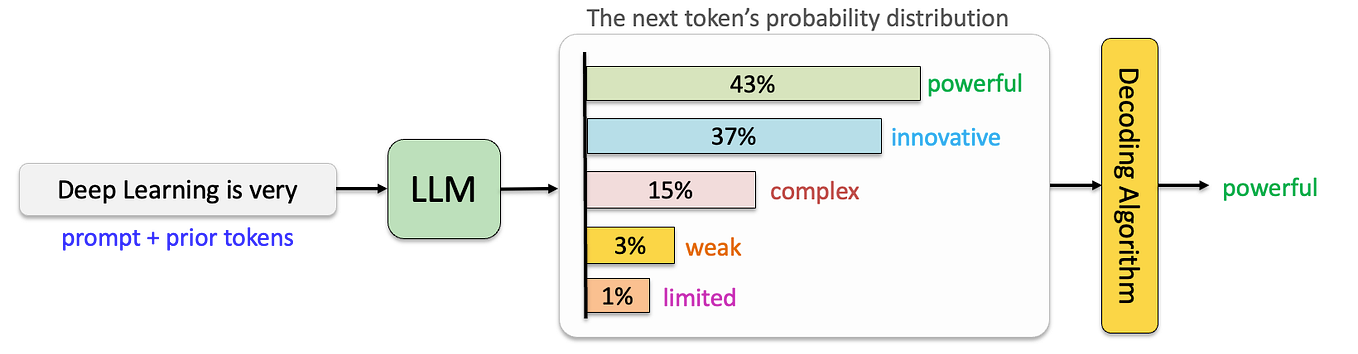

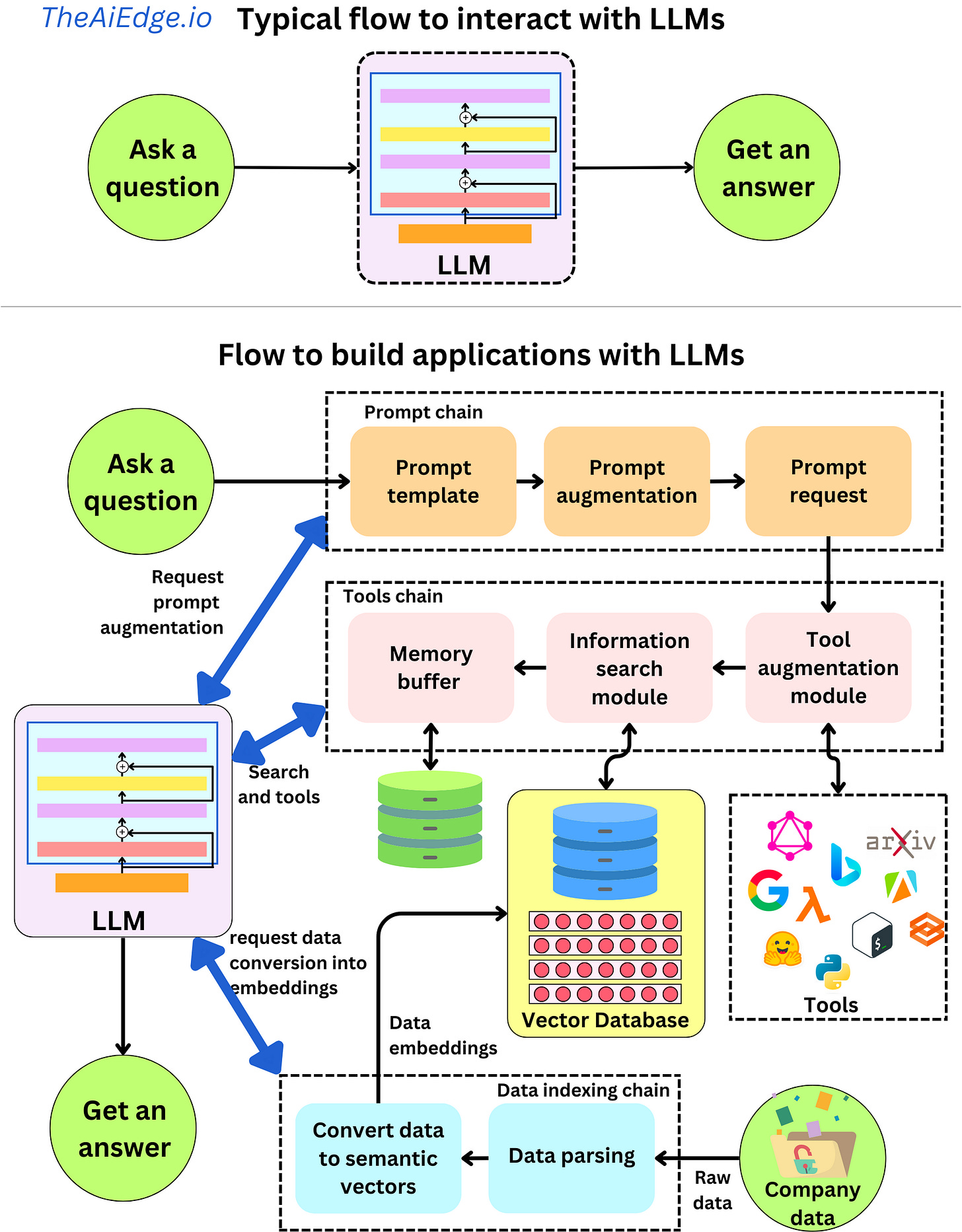

Agentic reinforcement learning trains AI systems to handle complex, multi-step interactive tasks that require reasoning and sequential decision-making. Unlike traditional reinforcement learning, ARL typically uses a large language model or other foundation model as the core reasoning component. This lets the agent interpret natural-language instructions, break a problem into steps, and carry out multi-step plans over many turns of interaction with its environment.
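
To make the multi-step setting concrete, here is a minimal Python sketch of an agentic rollout loop. Everything in it is hypothetical and not taken from ARLArena: `CounterEnv` is a toy environment, and the policy is a plain function standing in for an LLM that would read the interaction history and produce the next action.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class Trajectory:
    """Record of one multi-turn episode."""
    observations: List[str] = field(default_factory=list)
    actions: List[str] = field(default_factory=list)
    rewards: List[float] = field(default_factory=list)


class CounterEnv:
    """Hypothetical toy task: reach `target` by emitting "inc" within `max_steps` turns."""

    def __init__(self, target: int = 3, max_steps: int = 5) -> None:
        self.target = target
        self.max_steps = max_steps
        self.count = 0
        self.steps = 0

    def reset(self) -> str:
        self.count = 0
        self.steps = 0
        return self._observe()

    def _observe(self) -> str:
        return f"count={self.count} target={self.target}"

    def step(self, action: str) -> Tuple[str, float, bool]:
        self.steps += 1
        if action == "inc":
            self.count += 1
        solved = self.count >= self.target
        done = solved or self.steps >= self.max_steps
        # Sparse reward: only the turn that solves the task pays off.
        reward = 1.0 if solved else 0.0
        return self._observe(), reward, done


def rollout(policy: Callable[[List[str]], str], env: CounterEnv) -> Trajectory:
    """Run one episode; the policy sees the full observation history each turn."""
    traj = Trajectory()
    obs = env.reset()
    done = False
    while not done:
        traj.observations.append(obs)
        # An LLM-based policy would generate this action from the history.
        action = policy(traj.observations)
        obs, reward, done = env.step(action)
        traj.actions.append(action)
        traj.rewards.append(reward)
    return traj


if __name__ == "__main__":
    episode = rollout(lambda history: "inc", CounterEnv(target=3))
    print(episode.actions, episode.rewards)  # → ['inc', 'inc', 'inc'] [0.0, 0.0, 1.0]
```

Even in this toy, the reward arrives only at the end of the episode, which hints at why training over long interaction horizons is harder than single-step learning.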

Despite this promise, ARL has proven difficult to train in practice. "ARL remains highly unstable, often leading to training collapse," the researchers write. The instability shows up as sudden performance degradation, failure to converge, or a complete breakdown of learning after promising initial progress. It has limited researchers' ability to scale ARL systems to larger environments, longer interaction horizons, and more complex tasks.
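
One way to operationalize "sudden performance degradation after promising initial progress" is a simple monitor over per-step rewards. The sketch below is an illustrative assumption, not the paper's collapse criterion. `detect_collapse`, its moving-average `window`, and its `drop_fraction` threshold are hypothetical choices.

```python
from collections import deque
from typing import Iterable, List


def detect_collapse(rewards: Iterable[float], window: int = 5,
                    drop_fraction: float = 0.5) -> List[int]:
    """Return the steps at which the moving-average reward has fallen below
    (1 - drop_fraction) times the best moving average seen so far."""
    recent: deque = deque(maxlen=window)  # sliding window of the latest rewards
    best = float("-inf")
    flagged: List[int] = []
    for step, reward in enumerate(rewards):
        recent.append(reward)
        if len(recent) < window:
            continue  # not enough history yet
        avg = sum(recent) / window
        best = max(best, avg)
        # Only meaningful once the run has made some positive progress.
        if best > 0 and avg < (1 - drop_fraction) * best:
            flagged.append(step)
    return flagged


if __name__ == "__main__":
    history = [0.2, 0.4, 0.6, 0.8, 0.8, 0.1, 0.0, 0.0]
    print(detect_collapse(history, window=3))  # → [6, 7]
```

A steadily improving run is never flagged. A run that climbs and then crashes is flagged as soon as its recent average falls well below its peak.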

The ARLArena Solution: Standardization and Analysis

The ARLArena framework approaches this problem systematically through two main components: a clean, standardized testbed for evaluating ARL systems, and an analysis that decomposes the policy-gradient algorithm into four core design dimensions. This structure lets researchers isolate and examine the specific factors that contribute to training instability.
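
The article does not name the four design dimensions. Purely as an assumption for illustration, the sketch below exposes three knobs that commonly differ between policy-gradient variants: advantage normalization, the PPO-style clipping range, and token- versus sequence-level loss aggregation. Each can be toggled independently. `PGConfig` and `policy_gradient_loss` are hypothetical names, and the function only computes the loss value; a real trainer would compute it on tensors with automatic differentiation.

```python
import math
from dataclasses import dataclass
from typing import List


@dataclass
class PGConfig:
    """Hypothetical design knobs for a policy-gradient loss (illustrative only)."""
    normalize_advantages: bool = True  # standardize advantages across the batch
    clip_eps: float = 0.2              # PPO-style importance-ratio clipping range
    aggregation: str = "token"         # "token" or "sequence" averaging


def policy_gradient_loss(new_logps: List[List[float]], old_logps: List[List[float]],
                         advantages: List[float], cfg: PGConfig) -> float:
    """Clipped surrogate loss. Each inner list holds per-token log-probs for one
    sampled response; every token in a response shares that response's advantage."""
    adv = list(advantages)
    if cfg.normalize_advantages and len(adv) > 1:
        mean = sum(adv) / len(adv)
        std = math.sqrt(sum((a - mean) ** 2 for a in adv) / len(adv))
        adv = [(a - mean) / (std + 1e-8) for a in adv]

    per_seq: List[List[float]] = []
    for new_seq, old_seq, a in zip(new_logps, old_logps, adv):
        terms = []
        for new_lp, old_lp in zip(new_seq, old_seq):
            ratio = math.exp(new_lp - old_lp)  # importance ratio pi_new / pi_old
            clipped = min(max(ratio, 1 - cfg.clip_eps), 1 + cfg.clip_eps)
            # Pessimistic (clipped) surrogate objective, negated to form a loss.
            terms.append(-min(ratio * a, clipped * a))
        per_seq.append(terms)

    if cfg.aggregation == "token":
        # Every token weighs equally, so longer responses contribute more.
        flat = [t for seq in per_seq for t in seq]
        return sum(flat) / len(flat)
    if cfg.aggregation == "sequence":
        # Every response weighs equally, regardless of its length.
        return sum(sum(seq) / len(seq) for seq in per_seq) / len(per_seq)
    raise ValueError(f"unknown aggregation: {cfg.aggregation!r}")
```

On the same batch, switching only `aggregation` changes the loss. That kind of single-knob comparison is what a per-dimension stability analysis depends on.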

By creating a controlled and reproducible testing environment, ARLArena enables apples-to-apples comparisons between different ARL approaches and configurations. This standardization is particularly valuable in a field where inconsistent evaluation methodologies have made it difficult to determine whether performance differences stem from algorithmic improvements or implementation details.
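
In practice, reproducible comparisons mostly come down to running every configuration under the same seeds and reporting the spread, not just the best run. Here is a minimal sketch, assuming a hypothetical `run(config, seed)` callable that trains and evaluates one configuration. `toy_run` is a stand-in that only simulates a noisy score, and none of these names come from ARLArena.

```python
import random
import statistics
from typing import Callable, Dict, Sequence


def compare_configs(configs: Dict[str, dict], run: Callable[[dict, int], float],
                    seeds: Sequence[int] = (0, 1, 2)) -> Dict[str, Dict[str, float]]:
    """Run every configuration on the same seeds and summarize its scores."""
    summary: Dict[str, Dict[str, float]] = {}
    for name, cfg in configs.items():
        scores = [run(cfg, seed) for seed in seeds]
        summary[name] = {
            "mean": statistics.mean(scores),
            "stdev": statistics.stdev(scores) if len(scores) > 1 else 0.0,
            # Worst seed: a collapsed run shows up here even when the mean looks fine.
            "worst": min(scores),
        }
    return summary


def toy_run(cfg: dict, seed: int) -> float:
    """Hypothetical stand-in for a full train-and-evaluate run."""
    rng = random.Random(seed)  # private seeded RNG, so reruns give identical scores
    return cfg["base"] + rng.gauss(0.0, cfg["noise"])


if __name__ == "__main__":
    configs = {"baseline": {"base": 0.50, "noise": 0.10},
               "variant": {"base": 0.55, "noise": 0.02}}
    for name, stats in compare_configs(configs, toy_run).items():
        print(name, {k: round(v, 3) for k, v in stats.items()})
```

Because each run draws from its own seeded generator rather than global random state, rerunning the comparison reproduces the same numbers exactly.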

SAMPO: Stable Agentic Policy Optimization

Through their systematic analysis, the researchers identified the dominant sources of instability in ARL systems and developed SAMPO (Stable Agentic Policy Optimization), a novel optimization method specifically designed to mitigate these issues. SAMPO incorporates several innovations that address the unique challenges of training agentic systems, including:

- Gradient stabilization techniques that keep gradients from exploding or vanishing during training

- Adaptive learning rate mechanisms that respond to the specific dynamics of agentic learning

- Regularization strategies that maintain policy diversity while preventing collapse

- Robust credit assignment that properly attributes rewards to the complex sequence of decisions made by agentic systems
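
The article does not say how SAMPO implements these ideas. For readers unfamiliar with them, the sketch below shows textbook versions of three related building blocks: global gradient-norm clipping, discounted return-to-go as a basic form of credit assignment, and the policy entropy that an entropy bonus would reward. These are generic techniques written in plain Python, not SAMPO's actual method.

```python
import math
from typing import List


def clip_grad_norm(grads: List[float], max_norm: float) -> List[float]:
    """Global norm clipping: rescale the gradient vector if its L2 norm exceeds max_norm."""
    norm = math.sqrt(sum(g * g for g in grads))
    if norm <= max_norm or norm == 0.0:
        return list(grads)
    scale = max_norm / norm
    return [g * scale for g in grads]


def returns_to_go(rewards: List[float], gamma: float = 0.99) -> List[float]:
    """Discounted return from each step onward: G_t = r_t + gamma * G_{t+1}.
    Earlier actions get credit for later rewards, discounted by distance."""
    out = [0.0] * len(rewards)
    running = 0.0
    for t in reversed(range(len(rewards))):
        running = rewards[t] + gamma * running
        out[t] = running
    return out


def entropy(probs: List[float]) -> float:
    """Shannon entropy of an action distribution. Adding a bonus proportional
    to it discourages the policy from collapsing onto a single action."""
    return -sum(p * math.log(p) for p in probs if p > 0)


if __name__ == "__main__":
    print(clip_grad_norm([3.0, 4.0], max_norm=1.0))      # norm 5 rescaled to 1
    print(returns_to_go([0.0, 0.0, 1.0], gamma=0.5))     # → [0.25, 0.5, 1.0]
    print(entropy([0.5, 0.5]), entropy([1.0, 0.0]))      # maximal vs. collapsed
```

In a sparse-reward episode, `returns_to_go` spreads the single terminal reward back over the earlier steps. This is the simplest answer to the credit-assignment question the list raises.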

Empirical results show that SAMPO trains stably and performs strongly across diverse agentic tasks, an important step toward making ARL systems practical and reliable.

Implications for AI Development

The ARLArena framework and SAMPO method have several implications for the field of artificial intelligence. First, they enable more systematic exploration of algorithmic design choices in ARL, which should speed up progress in this area. Researchers can test new ideas in a controlled environment where results are reproducible and comparable.

Second, the stability improvements open the door to scaling ARL systems to more complex real-world applications. Areas that stand to benefit include:

- Autonomous systems that require sophisticated, multi-step reasoning

- Personalized AI assistants that can learn and adapt to individual user needs

- Scientific discovery agents that can autonomously design and execute experiments

- Robotic control systems that require complex sequential decision-making

Third, the framework provides practical guidance for building stable and reproducible LLM-based agent training pipelines, addressing a critical need in both academic research and industrial applications.

The Broader Context of Agentic AI Development

The ARLArena research arrives at a pivotal moment in AI development, as the field increasingly focuses on creating systems that can not only understand and generate content but also take meaningful actions in complex environments. This shift from passive AI to active, agentic AI represents one of the most important frontiers in artificial intelligence research.

Recent developments in multi-agent reinforcement learning frameworks for personalized healthcare applications demonstrate the growing interest in creating AI systems that can adapt to individual needs while maintaining privacy and accuracy. The stability improvements offered by ARLArena could accelerate progress in these sensitive application areas by making training more reliable and predictable.

Future Directions and Challenges

While ARLArena represents a significant step forward, several challenges remain. The researchers note that further work is needed to extend the framework to even more complex environments and to integrate with emerging AI architectures. Additionally, as ARL systems become more stable and capable, ethical considerations around autonomous decision-making will become increasingly important.

The research community will need to develop robust testing methodologies for safety and alignment in agentic systems, building on the stability foundations provided by frameworks like ARLArena. Computational efficiency is another challenge: stable ARL training needs to be accessible to researchers and developers with limited resources.

Conclusion

The ARLArena framework and its accompanying SAMPO optimization method represent a significant step toward making agentic reinforcement learning practical and scalable. By addressing the stability problems that have plagued ARL systems, this research opens new possibilities for building AI agents that can tackle complex, real-world problems.

As artificial intelligence continues to evolve rapidly, frameworks like ARLArena that provide systematic, reproducible approaches to fundamental challenges will be essential for turning theoretical advances into practical applications. If these stability gains carry over to broader settings, their effects will likely be felt across AI research and development.

Source: ARLArena: A Unified Framework for Stable Agentic Reinforcement Learning, arXiv:2602.21534