A new open-source framework called ART (Agent Reinforcement Trainer) aims to change how AI engineers train agents by automating one of the hardest parts of reinforcement learning: reward function design. Developed by OpenPipe, ART combines GRPO (Group Relative Policy Optimization) with RULER (Relative Universal LLM-Elicited Rewards) to score agent behavior automatically, which could substantially speed up AI agent development.

The Reward Engineering Bottleneck

Reinforcement learning has long been hampered by what researchers call the "reward engineering problem." Traditional approaches require human experts to meticulously design reward functions that guide AI agents toward desired behaviors. This process is notoriously difficult—poorly designed rewards can lead to agents finding unintended shortcuts or failing to learn meaningful behaviors altogether.

For example, in training a robot to walk, a simple reward based solely on forward movement might result in the robot learning to fall forward rather than developing proper walking gaits. Similarly, in game-playing agents, overly simplistic rewards can lead to exploitation of game mechanics rather than genuine strategic understanding.
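The walking example can be made concrete with a toy calculation. The sketch below is purely illustrative: the two episodes, their numbers, and the `fall_penalty` value are invented, and neither function comes from ART. It shows how a reward based only on forward displacement prefers a forward fall over a stable step, and how one extra hand-tuned term reverses that preference.

```python
def forward_only_reward(start_x: float, end_x: float) -> float:
    """Naive reward: forward displacement of the torso and nothing else."""
    return end_x - start_x


def shaped_reward(start_x: float, end_x: float, upright: bool,
                  fall_penalty: float = 5.0) -> float:
    """Hand-tuned variant: same displacement term, minus a penalty for falling."""
    reward = end_x - start_x
    return reward if upright else reward - fall_penalty


# Two hypothetical one-second episodes (values invented for illustration).
lunge = {"start_x": 0.0, "end_x": 0.9, "upright": False}  # robot falls forward
step = {"start_x": 0.0, "end_x": 0.4, "upright": True}    # robot takes one step

naive_lunge = forward_only_reward(lunge["start_x"], lunge["end_x"])
naive_step = forward_only_reward(step["start_x"], step["end_x"])
shaped_lunge = shaped_reward(**lunge)
shaped_step = shaped_reward(**step)

print("naive  :", naive_lunge, "vs", naive_step)    # the fall scores higher
print("shaped :", shaped_lunge, "vs", shaped_step)  # the step scores higher
```

Every such patch is another term to tune, which is the manual effort that automatic reward systems try to remove.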

How ART Solves the Problem

The ART framework addresses this challenge by combining GRPO and RULER. GRPO is a policy optimization method that samples a group of rollouts for the same task and updates the policy according to how each rollout's reward compares with the rest of its group, which avoids training a separate value model. RULER supplies those rewards automatically: instead of a hand-written scoring function, an LLM judge compares the trajectories in a group and scores them relative to one another.
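The "group relative" part of GRPO can be illustrated in a few lines. This is a minimal sketch of the usual advantage normalization (reward minus group mean, divided by group standard deviation), not code from ART; the function name, the `eps` constant, and the choice of population standard deviation are assumptions made for the example.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards: list[float], eps: float = 1e-8) -> list[float]:
    """Normalize each reward against the other rollouts in its group.

    Rollouts that beat the group average get a positive advantage,
    those below it get a negative one.
    """
    if not rewards:
        raise ValueError("a group needs at least one rollout")
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population std; implementations may differ here
    # eps keeps the division defined when every rollout scored the same.
    return [(r - mu) / (sigma + eps) for r in rewards]


# Three rollouts of the same task with hypothetical rewards.
advantages = group_relative_advantages([0.2, 0.5, 0.8])
print(advantages)
```

Because only the difference from the group mean matters, the rewards do not need to be calibrated on an absolute scale, and a group in which every rollout receives the same score contributes no learning signal.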

According to the announcement shared by Akshay Pachaar, this combination "eliminates the need to hand-craft reward functions." If validated across tasks, that claim would mark a significant advance in reinforcement learning methodology.

Technical Architecture and Implementation

While specific implementation details continue to emerge, the framework appears to operate on several key principles:

Automatic Reward Discovery: RULER relies on an LLM acting as a judge, which reads several trajectories for the same task and assigns each a relative score. This differs from inverse reinforcement learning or classical reward shaping, since no demonstrations or hand-tuned reward terms are required.

Group-Based Optimization: GRPO compares multiple rollouts of the same task against one another, so only the relative ordering of rewards within a group matters. This pairs naturally with a judge that is better at ranking attempts than at assigning absolute scores.

Open-Source Accessibility: Being released as open-source software ensures that the broader AI community can examine, validate, and contribute to the framework's development.
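The automatic-reward principle above can be sketched as a function that hands a whole group of trajectories to a judge and gets back one score per trajectory. Everything here is hypothetical and is not ART's API: `Judge`, `stub_judge`, and `score_group` are names invented for this example, and the stub replaces what would in practice be an LLM call with a simple word-overlap heuristic so the code runs offline.

```python
from typing import Callable, Sequence

# Hypothetical interface: a judge sees the task and all trajectories at once.
Judge = Callable[[str, Sequence[str]], list[float]]


def stub_judge(task: str, trajectories: Sequence[str]) -> list[float]:
    """Stand-in for an LLM judge: fraction of task words a trajectory mentions."""
    task_words = set(task.lower().split())
    scores = []
    for trajectory in trajectories:
        hits = len(task_words & set(trajectory.lower().split()))
        scores.append(hits / max(len(task_words), 1))
    return scores


def score_group(task: str, trajectories: Sequence[str],
                judge: Judge = stub_judge) -> list[float]:
    """Ask the judge for one score per trajectory and clamp each to [0, 1]."""
    scores = judge(task, trajectories)
    if len(scores) != len(trajectories):
        raise ValueError("judge must return exactly one score per trajectory")
    return [min(1.0, max(0.0, s)) for s in scores]


group = [
    "searched flight to paris and did book it",
    "asked about weather",
]
print(score_group("book flight paris", group))
```

The resulting scores play the role of rewards for the group, with no task-specific reward function written by hand. Swapping `stub_judge` for a real model call would not change the calling code.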

The GitHub repository referenced in the announcement provides the complete codebase, documentation, and examples for researchers and engineers to begin experimenting with the framework immediately.

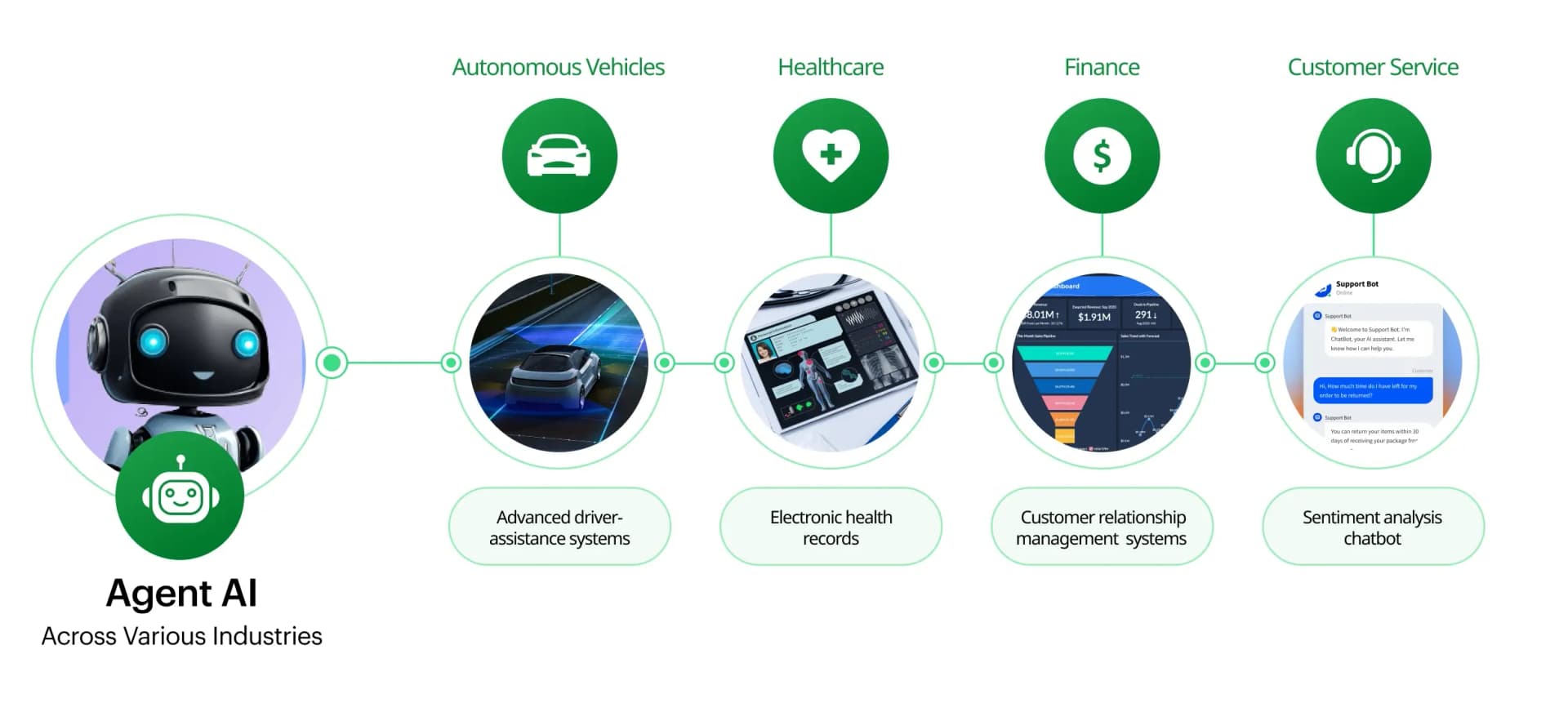

Potential Applications and Impact

The implications of automated reward engineering extend across numerous domains:

Robotics: Training physical robots could become significantly faster and more reliable, as engineers no longer need to spend weeks or months fine-tuning reward functions for complex motor tasks.

Game AI: Development of non-player characters and game-playing agents could accelerate, with the system automatically discovering rewards that lead to engaging and challenging behaviors.

Autonomous Systems: Self-driving vehicles, drones, and other autonomous systems could benefit from more robust learning processes that don't rely on fragile, hand-crafted reward structures.

Scientific Research: AI systems for scientific discovery could explore solution spaces more effectively when freed from human biases in reward design.

Challenges and Considerations

Despite its promising approach, ART faces several challenges that the AI community will need to address:

Interpretability: Automatically generated rewards may be difficult for humans to understand or audit, potentially creating "black box" systems where it's unclear why agents behave as they do.

Safety Alignment: Ensuring that automatically discovered rewards align with human values and safety constraints remains a critical concern, particularly for real-world applications.

Scalability: The computational requirements of automatic reward generation combined with policy optimization need to be manageable for practical applications.

Validation: The framework will require extensive testing across diverse environments and tasks to establish its effectiveness relative to traditional approaches.

The Future of Agent Training

ART represents a step toward reinforcement learning without hand-written reward functions: systems that learn effective behaviors while the reward signal itself is generated automatically. As the framework evolves, we may see:

- Hybrid Approaches: Combining automatic reward generation with human oversight for critical applications

- Domain Specialization: Versions of ART optimized for specific application areas like robotics, gaming, or conversational AI

- Integration with Existing Tools: Incorporation into popular reinforcement learning frameworks like RLlib, Stable-Baselines3, or the Gymnasium (formerly OpenAI Gym) ecosystem

Getting Started with ART

For AI engineers interested in experimenting with ART, the GitHub repository provides the starting point. The open-source nature of the project encourages community contributions, bug reports, and extensions that could further enhance the framework's capabilities.

As with any emerging technology, early adopters should approach with both enthusiasm and appropriate skepticism, testing the framework thoroughly in their own domains and sharing what they learn about its strengths and limitations.

Source: Original announcement by Akshay Pachaar on X/Twitter with reference to GitHub repository