Communication has emerged as one of the most significant challenges in developing functional multi-agent AI systems. As artificial intelligence progresses from individual chatbots to coordinated teams of specialized agents, researchers are discovering that getting AI agents to communicate effectively and reach agreement presents difficulties that closely mirror those of human collaboration.

The Communication Challenge in Multi-Agent Systems

Multi-agent systems represent the next frontier in artificial intelligence, where multiple specialized AI agents work together to solve complex problems that would be difficult or impossible for a single agent to handle. These systems promise everything from automated business workflows to sophisticated scientific discovery pipelines. However, as researcher Omar Sar noted in recent work, "Communication is one of the biggest challenges in multi-agent systems."

The fundamental problem is straightforward but profound: when AI agents with different capabilities, perspectives, or objectives need to work together, they must communicate effectively to coordinate their actions, resolve conflicts, and reach consensus on decisions. This becomes particularly challenging when agents have access to different information, operate under different constraints, or prioritize different aspects of a problem.

Testing LLM-Based Agents' Ability to Agree

Recent research has begun systematically testing how well large language model (LLM)-based agents can communicate and reach agreement. The studies typically involve creating multiple AI agents with distinct roles or perspectives and presenting them with scenarios requiring negotiation, collaboration, or decision-making.

Early findings are mixed. Some agents demonstrate surprisingly sophisticated negotiation strategies, while others struggle with basic communication protocols. The research examines questions like: Can AI agents recognize when they have conflicting information? Do they know how to resolve those conflicts? Can they reach consensus when their objectives don't perfectly align?
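
To make the first of these questions concrete, here is a minimal sketch of conflict detection, assuming each agent's claims have already been extracted into simple key-value facts. That extraction step is the hard part with LLM-based agents and is not shown here. The function name `find_conflicts`, the agent names, and the fact keys are all hypothetical and chosen only for illustration.

```python
def find_conflicts(reports):
    """Return facts on which agents disagree.

    `reports` maps an agent name to a dict of {fact_key: value}. Values must
    be hashable. The result maps each disputed key to {value: [agents]}.
    """
    seen = {}
    for agent, facts in reports.items():
        for key, value in facts.items():
            seen.setdefault(key, {}).setdefault(value, []).append(agent)
    # A key is disputed only if more than one distinct value was reported.
    return {key: by_value for key, by_value in seen.items() if len(by_value) > 1}


# Hypothetical reports from two agents in a supply chain scenario.
reports = {
    "inventory_agent": {"stock_level": 120, "warehouse": "north"},
    "shipping_agent": {"stock_level": 95, "warehouse": "north"},
}

conflicts = find_conflicts(reports)
print(conflicts)
```

This only catches exact mismatches on shared keys. Two agents that describe the same fact in different words, or that disagree on something only one of them reported, would need a richer comparison.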

The Mechanics of AI Agreement

At the technical level, getting AI agents to agree involves several complex components. First, agents must be able to express their positions clearly and understand others' positions accurately. This requires robust natural language understanding and generation capabilities. Second, agents need reasoning abilities to evaluate different positions and find common ground. Third, they require some form of decision-making framework to determine when agreement has been reached and what that agreement entails.
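
The third component, deciding when agreement has been reached, can be sketched as a simple quorum rule over stated positions. This is one possible decision framework under stated assumptions, not a description of any particular system: the `Position` structure, the `check_agreement` function, and the agent names are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Position:
    """One agent's stated stance on a decision (hypothetical structure)."""
    agent: str
    choice: str
    rationale: str


def check_agreement(positions, quorum=1.0):
    """Return the agreed choice, or None if no choice reaches the quorum.

    `quorum` is the fraction of agents that must back the same choice;
    1.0 means unanimity.
    """
    if not positions:
        return None
    counts = Counter(p.choice for p in positions)
    choice, votes = counts.most_common(1)[0]
    return choice if votes / len(positions) >= quorum else None


positions = [
    Position("planner", "option_a", "lowest cost"),
    Position("reviewer", "option_a", "meets safety constraints"),
    Position("executor", "option_b", "fastest to deploy"),
]

print(check_agreement(positions))              # None: not unanimous
print(check_agreement(positions, quorum=0.6))  # option_a: 2 of 3 agree
```

The quorum threshold is a design choice. Unanimity is the strictest reading of "agreement", while a lower threshold trades some dissent for a faster decision.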

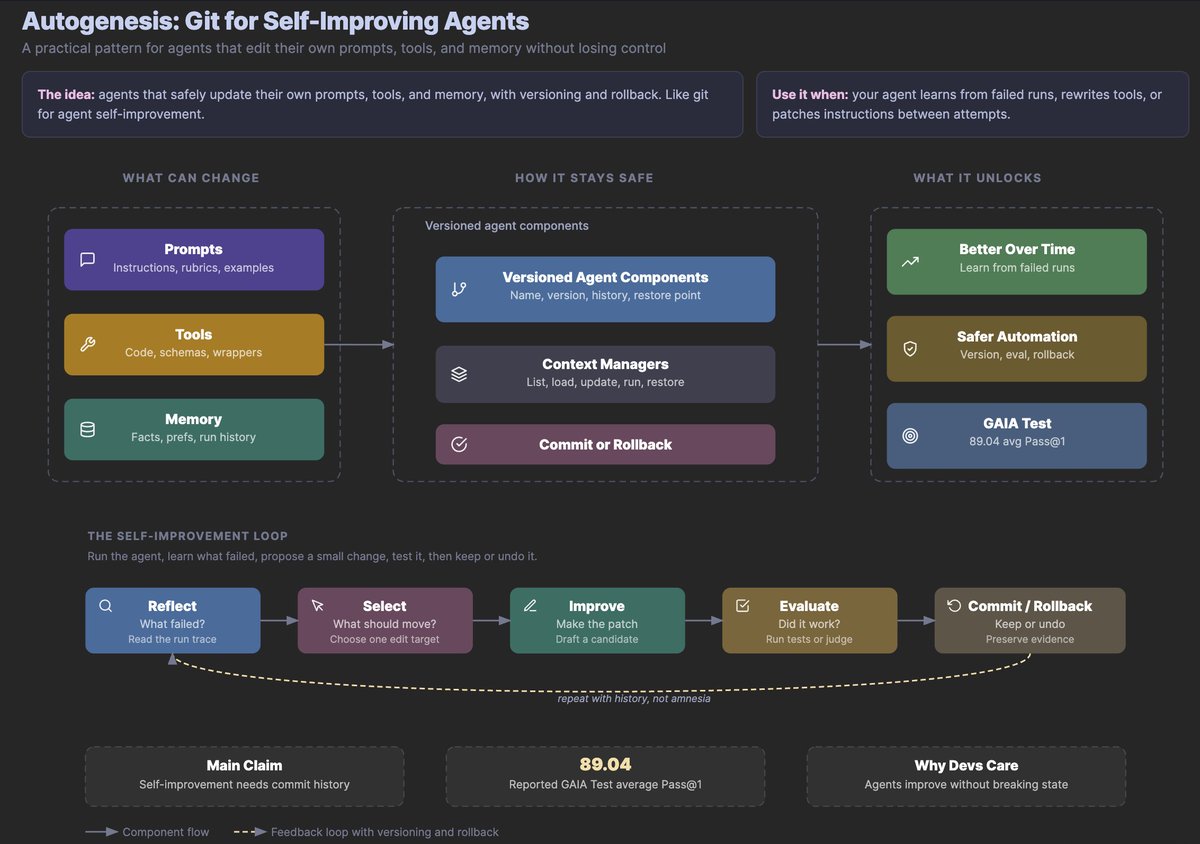

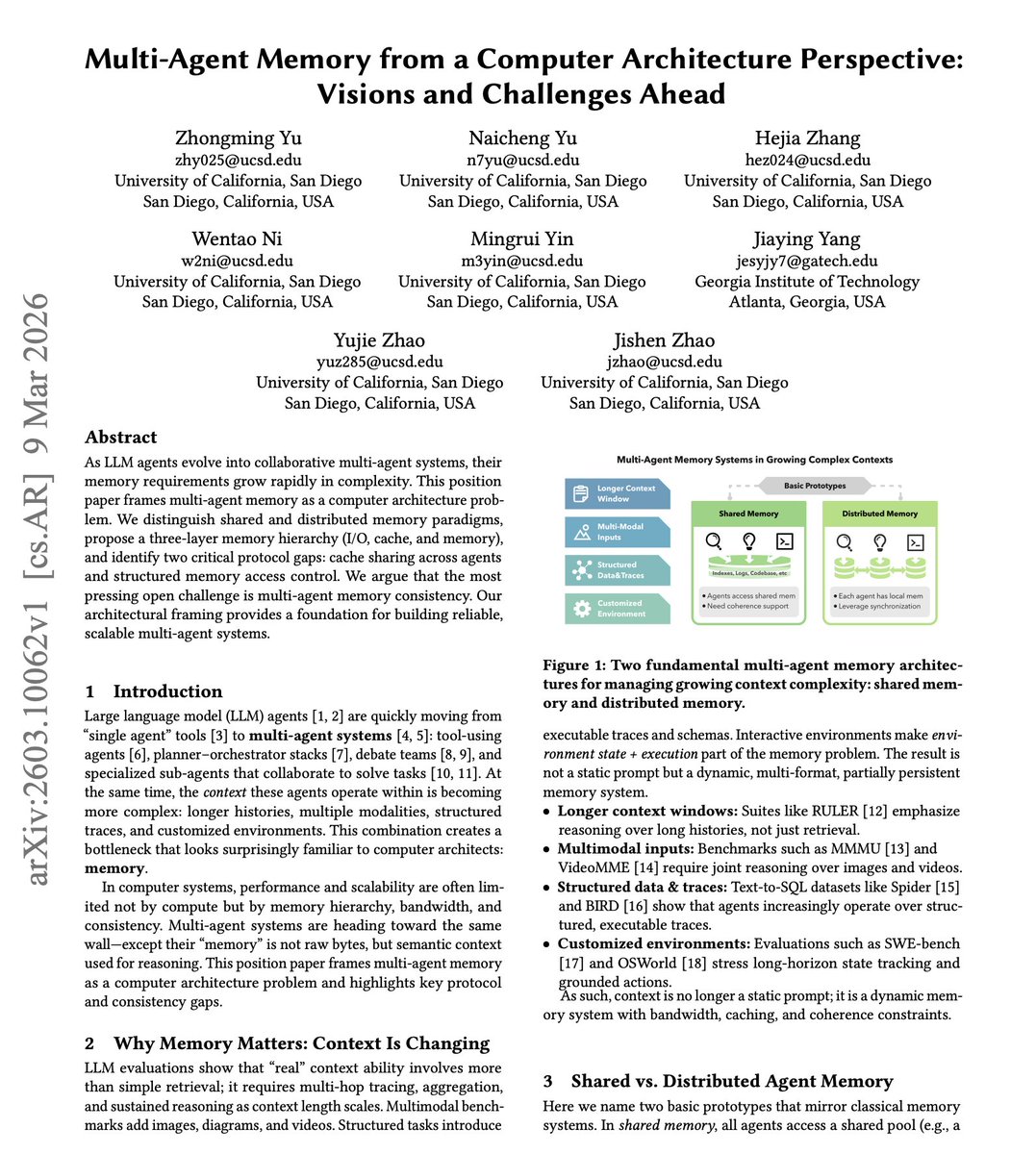

Researchers are experimenting with various approaches to facilitate agreement, including structured communication protocols, shared memory systems, and hierarchical decision-making processes. Some systems implement formal negotiation frameworks borrowed from economics and game theory, while others rely on more organic conversation-based approaches.
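
As an illustration of a structured protocol combined with a negotiation framework, the sketch below has two agents exchange typed messages in an alternating-offers loop with fixed concessions. It is a deliberately simplified stand-in for the bargaining models studied in game theory, not a reproduction of any specific system. The `Message` schema, the `negotiate` function, and all numeric values are assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass
class Message:
    """A structured message exchanged between agents (hypothetical schema)."""
    sender: str
    kind: str     # "propose" or "accept"
    value: float


def negotiate(buyer_start, buyer_limit, seller_start, seller_limit,
              step, max_rounds=50):
    """Alternating offers with fixed concessions.

    Returns (agreed_value, transcript); agreed_value is None when the two
    agents reach their limits, or run out of rounds, without overlapping.
    """
    buyer_offer, seller_offer = buyer_start, seller_start
    transcript = []
    for _ in range(max_rounds):
        transcript.append(Message("buyer", "propose", buyer_offer))
        if buyer_offer >= seller_offer:
            # The buyer's bid meets the seller's current ask.
            transcript.append(Message("seller", "accept", buyer_offer))
            return buyer_offer, transcript
        transcript.append(Message("seller", "propose", seller_offer))
        # Each side concedes by `step`, but never past its own limit.
        new_buyer = min(buyer_offer + step, buyer_limit)
        new_seller = max(seller_offer - step, seller_limit)
        if new_buyer == buyer_offer and new_seller == seller_offer:
            return None, transcript  # both at their limits: deadlock
        buyer_offer, seller_offer = new_buyer, new_seller
    return None, transcript


deal, log = negotiate(60.0, 90.0, 100.0, 80.0, step=5.0)
print(deal, len(log))

no_deal, _ = negotiate(60.0, 70.0, 100.0, 80.0, step=5.0)
print(no_deal)
```

Keeping every message in a typed transcript is what makes the exchange "structured": a supervising agent or a human reviewer can audit exactly which offers were made and where the negotiation stalled.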

Implications for Real-World Applications

The ability of AI agents to reach agreement has profound implications for practical applications. In healthcare, multiple AI systems might need to agree on a diagnosis or treatment plan. In finance, automated trading systems may need to coordinate so that their combined actions do not disrupt markets. In autonomous vehicles, separate onboard systems must agree on navigation and safety decisions.

When AI agents fail to agree, the consequences can range from inefficient operations to catastrophic failures. A supply chain optimization system where inventory management and shipping agents can't agree could lead to stockouts or overstocking. Emergency response systems where different agents disagree on resource allocation could delay critical aid.

The Human-AI Collaboration Dimension

This research also sheds light on how humans and AI agents might collaborate more effectively. Understanding how AI agents reach (or fail to reach) agreement can inform the design of human-AI teaming interfaces. If we know how AI agents naturally communicate and negotiate, we can build better systems for humans to interact with AI teams.

Some researchers are exploring hybrid approaches where humans help mediate disagreements between AI agents or where AI agents learn negotiation strategies by observing human teams. This bidirectional learning, in which AI learns from human collaboration and humans learn from AI communication patterns, could accelerate progress in both domains.

Ethical Considerations and Alignment

The question of whether AI agents can agree is deeply connected to AI alignment and safety concerns. If we deploy teams of AI agents to make important decisions, we need assurance that they will reach agreements that align with human values and objectives. Researchers are particularly concerned about scenarios where AI agents might reach agreements that are technically optimal but ethically problematic.

There's also the risk of "groupthink" among AI agents, where the desire for agreement overrides critical evaluation of alternatives. Just as human teams can fall into consensus traps, AI agents might develop similar patterns if not properly designed.
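
One rough way to watch for this pattern is to record each agent's independent answer before any discussion and compare it with the final answers. The sketch below computes the share of agents who dropped their first answer to join the final majority. The `conformity_rate` name and the example data are hypothetical, and a high value is only a prompt for closer inspection: agents may have switched because they were shown better evidence.

```python
from collections import Counter


def conformity_rate(initial, final):
    """Fraction of agents who abandoned their first answer for the majority.

    `initial` and `final` map agent names to choices made before and after
    discussion. Both must cover the same agents.
    """
    if not final:
        raise ValueError("at least one agent is required")
    majority = Counter(final.values()).most_common(1)[0][0]
    switched = [
        agent for agent, choice in final.items()
        if choice == majority and initial[agent] != majority
    ]
    return len(switched) / len(final)


# Hypothetical answers from four agents before and after discussion.
initial = {"a": "x", "b": "y", "c": "z", "d": "x"}
final = {"a": "x", "b": "x", "c": "x", "d": "x"}

print(conformity_rate(initial, final))  # 0.5: two of four agents switched
```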

Future Research Directions

Current research is just beginning to scratch the surface of AI agent communication and agreement. Future work will likely explore more complex scenarios involving larger numbers of agents, more diverse agent types, and more ambiguous decision contexts. Researchers are particularly interested in how communication patterns evolve as agents interact repeatedly over time.

Another promising direction involves developing standardized benchmarks for evaluating agent agreement capabilities. Just as we have benchmarks for individual AI performance on specific tasks, we need ways to measure how well AI teams communicate and collaborate.
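
A benchmark of this kind would need summary metrics. The sketch below shows three plausible ones, assuming each benchmark run is logged as an episode with a known reference answer: how often agents agree at all, how often they agree on the reference answer, and how many rounds agreement takes. The `Episode` fields, the `summarize` function, and the sample data are hypothetical and do not come from any existing benchmark.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass
class Episode:
    """Outcome of one multi-agent run (hypothetical log format)."""
    scenario: str
    rounds: int
    agreed_choice: Optional[str]  # None when the agents never agreed
    reference_choice: str         # assumed known "good" answer


def summarize(episodes):
    """Aggregate agreement metrics over a list of episodes."""
    if not episodes:
        raise ValueError("at least one episode is required")
    agreed = [e for e in episodes if e.agreed_choice is not None]
    good = [e for e in agreed if e.agreed_choice == e.reference_choice]
    return {
        "agreement_rate": len(agreed) / len(episodes),
        "good_agreement_rate": len(good) / len(episodes),
        "mean_rounds_to_agreement": (
            mean(e.rounds for e in agreed) if agreed else None
        ),
    }


episodes = [
    Episode("budget_split", 2, "plan_a", "plan_a"),
    Episode("route_choice", 4, "route_2", "route_1"),
    Episode("triage_order", 6, "order_b", "order_b"),
    Episode("vendor_pick", 10, None, "vendor_x"),
]

summary = summarize(episodes)
print(summary)
```

Separating the plain agreement rate from the rate of agreement on the reference answer matters because a team can converge quickly on a wrong decision.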

The Path Forward

As AI systems become more sophisticated and multi-agent architectures become more common, solving the communication and agreement challenge will be crucial. The research highlighted by Omar Sar represents an important step toward understanding and addressing these challenges.

The ultimate goal isn't just to create AI agents that can agree, but to create systems that reach good agreements: decisions that are effective, efficient, and aligned with human values. This requires advances not just in natural language processing, but in reasoning, ethics, and system design.

What makes this research particularly exciting is its interdisciplinary nature. Progress will come from combining insights from computer science, linguistics, psychology, economics, and philosophy. As we build AI systems that increasingly resemble collaborative teams rather than individual tools, understanding how they communicate and agree will become one of the most important areas of AI research.

Source: Research discussed by Omar Sar (@omarsar0) on communication challenges in multi-agent AI systems.