In the rapidly evolving landscape of artificial intelligence, one persistent challenge has limited the capabilities of autonomous agents: memory. As AI researcher Omar Sar (@omarsar0) highlighted in his recent analysis, "One of the biggest challenges with AI agents is memory." When tasks grow longer and more complex, agents typically lose track of what they've learned, what they've tried, and what approaches actually worked. This fundamental limitation has constrained AI's ability to tackle truly ambitious, multi-step problems.

Now, researchers at Accenture have introduced a potential solution that could transform how AI agents operate. Their new system, Memex(RL), gives agents indexed experience memory—a structured, searchable repository of past experiences that can be retrieved precisely when needed, rather than relying on the increasingly inadequate approach of expanding raw context windows.

The Memory Problem in Modern AI

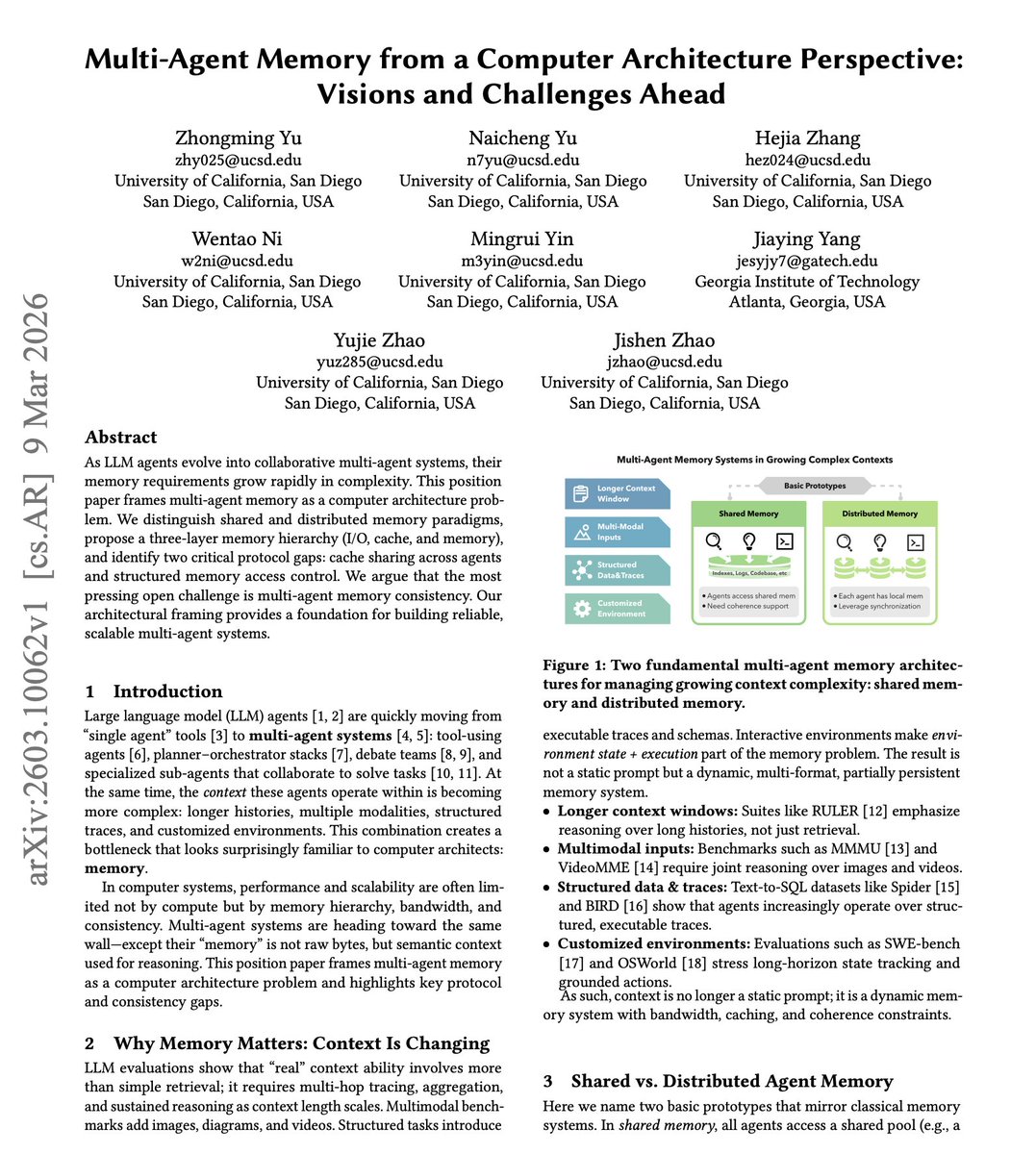

Current AI systems, particularly those built on large language models, face what might be called the "Goldfish Problem." Despite their impressive capabilities in processing information, they carry no persistent memory across sessions by default, and within a long conversation anything that falls outside the context window is lost. Each interaction typically exists in isolation, with the model unable to build upon previous experiences in a systematic way.

This becomes particularly problematic for what researchers call "long-horizon tasks"—complex operations that require multiple steps, extended timeframes, and adaptive learning. Examples include deep research projects that span weeks, multi-step coding assignments requiring iterative debugging, or complex planning scenarios that demand learning from previous attempts.

Traditional approaches have tried to address this by expanding context windows, that is, the amount of text a model can consider at once. However, this brute-force method has diminishing returns. With standard self-attention, compute grows roughly quadratically with context length, and a larger window still fails to solve the fundamental problem: how to identify and retrieve the most relevant past experiences when facing new challenges.
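
To make the cost argument concrete, here is a back-of-the-envelope sketch. It assumes standard full self-attention, where every token is compared with every other token, so the pairwise work grows with the square of the context length. The function name `attention_pairs` and the chosen window sizes are illustrative only, and real systems add optimizations that this ignores.

```python
def attention_pairs(context_tokens: int) -> int:
    """Query-key pairs scored by full self-attention (per layer, per head)."""
    return context_tokens * context_tokens


BASELINE = 8_000  # illustrative baseline window, not a figure from the paper

for n in (8_000, 32_000, 128_000):
    ratio = attention_pairs(n) // attention_pairs(BASELINE)
    print(f"{n:>7} tokens -> {ratio}x the pairwise work of {BASELINE} tokens")
```

Under this assumption, a 16x larger window means 256x more pairwise comparisons, which is why simply growing the window gets expensive quickly.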

How Memex(RL) Works: Structured Memory for AI Agents

Memex(RL) takes a fundamentally different approach. Instead of simply expanding the context window, the system builds a structured, searchable index of an agent's past experiences. Think of it as giving AI agents their own personal Wikipedia—a carefully organized knowledge base they can consult during complex tasks.

The system operates through several key mechanisms:

Experience Indexing: As an agent completes tasks, Memex(RL) automatically indexes the experiences, creating structured representations that capture not just what happened, but the context, outcomes, and lessons learned.

Intelligent Retrieval: When facing new challenges, the agent can query this memory index to retrieve relevant past experiences. This isn't just keyword matching—the system understands semantic relationships and can identify analogous situations from the past.

Reinforcement Learning Integration: The "RL" in Memex(RL) indicates that the system uses reinforcement learning to improve both how memories are stored and how they're retrieved. The agent learns which types of experiences are most valuable to remember and how to best apply past knowledge to current problems.
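
The three mechanisms above can be sketched in a few dozen lines. This is a toy illustration, not the Memex(RL) implementation: the class and field names (`Experience`, `ExperienceIndex`, `value`) are hypothetical, bag-of-words cosine similarity stands in for whatever semantic retrieval the real system uses, and a simple reward-weighted moving average stands in for full reinforcement learning.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass
class Experience:
    task: str            # what the agent was trying to do
    outcome: str         # what actually happened
    lesson: str          # takeaway worth remembering
    value: float = 0.5   # learned usefulness estimate in [0, 1] (assumption)


def _bag(text: str) -> Counter:
    """Lowercased word counts; a crude stand-in for a semantic embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def _cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


class ExperienceIndex:
    def __init__(self, learning_rate: float = 0.3):
        self.entries: list[Experience] = []
        self.learning_rate = learning_rate

    def add(self, exp: Experience) -> int:
        """Experience indexing: store a structured record, return its id."""
        self.entries.append(exp)
        return len(self.entries) - 1

    def retrieve(self, query: str, k: int = 2) -> list[int]:
        """Retrieval: rank entries by similarity weighted by learned value."""
        q = _bag(query)
        scored = [
            (_cosine(q, _bag(e.task + " " + e.lesson)) * e.value, i)
            for i, e in enumerate(self.entries)
        ]
        scored.sort(reverse=True)
        return [i for score, i in scored[:k] if score > 0]

    def feedback(self, idx: int, reward: float) -> None:
        """RL-style update: move the entry's value toward the observed reward."""
        e = self.entries[idx]
        e.value += self.learning_rate * (reward - e.value)


index = ExperienceIndex()
a = index.add(Experience(
    task="fix failing unit test in parser",
    outcome="passed after patch",
    lesson="check off-by-one errors in token offsets",
))
b = index.add(Experience(
    task="plan warehouse delivery routes",
    outcome="late deliveries",
    lesson="traffic assumptions were too optimistic",
))

hits = index.retrieve("parser unit test fails again", k=1)
index.feedback(hits[0], reward=1.0)  # the retrieved memory helped
```

In this sketch, memories that lead to good outcomes gain value and rank higher in later retrievals, while unhelpful ones fade. That is the intuition behind learning what to remember, in a much simpler form than a trained policy.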

Practical Applications and Implications

The implications of effective agent memory are profound across multiple domains:

Research and Analysis: AI agents could conduct truly deep research, remembering findings from weeks earlier and connecting disparate pieces of information that would otherwise remain isolated.

Software Development: Programming assistants could maintain context across entire development cycles, remembering why certain approaches were abandoned, what bugs emerged in previous versions, and which solutions proved most effective.

Complex Planning: From business strategy to logistics optimization, agents could learn from past planning cycles, remembering what assumptions proved incorrect and which strategies delivered the best results.

Personal AI Assistants: The technology could eventually power assistants that remember our preferences, past conversations, and learned patterns across months or years of interaction.

The Technical Breakthrough: Scaling Without Context Explosion

Perhaps the most significant technical achievement of Memex(RL) is its approach to scaling. As noted in the research, the system shows "how to scale this without blowing up context length." This addresses one of the most pressing practical concerns in AI deployment: the computational cost of processing ever-larger context windows.

By creating an efficient indexing and retrieval system, Memex(RL) allows agents to access potentially vast stores of past experience without needing to process that entire history with each new query. This makes long-horizon tasks computationally feasible in real-world applications where resources are constrained.
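
One way to picture this is a fixed token budget for retrieved memories: however large the experience store grows, only the top-ranked entries that fit the budget enter the prompt. The sketch below is an assumption-laden illustration rather than the paper's method. The function `pack_memories` is hypothetical, the budget of 50 is arbitrary, and whitespace word counts stand in for a real tokenizer.

```python
def pack_memories(ranked_memories: list[str], token_budget: int) -> list[str]:
    """Greedily keep the highest-ranked memories that fit within the budget."""
    selected: list[str] = []
    used = 0
    for text in ranked_memories:
        cost = len(text.split())  # crude stand-in for a real token count
        if used + cost <= token_budget:
            selected.append(text)
            used += cost
    return selected


# Memories are assumed to arrive already ranked by relevance.
ranked = [
    "Retry with exponential backoff fixed the flaky API call.",
    "Caching the schema lookup cut planning time in half.",
    "Full transcript of the earlier debugging session " + "log line " * 500,
]

prompt_memories = pack_memories(ranked, token_budget=50)
print(len(prompt_memories), "memories packed into the prompt")
```

The oversized transcript stays in the index and out of the prompt, so the per-query cost is bounded by the budget instead of by the total amount of stored history.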

Looking Forward: The Future of Agent Memory

The introduction of Memex(RL) represents more than just another technical paper—it points toward a fundamental shift in how we conceptualize AI capabilities. Memory has long been recognized as a crucial component of intelligence, both artificial and natural. By giving agents structured, searchable memory, researchers are addressing what has been one of the most significant gaps between narrow AI and more general intelligence.

As this technology develops, we can expect to see AI agents that don't just perform tasks but learn from them in persistent ways. They could build institutional knowledge, develop task-specific expertise, and become more capable partners in complex problem-solving. AI with durable, usable memory may be closer than we think, and Memex(RL) is one concrete step in that direction.

Source: Research from Accenture as highlighted by Omar Sar (@omarsar0) on X/Twitter, with additional details from the published paper.