A new startup, Avoko, is tackling what its founder identifies as the hardest part of building AI products: the fact that AI agents do not behave like humans, and traditional human research methods fail to explain why.

In a detailed thread, founder Hasan T. announced the launch of Avoko, described as the first platform built to "interview agents directly and map their actual behavior." The core premise is that while foundation models have become powerful, the unpredictable and often brittle nature of agentic systems built on top of them is the primary bottleneck for product development.

Key Takeaways

- Avoko has launched a platform designed to interview AI agents directly to map their actual behavior.

- The platform targets what its founder calls the primary bottleneck in AI product development: agents' non-human, unpredictable behavior, which traditional user research cannot diagnose.

What Happened

Hasan T., a product builder in the AI space, publicly launched Avoko. The platform's stated goal is to provide developers and product teams with tools to understand why their AI agents behave the way they do. The announcement frames the problem clearly: the model itself (e.g., GPT-4, Claude 3) is often not the limiting factor. Instead, it's the emergent, non-human behavior of multi-step, tool-using agents that derails product reliability and user experience.

Traditional user research methods—A/B testing, surveys, interviews with human users—are insufficient for diagnosing failures in agentic logic, hallucinated tool calls, or infinite loops in reasoning. Avoko proposes a solution by creating a platform where the agent itself can be "interviewed" and its decision-making process mapped and analyzed.

Context

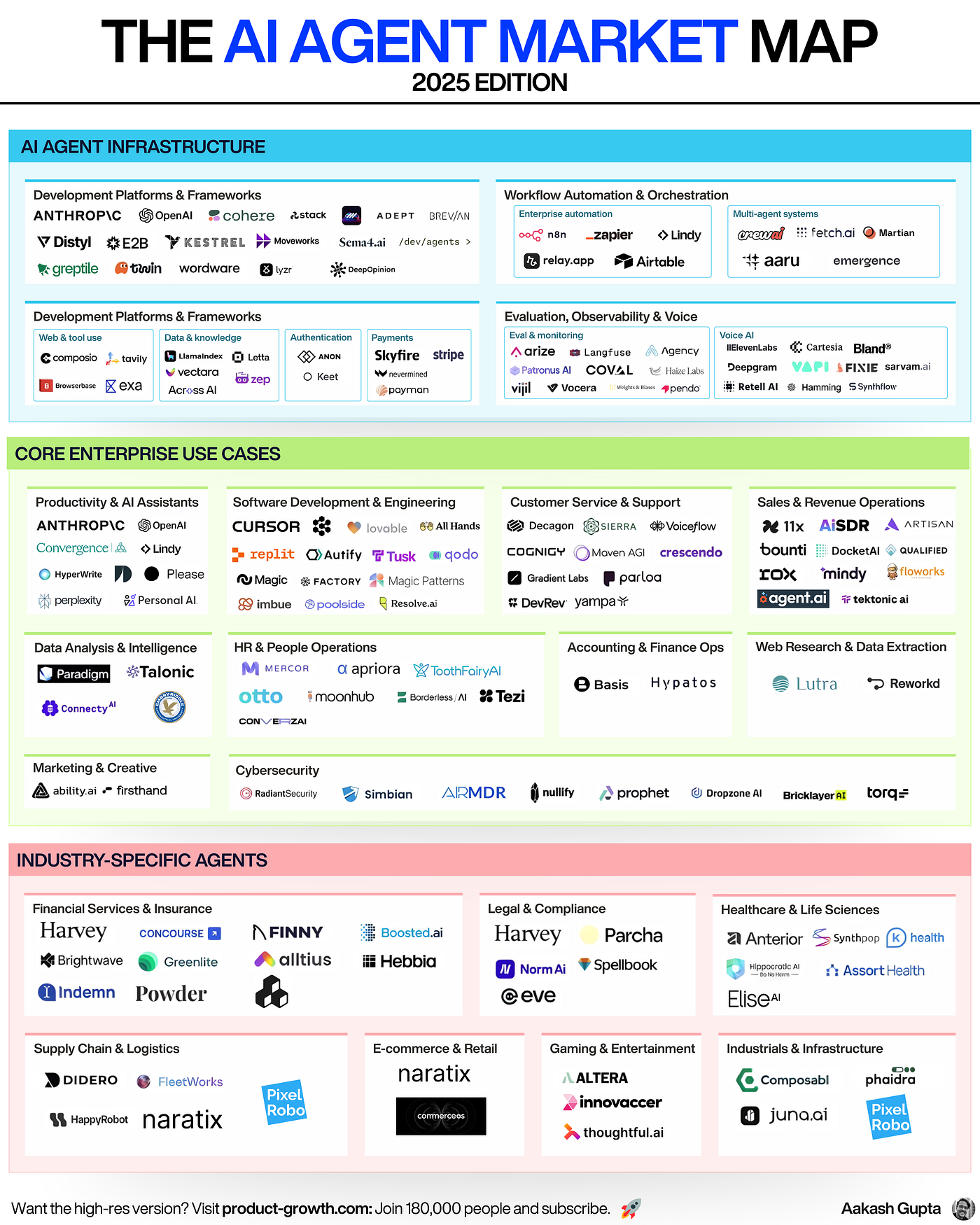

The launch comes amid an industry-wide push toward AI agents—systems that can autonomously perform multi-step tasks using tools like web browsers, calculators, and APIs. Major labs like OpenAI, Anthropic, and Google DeepMind are heavily invested in agent research, while startups like Cognition Labs (with its AI software engineer, Devin) and MultiOn have brought agentic capabilities to the forefront.

However, as noted in our previous coverage of Devin's technical report, a significant gap exists between demos of agent capabilities and their reliable, production-ready deployment. Debugging an agent's failure is notoriously difficult, often requiring developers to sift through massive, unstructured logs of chain-of-thought reasoning and tool calls.

Avoko positions itself squarely in this emerging AI observability and evaluation sector, which includes companies like Weights & Biases, Langfuse, and Arize AI, but with a specific focus on interactive, diagnostic "interviews" rather than passive metric tracking.

How It Works (As Described)

While the announcement thread does not disclose the full technical details of the Avoko platform, the concept of "interviewing agents" suggests a move beyond static evaluation benchmarks (like SWE-bench for coding or WebArena for web navigation).

Instead, the platform likely allows developers to:

- Conduct interactive sessions with their agent, posing follow-up questions about its actions in real time.

- Visualize the agent's decision tree, mapping out the branches of reasoning, tool selections, and context evaluations that led to a particular output or failure.

- Isolate failure points in complex, multi-turn agent workflows, distinguishing between a model misunderstanding, a tool-use error, or a prompt engineering flaw.

- Create reproducible test suites based on these interactive interviews to catch regressions in agent behavior.

The promise is to shift debugging from an artisanal, log-scrolling exercise to a structured, analytical process.
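
To make the idea of isolating a failure point concrete, here is a minimal sketch in Python. It is not Avoko's API, which the announcement does not describe. The `Step` record, the `KNOWN_TOOLS` set, the `first_failure` helper, and the category labels are all hypothetical names chosen for illustration. The sketch assumes an agent run has already been captured as an ordered list of steps.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Step:
    """One recorded agent step (hypothetical trace format)."""
    thought: str              # the agent's stated reasoning for this step
    tool: Optional[str]       # tool the agent chose; None for pure reasoning
    tool_ok: bool = True      # whether the tool call succeeded


# Assumption: the set of tools actually registered with the agent.
KNOWN_TOOLS = {"search", "calculator"}


def first_failure(trace: List[Step]) -> Optional[Tuple[int, str]]:
    """Return (step index, category) for the first failing step, or None."""
    for i, step in enumerate(trace):
        if step.tool is None:
            continue  # reasoning-only step, nothing to check here
        if step.tool not in KNOWN_TOOLS:
            return i, "hallucinated_tool_call"
        if not step.tool_ok:
            return i, "tool_error"
    return None


trace = [
    Step("Look up the price", "search"),
    Step("Convert currency", "fx_convert"),   # tool that was never registered
    Step("Add tax", "calculator", tool_ok=False),
]
print(first_failure(trace))
```

In this toy trace the earliest problem is the call to an unregistered tool at step index 1, so the later calculator error is never reached. A saved trace like this could also serve as a regression case: rerun the agent after a change and check that `first_failure` returns `None`.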

gentic.news Analysis

This launch is a direct response to a critical, growing pain point in the AI engineering stack. For the past year, the industry narrative has been dominated by model capabilities—context lengths, reasoning scores on MMLU, and cost-per-token. Avoko's thesis correctly identifies that the next major hurdle is system reliability. As companies like Sierra and Klarna deploy conversational AI agents at scale for customer service, the cost of unpredictable agent behavior is no longer just a developer annoyance; it's a business risk.

The focus on "interviewing" aligns with a broader trend toward more sophisticated evaluation frameworks. OpenAI's Model Spec for steering model behavior and Anthropic's work on Constitutional AI are attempts to make AI outputs more predictable and aligned. Avoko applies a similar principle of specification and inspection but at the level of the agentic system, not the base model.

However, the technical challenge Avoko faces is immense. "Mapping actual behavior" of a stochastic, reasoning LLM is fundamentally different from profiling deterministic software. The platform's success will hinge on its ability to provide insights that are genuinely actionable—not just another layer of visualization on top of an inscrutable process. If it can effectively reduce the mean-time-to-diagnosis for agent failures, it will become an essential tool in the AI product development lifecycle.

This also highlights a competitive frontier. Established ML observability players are likely to build or acquire similar capabilities quickly. The company to watch is Weights & Biases, which has deep integration with AI training workflows and has been expanding into LLM evaluation. Avoko's first-mover focus on interactive agent debugging could give it a niche advantage, but the space is poised to become crowded quickly.

Frequently Asked Questions

What is an AI agent?

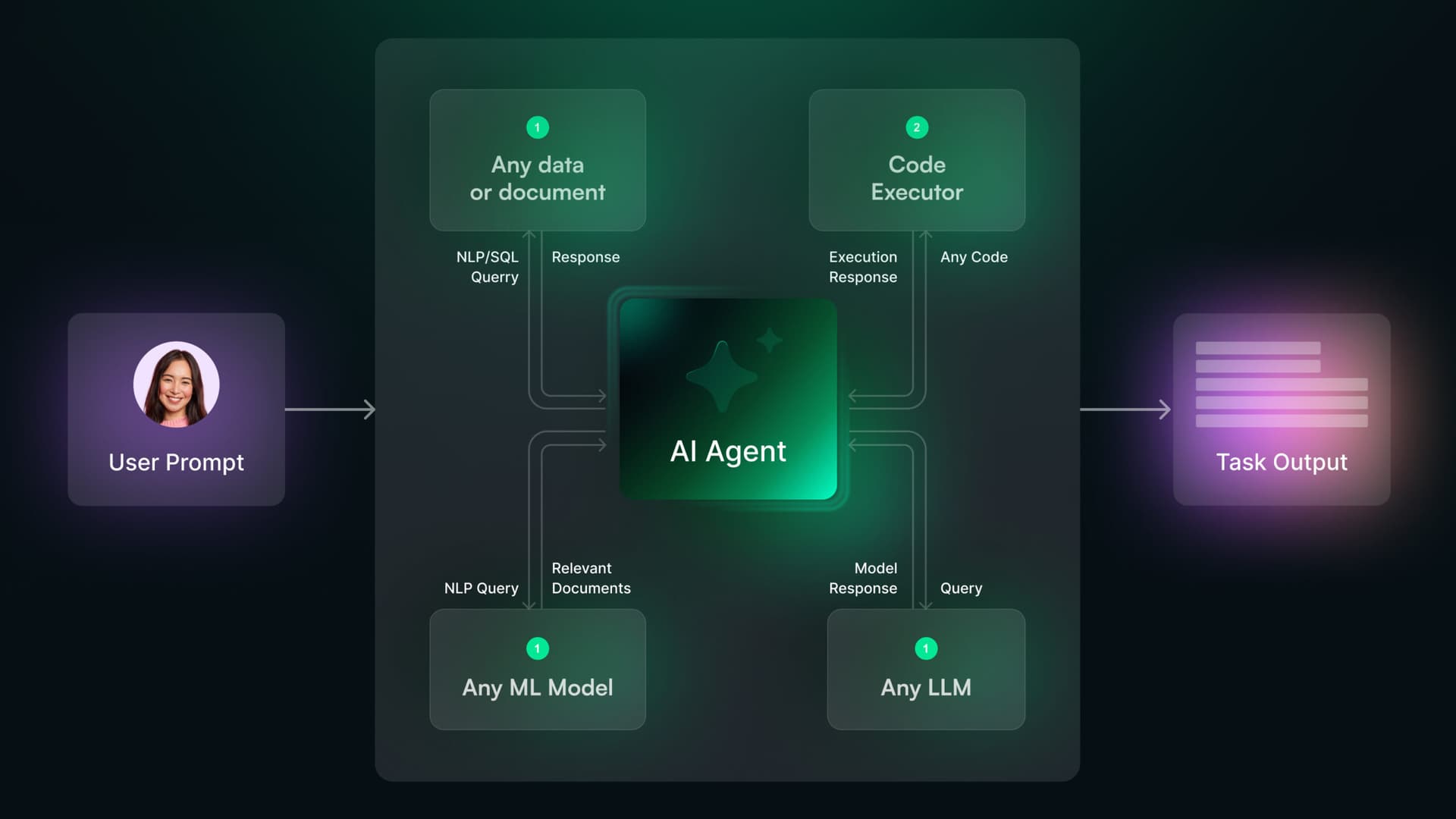

An AI agent is a system that uses a large language model (LLM) as a reasoning engine to autonomously perform multi-step tasks. Unlike a simple chatbot that responds to a single prompt, an agent can plan a sequence of actions, such as searching the web, performing calculations, writing code, or using software APIs, to achieve a defined goal without constant human intervention.

Why is debugging AI agents so hard?

Debugging AI agents is difficult because their failures are often emergent and non-deterministic. An error might stem from a misunderstanding in the initial user instruction, a flaw in the pre-programmed reasoning steps (the "agent framework"), a hallucinated tool call, an unexpected API response, or the base model's knowledge cutoff. Traditional software debugging tools are designed for deterministic code paths and are ill-suited for tracing the probabilistic reasoning of an LLM.
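
The non-determinism described above can be illustrated with a small Python sketch. The `toy_agent` function is a stand-in that picks tools at random; it is an assumption for illustration, not a real LLM agent or any vendor's API. The `path_distribution` helper is likewise hypothetical. The point is only that the same task can produce many different action sequences, which is why a single log rarely explains a failure.

```python
import random
from collections import Counter
from typing import Tuple


def toy_agent(task: str, rng: random.Random) -> Tuple[str, ...]:
    """Stand-in for an agent: chooses three tools with some randomness."""
    return tuple(rng.choice(["search", "calculator"]) for _ in range(3))


def path_distribution(task: str, runs: int = 50, seed: int = 0) -> Counter:
    """Run the toy agent repeatedly and count each distinct tool sequence."""
    rng = random.Random(seed)  # seeded so the experiment itself is repeatable
    return Counter(toy_agent(task, rng) for _ in range(runs))


dist = path_distribution("book a flight")
for path, count in dist.most_common(3):
    print(count, " -> ".join(path))
```

Counting distinct paths across repeated runs gives a rough picture of how widely an agent's behavior varies on one task, which a deterministic debugger stepping through a single execution would not show.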

How is Avoko different from existing AI observability tools?

Existing AI observability tools (e.g., Langfuse, Arize) primarily focus on tracking metrics, logging inputs/outputs, and monitoring for issues like latency or cost. Avoko's proposed differentiation is active, interactive diagnosis—the ability to "interview" the agent. This suggests a more hands-on, exploratory debugging environment designed to answer "why" an agent failed, rather than just reporting "that" it failed.

Who is the target user for a platform like Avoko?

The primary users are AI engineers, product managers, and developer teams who are building and deploying production applications powered by AI agents. This includes teams working on AI coding assistants, customer support automation, personal AI assistants, data analysis bots, and any other complex, multi-step automated workflow where reliability and predictable behavior are critical.