The Innovation

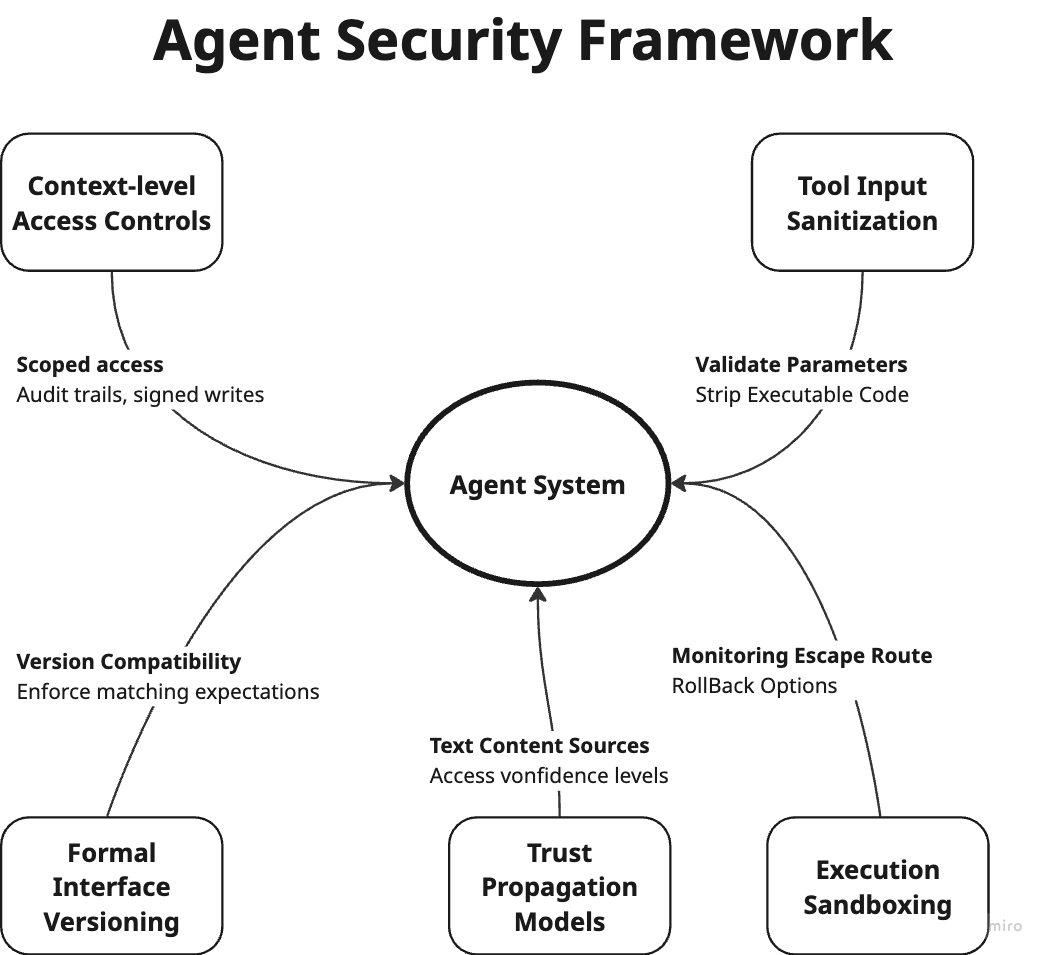

This is not a new AI model or algorithm, but a critical governance framework: a practical methodology for auditing deployed AI systems. The approach recognizes that traditional software testing is insufficient for probabilistic AI systems that learn from data and adapt. The framework evaluates AI across five dimensions:

- Accuracy: Going beyond basic performance metrics to cover real-world reliability and drift detection.

- Dataset Adequacy: Assessing whether training data represents real-world scenarios and edge cases.

- Bias and Fairness: Testing for discriminatory outcomes across protected attributes (age, gender, ethnicity, etc.).

- Regulatory Compliance: Ensuring adherence to GDPR, CCPA, and emerging AI-specific regulations.

- Security Resilience: Testing resistance to adversarial attacks, data poisoning, and model extraction.
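To make the drift-detection part of the Accuracy dimension concrete, here is a minimal sketch of one common drift measure, the Population Stability Index (PSI). The framework as described does not name a specific metric, so the choice of PSI, the function name `population_stability_index`, the ten equal-width bins, and the sample data are all assumptions for illustration.

```python
import math
from typing import List, Sequence


def population_stability_index(
    baseline: Sequence[float],
    current: Sequence[float],
    n_bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """PSI between a baseline sample and a current sample of one numeric feature."""
    if not baseline or not current:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(baseline), max(baseline)
    if hi == lo:
        raise ValueError("baseline has no spread; PSI is undefined")
    width = (hi - lo) / n_bins  # equal-width bins defined on the baseline range

    def bin_fractions(values: Sequence[float]) -> List[float]:
        counts = [0] * n_bins
        for v in values:
            # Values outside the baseline range are clamped into the edge bins.
            idx = max(0, min(n_bins - 1, int((v - lo) / width)))
            counts[idx] += 1
        # eps keeps empty bins from producing log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    p = bin_fractions(baseline)
    q = bin_fractions(current)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


# Hypothetical model scores: at audit time vs. skewed upward in production.
baseline_scores = [i / 100 for i in range(100)]
shifted_scores = [0.5 + i / 200 for i in range(100)]

print(population_stability_index(baseline_scores, baseline_scores))  # identical samples give 0.0
print(population_stability_index(baseline_scores, shifted_scores))   # a large positive value
```

A commonly cited rule of thumb treats a PSI below 0.1 as stable and above 0.25 as a significant shift. These cut-offs are conventions rather than part of the framework, and would need tuning per feature.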

The framework emphasizes that the question has shifted from "Does the model work?" to "Can this system survive an audit?" This represents a maturity shift from experimental AI to production AI that must withstand regulatory scrutiny, ethical challenges, and public accountability.

Why This Matters for Retail & Luxury

Luxury retailers are deploying AI across critical customer-facing functions where governance failures directly impact brand equity and customer trust:

Personalization & Recommendation Engines: LVMH, Kering, and Richemont use AI to suggest products, curate collections, and personalize customer journeys. An unaudited system might recommend only certain price points to specific demographics, creating a perception of exclusion or discrimination.

Visual Search & Virtual Try-On: AI-powered visual search (like Farfetch's) and virtual try-on tools (used by Burberry and others) rely on computer vision models. Without proper auditing, these systems may perform poorly on diverse skin tones, body types, or cultural aesthetics, alienating customer segments.

Dynamic Pricing & Inventory Optimization: AI models that adjust prices or allocate inventory based on demand signals must be audited for fairness and compliance. Price discrimination based on location or browsing history could violate consumer protection laws.

Clienteling & CRM: AI that predicts customer lifetime value or identifies "high-potential" clients must be examined for bias. If the system disproportionately identifies customers from certain neighborhoods or backgrounds, it could reinforce historical biases in marketing outreach.
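One way to examine a "high-potential" flag for this kind of skew is to compare how often each group receives it. The sketch below computes per-group selection rates and their ratio (often called the disparate impact ratio). The group labels, the counts, and the function names are hypothetical, and the metric is one possible choice rather than something the framework prescribes.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def selection_rates(records: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Share of customers in each group that the model flagged as high-potential."""
    totals: Dict[str, int] = defaultdict(int)
    selected: Dict[str, int] = defaultdict(int)
    for group, flagged in records:
        totals[group] += 1
        if flagged:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates: Dict[str, float]) -> float:
    """Lowest group selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group, so there is no gap to measure
    return min(rates.values()) / highest


# Hypothetical audit sample: (neighborhood group, flagged as high-potential)
sample = (
    [("group_a", True)] * 40 + [("group_a", False)] * 60
    + [("group_b", True)] * 20 + [("group_b", False)] * 80
)

rates = selection_rates(sample)
ratio = disparate_impact_ratio(rates)
print(rates)   # group_a is flagged at 40%, group_b at 20%
print(ratio)   # group_b is flagged half as often as group_a
```

A ratio below 0.8 is sometimes used as a trigger for closer review, borrowing the "four-fifths" heuristic from US employment guidance. It is a screening convention, not a legal threshold for retail, and a low ratio calls for investigation rather than proving bias on its own.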

Fraud Detection: AI systems flagging suspicious transactions must balance security with customer experience. False positives that disproportionately affect certain customer segments create friction and damage relationships.
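The disproportionate false positives described here can be checked by computing the false positive rate separately for each customer segment. The following is a minimal sketch that assumes labeled outcomes are available for past transactions. The segment names, the counts, and the function name are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# Each transaction: (segment, flagged_by_model, confirmed_fraud)
Transaction = Tuple[str, bool, bool]


def false_positive_rates(transactions: Iterable[Transaction]) -> Dict[str, float]:
    """Per segment: share of legitimate transactions the model wrongly flagged."""
    legitimate: Dict[str, int] = defaultdict(int)
    wrongly_flagged: Dict[str, int] = defaultdict(int)
    for segment, flagged, is_fraud in transactions:
        if is_fraud:
            continue  # only legitimate transactions can be false positives
        legitimate[segment] += 1
        if flagged:
            wrongly_flagged[segment] += 1
    return {seg: wrongly_flagged[seg] / n for seg, n in legitimate.items()}


# Hypothetical history: 1,000 legitimate domestic and 200 legitimate
# international transactions, plus a few confirmed fraud cases.
history = (
    [("domestic", True, False)] * 10 + [("domestic", False, False)] * 990
    + [("international", True, False)] * 12 + [("international", False, False)] * 188
    + [("domestic", True, True)] * 5 + [("international", True, True)] * 3
)

fpr = false_positive_rates(history)
print(fpr)  # international customers are wrongly flagged about six times as often
```

In this made-up sample the overall false positive rate looks low, yet legitimate international customers are blocked far more often than domestic ones. That is the kind of gap an aggregate accuracy figure would hide.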

Business Impact & Expected Uplift

While AI auditing frameworks don't directly generate revenue, they protect against catastrophic losses and enable sustainable AI adoption:

Risk Mitigation Value:

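- Regulatory Fines: GDPR fines can reach €20 million or 4% of global annual turnover, whichever is higher. The EU AI Act sets fines of up to €35 million or 7% of global annual turnover for prohibited AI practices, and up to €15 million or 3% for breaches of most other obligations, including those covering high-risk systems.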

- Brand Damage: A single public incident of AI bias (like a virtual try-on failing for darker skin tones) can generate negative press that erodes brand equity built over decades. Luxury brands' reputation for excellence is particularly vulnerable.

- Customer Trust Erosion: Bain & Company research shows 70% of luxury consumers consider brand ethics and sustainability in purchasing decisions. Trustworthy AI governance aligns with these values.
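To put the regulatory exposure in the list above into numbers: the GDPR ceiling is the higher of the fixed amount and the turnover percentage, so for a large group the percentage term dominates. The sketch below works through that arithmetic. The turnover figures are hypothetical, and the result is a statutory upper bound, not a prediction of an actual fine.

```python
def gdpr_max_fine_eur(global_annual_turnover_eur: float) -> float:
    """Upper bound of a GDPR fine: EUR 20 million or 4% of turnover, whichever is higher."""
    return max(20_000_000.0, 0.04 * global_annual_turnover_eur)


# Hypothetical turnovers, in euros.
boutique_group = 100_000_000       # 4% would be EUR 4 million, so the fixed amount applies
luxury_group = 10_000_000_000      # 4% is roughly EUR 400 million

print(gdpr_max_fine_eur(boutique_group))
print(gdpr_max_fine_eur(luxury_group))
```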

Positive Business Impact:

- Increased AI Adoption Confidence: Properly audited systems can be deployed more broadly and confidently, accelerating ROI from AI investments.

- Reduced Implementation Costs: Early auditing identifies issues before full deployment, avoiding costly rework. Industry benchmarks suggest proper governance reduces AI project failure rates by 30-50%.

- Competitive Differentiation: In a 2023 Capgemini survey, 62% of consumers said they would place higher trust in companies whose AI systems are ethical and transparent. For luxury brands competing on exclusivity and trust, this is particularly valuable.

Time to Value: Implementing an auditing framework typically shows value within the first quarter through risk identification and prevention of deployment delays. Full maturity takes 6-12 months as processes become institutionalized.

Implementation Approach

Technical Requirements:

- Data Infrastructure: Access to training datasets, production data streams, and metadata about data provenance.

- Testing Tools: Bias detection libraries (IBM's AI Fairness 360, Google's What-If Tool), explainability tools (SHAP, LIME), and security testing frameworks.

- Monitoring Systems: MLOps platforms (MLflow, Kubeflow, DataRobot) with drift detection and performance monitoring capabilities.

- Team Skills: Data scientists with ethics training, legal/compliance experts familiar with AI regulations, and potentially external audit specialists.

Complexity Level: Medium for companies with existing AI deployments. Requires customizing the framework to specific use cases and integrating with existing systems, but doesn't require novel research.

Integration Points:

- CRM/CDP Systems: To audit customer segmentation and personalization models.

- E-commerce Platforms: To test recommendation engines and search algorithms.

- PIM Systems: To ensure product attribute data doesn't introduce bias.

- Legal/Compliance Systems: To document audit results and demonstrate regulatory compliance.

- Quality Assurance Processes: Integrating AI audits into existing software release cycles.

Estimated Effort:

- Initial Framework Setup: 4-8 weeks to select tools, define processes, and train teams.

- First Use Case Audit: 2-4 weeks per major AI system (personalization engine, pricing model, etc.).

- Full Program Maturity: 6-12 months to audit all production AI systems and establish ongoing monitoring.

Governance & Risk Assessment

Data Privacy Considerations:

Auditing AI systems requires access to sensitive customer data and model outputs. That access must be handled in strict compliance with GDPR and CCPA:

- Use anonymized or synthetic data where possible for testing

- Ensure proper consent for data usage in model development and auditing

- Implement data minimization principles—only access what's necessary for the audit

- Maintain clear documentation of data lineage and processing purposes
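As an illustration of the minimization point above, the sketch below builds an audit extract that keeps only the fields an audit needs and replaces the customer identifier with a keyed hash. The field names, the key, and the function names are hypothetical. Note that keyed hashing is pseudonymization, not anonymization: under GDPR the output is still personal data, because anyone holding the key can re-link it.

```python
import hashlib
import hmac
from typing import Any, Dict

# Hypothetical allow-list: only the attributes this particular audit requires.
AUDIT_FIELDS = ("age_band", "region", "model_score")


def pseudonymize_id(customer_id: str, secret_key: bytes) -> str:
    """Keyed SHA-256 hash: stable per customer, not reversible without the key."""
    return hmac.new(secret_key, customer_id.encode("utf-8"), hashlib.sha256).hexdigest()


def to_audit_record(row: Dict[str, Any], secret_key: bytes) -> Dict[str, Any]:
    """Drop everything outside the allow-list and swap the raw ID for a pseudonym."""
    record = {field: row[field] for field in AUDIT_FIELDS}
    record["subject"] = pseudonymize_id(row["customer_id"], secret_key)
    return record


# Hypothetical CRM row; in practice the key would come from a secrets manager.
key = b"example-key-do-not-use-in-production"
crm_row = {
    "customer_id": "C-000123",
    "name": "Jane Example",
    "email": "jane@example.com",
    "age_band": "35-44",
    "region": "EU-West",
    "model_score": 0.82,
}

audit_row = to_audit_record(crm_row, key)
print(sorted(audit_row))  # ['age_band', 'model_score', 'region', 'subject']
```

Keeping the allow-list explicit also supports the documentation requirement: the list itself records which attributes were processed for the audit.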

Model Bias Risks:

Luxury fashion and beauty AI faces particular bias challenges:

- Skin Tone Bias: Virtual try-on and beauty recommendation systems trained predominantly on lighter skin tones

- Body Type Exclusion: Size recommendation algorithms that don't accommodate diverse body shapes

- Cultural Insensitivity: Style recommendation engines that misinterpret cultural attire or accessories

- Economic Bias: Personalization systems that prioritize high-net-worth customer patterns exclusively

Regular bias audits should include diverse testing panels and adversarial testing to identify edge cases.

Maturity Level: Production-ready framework with proven methodologies. The concepts are well-established in financial services and healthcare AI governance. Luxury retail adoption is accelerating but still early compared to these regulated industries.

Honest Assessment:

This is ready to implement now for any luxury retailer with production AI systems. The framework is not experimental—it's a necessary evolution of responsible AI practices. However, the specific application to luxury contexts (addressing aesthetic bias, cultural sensitivity, brand alignment) requires customization beyond generic frameworks.

For retailers just beginning their AI journey, implementing auditing from the first prototype is significantly easier than retrofitting governance onto deployed systems. The most forward-thinking approach is to "shift left"—integrating audit considerations into the AI development lifecycle from day one, rather than treating auditing as a final checkpoint before deployment.

Strategic Recommendation: Start with your highest-risk AI application (likely customer-facing personalization or recommendations), conduct a thorough audit using this framework, and use the findings to create a brand-specific AI ethics charter. This positions your brand as a leader in trustworthy AI while protecting your most valuable asset: customer trust.