Recent discussions among AI developers have highlighted a practical limitation: the single AGENTS.md file that works for small prototypes breaks down on serious software projects. As AI coding assistants like Claude Code take on larger tasks, developers are finding that single-prompt instructions hit a ceiling quickly on complex, large-scale codebases.

This problem isn't theoretical. It's a practical barrier that emerges as teams try to scale AI-assisted development beyond modest prototypes. A new paper titled "Codified Context" documents one solution and argues for a shift in how we think about AI's role in software development.

The Scaling Problem: Why Simple Prompts Fail

According to researcher Omar Sar, who referenced the paper on X, "A 1,000-line prototype can be fully described in a single prompt. A 100,000-line system cannot." This observation captures the core challenge: as projects grow in complexity, AI systems need more than initial instructions. They need ongoing, structured memory about how the project works, what patterns to follow, and what mistakes to avoid.

The traditional approach of using a single markdown file to guide AI agents works well for small projects but becomes unmanageable for serious software development. The AI lacks context about architectural decisions, domain-specific patterns, and lessons learned in previous development sessions. The result is repeated explanations, inconsistent implementations, and the same mistakes recurring from one session to the next.

The Codified Context Solution: A Three-Tier Memory Architecture

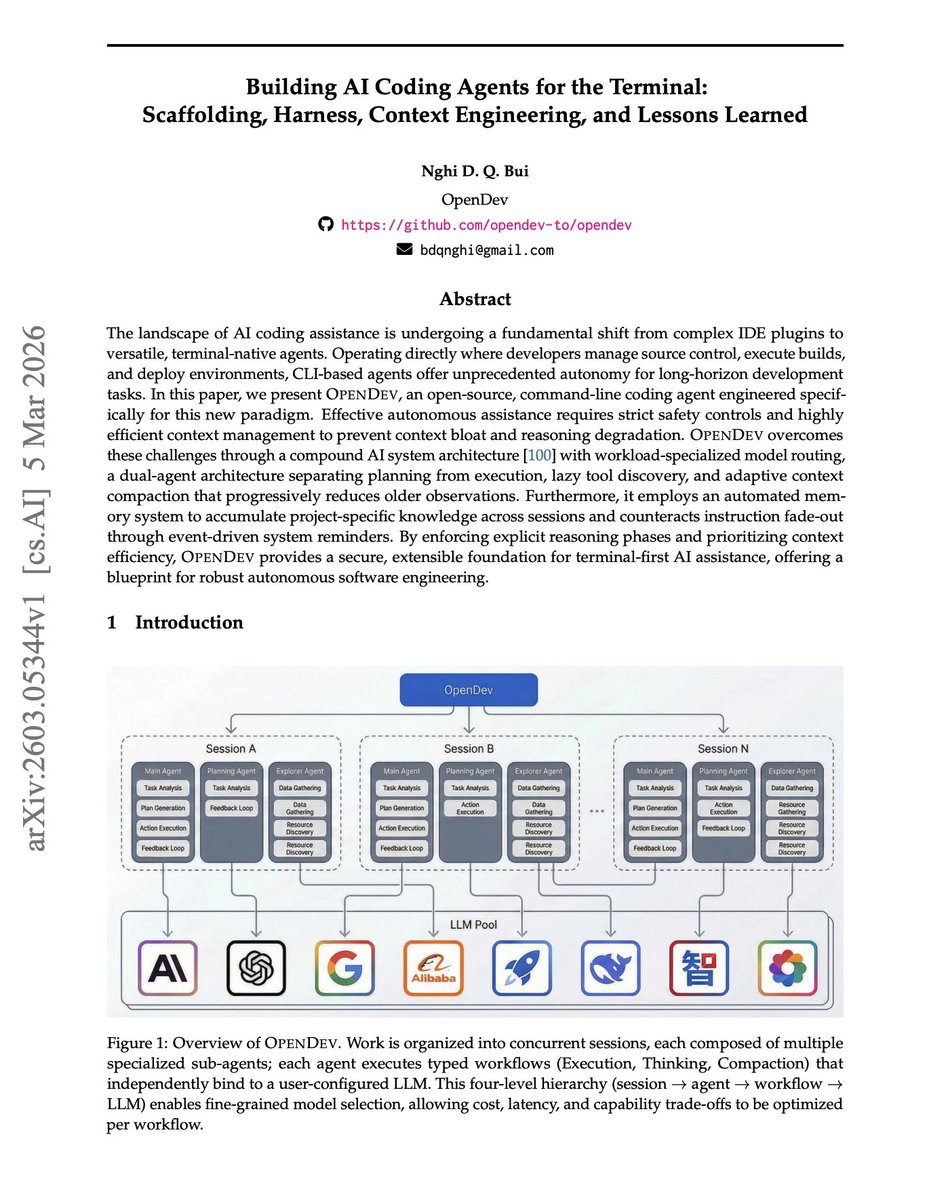

The paper documents a practical solution built while developing a 108,000-line C# distributed system across 283 development sessions over 70 days. The system uses a three-tier memory architecture:

1. Hot-Memory Constitution (660 lines, always loaded)

This foundational layer contains the essential rules, principles, and core conventions that govern the entire project. It's always available to the AI agent, providing the basic framework for all development decisions.

2. Specialized Domain-Expert Agents (19 agents, 9,300 lines total)

These agents are invoked for specific task types, and each contains deep knowledge about a particular domain or architectural pattern within the system. Rather than relying on one general-purpose agent, the system uses specialized experts for different aspects of development.

3. Cold-Memory Knowledge Base (34 specification documents, ~16,250 lines)

This extensive repository contains detailed specifications, architectural decisions, and historical context that can be queried on demand via an MCP (Model Context Protocol) retrieval server. It serves as the long-term memory of the project.
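As a rough illustration of how the three tiers could fit together, the sketch below assembles a prompt context from an always-loaded constitution, task-matched agents, and specs requested by name. The class names, the `build_context` method, and the sample strings are all hypothetical, and the on-demand tier is a plain dictionary lookup standing in for the MCP retrieval server; the paper's actual implementation is not described in this article.

```python
from dataclasses import dataclass, field


@dataclass
class DomainAgent:
    """Tier 2: a specialist prompt loaded only for matching task types."""
    name: str
    task_types: set[str]
    instructions: str


@dataclass
class ProjectMemory:
    """Hypothetical container for the three tiers described above."""
    constitution: str                                         # Tier 1: always loaded
    agents: list[DomainAgent] = field(default_factory=list)   # Tier 2: per task type
    specs: dict[str, str] = field(default_factory=dict)       # Tier 3: on demand

    def build_context(self, task_type: str, spec_names: tuple[str, ...] = ()) -> str:
        """Assemble the context for one task from the three tiers."""
        parts = [self.constitution]
        parts += [a.instructions for a in self.agents if task_type in a.task_types]
        parts += [self.specs[n] for n in spec_names if n in self.specs]
        return "\n\n".join(parts)


# Placeholder content; a real project would load these from files.
memory = ProjectMemory(
    constitution="RULES: follow project conventions.",
    agents=[
        DomainAgent("networking-expert", {"networking"}, "NETWORKING: hypothetical guidance."),
        DomainAgent("ui-expert", {"ui"}, "UI: hypothetical guidance."),
    ],
    specs={"save-format": "SPEC: hypothetical save-format notes."},
)

context = memory.build_context("networking", spec_names=("save-format",))
print(context)
```

The point of the split is that only the constitution is paid for on every task; the networking task above never sees the UI agent's instructions.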

Real-World Results and Metrics

Across the 283 development sessions, the paper reports the following activity:

- 2,801 human prompts

- 1,197 agent invocations

- 16,522 autonomous agent turns

- Approximately 6 autonomous turns per human prompt

- Knowledge-to-code ratio of 24.2%
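The two derived figures can be sanity-checked from the numbers quoted in this article. The sketch below assumes the knowledge-to-code ratio means lines of codified context (constitution, agents, and specs) divided by lines of code; that definition is an assumption here, and because the inputs are rounded, the result only approximates the reported 24.2%.

```python
# Figures quoted in the article.
human_prompts = 2_801
autonomous_turns = 16_522

constitution_lines = 660
agent_lines = 9_300
spec_lines = 16_250   # the article gives this as approximate
code_lines = 108_000  # rounded size of the C# system

# Autonomous turns per human prompt.
turns_per_prompt = autonomous_turns / human_prompts

# Assumed definition: codified-context lines relative to code lines.
knowledge_lines = constitution_lines + agent_lines + spec_lines
ratio = knowledge_lines / code_lines

print(f"turns per prompt: {turns_per_prompt:.1f}")
print(f"knowledge lines:  {knowledge_lines}")
print(f"knowledge/code:   {ratio:.1%}")
```

Both results land close to the reported values: just under 6 turns per prompt, and a ratio of roughly 24%.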

Perhaps most importantly, the system wasn't designed upfront through theoretical planning. Each new agent and specification emerged from real failures: recurring bugs, architectural mistakes, and forgotten conventions. The documentation evolved into what the researchers call "load-bearing infrastructure": components that agents depend on as memory rather than mere reference material.

The Evolution from Documentation to Infrastructure

This represents a fundamental shift in how we think about project documentation. Traditional documentation serves as reference material that humans might consult occasionally. In contrast, codified context becomes active infrastructure that AI agents rely on constantly to make decisions.

As the paper explains, each piece of knowledge was "codified so it could never require re-explanation again." This transforms documentation from passive information storage into active system components that prevent repetitive errors and ensure consistency across development sessions.
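One way to picture this failure-driven process is a simple log of problems per session, with a rule that flags any topic that keeps recurring and has no specification yet. The sketch below is only an illustration: the `topics_to_codify` function, the log format, and the threshold of two occurrences are hypothetical and do not come from the paper.

```python
from collections import Counter


def topics_to_codify(failure_log, existing_specs, threshold=2):
    """Return topics that failed at least `threshold` times and lack a spec."""
    counts = Counter(topic for topic, _note in failure_log)
    return sorted(
        topic
        for topic, n in counts.items()
        if n >= threshold and topic not in existing_specs
    )


# Hypothetical log entries: (topic, what went wrong).
failure_log = [
    ("serialization", "agent forgot the versioning convention"),
    ("networking", "retry logic reimplemented inconsistently"),
    ("serialization", "same versioning mistake in a later session"),
    ("ui", "one-off layout bug"),
    ("networking", "retry logic diverged again"),
]
existing_specs = {"networking"}  # already codified, so not flagged again

print(topics_to_codify(failure_log, existing_specs))  # ['serialization']
```

A one-off problem such as the UI bug stays out of the knowledge base, which keeps the codified context focused on mistakes that would otherwise need re-explaining.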

Implications for the Future of AI-Assisted Development

The success of this approach has several important implications:

- Scalability: The case study suggests that AI-assisted development can scale beyond small prototypes, at least to a system of roughly 108,000 lines.

- Specialization: The move toward specialized domain-expert agents suggests future AI development tools will need modular, composable intelligence rather than monolithic systems.

- Evolutionary Design: The organic emergence of agents and specifications from real failures suggests successful AI development systems will need to support continuous learning and adaptation.

- Knowledge Management: The 24.2% knowledge-to-code ratio suggests that AI development at this scale may require a significant investment in structured knowledge representation.

Practical Applications and Next Steps

For development teams looking to implement similar systems, the research suggests several key principles:

- Start with a basic constitution and let specialized agents emerge from actual development needs

- Implement retrieval systems that can efficiently query large knowledge bases

- Treat documentation as living infrastructure that evolves with the project

- Track the knowledge-to-code ratio as a rough indicator of how much project context has been codified

The paper also highlights the importance of tools like the MCP (Model Context Protocol) retrieval server for managing access to cold memory, suggesting that future AI development platforms will need sophisticated context management capabilities.
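To make the idea of querying cold memory concrete, here is a minimal keyword-overlap retriever over a handful of spec documents. It is a stand-in for the retrieval step only: it does not use the Model Context Protocol or any MCP library, and the `retrieve` function, file names, and spec contents are all hypothetical. A real system would likely use a stronger ranking method.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, specs: dict[str, str], top_k: int = 2) -> list[str]:
    """Rank specs by how many query words they share; drop non-matches."""
    query_words = tokenize(query)
    scored = []
    for name, body in specs.items():
        overlap = len(query_words & tokenize(body))
        if overlap:
            scored.append((overlap, name))
    # Highest overlap first; ties broken alphabetically by name.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [name for _, name in scored[:top_k]]


# Hypothetical cold-memory documents.
specs = {
    "save-format.md": "Save files are versioned; bump the version on every schema change.",
    "network-retries.md": "Retry failed network calls with exponential backoff.",
    "ui-layout.md": "Panels use a fixed grid layout.",
}

print(retrieve("how should network retry backoff work", specs))
# ['network-retries.md']
```

Only the matching document would then be added to the agent's context, so the remaining specifications cost nothing until a task actually needs them.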

Conclusion: Toward More Intelligent Development Partners

The "Codified Context" approach represents a significant step toward making AI systems true partners in software development rather than just sophisticated code generators. By giving AI systems structured, evolving memory about projects, we enable them to work more effectively on complex systems over extended periods.

As AI continues to transform software development, approaches like this three-tier memory architecture will likely become standard practice for serious development work. The days of simple prompt-based AI assistance are giving way to more sophisticated, memory-aware systems that can truly understand and contribute to large-scale software projects.

Source: Research on Codified Context architecture for AI-assisted development, documented in a paper referenced by Omar Sar (@omarsar0) on X/Twitter.