The Innovation

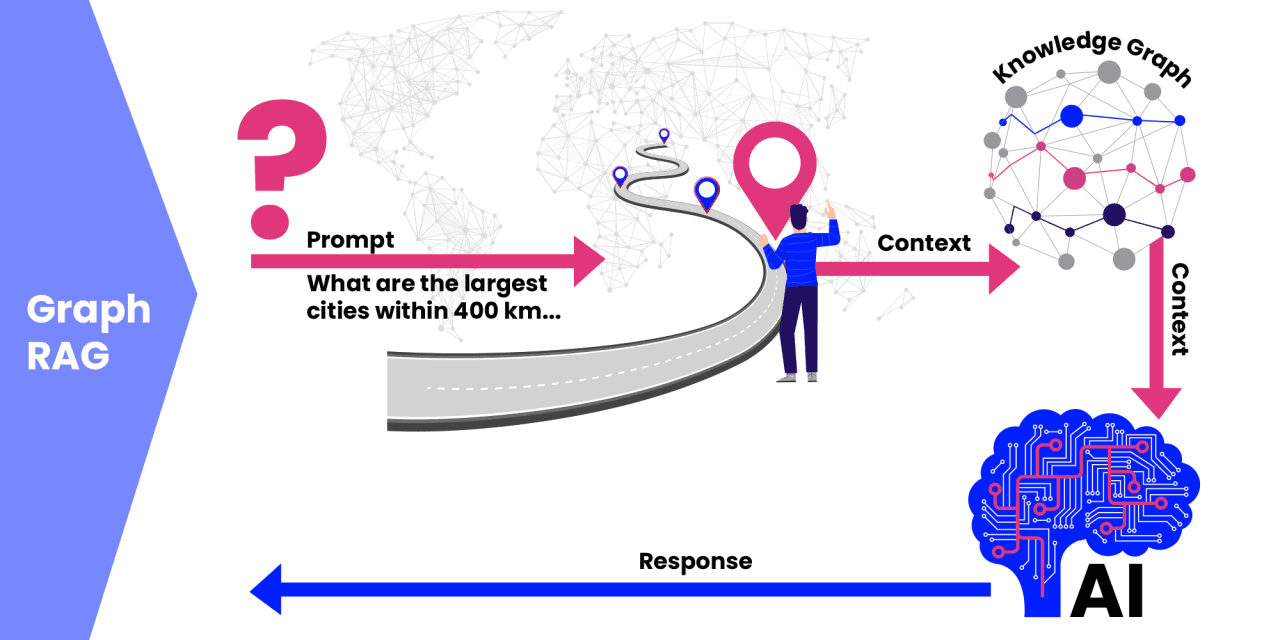

Retrieval-Augmented Generation (RAG) is a standard technique for grounding Large Language Models (LLMs) in specific, external knowledge to prevent hallucinations and improve accuracy. Traditional RAG uses vector similarity search to find relevant document chunks for a query. However, this method often fails at global sensemaking—tasks that require synthesizing and reasoning across a large corpus of documents to answer broad, complex questions.

GraphRAG was proposed as a solution, structuring documents into a knowledge graph where nodes are entities/concepts and edges are their relationships. It then uses community detection algorithms (like Leiden) to find clusters within this graph, which are recursively summarized to create a multi-layered understanding. The core innovation of this research is identifying a critical flaw: on the sparse knowledge graphs typical of real-world data (like customer communications), Leiden clustering produces non-reproducible, unstable community partitions due to the mathematical properties of modularity optimization.

The authors propose replacing Leiden with k-core decomposition. A k-core is a maximal subgraph where every node is connected to at least k other nodes within that subgraph. By progressively removing nodes with degree less than k, you get a deterministic, nested hierarchy of increasingly dense and connected subgraphs. This k-core hierarchy is computed in linear time and is perfectly reproducible.

The paper introduces heuristics to convert this density-based hierarchy into size-bounded, connectivity-preserving communities suitable for retrieval and summarization. It also adds a token-budget-aware sampling strategy to control LLM inference costs. The system was evaluated on diverse datasets (financial transcripts, news, podcasts) using multiple LLMs and judged by five independent LLM evaluators. The k-core-based GraphRAG consistently outperformed baseline methods in answer comprehensiveness and diversity while using fewer tokens.

Why This Matters for Retail & Luxury

For luxury brands, data is rich but fragmented: decades of client notes in a CRM, thousands of product reviews, transcripts from focus groups and private events, market intelligence reports, and social media commentary. A traditional vector RAG can find a specific review mentioning "calfskin leather," but it struggles with a strategic question like: "Based on all client feedback and market reports from the last two years, what are the emerging themes in our clients' perception of sustainability versus craftsmanship, and how do they vary by region?"

This is a global sensemaking task. The proposed k-core GraphRAG is uniquely suited for it. Key applications include:

- Client Intelligence & CRM: Synthesize all touchpoints (purchase history, personal stylist notes, service requests, event attendance) to build a 360-degree, evolving profile of a top client's motivations, lifestyle, and sentiment.

- Product Development & Merchandising: Analyze global product reviews, influencer content, and competitor reports to identify not just frequent keywords, but the underlying narrative clusters—how different customer segments conceptually link color, material, design, and brand heritage.

- Corporate Intelligence & Strategy: Process thousands of news articles, earnings calls, and industry analyses to map the competitive landscape, identifying core strategic alliances and peripheral market shifts.

- Clienteling & Personalization: Empower sales associates with AI tools that can answer complex, contextual questions about a client's history and preferences by reasoning across all past interactions, not just retrieving the last one.

Business Impact & Expected Uplift

The direct impact is on the quality and actionability of strategic insights, which drives better decision-making in product, marketing, and client relations.

- Quantified Impact from Research: The paper demonstrates consistent improvements in answer comprehensiveness and diversity (as judged by LLMs) alongside a reduction in token usage (directly translating to lower inference costs). While not expressed as a revenue percentage, the uplift in answer quality for complex queries is the primary metric.

- Industry Benchmark for Insight Quality: Forrester research indicates that companies leveraging advanced analytics for customer insight see a 10-15% increase in marketing campaign effectiveness and a 5-10% increase in customer retention rates. A system that provides superior, holistic insight from unstructured data is a key enabler of such outcomes.

- Cost Efficiency: The token reduction is a direct operational saving. For a luxury house running thousands of complex analytical queries per month, a 15-30% reduction in LLM inference costs (a plausible outcome from the paper's sampling strategy) can amount to significant six-figure annual savings.

- Time to Value: The initial insights from a deployed system can be visible within the first quarter post-implementation, as analysts and strategists gain access to synthesized answers. Full integration into decision-making workflows may take 6-12 months.

Implementation Approach

- Technical Requirements:

- Data: A large corpus of unstructured text documents (e.g., CRM notes, reviews, transcripts). Structured data can be incorporated via entity extraction.

- Infrastructure: Requires running LLM inference (for entity/relation extraction and summarization) and graph computation. Cloud-based LLM APIs (OpenAI, Anthropic, Azure) and graph databases (Neo4j, Amazon Neptune) or libraries (NetworkX) are suitable.

- Team Skills: A machine learning engineer with experience in NLP (entity recognition, knowledge graph construction) and graph algorithms. Data engineering skills are needed to build the processing pipeline.

- Complexity Level: Medium-High. This is not a plug-and-play API. It requires implementing the research's pipeline: document processing -> entity/relation extraction -> graph building -> k-core decomposition -> community formation -> hierarchical summarization. Custom tuning for the luxury domain's specific lexicon (materials, craftsmanship terms, brand names) is essential.

- Integration Points:

- Data Sources: CRM (e.g., Salesforce), CDP, PIM (for product descriptions), review platforms, internal document repositories.

- Output Systems: Business Intelligence dashboards (Tableau, Power BI), strategy team wikis, or as an API feeding into clienteling applications for associates.

- Estimated Effort: A dedicated team of 2-3 engineers could build a functional prototype in 2-3 months. Reaching a stable, production-grade system integrated with live data sources would likely take 6-9 months.

Governance & Risk Assessment

- Data Privacy & GDPR: This is paramount. The system processes potentially personal client data from CRMs and communications. Implementation is only possible on fully anonymized data or with explicit, auditable consent for analytics purposes. All training and inference must occur within a strictly controlled, compliant cloud environment or on-premises infrastructure.

- Model Bias Risks: The bias risk shifts from the LLM alone to the entire knowledge graph construction pipeline. If the entity extraction model fails to recognize terms from certain cultural contexts or demographics, those perspectives will be absent from the graph, leading to skewed insights. Regular audits of the source documents and the resulting graph communities are necessary.

- Maturity Level: Advanced Research / Prototype. The paper presents a compelling, rigorously evaluated methodological improvement. The code is likely available, but it is a novel framework, not a commercial product. It is proven in research settings but not yet proven at scale in a live enterprise retail environment.

- Honest Assessment: This is a cutting-edge approach for companies with a strong AI research or advanced analytics team. It is not experimental in its fundamentals (k-core is a well-known graph algorithm), but its application to GraphRAG is novel. For a luxury brand seeking a first-mover advantage in deep customer intelligence and willing to invest in a custom build, this is a promising and technically sound direction. For brands seeking an off-the-shelf solution, it is not yet ready; monitoring the commercialization of this research is advised.

AI Analysis

Governance Assessment: The deterministic nature of k-core decomposition is a significant governance advantage over stochastic clustering methods. It ensures auditability and reproducibility of insights—if you run the same data through the pipeline, you get the same hierarchical communities. This is crucial for regulated industries and for defending strategic decisions. However, governance must focus intensely on the input data's privacy compliance and the potential for bias amplification in the graph structure.

Technical Maturity: The underlying components are mature: k-core algorithms, LLMs for extraction/summarization. The innovation is in their novel orchestration. The technical risk lies in the pipeline's complexity and the need for robust, large-scale graph processing. The payoff is a system that moves beyond retrieving facts to discovering narrative structures, which is where true luxury brand value (heritage, emotion, aspiration) resides.

Strategic Recommendation for Luxury/Retail: Luxury brands compete on depth of understanding and personalization. This technology is a strategic tool for achieving that at scale. The recommended path is a phased pilot. Start by applying k-core GraphRAG to a single, rich, and anonymized data source—such as all global product reviews for a flagship category (e.g., handbags) over the past three years. The goal is not to replace existing analytics but to augment it by answering the complex, connective questions current tools cannot. This pilot would demonstrate value, de-risk the implementation, and build internal competency before expanding to more sensitive data like CRM notes. This approach positions the brand at the forefront of AI-driven customer intelligence.