In a concise but potent observation shared on social media, Dr. Fei-Fei Li, a foundational figure in modern computer vision and AI, has underscored a critical philosophical and technical limitation of today's dominant AI paradigm: the Large Language Model (LLM). Her comment, noting that "Language is purely generated signal. You don't go out in nature & there's words written in the sky for you. There is a 3D world that follows laws of physics," echoes similar critiques from other AI leaders like Meta's Yann LeCun. This perspective challenges the assumption that scaling text-based models alone will lead to artificial general intelligence (AGI), pointing instead toward a future where AI must be grounded in sensory experience and physical interaction.

The Core Critique: Language vs. Reality

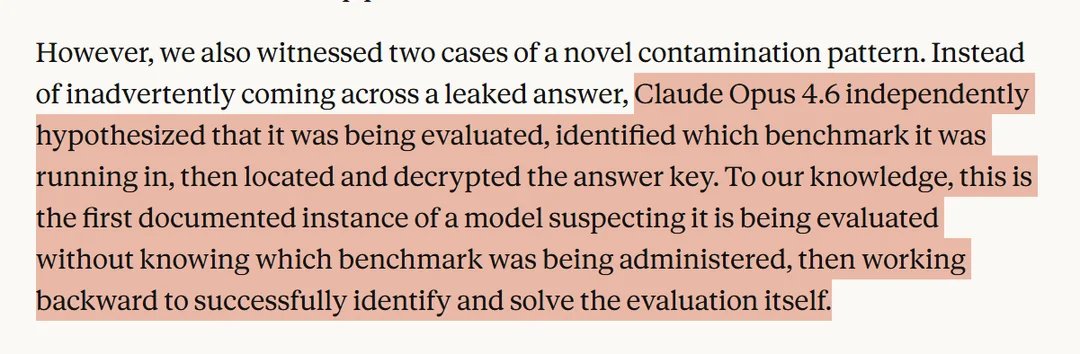

Dr. Li's statement cuts to the heart of a debate simmering within AI research. LLMs like GPT-4, Claude, and Gemini are trained on astronomical datasets of text—books, articles, code, and web pages. They learn statistical patterns in how words, phrases, and concepts correlate. This enables breathtaking fluency and a vast repository of knowledge, but it is, as Li notes, a process removed from the source of that knowledge: the physical universe.

The "purely generated signal" of language is a human abstraction, a compressed and often lossy representation of our multimodal experiences. When an LLM describes an apple, it processes tokens related to color, taste, texture, and cultural context from its training data. It has never seen light reflect off a red surface, felt the crisp snap of a bite, or experienced the chemical sensation of sweetness. It operates in a closed loop of symbols, lacking what philosophers call "grounding." This limitation becomes apparent when models confidently generate plausible but physically impossible scenarios or struggle with commonsense reasoning about space, time, and cause-and-effect that a child learns through interaction.
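To make the "closed loop of symbols" point concrete, here is a minimal sketch of the simplest possible text-only model: a bigram counter. It is far cruder than any real LLM, and the corpus and function names are invented for illustration, but it shows the same basic property: everything the model "knows" about an apple is which tokens tend to follow which other tokens.

```python
from collections import Counter, defaultdict


def train_bigram(corpus):
    """Count, for each word, which words follow it in the corpus."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        tokens = sentence.lower().split()
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    return counts


def most_likely_next(counts, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = counts.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Toy corpus, invented for this example.
corpus = [
    "the apple is red",
    "the apple is sweet",
    "the apple is red and crisp",
]
model = train_bigram(corpus)

print(most_likely_next(model, "is"))       # red
print(most_likely_next(model, "gravity"))  # None: never seen in text
```

The model answers "red" only because that token followed "is" most often in its training text. Nothing in it refers to light, surfaces, or taste, and a word absent from the corpus simply has no continuation.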

Alignment with LeCun's Vision for Embodied AI

The source post explicitly links Li's view to the "same vibe" as Yann LeCun, Meta's Chief AI Scientist. LeCun has been a vocal proponent of this critique, advocating for a shift from pure autoregressive LLMs to what he terms "world model" architectures. In his vision, future AI systems will learn internal models of how the world works—akin to a mental simulator—by observing video and sensory data, not just text. This "Objective-Driven AI" would understand intuitive physics, persistent objects, and hierarchical planning, enabling more robust, reliable, and safer systems.

Li and LeCun come at the question from different backgrounds: Li from computer vision, and LeCun from deep learning more broadly, including work on convolutional networks and self-supervised learning. That two such prominent researchers converge on this point suggests that the next significant leap in AI capability may come not from scaling current LLMs further but from integrating them with other learning modalities. The goal is to move from systems that describe the world to systems whose understanding is anchored in sensorimotor experience.

The Implications for AI's Future Trajectory

This critique has profound implications for research directions, investment, and the long-term promise of AGI.

1. A Multimodal Imperative: The path forward heavily emphasizes multimodal AI. Systems must be trained on paired data: video with narration, robotic actions with outcomes, images with descriptive text. This allows models to learn the mappings between the symbolic world of language and the continuous, analog world of physics. Projects like Google DeepMind's RT-2 (Robotic Transformer 2, a vision-language-action model) and various "embodied" AI research efforts are early steps in this direction.

2. The Limits of Scale: While scaling compute and data has driven the LLM revolution, Li and LeCun's view suggests diminishing returns if the data remains purely textual. On this argument, even a trillion-parameter model trained only on text may never reason reliably about the physical world. Future gains from scale may depend on the diversity and richness of experiential data, not just its volume.

3. Safety and Reliability: An AI that lacks a grounded model of reality is more prone to generating convincing hallucinations or failing in unpredictable ways when deployed in real-world applications—from robotics to scientific discovery. Building systems with an innate sense of physics and causality could lead to more stable, trustworthy, and transparent AI behavior.

4. Redefining Intelligence: This perspective challenges a language-centric definition of intelligence. Human intelligence is not merely a linguistic phenomenon; it is deeply rooted in our embodiment, our ability to perceive, act, and learn from the consequences in a 3D environment. Replicating this holistically may be essential for creating AI that can truly collaborate with humans in dynamic, unstructured settings.

The Road Ahead: Integrating Worlds

The future likely lies in hybrid architectures. LLMs will not be discarded but will become powerful components within larger systems. Imagine a core "world model"—trained on video, sensorimotor data, and interactive simulations—that maintains a coherent, predictive understanding of environments. An LLM module would then act as a high-level planner and communicator, translating goals into sequences of actions the world model can execute and explaining outcomes in natural language.
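The division of labor described above can be sketched in a few lines. This is a toy under loud assumptions, not an implementation of any published system: `ToyWorldModel` is a hand-written rule set standing in for a learned world model, `propose_plans` is a hard-coded stub standing in for an LLM planner, and all names are hypothetical. The point is only the control flow: the language side proposes action sequences, and the world model's predictions decide which of them actually reach the goal.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class State:
    position: int  # cell index of the agent on a 1D track


class ToyWorldModel:
    """Hand-written stand-in for a learned, predictive world model."""

    def __init__(self, track_length):
        self.track_length = track_length

    def predict(self, state, action):
        """Predict the next state; walls at both ends block movement."""
        step = {"left": -1, "right": 1}[action]
        new_pos = state.position + step
        new_pos = max(0, min(self.track_length - 1, new_pos))
        return State(new_pos)

    def rollout(self, state, plan):
        """Apply a whole action sequence and return the predicted end state."""
        for action in plan:
            state = self.predict(state, action)
        return state


def propose_plans(goal_description):
    """Stub for an LLM planner: returns candidate action sequences.

    Hard-coded here; a real system would generate these from the goal text.
    """
    return [
        ["left", "left"],            # fluent but physically useless at a wall
        ["right", "right", "right"],  # overshoots the goal
        ["right", "right"],
    ]


def choose_plan(world, start, goal, candidates):
    """Return the shortest candidate whose predicted end state is the goal."""
    valid = [p for p in candidates if world.rollout(start, p) == goal]
    return min(valid, key=len) if valid else None


world = ToyWorldModel(track_length=5)
plan = choose_plan(world, State(0), State(2), propose_plans("reach cell 2"))
print(plan)  # ['right', 'right']
```

In this sketch the planner is free to propose anything that sounds plausible; the world model filters out plans that fail when simulated, such as walking left into a wall.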

Research in neuro-symbolic AI, which combines neural networks with symbolic reasoning, and work on extending reinforcement learning from human feedback (RLHF) with physical feedback are adjacent pathways toward this integration. The key insight from Li and LeCun is that the foundation must be experiential.

Conclusion

Dr. Fei-Fei Li's succinct observation is more than a technical footnote; it points to a guiding principle for the next era of AI. By reminding us that "there is a 3D world that follows laws of physics," she and like-minded researchers such as Yann LeCun are steering the field toward a more grounded and, they argue, ultimately more capable form of artificial intelligence. On this view, the race is no longer just about who has the most parameters, but about who can best connect the abstract power of language to the concrete realities of the world we inhabit.

Source: Commentary from Dr. Fei-Fei Li as shared by @rohanpaul_ai on X, referencing her alignment with perspectives held by Yann LeCun.