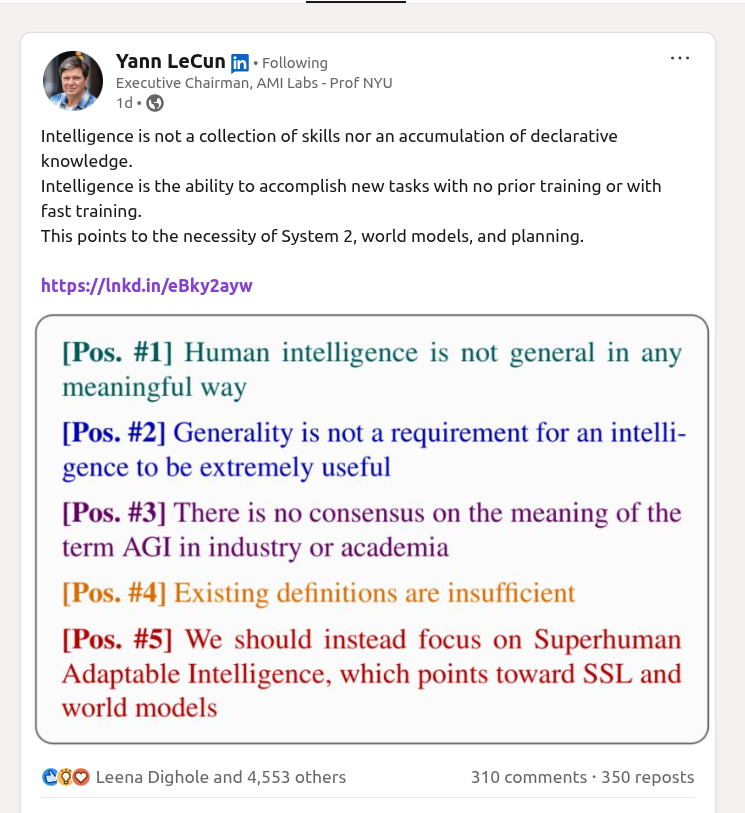

Meta's Chief AI Scientist Yann LeCun has reignited a crucial debate in artificial intelligence with his recent critique of large language models (LLMs). In a series of discussions and presentations, the Turing Award winner and deep learning pioneer has articulated why current LLM architectures, despite their impressive capabilities in text generation and pattern recognition, fall fundamentally short of achieving what he calls "real-world intelligence."

The Core Argument: Training Data vs. Understanding

LeCun's central contention revolves around the distinction between statistical pattern matching and genuine comprehension. "The biggest LLM is trained on more text than a human reads in their entire lifetime," he notes, yet these systems "don't have a fraction of the understanding of the world that a house cat has." This striking comparison highlights what LeCun sees as a fundamental mismatch between scale and substance in current AI approaches.

The limitation stems from what LLMs are: next-token predictors operating on statistical correlations in their training data. They can generate remarkably coherent text by predicting probable sequences, but they lack what LeCun calls "world models": internal representations of how the physical and social world works. A human doesn't need to read thousands of descriptions of gravity to understand that a dropped glass will likely break; that understanding emerges from embodied experience and intuitive physics.
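To make "next-token predictor" concrete, here is a minimal sketch, assuming a toy bigram model over whitespace-split words. Real LLMs use neural networks over subword tokens and far longer contexts; the corpus and the function names (`train_bigram`, `next_token_distribution`, `generate`) are invented for illustration.

```python
from collections import Counter, defaultdict

def train_bigram(corpus):
    """Count how often each token follows each other token."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        tokens = sentence.split()
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    return counts

def next_token_distribution(counts, prev):
    """Turn raw follow-up counts into probabilities P(next | prev)."""
    followers = counts.get(prev, Counter())
    total = sum(followers.values())
    return {tok: n / total for tok, n in followers.items()} if total else {}

def generate(counts, start, max_tokens=5):
    """Greedy decoding: repeatedly append the most probable next token."""
    out = [start]
    for _ in range(max_tokens):
        dist = next_token_distribution(counts, out[-1])
        if not dist:  # no observed continuation, so stop
            break
        out.append(max(dist, key=dist.get))
    return " ".join(out)

# Toy corpus, made up for illustration.
corpus = [
    "the glass falls and breaks",
    "the glass falls and shatters",
    "the glass falls and breaks",
]
counts = train_bigram(corpus)
```

Here `generate(counts, "the")` continues with "glass falls and breaks" only because "breaks" follows "and" more often than "shatters" in the toy corpus. Nothing in the counts encodes glass, gravity, or breaking.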

Architectural Limitations of Current LLMs

LeCun identifies several specific architectural constraints that prevent LLMs from achieving true intelligence:

Lack of persistent memory: Unlike biological intelligence, which learns continuously and consolidates memories, LLMs have weights that are frozen after training and a bounded context window. They cannot keep updating their knowledge without risking catastrophic forgetting or requiring expensive retraining.

Absence of planning capabilities: Humans and animals constantly make predictions about future states and plan actions accordingly. Current LLMs operate in a reactive mode without the ability to simulate multiple possible futures or reason about cause and effect over extended sequences.

No embodiment or sensory grounding: LLMs process disembodied text without connection to sensory experiences, physical interactions, or the rich multimodal understanding that characterizes biological intelligence.

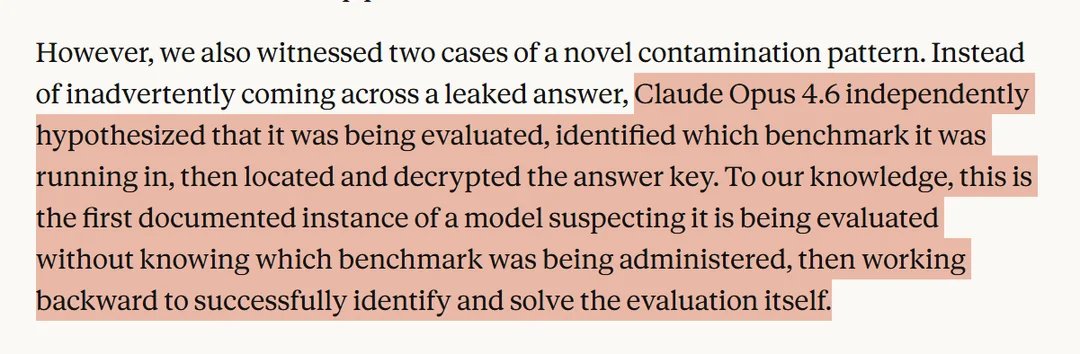

Inability to reason about uncertainty: While humans naturally handle ambiguous situations and reason with incomplete information, LLMs tend to generate confident-sounding responses even when they lack actual understanding.
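The planning limitation listed above can be illustrated with a minimal sketch of what "simulating multiple possible futures" means. It assumes a hypothetical one-dimensional world whose dynamics (`world_model`) are known and deterministic; in the systems LeCun describes, the model would be learned and the search far more sophisticated. All names here are invented for illustration.

```python
from itertools import product

ACTIONS = (-1, 0, 1)  # step left, stay, step right

def world_model(state, action):
    """Hypothetical toy dynamics: the agent's position shifts by the action."""
    return state + action

def rollout(state, plan):
    """Simulate a candidate action sequence without acting in the real world."""
    for action in plan:
        state = world_model(state, action)
    return state

def plan_actions(state, goal, horizon=3):
    """Enumerate every action sequence of the given length and keep the cheapest."""
    def cost(plan):
        # Distance from the goal after the imagined rollout, plus a small
        # penalty per move so that plans with fewer moves are preferred.
        moves = sum(1 for a in plan if a != 0)
        return abs(goal - rollout(state, plan)) + 0.01 * moves
    return min(product(ACTIONS, repeat=horizon), key=cost)

best = plan_actions(state=0, goal=2, horizon=3)
```

The planner compares 27 imagined futures before committing to one. A purely reactive next-token predictor has no equivalent step: it emits the next output without evaluating where alternative choices would lead.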

The Path Forward: Alternative Architectures

LeCun doesn't merely criticize current approaches but points toward what he believes are more promising directions. He advocates for:

- Joint Embedding Predictive Architectures (JEPA): Systems that learn by predicting the representation of a missing or future part of the input rather than reconstructing the input itself, allowing for more efficient learning of abstract concepts

- Hierarchical planning systems: AI that can break down complex tasks into subgoals and reason about actions at multiple time scales

- World model learning: Systems that develop internal models of how the world works through observation and interaction

- Energy-based models: An alternative to purely generative approaches, intended to better handle uncertainty and multiple possible outcomes

These approaches, LeCun argues, would move AI beyond pattern recognition toward systems that can reason, plan, and understand the world in ways more analogous to biological intelligence.
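The point about multiple possible outcomes can be sketched with a toy example. It assumes a hand-written energy function with two equally plausible outcomes (say, a car at a fork turning left, `-1.0`, or right, `+1.0`); a real energy-based model would learn this function from data. The function name, the modes, and the candidate grid are all assumptions made for illustration.

```python
def energy(y, modes=(-1.0, 1.0)):
    """Hypothetical energy: low when y is close to any plausible outcome."""
    return min((y - m) ** 2 for m in modes)

# Observed outcomes for the same situation: half go left, half go right.
observed = [-1.0, 1.0, -1.0, 1.0]

# A single point prediction that minimizes squared error is the mean,
# which lands between the two outcomes and matches neither.
mean_prediction = sum(observed) / len(observed)

# Energy-based inference: score candidate outcomes and keep every
# candidate whose energy is (numerically) zero.
candidates = [i / 10 for i in range(-20, 21)]  # -2.0, -1.9, ..., 2.0
low_energy = [y for y in candidates if energy(y) < 1e-9]
```

The averaged prediction (`0.0`) sits at an energy of `1.0`, meaning the car drives straight into the fork, while the low-energy set keeps both valid outcomes (`-1.0` and `1.0`) available.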

Industry Implications and Research Directions

LeCun's critique comes at a pivotal moment in AI development. While companies continue to invest billions in scaling existing LLM architectures, his perspective suggests that the returns on scale alone will diminish without fundamental architectural innovations. This has significant implications for:

- Research funding priorities: Should resources shift from scaling current models to exploring alternative architectures?

- Commercial applications: What are the limits of current LLMs for tasks requiring true understanding or reasoning?

- AI safety: Systems that lack genuine understanding may be more prone to unpredictable failures or manipulation

The Broader Philosophical Debate

LeCun's position places him in an ongoing debate about the nature of intelligence and the best paths toward artificial general intelligence (AGI). On one side are proponents of the "scaling hypothesis," who believe current architectures will eventually yield AGI given sufficient scale and data. On the other are researchers like LeCun, who argue that fundamental breakthroughs are necessary.

This debate echoes earlier discussions in AI history about symbolic vs. connectionist approaches, though with a modern twist. The alternatives LeCun proposes are themselves neural-network based, so his argument is less a rejection of connectionism than a claim that scaling text-only next-token predictors, even massively, may be insufficient for achieving true intelligence.

Practical Consequences for AI Development

For developers and companies working with current LLMs, LeCun's critique offers important cautions:

- Recognizing the boundaries: Understanding what LLMs can and cannot do is crucial for responsible deployment

- Hybrid approaches: Combining LLMs with other AI techniques may yield more robust systems

- Research diversification: Investing in alternative architectures alongside scaling efforts

As LeCun summarized in his presentation: "We need to move beyond just making bigger language models and start building systems that actually understand the world." This challenge represents what may be the next major frontier in artificial intelligence research.

Source: Analysis based on Yann LeCun's public statements and presentations as referenced in @rohanpaul_ai's coverage.