In a concise statement that has circulated widely in the AI community, Yann LeCun, Meta's Chief AI Scientist and Turing Award winner, has offered what may become a foundational definition for the next generation of artificial intelligence. His declaration that "Intelligence is not a collection of skills nor an accumulation of declarative knowledge" challenges the premise on which most current AI systems are designed and evaluated.

The Core Definition

LeCun's statement goes on to give a positive definition: "Intelligence is the ability to accomplish new tasks with no prior training or with fast training. This points to the necessity of System 2, world models, and planning."

This seemingly simple statement contains multiple revolutionary implications. First, it distinguishes between what current AI systems excel at (skill accumulation through massive training) and what true intelligence represents (adaptability to novel situations). Second, it explicitly connects this capability to specific cognitive architectures that most current AI lacks.

The System 1 vs. System 2 Distinction

The reference to "System 2" comes from the dual-process theory of cognition popularized by psychologist Daniel Kahneman. System 1 represents fast, automatic, intuitive thinking, which is exactly what today's deep learning systems excel at. When you ask ChatGPT a question or have Midjourney generate an image, you're witnessing System 1-style processing: pattern recognition based on statistical regularities in training data.

System 2, by contrast, represents slow, deliberate, logical reasoning. It's what humans use when solving complex problems, planning multi-step tasks, or reasoning through novel situations. On this account, current AI systems have little System 2 capability, which helps explain their brittleness when faced with genuinely novel challenges.

World Models: The Missing Piece

LeCun's emphasis on "world models" points to what many researchers believe is the critical missing component in today's AI. A world model is an internal representation of how the world works—not just statistical correlations between inputs and outputs, but causal understanding of relationships, physics, and consequences.

Humans develop sophisticated world models from relatively little data. A child who has never seen a tower of blocks fall doesn't need thousands of examples to understand that removing a bottom block will cause collapse. This intuitive physics emerges from an internal model of how objects interact in space.

Current AI systems lack such models. They recognize patterns but don't understand causes. They can describe what happens but not why it happens or what might happen next in novel situations.

Planning: From Reactive to Proactive Intelligence

The third component—planning—represents the ability to simulate multiple possible futures and select optimal actions. This requires both world models (to accurately simulate outcomes) and System 2 reasoning (to evaluate alternatives).

Most current AI is fundamentally reactive: given an input, produce an output. Planning transforms systems from reactive to proactive, enabling them to work toward goals over extended time horizons, anticipate obstacles, and adjust strategies when initial plans fail.
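To make the idea of simulating futures concrete, here is a minimal sketch of planning with a world model. It is a toy illustration, not LeCun's proposal or any production method: the number-line environment, the `world_model` function, the `ACTIONS` set, and the brute-force search are all assumptions chosen for brevity. The planner imagines every action sequence up to a fixed horizon, scores each imagined future, and executes only the first action of the best one before replanning.

```python
from itertools import product

# Hypothetical action set for a toy agent on a number line:
# step left, stay put, step right.
ACTIONS = (-1, 0, 1)


def world_model(state: int, action: int) -> int:
    """Toy world model: predicts the next position given an action."""
    return state + action


def rollout_cost(state: int, actions, goal: int) -> int:
    """Simulate an action sequence and sum the distance to the goal
    after every imagined step (lower is better)."""
    total = 0
    for action in actions:
        state = world_model(state, action)
        total += abs(state - goal)
    return total


def plan(state: int, goal: int, horizon: int):
    """Exhaustively simulate every action sequence of length `horizon`
    and return the one with the lowest cumulative cost."""
    return min(
        product(ACTIONS, repeat=horizon),
        key=lambda seq: rollout_cost(state, seq, goal),
    )


def act(state: int, goal: int, horizon: int = 3) -> int:
    """Proactive step: plan ahead, then execute only the first action."""
    return plan(state, goal, horizon)[0]


def run_episode(start: int, goal: int, max_steps: int = 20):
    """Replan at every step until the goal is reached."""
    state, visited = start, [start]
    for _ in range(max_steps):
        if state == goal:
            break
        state = world_model(state, act(state, goal))
        visited.append(state)
    return visited


print(plan(0, 3, 3))      # (1, 1, 1)
print(run_episode(0, 5))  # [0, 1, 2, 3, 4, 5]
```

A purely reactive system would map the current state straight to an output. Here the action is chosen by evaluating imagined outcomes, so the quality of the plan depends directly on how well the world model predicts consequences. Exhaustive search only works for tiny action spaces; it stands in for whatever search or optimization procedure a real system would use.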

Implications for AI Development

LeCun's definition suggests that the current paradigm of scaling up existing architectures—bigger models, more data, more compute—will eventually hit fundamental limits. No matter how many skills we accumulate or how much declarative knowledge we encode, we won't achieve true intelligence without System 2 capabilities, world models, and planning.

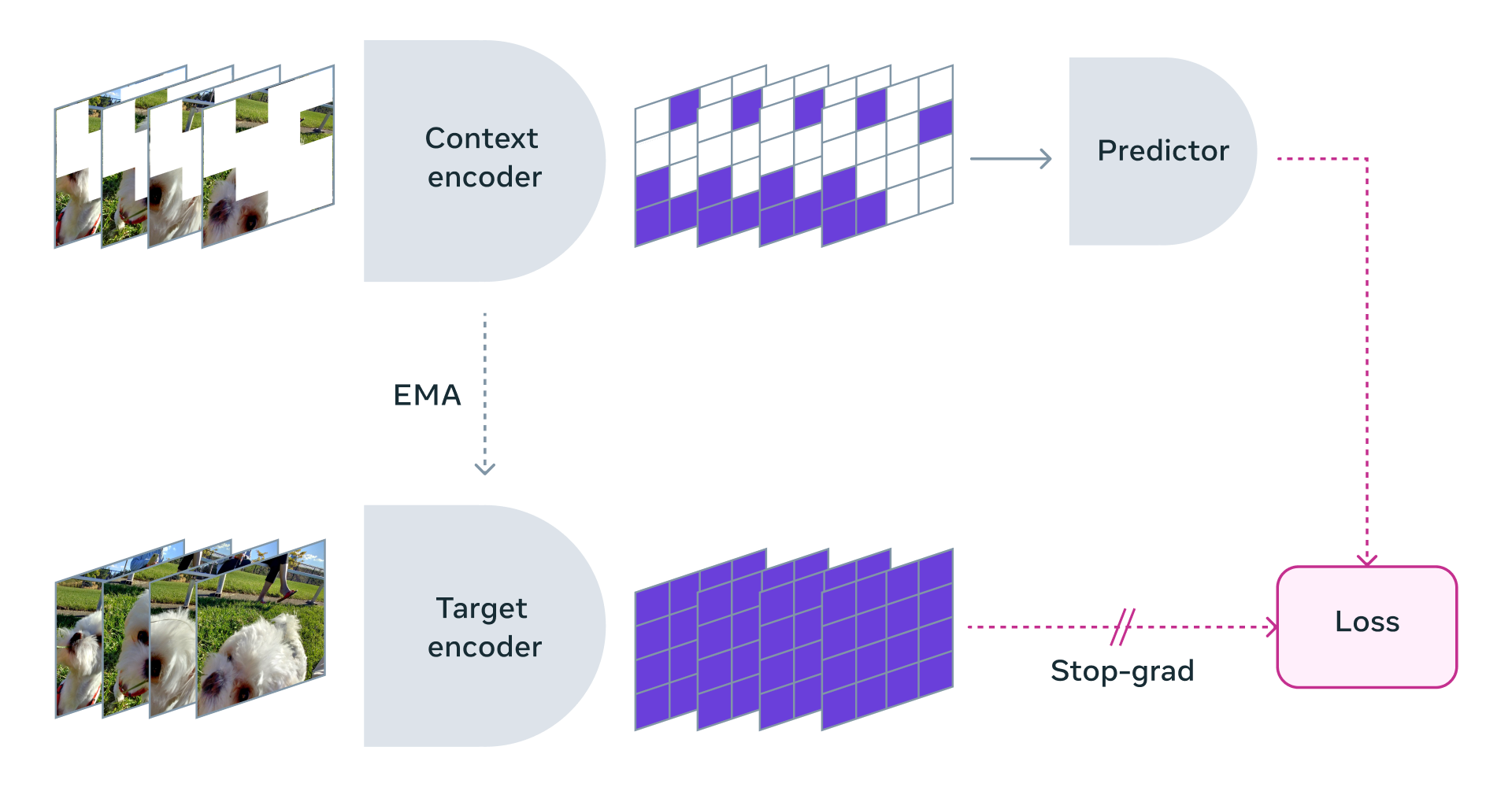

This aligns with LeCun's long-standing advocacy for alternative architectures like his proposed Joint Embedding Predictive Architecture (JEPA), which aims to enable systems to learn world models through self-supervised learning rather than massive labeled datasets.

The Training Paradox

The definition also highlights what might be called the "training paradox" of current AI. Today's most impressive systems require staggering amounts of training data, on the order of a large fraction of the public internet, to achieve their capabilities. Yet human intelligence demonstrates the opposite pattern: we learn new tasks from a handful of examples because we can leverage our world models and reasoning capabilities.

LeCun's framework suggests that future AI breakthroughs won't come from even larger training datasets but from architectures that can learn world models efficiently and reason about them effectively.

Practical Applications and Limitations

Understanding intelligence through this lens helps explain why current AI excels in some domains while failing badly in others. Large language models can write convincing essays but can struggle with simple logical puzzles that require multi-step reasoning. Computer vision systems can match or exceed human accuracy at identifying objects on some benchmarks but can't reliably predict what will happen if those objects are rearranged in novel ways.

This framework also suggests new evaluation metrics for AI systems. Rather than measuring performance on specific tasks, we might evaluate how quickly systems can adapt to truly novel challenges or how well they can plan in unfamiliar environments.
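One way such a metric could be operationalized is to measure how many examples of a new task a system needs before it reaches a target accuracy. The sketch below is only an illustration of that idea: the function name `examples_to_threshold`, the 0.9 threshold, and both learning curves are hypothetical, and the numbers are invented for the example rather than measured from any real system.

```python
from typing import Optional, Sequence, Tuple

# A learning curve is a list of (number of training examples, accuracy) points.
Curve = Sequence[Tuple[int, float]]


def examples_to_threshold(curve: Curve, threshold: float) -> Optional[int]:
    """Return the smallest number of examples at which accuracy reaches
    `threshold`, or None if the curve never gets there."""
    for n_examples, accuracy in sorted(curve):
        if accuracy >= threshold:
            return n_examples
    return None


# Invented curves for two hypothetical systems on the same unseen task.
slow_learner = [(1, 0.10), (10, 0.30), (100, 0.70), (1000, 0.92)]
fast_learner = [(1, 0.40), (10, 0.91), (100, 0.95), (1000, 0.96)]

print(examples_to_threshold(slow_learner, 0.9))  # 1000
print(examples_to_threshold(fast_learner, 0.9))  # 10
```

Under this kind of metric, the second system would count as more intelligent in LeCun's sense even though both end up with similar final accuracy, because it adapts with far less task-specific training.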

The Path Forward

LeCun's definition doesn't just describe intelligence—it provides a roadmap for achieving more general artificial intelligence. The research priorities it suggests include:

- Developing architectures that support System 2 reasoning alongside System 1 pattern recognition
- Creating learning algorithms that can efficiently acquire world models from limited experience
- Building planning capabilities that can operate over extended time horizons
- Integrating these components into cohesive systems

This represents a significant shift from the current focus on scaling and fine-tuning. It suggests that the next AI revolution won't come from making existing approaches bigger but from fundamentally rethinking how we architect intelligent systems.

Conclusion: A New North Star for AI Research

Yann LeCun's definition of intelligence provides what the field has often lacked: a clear, operational definition that distinguishes between what current systems do and what truly intelligent systems should do. By emphasizing adaptability over skill accumulation, and by explicitly linking this capability to specific cognitive architectures, he offers both a critique of current approaches and a vision for what comes next.

As AI continues to transform society, having a clear understanding of what intelligence actually is—and what it isn't—becomes increasingly important. LeCun's framework reminds us that true intelligence isn't about knowing everything but about being able to figure out anything, even with limited prior experience. This insight may prove more valuable than any technical breakthrough in guiding the development of more capable, more reliable, and ultimately more beneficial artificial intelligence.

Source: Yann LeCun via @rohanpaul_ai on X/Twitter