Cerebras’s website understates the on-chip SRAM of its chips by a factor of 8, according to chip analyst SemiAnalysis. This rare case of under-specification contrasts with the industry’s typical exaggeration of hardware capabilities.

Key facts

- Cerebras understates on-chip SRAM by a factor of 8.

- Discrepancy flagged by SemiAnalysis on X.

- WSE-2 chip has 2.6 trillion transistors, per public specs.

- Typical chip vendors inflate specs, not understate them.

Cerebras, the AI chip company known for its wafer-scale processors, appears to have understated the on-chip SRAM capacity on its official website by a factor of 8, according to a post from SemiAnalysis on X. The analyst noted the discrepancy, calling it a 'refreshing' departure from the norm where chip marketing teams routinely inflate specs. [According to @SemiAnalysis_]

The specific example cited involves the company's CS-2 system, which is built around the Wafer-Scale Engine 2 (WSE-2) chip. While Cerebras publicly lists a certain SRAM figure, SemiAnalysis claims the actual capacity is eight times larger. The exact numbers were not disclosed in the tweet, but the implication is that Cerebras’s modesty may be a deliberate strategy or an oversight.

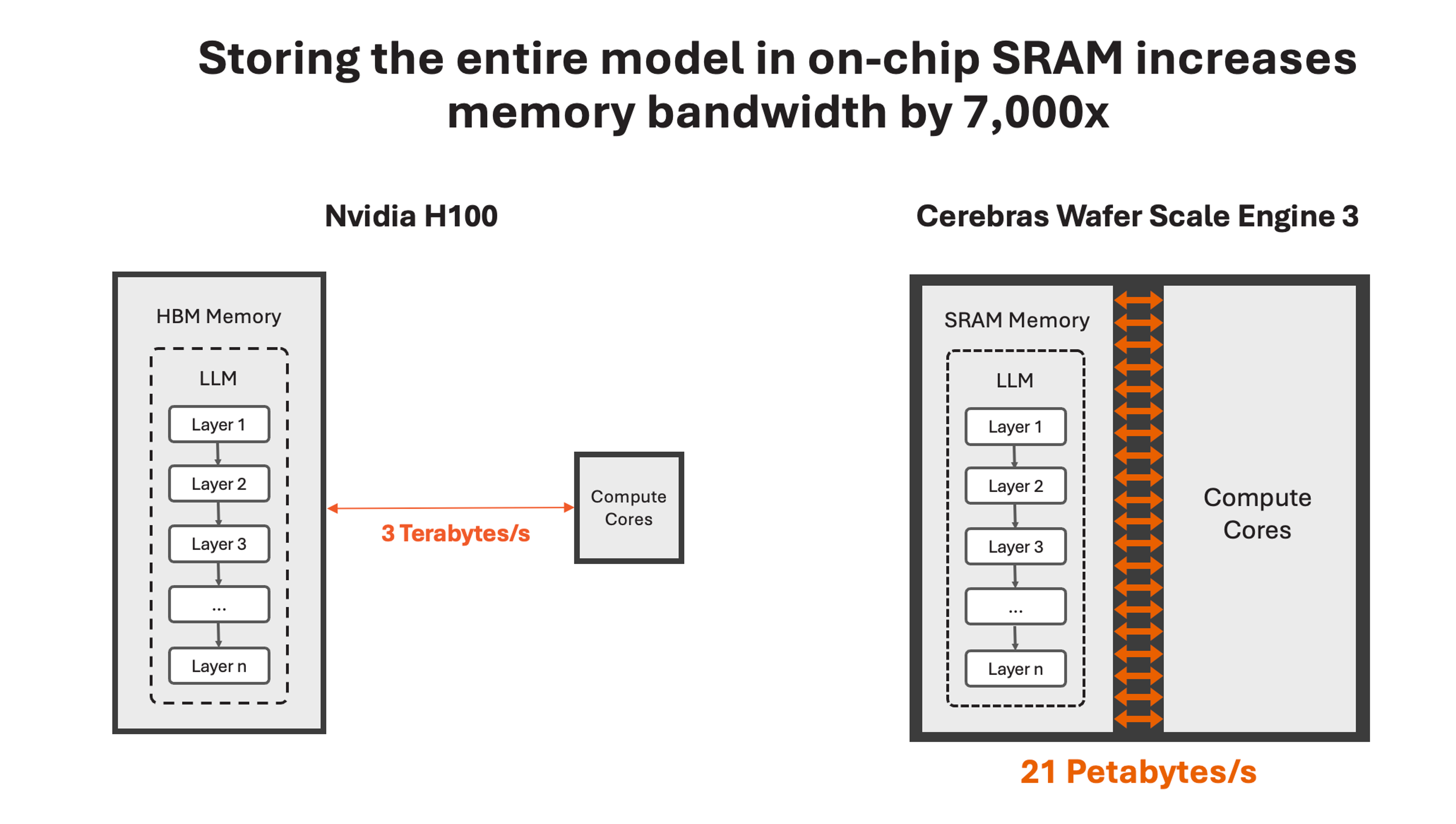

Cerebras has a history of opaque specifications. The company’s WSE-2, fabricated on TSMC’s 7nm process, integrates 2.6 trillion transistors and 850,000 cores, with a claimed 40 GB of on-chip SRAM. [Per public Cerebras materials] If SemiAnalysis is correct, the real SRAM would be roughly 320 GB (8 × 40 GB), equal to the combined HBM capacity of four 80 GB Nvidia H100s.

What stands out is not the error itself but the inversion of a standard industry dynamic: chip vendors typically inflate specs to gain a competitive edge, especially in AI hardware, where memory capacity and bandwidth are critical for training large models. Cerebras’s under-specification suggests either a cultural eccentricity or inattention to marketing details, which could hurt its positioning against Nvidia and AMD.

Why This Matters

In the AI chip race, memory capacity directly affects how large a model can be trained and how quickly. If Cerebras’s actual SRAM is 8x higher than advertised, its competitive position against Nvidia’s H100 (80 GB HBM3) and AMD’s MI300X (192 GB HBM3) would be significantly stronger, although on-die SRAM and on-package HBM are different memory tiers, so the figures are not directly comparable. The company’s wafer-scale approach already offers unique advantages in interconnect bandwidth; a larger true SRAM capacity would make its value proposition even more compelling for memory-bound workloads such as large language models.

Cerebras did not immediately respond to a request for comment. As of this writing, the company’s website still lists the lower SRAM figure, leaving open whether the under-specification is intentional or an uncorrected oversight.

What to Watch

Watch for Cerebras to issue a correction or clarification on its official website in the coming weeks. If the SRAM figure is indeed 8x higher, expect the company to leverage this in future marketing, potentially adjusting its positioning against Nvidia’s H100 and AMD’s MI300X. Also watch for SemiAnalysis to publish a deeper analysis with exact numbers.
