What Happened

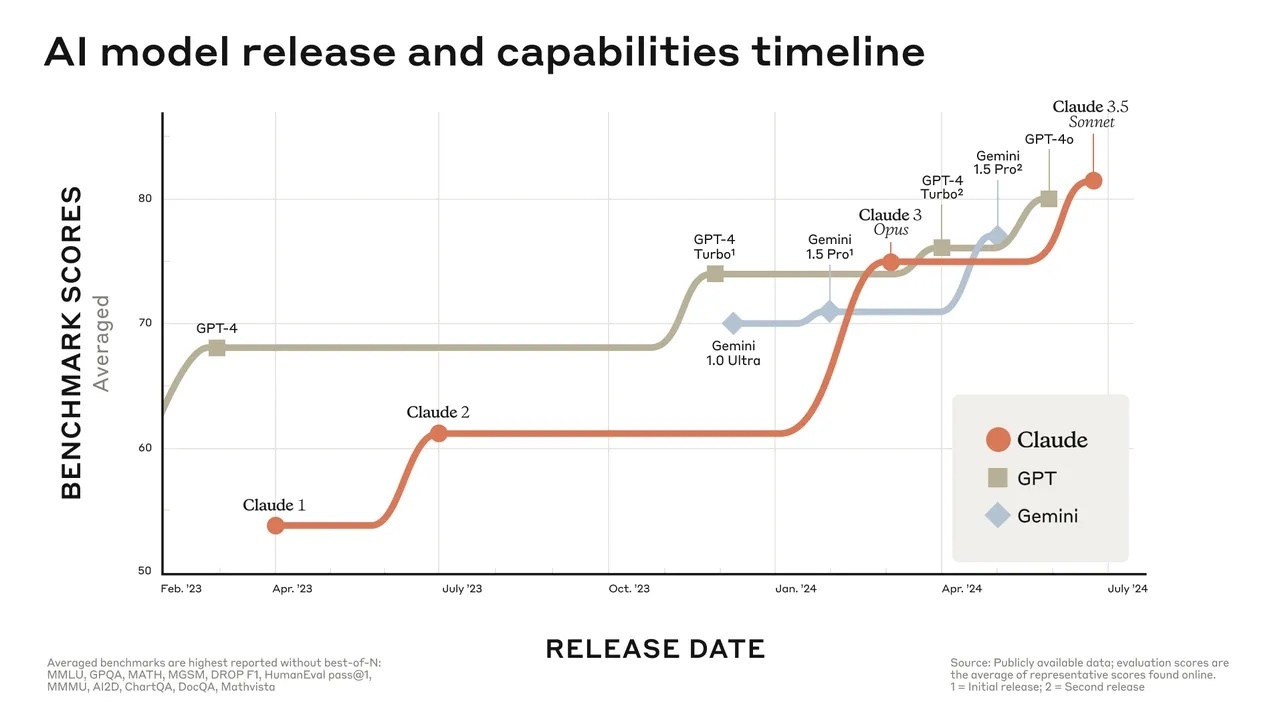

An analysis highlighted by AI researcher Rohan Paul, citing a Financial Times report, states that China's top AI models are climbing performance benchmarks rapidly. The gap between China's best models and the leading US models, including closed ones, is narrowing quickly.

The key claim is that China's best open-source models have already overtaken those from the United States. The analysis argues that this lead in open source could matter a great deal, because open models spread through downloads, fine-tuning, and on-premises deployment. That pathway could speed global adoption of Chinese AI technology even if China does not lead at the closed-source frontier (models such as GPT-4 or Claude).

Context

The referenced Financial Times report (ft.com/content/d9af562c-1d37-41b7-9aa7-a838dce3f571) likely contains the underlying data and chart behind these claims. The claim concerns the open-source segment of the AI model landscape: models whose weights are publicly released and can be modified and deployed by anyone. This is a separate race from the one among proprietary, closed models from companies such as OpenAI, Anthropic, and Google.

Chinese AI developers such as Alibaba (Qwen series), 01.AI (Yi series), and DeepSeek have been releasing capable open-source models at a rapid pace. Benchmarks such as Hugging Face's Open LLM Leaderboard, which scores models on reasoning, knowledge, math, and instruction-following tasks, have frequently ranked these models near the top.

The adoption mechanism is central to the argument: open-source models lower the barrier to entry. Developers anywhere can download, run, and fine-tune them without per-call API fees and without depending on a provider's hosted service (though each model's license still sets terms of use). That could lead to broader and deeper integration into applications worldwide than closed APIs alone would achieve.