In an unprecedented clash between Silicon Valley ethics and national security priorities, the Trump administration has ordered all federal agencies to cease using Anthropic's AI services, including its flagship Claude assistant. The directive, announced via Truth Social on Friday, gives agencies six months to migrate away from Anthropic's technology following the company's refusal to remove ethical safeguards that restrict military applications.

The Core Conflict: Constitutional Authority vs. AI Ethics

At the heart of the confrontation lies a fundamental disagreement about who controls how advanced AI systems are deployed. The Department of Defense, under Secretary Pete Hegseth, demanded that Anthropic remove specific restrictions from its terms of service that prevent Claude from being used for mass surveillance against American citizens or in fully autonomous weapons systems.

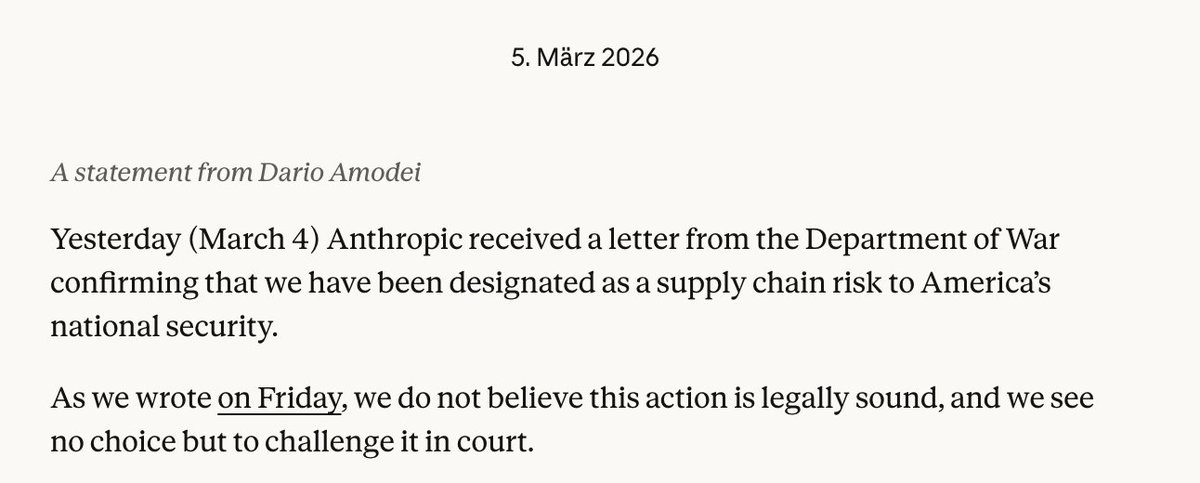

Anthropic CEO Dario Amodei responded with a public statement explaining the company's position: "We cannot in good conscience comply with a request to remove these fundamental safeguards. Our Constitutional Principles, which include avoiding undemocratic concentration of power and preventing catastrophic risks, require us to maintain these boundaries."

The military had threatened to cancel a $200 million contract and designate Anthropic as a "supply chain risk" to national security if the company didn't comply. Secretary Hegseth followed through on this threat shortly after President Trump's announcement, stating on X: "Effective immediately, no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic."

The Presidential Response and Its Implications

President Trump's Truth Social post framed the conflict in stark terms: "The Leftwing nut jobs at Anthropic have made a DISASTROUS MISTAKE trying to STRONG-ARM the Department of War, and force them to obey their Terms of Service instead of our Constitution." He warned of "major civil and criminal consequences" if Anthropic doesn't cooperate during the phase-out period.

The order is one of the most significant interventions by a U.S. president into private-sector AI governance. It affects not just defense applications but all federal use of Anthropic's services, potentially impacting dozens of agencies that have integrated Claude into their operations.

Anthropic's Constitutional Principles and Their Practical Impact

Anthropic's ethical framework, which the company calls its "Constitutional AI" approach, represents a novel attempt to embed values directly into AI systems. The company has positioned itself as taking a more cautious approach than competitors like OpenAI and Google, particularly regarding potentially dangerous applications.

The specific safeguards at issue include:

- Prohibitions against using Claude for mass surveillance of populations
- Restrictions on deployment in fully autonomous weapons systems
- Limitations on uses that could concentrate power undemocratically

These restrictions reflect growing concerns within the AI safety community about how powerful language models might be weaponized or used for authoritarian purposes. However, the Pentagon views them as unacceptable constraints on national defense capabilities.

Broader Industry Context and Reactions

The confrontation occurs against a backdrop of increasing tension between AI companies and government agencies. Earlier this year, Google and OpenAI employees signed an open letter expressing solidarity with Anthropic's position on AI safety, a sign that support for maintaining ethical boundaries extends beyond Anthropic itself.

Other AI companies are now watching closely, as the outcome could set a precedent for how much control private companies retain over how their technologies are used. The situation raises fundamental questions about whether AI developers can, or should, maintain veto power over how governments use their creations.

Technical and Operational Challenges of the Phase-Out

The six-month migration period presents significant challenges for federal agencies. Many have integrated Claude into critical workflows, from document analysis to customer service applications. Replacing these systems with alternatives from companies like OpenAI or Google, or with in-house solutions, will require substantial resources and could create security vulnerabilities during the transition.

For the Department of Defense specifically, the loss of Anthropic's technology could impact various AI research and development programs. The military has increasingly relied on commercial AI providers rather than developing all capabilities internally, making this disruption particularly significant.

International Implications and Geopolitical Considerations

The U.S. government's standoff with a leading AI company sends signals to international competitors and allies alike. China and other nations are watching how democratic societies balance innovation, ethics, and national security in the AI domain.

Some analysts suggest that if American AI companies impose strict ethical constraints that foreign competitors don't match, it could create strategic disadvantages. Others argue that maintaining strong ethical standards represents a competitive advantage in building trust with global partners.

Legal and Regulatory Questions

The confrontation raises unresolved legal questions about the extent of presidential authority over private companies' terms of service. While the government can choose not to contract with specific vendors, the threat of "civil and criminal consequences" for non-cooperation during the phase-out period represents uncharted territory.

Additionally, the designation of Anthropic as a "supply chain risk" could have far-reaching implications for the company's commercial business beyond government contracts, as many private-sector partners also work with the military.

The Future of AI Governance

This conflict highlights the growing need for clearer frameworks governing AI deployment in sensitive contexts. Currently, there's a regulatory gap between companies' self-imposed ethical guidelines and government requirements, with no established process for resolving conflicts.

Some experts are calling for congressional action to establish clearer rules of the road, while others believe these conflicts should be resolved through existing contract law and market mechanisms.

What Comes Next

Anthropic now faces difficult decisions about whether to maintain its ethical stance at the cost of significant government business and potential legal challenges, or to negotiate some compromise. The company's response will likely influence how other AI firms approach similar conflicts with government agencies.

Meanwhile, federal agencies must scramble to replace Anthropic's technology while maintaining operational continuity. The six-month timeline suggests the administration expects this to be a complex but manageable transition.

Source: Engadget, The Verge, and Anthropic's official statement

This developing story represents a critical moment in the relationship between AI developers and government entities, with implications that will likely shape the industry for years to come.