The Defense Advanced Research Projects Agency's Biological Technologies Office (BTO) has issued a solicitation to lease 50 Nvidia HGX H100 GPU systems for its Novel Optical and Digital Electromagnetic Spectrum (NODES) program. The procurement notice specifies that while newer hardware can be proposed, any alternative must be ready for delivery within one month of contract award, indicating urgent computational needs for defense-related biological research.

Key Takeaways

- DARPA's Biological Technologies Office is seeking to lease 50 Nvidia HGX H100 GPU systems for its NODES program; any newer hardware proposed instead must be ready for delivery within one month of contract award.

- This represents a significant government investment in AI infrastructure for biological research applications.

What DARPA Is Acquiring

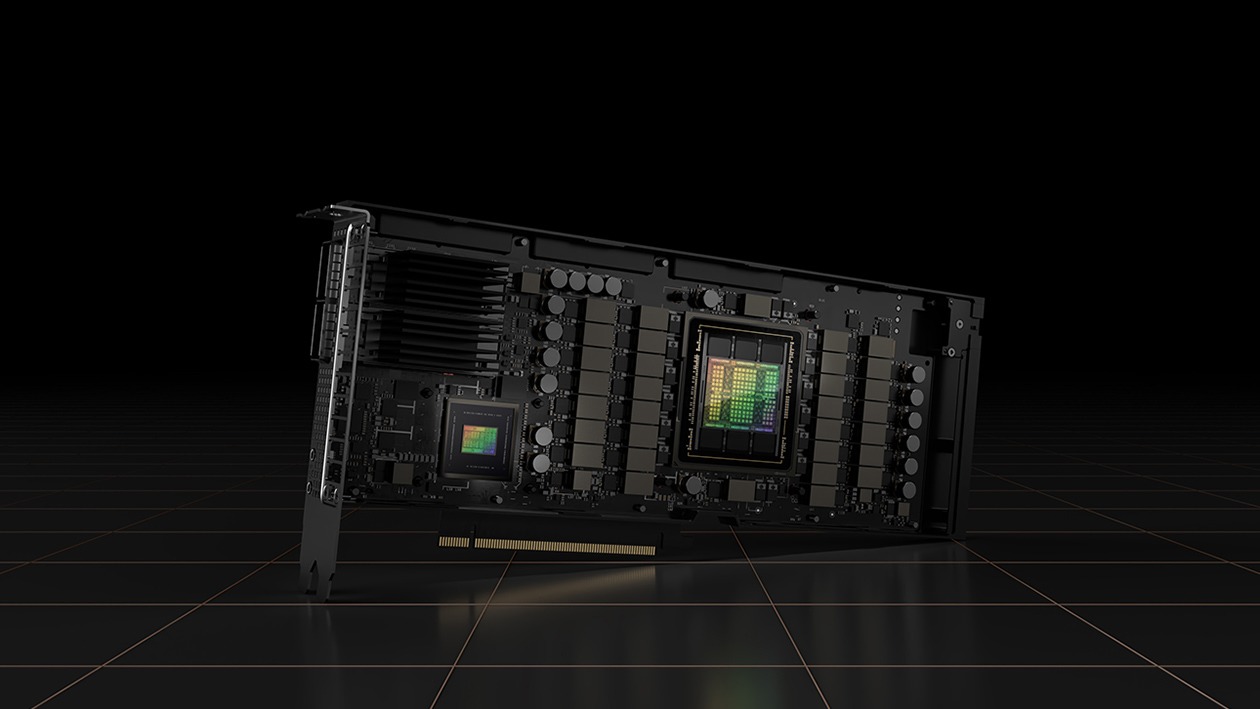

The solicitation calls for 50 Nvidia HGX H100 systems, which are enterprise-grade AI computing platforms. Each HGX H100 system typically contains 8 H100 GPUs connected via Nvidia's NVLink technology, though the exact configuration isn't specified in the public notice. The H100, built on Nvidia's Hopper architecture, has been the workhorse of AI training and inference since its 2022 launch, though it has since been succeeded by the Blackwell architecture in Nvidia's product lineup.

The one-month delivery requirement for any proposed alternative hardware is particularly notable. This tight timeline suggests the NODES program has immediate computational requirements that cannot wait for newer architectures like Blackwell to become widely available. It also indicates that existing H100 inventory or readily available alternatives are preferred over waiting for next-generation hardware.

The NODES Program Context

The NODES program falls under DARPA's Biological Technologies Office, which focuses on leveraging biological systems for national security applications. While specific NODES program details are classified, DARPA's biological programs typically involve areas like biosensing, bio-manufacturing, and human performance enhancement. The substantial GPU allocation suggests NODES involves significant AI modeling work, potentially including:

- Protein structure prediction and design

- Genomic analysis and synthetic biology

- Biological sensor data processing

- Neuromorphic computing research

- Biological system simulation

The scale of this procurement is notable: 50 systems would amount to 400 H100 GPUs if each is the typical 8-GPU configuration, placing it among the more significant government AI infrastructure investments. For comparison, many academic supercomputing centers operate with similar GPU counts.

Technical Specifications and Alternatives

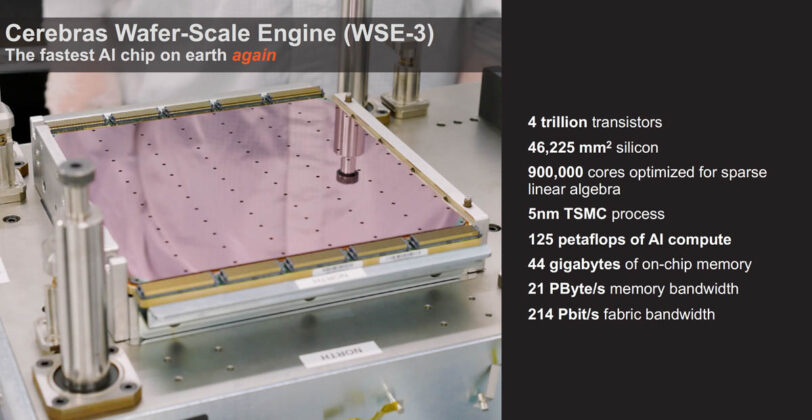

While the solicitation specifically mentions H100 systems, it does allow for "newer hardware" proposals. Given the current market landscape in April 2026, potential alternatives could include:

- Nvidia Blackwell systems: Nvidia's successor to Hopper, announced in 2024 and now commercially available

- AMD Instinct MI300 series: AMD's competitive data center GPU offerings

- Custom AI accelerators: From companies like Cerebras, SambaNova, or Groq

However, the one-month readiness requirement significantly narrows the field. Only hardware with existing inventory and proven software stacks would qualify, which likely explains why H100 systems remain specified despite being a previous-generation product.

Market and Supply Chain Implications

This procurement comes amid ongoing AI chip competition and supply chain challenges. Nvidia's H100 faced significant shortages through 2024-2025, though supply has improved with the transition to Blackwell. A government order of this size for "previous generation" hardware suggests:

- Proven software ecosystem: The H100 benefits from mature CUDA libraries and frameworks critical for research

- Immediate availability: H100 systems are less likely to be held up by the allocation queues that newer chips may face

- Budget considerations: H100 systems may offer better value as prices adjust for older hardware

The timing is also noteworthy: the solicitation follows several recent Nvidia developments, including its April 19th launch of the Nemotron 3 Super and Audio Flamingo Next models and its April 20th collaboration with Adobe and WPP on enterprise AI agents.

gentic.news Analysis

This DARPA procurement reveals several important trends in government AI adoption. First, it demonstrates that even cutting-edge defense research programs sometimes prioritize immediate availability and proven ecosystems over the absolute latest hardware. The H100, while succeeded by Blackwell, represents a mature platform with extensive software support—critical for complex biological research where development time on new hardware stacks would delay projects.

Second, this aligns with the broader trend of specialized AI infrastructure for scientific domains. We've seen similar patterns in climate modeling, pharmaceutical research, and materials science. DARPA's biological focus suggests AI is becoming essential for understanding complex biological systems, potentially for applications like rapid threat detection (pathogens, toxins), human performance optimization, or bio-manufacturing for defense needs.

Third, the timing is interesting given recent competitive pressures on Nvidia. With UALink 2.0 aiming to challenge NVLink for AI clusters (as we covered April 20) and increasing competition from AMD, Cerebras, and custom silicon, this substantial government order reinforces Nvidia's entrenched position in research institutions. It also follows our April 22 coverage of SemiAnalysis reporting that customer feedback is driving the industry toward disaggregated inference architectures—though this DARPA procurement appears focused on training or simulation workloads given the H100's capabilities.

The one-month delivery requirement for alternatives suggests DARPA is genuinely open to competition but needs immediate capability. This creates an opportunity for Nvidia competitors who can demonstrate equivalent performance with existing inventory and software compatibility—a high bar that few can meet in such a short timeframe.

Frequently Asked Questions

What is DARPA's NODES program?

The Novel Optical and Digital Electromagnetic Spectrum (NODES) program is a DARPA Biological Technologies Office initiative focused on leveraging advanced sensing and computing technologies for biological applications. While specific details are classified, such programs typically involve biosensing, bio-manufacturing, or human performance research with national security implications.

Why is DARPA using H100 GPUs instead of newer Blackwell chips?

The solicitation allows for newer hardware proposals but requires one-month delivery. This suggests that H100 systems are either immediately available in sufficient quantity or that their mature software ecosystem (CUDA, libraries, frameworks) is critical for the research timeline. Blackwell systems might have allocation queues or require software adaptation that would delay the program.

How powerful is a 50-system H100 cluster?

Assuming each system contains 8 H100 GPUs (the typical HGX configuration), this represents 400 H100 GPUs. Each H100 in its SXM form delivers a theoretical peak of approximately 34 teraflops of FP64 performance or 1,979 teraflops of dense FP8 tensor performance (without sparsity). These are peak figures, and sustained throughput on real workloads is lower, but the total cluster would still provide significant computational power for AI training, simulation, or large-scale inference tasks.
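The aggregate figures follow from multiplying the per-GPU numbers quoted above by the GPU count. The short Python sketch below works through that arithmetic. The function name `cluster_peak_pflops` and the constants are illustrative, and the 4-GPU row is an assumption included only because the public notice does not state the configuration and HGX H100 boards are also offered with 4 GPUs.

```python
# Theoretical peak per-GPU throughput in teraflops, as quoted in the text above.
H100_FP64_TFLOPS = 34      # FP64
H100_FP8_TFLOPS = 1979     # dense FP8 tensor

SYSTEMS = 50               # number of HGX systems in the solicitation


def cluster_peak_pflops(systems, gpus_per_system, per_gpu_tflops):
    """Aggregate theoretical peak in petaflops (1 petaflop = 1,000 teraflops)."""
    total_gpus = systems * gpus_per_system
    return total_gpus * per_gpu_tflops / 1000


# 8 GPUs per system is the assumption used in the text; 4 is a hypothetical
# alternative, since the exact configuration is not public.
for gpus_per_system in (8, 4):
    gpus = SYSTEMS * gpus_per_system
    fp64 = cluster_peak_pflops(SYSTEMS, gpus_per_system, H100_FP64_TFLOPS)
    fp8 = cluster_peak_pflops(SYSTEMS, gpus_per_system, H100_FP8_TFLOPS)
    print(f"{gpus} GPUs: {fp64:.1f} PFLOPS FP64, {fp8:.1f} PFLOPS FP8 (peak)")
```

Under the 8-GPU assumption this works out to roughly 13.6 petaflops of FP64 and about 791.6 petaflops of FP8 at theoretical peak. These are upper bounds from the spec sheet, not measured or sustained performance.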

What biological research requires this much AI compute?

Potential applications include protein structure prediction (similar to AlphaFold but potentially for novel proteins), genomic analysis for pathogen detection, simulation of biological systems for threat assessment, or AI-driven design of biological sensors. The scale suggests either very large models or numerous parallel experiments.

Can other companies bid besides Nvidia?

Yes, the solicitation explicitly states that "newer hardware can also be proposed." However, any alternative must be ready for delivery within one month and presumably offer equivalent or better performance for the target applications. This gives an advantage to companies with existing inventory and proven software compatibility.