What Happened

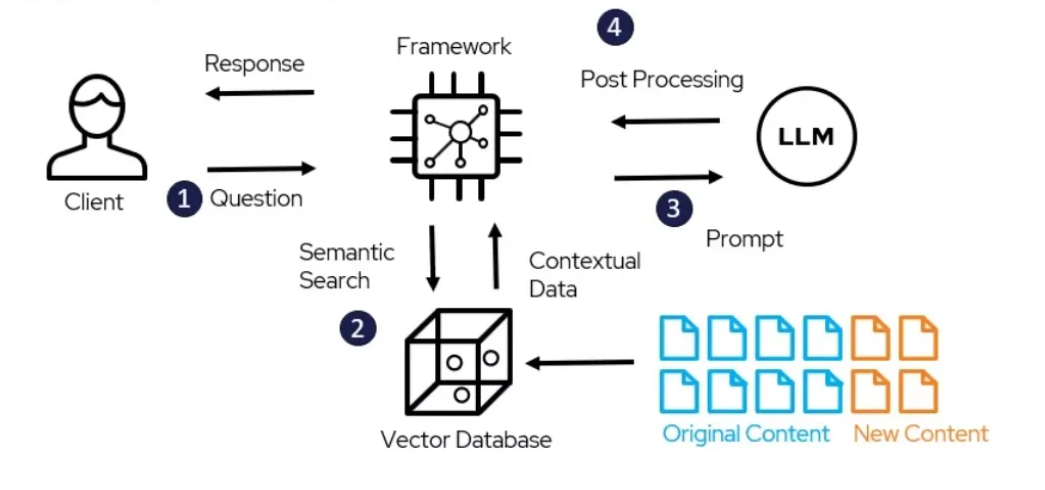

A recent paper from DigitalOcean tackles a practical bottleneck in shipping production AI agents: how to efficiently identify the most valuable user-agent conversations for human review without incurring massive LLM costs.

The problem is widespread. Teams running AI agents in production accumulate thousands of interaction logs, but manually reviewing all of them is infeasible, and using an LLM to evaluate every trajectory quickly becomes prohibitively expensive. The paper proposes a signal-based sampling approach that uses lightweight, deterministic rules to score trajectories, then selects the highest-signal ones for review.

The Method: Three Signal Types

The framework computes behavioral signals directly from trajectory data using deterministic heuristics, with no LLM calls required. The signals fall into three categories:

Interaction signals from the user-agent dialogue: user rephrasing or correcting the agent (misalignment), agent producing near-duplicate responses (stagnation), user asking to talk to a human or abandoning the session (disengagement), and user confirming something worked (satisfaction). These are detected through normalized phrase matching, similarity checks, and simple discourse heuristics.
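The interaction signals can be sketched with plain string processing. This is a minimal illustration, not the paper's implementation. The phrase lists, the 0.9 similarity cutoff, and the `(role, text)` turn format are all assumptions, and `difflib.SequenceMatcher` stands in for whatever similarity check the authors actually use. Session abandonment needs session metadata, so the sketch takes it as a flag.

```python
import re
from difflib import SequenceMatcher
from typing import Dict, List, Tuple

# Hypothetical phrase lists for illustration; real deployments would curate their own.
MISALIGNMENT_PHRASES = ("that's not what i", "i meant", "no, i said", "let me rephrase")
DISENGAGEMENT_PHRASES = ("talk to a human", "speak to a person", "real person")
SATISFACTION_PHRASES = ("that worked", "that fixed it", "it works now")


def normalize(text: str) -> str:
    """Lowercase, keep letters/digits/apostrophes, and collapse whitespace."""
    text = text.lower().replace("\u2019", "'")
    text = re.sub(r"[^a-z0-9'\s]", " ", text)
    return re.sub(r"\s+", " ", text).strip()


def contains_any(text: str, phrases: Tuple[str, ...]) -> bool:
    """Normalized substring match of any phrase against the text."""
    norm = normalize(text)
    return any(normalize(p) in norm for p in phrases)


def near_duplicate(a: str, b: str, cutoff: float = 0.9) -> bool:
    """Treat two agent replies as near-duplicates above an assumed similarity cutoff."""
    return SequenceMatcher(None, normalize(a), normalize(b)).ratio() >= cutoff


def interaction_signals(turns: List[Tuple[str, str]], abandoned: bool = False) -> Dict[str, bool]:
    """turns: (role, text) pairs with role "user" or "assistant" (assumed schema)."""
    user = [text for role, text in turns if role == "user"]
    agent = [text for role, text in turns if role == "assistant"]
    return {
        "misalignment": any(contains_any(m, MISALIGNMENT_PHRASES) for m in user),
        # Stagnation: consecutive agent replies that are nearly identical.
        "stagnation": any(near_duplicate(a, b) for a, b in zip(agent, agent[1:])),
        "disengagement": abandoned or any(contains_any(m, DISENGAGEMENT_PHRASES) for m in user),
        "satisfaction": any(contains_any(m, SATISFACTION_PHRASES) for m in user),
    }
```

Substring matching is deliberately crude. Word-boundary regexes would reduce false positives where a phrase happens to appear inside longer text.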

Execution signals from tool calls and runtime events: a tool call that doesn't advance the task indicates failure; repeated calls with identical or drifting inputs suggest a loop. These are straightforward to extract from execution logs.
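A comparable sketch for the execution signals follows. It assumes each tool call is logged with its name, arguments, result, and any error. The `ToolCall` record, the "errored or returned nothing" definition of a non-advancing call, and the threshold of three repeats are all illustrative choices rather than details taken from the paper.

```python
import json
from collections import Counter
from dataclasses import dataclass, field
from typing import Any, Dict, List, Optional


@dataclass
class ToolCall:
    """Hypothetical record for one tool invocation pulled from execution logs."""
    name: str
    args: Dict[str, Any] = field(default_factory=dict)
    result: Any = None
    error: Optional[str] = None


def canonical(args: Dict[str, Any]) -> str:
    """Stable string form of the arguments so identical inputs compare equal."""
    return json.dumps(args, sort_keys=True, default=str)


def execution_signals(calls: List[ToolCall], loop_threshold: int = 3) -> Dict[str, bool]:
    # Assumption: a call "fails to advance the task" if it errored or returned nothing.
    failure = any(c.error is not None or c.result in (None, "", [], {}) for c in calls)

    # Identical-input loop: the same (tool, args) pair issued loop_threshold or more times.
    repeats = Counter((c.name, canonical(c.args)) for c in calls)
    identical_loop = any(n >= loop_threshold for n in repeats.values())

    # Drifting-input loop: the same tool called loop_threshold or more times in a row.
    drifting_loop, run = False, 1
    for prev, cur in zip(calls, calls[1:]):
        run = run + 1 if cur.name == prev.name else 1
        if run >= loop_threshold:
            drifting_loop = True
            break

    return {"tool_failure": failure, "loop": identical_loop or drifting_loop}
```

The drifting-input rule will also fire on legitimate pagination. That is one reason these thresholds need per-domain tuning.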

Environment signals cover rate limits, context overflow, and API errors. These are useful for diagnosis but not for training, since they reflect system constraints rather than agent decisions.
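Environment events can be bucketed in a similar way. This sketch assumes errors arrive as dicts with an HTTP-style `status` and a `message`. The matched strings are guesses at common provider wording, not an exhaustive list. Because these signals describe the system rather than the agent, the sketch returns them as a separate diagnostic set, so they can feed dashboards without being mixed into training labels.

```python
from typing import Dict, Iterable, Optional

ENV_SIGNALS = ("rate_limit", "context_overflow", "api_error")


def classify_env_event(event: Dict) -> Optional[str]:
    """Map one runtime error event to an environment signal name, or None."""
    status = event.get("status")
    msg = str(event.get("message", "")).lower()
    if status == 429 or "rate limit" in msg:
        return "rate_limit"
    if any(s in msg for s in ("context length", "context window", "too many tokens")):
        return "context_overflow"
    if isinstance(status, int) and status >= 400:
        return "api_error"
    return None


def environment_signals(events: Iterable[Dict]) -> Dict[str, bool]:
    """Diagnostic-only flags, kept out of any training or agent-quality label."""
    found = {classify_env_event(e) for e in events}
    return {name: name in found for name in ENV_SIGNALS}
```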

Each trajectory receives a composite score based on which signals fire, and the highest-scoring ones are sampled for review.
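The article does not give the paper's exact scoring formula, so the following is a hypothetical weighted sum. Each fired signal contributes a fixed weight, and the top `k` trajectories by score go to review. Environment signals are left out of the weights on the assumption that they should not drive agent-quality sampling. Satisfaction carries zero weight, so it is recorded without raising priority. Both choices are assumptions you would need to tune.

```python
import heapq
from typing import Dict, List, Tuple

# Hypothetical weights; environment signals are deliberately absent (diagnostic only).
WEIGHTS: Dict[str, float] = {
    "misalignment": 2.0,
    "stagnation": 2.0,
    "disengagement": 3.0,
    "tool_failure": 2.5,
    "loop": 3.0,
    "satisfaction": 0.0,  # tracked, but does not raise review priority in this sketch
}


def composite_score(signals: Dict[str, bool], weights: Dict[str, float] = WEIGHTS) -> float:
    """Sum the weights of every signal that fired; unknown names count as zero."""
    return sum(weights.get(name, 0.0) for name, fired in signals.items() if fired)


def select_for_review(trajectories: Dict[str, Dict[str, bool]], k: int) -> List[Tuple[str, float]]:
    """Return the k highest-scoring (trajectory_id, score) pairs, best first."""
    scored = ((tid, composite_score(sig)) for tid, sig in trajectories.items())
    return heapq.nlargest(k, scored, key=lambda pair: pair[1])
```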

Key Results

On τ-bench, the authors compared three approaches across 100 trajectories:

| Sampling method | Informativeness |
|---|---|
| Random sampling | 54% |
| Length-based heuristic (longer conversations) | 74% |
| Signal-based sampling | 82% |

With signal-based sampling, roughly 4 out of every 5 sampled trajectories are genuinely useful for improving the agent. The bigger win shows up in successful trajectories: among conversations where the agent completed the task correctly, signal sampling still identified useful patterns in 66.7% of cases, versus 41.3% for random sampling. These are subtle issues such as policy violations, inefficient tool use, and unnecessary steps that don't break the task but still matter for optimization.
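One plausible reading of these numbers works as follows. Each method picks trajectories, reviewers label each pick as useful or not, and informativeness is the useful share. The successful-trajectory figure then applies the same ratio to the picks where the agent completed the task. This reading is an interpretation of the summary above, and the labels in the check below are toy values, not the paper's data.

```python
from typing import Iterable, Optional, Tuple


def informativeness(
    labels: Iterable[Tuple[bool, bool]], successful_only: bool = False
) -> Optional[float]:
    """labels: one (task_succeeded, judged_useful) pair per reviewed trajectory.

    Returns the fraction judged useful, optionally restricted to successful
    trajectories, or None when nothing qualifies.
    """
    pool = [useful for succeeded, useful in labels if succeeded or not successful_only]
    return sum(pool) / len(pool) if pool else None
```

Under this reading, with 100 sampled trajectories, an 82% rate would correspond to 82 trajectories judged useful.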

How It Compares

Random sampling is the simplest baseline but wastes most of the annotation budget on uninformative conversations, since most production agents handle routine requests just fine. Filtering for longer conversations improves informativeness to 74%, but longer conversations skew heavily toward outright failures, so you surface obvious breakdowns but miss subtle issues hiding in conversations where the agent technically succeeded.

Signal-based sampling bridges this gap by catching both obvious failures and subtle optimization opportunities, achieving 82% informativeness overall and 66.7% on successful trajectories alone.
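For comparison, the two baselines take only a few lines each. This sketch assumes conversation length is measured in turns and uses a seeded generator so random draws are reproducible. Neither detail comes from the paper.

```python
import random
from typing import Dict, List, Sequence


def random_sample(ids: Sequence[str], k: int, seed: int = 0) -> List[str]:
    """Uniform sample without replacement; the seed makes runs reproducible."""
    return random.Random(seed).sample(list(ids), k)


def length_sample(turn_counts: Dict[str, int], k: int) -> List[str]:
    """Pick the k longest conversations, measured in turns (an assumption)."""
    return sorted(turn_counts, key=turn_counts.get, reverse=True)[:k]
```

Neither baseline looks at what happened inside a conversation. The length heuristic only sees how long an exchange ran, which is why it gravitates toward drawn-out failures.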

What This Means in Practice

The framework runs without any LLM overhead and can sit always-on in a production pipeline. Teams can deploy it to continuously surface the most valuable trajectories for review, turning a manual bottleneck into an automated signal-gathering process. The approach is already integrated into Plano, an open-source AI-native proxy that handles routing, orchestration, guardrails, and observability in one place.
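In an always-on deployment, the selection step can run incrementally. Each trajectory is scored as it closes, and only the best `k` are kept in a bounded min-heap, so memory stays fixed regardless of log volume. This is a generic sketch, not how Plano implements it, and `ReviewQueue` is a hypothetical name.

```python
import heapq
from typing import List, Tuple


class ReviewQueue:
    """Keeps the k highest-scoring trajectories seen so far in O(k) memory."""

    def __init__(self, k: int):
        self.k = k
        self._heap: List[Tuple[float, str]] = []  # min-heap: lowest kept score at index 0

    def offer(self, trajectory_id: str, score: float) -> None:
        """Consider one newly scored trajectory for the review set."""
        if self.k <= 0:
            return
        item = (score, trajectory_id)
        if len(self._heap) < self.k:
            heapq.heappush(self._heap, item)
        elif item > self._heap[0]:
            # Evict the current lowest-scoring entry in favor of the new one.
            heapq.heapreplace(self._heap, item)

    def top(self) -> List[Tuple[str, float]]:
        """Current review set as (trajectory_id, score), best first."""
        return [(tid, score) for score, tid in sorted(self._heap, reverse=True)]
```

A real pipeline would also drain and reset the queue once per review cycle, which this sketch leaves out.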

gentic.news Analysis

This work addresses a pain point that has been growing across the industry as agent deployments scale. We've covered similar challenges in our reporting on agent evaluation frameworks — notably LangSmith's trace analysis and Arize AI's observability tools. What sets DigitalOcean's approach apart is its explicit focus on cost efficiency: no LLM calls means it's suitable for always-on pipelines without budget surprises.

The 82% informativeness rate is impressive, but the real value may be in the 66.7% figure for successful trajectories. Most evaluation frameworks focus on catching failures, but optimizing successful trajectories for efficiency and policy compliance is where long-term production gains live. This aligns with a broader industry shift we've observed: teams moving from "does the agent work?" to "how can we make it work better?"

The fact that DigitalOcean has open-sourced the implementation in Plano suggests they see this as a platform play rather than a research artifact. Expect other observability and evaluation platforms to integrate similar lightweight signal-based approaches in the coming quarters.

Frequently Asked Questions

How does signal-based sampling compare to using an LLM judge?

LLM judges evaluate each trajectory individually, which costs API credits and scales linearly with log volume. Signal-based sampling uses deterministic rules with no LLM calls, so it can score an entire log of 80k trajectories at near-zero cost and leave only the top 100 for human review. The trade-off is that signals capture only predefined patterns, while an LLM judge can catch novel issues.

Can I use this with any agent framework?

Yes, as long as you have access to the interaction logs (user and agent messages), tool call records, and environment errors. The signal rules are framework-agnostic. DigitalOcean's Plano proxy integrates it natively, but you can implement the same logic against any log store.
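To illustrate that portability, the adapter below assumes logs are stored as OpenAI-style chat messages. In that format, assistant messages carry `tool_calls`, and tool outputs come back as `role: "tool"` messages keyed by `tool_call_id`. The adapter splits one conversation into the dialogue turns and tool-call records that the signal rules need. Environment errors usually live in a separate runtime log and are not handled here. `split_trajectory` is a hypothetical helper.

```python
import json
from typing import Any, Dict, List


def split_trajectory(messages: List[Dict[str, Any]]) -> Dict[str, list]:
    """Split one OpenAI-style chat log into dialogue turns and tool-call records."""
    # Tool outputs, indexed by the id of the call that produced them.
    results = {m.get("tool_call_id"): m.get("content") for m in messages if m.get("role") == "tool"}
    turns, calls = [], []
    for m in messages:
        role = m.get("role")
        if role in ("user", "assistant") and m.get("content"):
            turns.append((role, m["content"]))
        for tc in m.get("tool_calls") or []:
            fn = tc.get("function", {})
            try:
                args = json.loads(fn.get("arguments") or "{}")
            except json.JSONDecodeError:
                args = {"_raw": fn.get("arguments")}  # keep malformed arguments for inspection
            calls.append({"name": fn.get("name"), "args": args, "result": results.get(tc.get("id"))})
    return {"turns": turns, "tool_calls": calls}
```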

What types of issues does signal sampling miss?

It misses issues that don't produce measurable behavioral signals — for example, a conversation where the agent gave a correct but suboptimal answer that the user accepted without complaint. The framework catches overt failures, loops, inefficiencies, and policy violations, but not all quality dimensions.

How do I tune the signal thresholds for my use case?

The paper uses fixed heuristics, but in practice you'd adjust phrase matching thresholds and similarity cutoffs based on your domain. Start with the defaults from the paper and iterate: review a batch of low-signal trajectories to check for false negatives, then tune accordingly.
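The false-negative check can be made concrete. Draw a random batch from trajectories that scored below the review cutoff, have reviewers mark any that were actually useful, and track that miss rate as you adjust thresholds. This is a minimal sketch with hypothetical function names, not tied to any particular tool.

```python
import random
from typing import Dict, List


def audit_low_signal(scores: Dict[str, float], threshold: float, batch_size: int, seed: int = 0) -> List[str]:
    """Randomly draw up to batch_size trajectories that scored below the review threshold."""
    below = sorted(tid for tid, score in scores.items() if score < threshold)
    return random.Random(seed).sample(below, min(batch_size, len(below)))


def miss_rate(reviewed: Dict[str, bool]) -> float:
    """Share of audited low-signal trajectories that reviewers judged useful (false negatives)."""
    return sum(reviewed.values()) / len(reviewed) if reviewed else 0.0
```

If the miss rate stays high, that suggests loosening a cutoff or adding a signal for the pattern reviewers keep finding.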
