A new research paper tackles a core bottleneck in deploying LLM-powered autonomous agents: efficiently navigating massive tool libraries for complex, multi-step tasks. The work, posted to arXiv on April 13, 2026, makes two contributions: a new, large-scale evaluation benchmark called SLATE and a novel search algorithm, Entropy-Guided Branching (EGB), which improves task success rates by approximately 15 percentage points over a ReAct baseline.

The fundamental challenge is one of search complexity. An agent tasked with "refund a defective headset and order a replacement with expedited shipping" might have access to hundreds of API tools across user management, inventory, payment, and logistics systems. Exhaustively exploring all possible action sequences is computationally prohibitive, while greedy search can lead agents down incorrect paths with no mechanism for recovery.

What the Researchers Built: The SLATE Benchmark & EGB Algorithm

The team first built SLATE (Synthetic Large-scale API Toolkit for E-commerce), a benchmark designed to move beyond static, single-path evaluations. SLATE simulates a realistic e-commerce backend with over 1,200 distinct API tools across 15 modules (e.g., user_profile, order_fulfillment, payment_gateway). Its key innovation is context-awareness and path flexibility. Unlike benchmarks that validate against one "golden" trajectory, SLATE accepts any sequence of API calls that achieves the correct end state (e.g., a refund issued, a new order placed). This reflects real-world validity, where multiple procedural paths can lead to the same business outcome.
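To make the idea of end-state validation concrete, here is a minimal sketch. It is not SLATE's actual harness: the tool names, the dict-based state, and the `reaches_goal` helper are all hypothetical, chosen only to show how two different call orders can both pass while an incomplete trajectory fails.

```python
from typing import Callable, Dict, List

# Hypothetical backend state: a plain dict (an assumption, not SLATE's real schema).
State = Dict[str, object]


# Hypothetical tools: each takes a state and returns an updated copy.
def issue_refund(state: State) -> State:
    return {**state, "refund_issued": True}


def place_order(state: State) -> State:
    return {**state, "replacement_ordered": True}


def set_shipping(state: State) -> State:
    return {**state, "shipping": "expedited"}


TOOLS: Dict[str, Callable[[State], State]] = {
    "issue_refund": issue_refund,
    "place_order": place_order,
    "set_shipping": set_shipping,
}


def run(trajectory: List[str]) -> State:
    """Apply each named tool in order, starting from an empty state."""
    state: State = {}
    for name in trajectory:
        state = TOOLS[name](state)
    return state


def reaches_goal(trajectory: List[str], goal: State) -> bool:
    """Pass if the final state matches every goal field, whatever the path."""
    final = run(trajectory)
    return all(final.get(key) == value for key, value in goal.items())


GOAL: State = {
    "refund_issued": True,
    "replacement_ordered": True,
    "shipping": "expedited",
}

path_a = ["issue_refund", "place_order", "set_shipping"]
path_b = ["place_order", "set_shipping", "issue_refund"]  # different order
incomplete = ["issue_refund", "place_order"]  # never sets shipping

for label, path in [("path_a", path_a), ("path_b", path_b), ("incomplete", incomplete)]:
    print(label, reaches_goal(path, GOAL))
```

A golden-trajectory check would reject `path_b` for its ordering alone; comparing end states accepts it, which is the flexibility the benchmark is described as providing.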

Initial evaluations on SLATE revealed that current state-of-the-art LLM agents (using techniques like ReAct or DFSDT) struggle with two issues: poor self-correction (once on a wrong path, they rarely backtrack effectively) and inefficient search (wasting compute on low-probability branches).

Motivated by this, the researchers developed Entropy-Guided Branching (EGB), an uncertainty-aware search algorithm. At its core, EGB uses the LLM's own predictive entropy—a measure of uncertainty in its next-tool prediction—to guide the search tree's expansion.

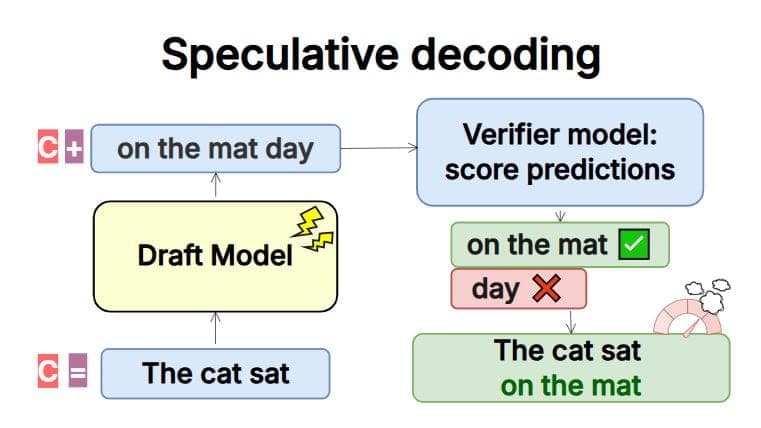

How Entropy-Guided Branching Works

Think of planning as navigating a tree. Each node is a state (e.g., "user logged in, item identified"), and each branch is a potential API call. Standard breadth-first search expands all branches equally; depth-first search goes deep down one path. EGB is more strategic:

- Predict & Score: At a given state, the LLM predicts a probability distribution over all possible next tools. The entropy of this distribution is calculated.

- High Entropy = High Uncertainty: A high entropy score means the LLM is unsure about the correct next step (e.g., it can't decide between check_inventory and apply_coupon).

- Dynamic Branching: Instead of following only the top-1 prediction, EGB dynamically expands more branches from high-entropy nodes. It follows fewer branches from low-entropy nodes where the model is confident.

- Optimized Trade-off: This balances exploration against exploitation. The system explores more thoroughly at decision points where the model is uncertain (exploration) and commits to paths where the model is confident (exploitation), all while keeping the total computational budget (LLM calls) strictly bounded.
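The steps above can be sketched in a few lines. This is an illustration under stated assumptions, not the paper's implementation: it uses Shannon entropy normalized by the log of the number of candidate tools, and a linear mapping from that value to a branch count. The `max_branches` parameter, the mapping, and the example probabilities are all made up for the sketch.

```python
import math
from typing import Dict, List


def entropy(probs: Dict[str, float]) -> float:
    """Shannon entropy (in nats) of a next-tool probability distribution."""
    return -sum(p * math.log(p) for p in probs.values() if p > 0)


def branch_count(probs: Dict[str, float], max_branches: int = 4) -> int:
    """Map normalized entropy to a branch count between 1 and max_branches.

    The linear mapping is an assumption for illustration only.
    """
    n = len(probs)
    if n <= 1:
        return 1
    normalized = entropy(probs) / math.log(n)  # 0 = certain, 1 = uniform
    k = 1 + round(normalized * (max_branches - 1))
    return min(k, n)


def select_branches(probs: Dict[str, float], max_branches: int = 4) -> List[str]:
    """Return the top-k tools, where k grows with the model's uncertainty."""
    k = branch_count(probs, max_branches)
    ranked = sorted(probs, key=probs.get, reverse=True)
    return ranked[:k]


# Made-up distributions: one confident state, one uncertain state.
confident = {"issue_refund": 0.98, "check_inventory": 0.01, "apply_coupon": 0.01}
unsure = {"check_inventory": 0.40, "apply_coupon": 0.35, "issue_refund": 0.25}

print(select_branches(confident))
print(select_branches(unsure))
```

With these numbers, the confident state keeps only its top prediction, while the uncertain state expands every candidate. A real system would tune how aggressively the branch count grows with entropy.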

The technical implementation involves integrating EGB into a Monte Carlo Tree Search (MCTS)-like framework, where the entropy signal prunes and prioritizes the search tree in real-time.
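The article does not detail the MCTS-like integration, so the sketch below swaps in a simpler technique: a best-first search over tool sequences with a hard cap on policy calls, where entropy sets the branching factor at each node. The `toy_policy` function stands in for an LLM call, and every tool name, probability, and parameter here is hypothetical.

```python
import heapq
import math
from typing import Callable, Dict, List, Optional, Tuple

Path = Tuple[str, ...]
Policy = Callable[[Path], Dict[str, float]]  # stand-in for one LLM call


def entropy(probs: Dict[str, float]) -> float:
    return -sum(p * math.log(p) for p in probs.values() if p > 0)


def budgeted_search(
    policy: Policy,
    is_goal: Callable[[Path], bool],
    max_llm_calls: int,
    max_branches: int = 3,
) -> Tuple[Optional[List[str]], int]:
    """Best-first search (not MCTS) with a hard cap on policy calls."""
    # Heap entries: (negative log-probability of the path so far, path).
    frontier: List[Tuple[float, Path]] = [(0.0, ())]
    calls = 0
    while frontier:
        cost, path = heapq.heappop(frontier)
        if is_goal(path):
            return list(path), calls
        if calls >= max_llm_calls:
            continue  # budget spent: only goal-check what is already queued
        probs = {t: p for t, p in policy(path).items() if p > 0}
        calls += 1
        if not probs:
            continue
        n = len(probs)
        normalized = entropy(probs) / math.log(n) if n > 1 else 0.0
        k = min(n, 1 + round(normalized * (max_branches - 1)))
        for tool in sorted(probs, key=probs.get, reverse=True)[:k]:
            heapq.heappush(frontier, (cost - math.log(probs[tool]), path + (tool,)))
    return None, calls


def toy_policy(path: Path) -> Dict[str, float]:
    """Hypothetical policy whose top-1 choice at the second step is wrong."""
    if len(path) == 0:
        return {"lookup_order": 0.98, "apply_coupon": 0.02}
    if len(path) == 1:
        return {"apply_coupon": 0.40, "issue_refund": 0.35, "check_inventory": 0.25}
    if path[-1] == "issue_refund":
        return {"place_order": 0.95, "apply_coupon": 0.05}
    return {"apply_coupon": 0.60, "check_inventory": 0.40}


def toy_goal(path: Path) -> bool:
    return path == ("lookup_order", "issue_refund", "place_order")


found, used = budgeted_search(toy_policy, toy_goal, max_llm_calls=10)
print(found, used)
```

In this toy setup a greedy top-1 agent would pick `apply_coupon` at the uncertain second step and never recover. Because the search widens at that step, the correct `issue_refund` branch stays in the frontier and is reached within the call budget.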

Key Results: A 15-Point Lift in Success Rate

The paper presents extensive experiments on the SLATE benchmark. The EGB-powered agent is compared against strong baselines including Chain-of-Thought (CoT), ReAct, and Tree-of-Thoughts (ToT) style planners.

Table: Performance comparison on the SLATE benchmark. EGB achieves a ~15 percentage point improvement in success rate over the ReAct baseline while using fewer tool calls on average.

The results show EGB achieving a 76.4% task success rate, a significant improvement over the 61.3% of ReAct and 65.1% of a Depth-First Search-based Decision Tree (DFSDT) agent. Crucially, it does this while reducing the average number of tool calls (a proxy for cost and latency) from 18.7 to 14.9. The Path Efficiency Score, a metric defined in the paper that combines success with trajectory optimality, also saw a marked increase.
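Because "15%" can be read as either an absolute or a relative gain, a quick calculation from the reported figures helps separate the two. The only inputs are the numbers quoted above. Which baseline the 18.7 tool-call figure belongs to is not stated here, so the last value is simply the change between the two reported averages.

```python
# Figures as reported in the article.
egb_success = 76.4
react_success = 61.3
dfsdt_success = 65.1
calls_before = 18.7
calls_after = 14.9

# Absolute gains, in percentage points.
gain_vs_react = egb_success - react_success
gain_vs_dfsdt = egb_success - dfsdt_success

# Relative gain over ReAct, as a percentage of the ReAct success rate.
relative_gain_vs_react = 100 * gain_vs_react / react_success

# Relative reduction in average tool calls.
call_reduction = 100 * (calls_before - calls_after) / calls_before

print(f"Gain vs ReAct: {gain_vs_react:.1f} points ({relative_gain_vs_react:.1f}% relative)")
print(f"Gain vs DFSDT: {gain_vs_dfsdt:.1f} points")
print(f"Tool-call reduction: {call_reduction:.1f}%")
```

The gap over ReAct is about 15.1 percentage points, which is roughly a 24.6% relative improvement, and the average tool-call count drops by roughly 20%.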

The ablation studies confirm that the entropy guidance is the key driver. Variants of EGB that branch randomly or based solely on probability magnitude fail to match the full method's performance.

Why It Matters: Toward Reliable, Scalable Agentic AI

This work addresses two critical gaps in the agent toolkit: evaluation and core search algorithms. SLATE provides a much-needed, large-scale, and flexible testbed for the research community. As noted in our recent coverage of agent frameworks like HARPO and production patterns for Claude Agents, robust evaluation is a prerequisite for reliable deployment.

EGB offers a principled, model-introspective method for making agentic search computationally tractable. It doesn't require additional fine-tuning; it's a plug-in improvement for existing LLM-based planners. For enterprises looking to deploy agents over large internal API surfaces—a common scenario in fintech, e-commerce, and enterprise SaaS—this type of efficiency gain directly translates to lower inference costs and more reliable task completion.

The paper explicitly links scalable agent planning to progress toward more capable AI systems, a topic of intense discussion as forecasters recently revised AGI timelines forward. Efficient, reliable tool-use is a foundational capability on that path.

gentic.news Analysis

This research arrives at a pivotal moment for agentic AI. The trend we've observed—from theoretical frameworks to production systems—is now hitting the hard problem of search efficiency at scale. The introduction of SLATE is a direct response to the community's need for better benchmarks, a need highlighted just last week when MIT and Anthropic released a benchmark revealing systematic limitations in AI coding assistants. SLATE's context-aware, multi-path validation sets a new standard for evaluating real-world tool use.

The Entropy-Guided Branching algorithm is a clever application of information theory to a practical engineering problem. It recognizes that the LLM's own uncertainty is a valuable signal, not just noise to be marginalized. This aligns with a broader shift from treating LLMs as static oracles to treating them as reasoning engines whose internal states can be queried and leveraged, as seen in MIT/Stanford/Google's work on LLMs self-improving their prompts.

Practically, EGB's significance is its potential to lower the compute barrier for complex agentic workflows. As Ethan Mollick recently discussed, compute constraints are a "double bind" for AI growth. Algorithms that achieve more with fewer LLM calls are therefore not just academically interesting; they are economically essential for scaling. This work dovetails with industry efforts like the hybrid inference architecture blueprint recently proposed by Intel and SambaNova, aiming to make agentic AI workloads more feasible.

The paper's focus on e-commerce is also strategic. It's a domain with clear business value, complex state, and vast tool sets—a perfect testbed for stress-testing agent reliability. Success here paves the way for adoption in similarly structured domains like travel booking, logistics, and enterprise IT orchestration.

Frequently Asked Questions

What is Entropy-Guided Branching (EGB) in simple terms?

EGB is a search strategy for AI agents that tells them "when you're unsure, look around more; when you're sure, commit." It uses the AI's own uncertainty score (entropy) to decide how many possible next steps to explore, making the planning process much smarter and more efficient than trying everything or always following the first idea.

How is the SLATE benchmark different from other AI agent tests?

Most benchmarks check if an agent follows one exact script of actions. SLATE is more realistic: it only checks if the agent reaches the correct final goal (e.g., "item refunded"), no matter which valid sequence of API calls it uses to get there. This allows for multiple correct solutions and better measures an agent's robust problem-solving ability.

Does EGB require training a new AI model?

No. EGB is a planning algorithm, not a new model. It works with existing LLMs (like GPT-4 or Claude) by intelligently guiding how the model explores possible action sequences during a task. It's a drop-in improvement for many existing agent frameworks.

What are the real-world applications of this research?

The primary application is any system where an AI agent needs to complete a multi-step task using a large set of tools or APIs. This includes automated customer service (handling returns, upgrades), internal business process automation (onboarding, procurement), and complex data analysis workflows that involve querying multiple databases and software tools.